A crypto project has disclosed that it placed bets on its own fundraising outcome on Polymarket, drawing attention to how newly tightened market integrity rules may apply in practice.

In a public statement, P2P.me confirmed that an on-chain account labeled “P2P Team” was controlled by its team. The account was used to bet on whether the project would reach a $6 million fundraising target.

The bets were placed roughly 10 days before the raise concluded, when the outcome had not yet been finalized.

The project stated that the capital used came from its foundation’s treasury and that all proceeds would be returned. It added that it plans to liquidate the positions and introduce internal policies governing prediction market activity.

Case emerges days after Polymarket tightened insider trading rules

The disclosure comes just days after Polymarket updated its rules on 23 March, introducing stricter definitions around insider trading and manipulation.

Among the changes, the platform explicitly prohibited trading by individuals who hold positions of influence over an outcome. That category includes participants directly involved in events tied to prediction markets.

While P2P.me said the bets were placed before the raise was completed and were not based on guaranteed allocations, the timing of the disclosure places the case within a broader shift toward tighter oversight on prediction platforms.

On-chain activity shows active trading and profits

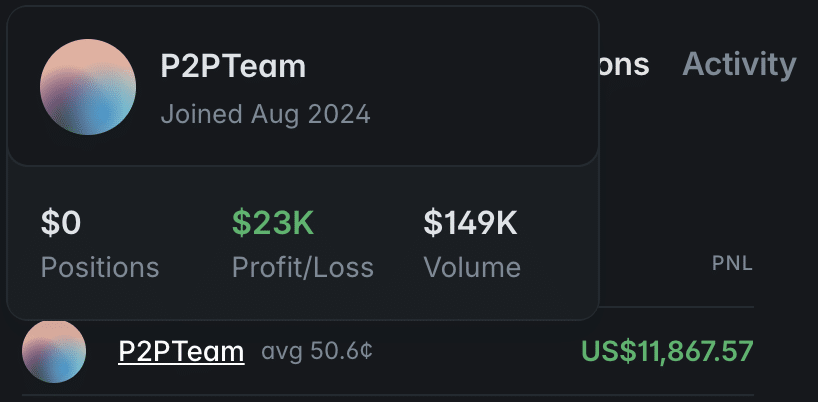

Data from the “P2P Team” account indicates the activity was not purely symbolic.

The account recorded roughly $149,000 in trading volume and a profit-and-loss (P&L) figure of around $23,000. Individual positions generated gains of over $11,000. The figures suggest the trades were executed as active positions rather than passive signaling.

P2P.me acknowledged that failing to disclose the activity at the time was a mistake. The team noted that trading on outcomes that a team can influence may erode trust, even if the result is not predetermined.

Incident highlights challenges in prediction market enforcement

The case underscores a broader challenge facing decentralized prediction markets: how to manage participation by individuals who may influence event outcomes.

Polymarket’s model relies on open participation and transparent on-chain activity. However, the presence of informed or involved actors can complicate enforcement, particularly when trades occur before outcomes are finalized.

As platforms move to formalize rules around insider activity, real-world cases like this may shape how those standards are interpreted and applied.

Final Summary

- P2P.me disclosed betting on the outcome of its own fundraise, raising questions about insider participation in prediction markets.

- The incident comes as platforms like Polymarket tighten rules, highlighting ongoing challenges in enforcing market integrity.