Original | Odaily Planet Daily (@OdailyChina)

Author | Azuma (@azuma_eth)

Musk and Anthropic have, of all things, joined hands!

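In the early hours of May 7, Beijing time, Anthropic and SpaceX issued a joint announcement that instantly stunned the AI world. According to the announcement, the two sides have signed a partnership agreement under which Anthropic will use the full compute capacity of SpaceX's Colossus 1 data center. Within a month, this will give Anthropic more than 300 megawatts of new compute (equivalent to more than 220,000 NVIDIA GPUs) and directly improve the experience of Claude Pro and Claude Max subscribers.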

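This is the first time Musk's business empire has struck such a direct, formal, and large-scale partnership with Anthropic. For years, Anthropic's core partners have been Amazon and Google: whether for cloud infrastructure, chip supply, or model training, Anthropic has long been deeply tied to AWS and the Google TPU ecosystem. Musk has never publicly invested in Anthropic, and he has repeatedly criticized its AI safety approach and political leanings in public. Until now, there was almost no public record of any infrastructure partnership, model collaboration, or commercial alliance between the two sides.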

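When the most formidable large-model company and the world's most talked-about richest man finally link up, the partnership generates buzz all by itself.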

What Musk is really after

For Musk, the subtler aspect of this partnership is its timing.

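Just ten days earlier, on April 27, Musk's lawsuit against OpenAI formally went to trial in the U.S. District Court for the Northern District of California. In their recent court appearances the two sides have traded barbs in every way imaginable, and the atmosphere has been combative. The case, which outsiders have called "the first great lawsuit of the AI era," has all but turned the long-running feud between Musk and OpenAI into a fully public affair.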

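Anthropic, meanwhile, happens to be one of OpenAI's most central and most direct competitors today. That gives the partnership an intriguing extra layer of meaning: the enemy of my enemy is my friend, and there is little Musk would not do if it makes life harder for OpenAI.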

Looking deeper, from the perspective of the AI competitive landscape, the partnership also signals how Musk's strategy for the AI era is evolving.

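On the surface, this is just a standard compute deal: SpaceX provides the GPU cluster, Anthropic gains more inference capacity, and each side gets what it needs. But the matter is clearly not that simple.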

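What Musk is doing around AI today goes well beyond "building his own model." Over the past two years, because Musk personally launched xAI (now renamed SpaceXAI), outsiders mostly saw large-model companies such as OpenAI and Anthropic as his potential competitors. But with the Colossus supercomputing cluster built and in operation, its data center capacity has begun to spill over to outside customers, and Musk's role has quietly shifted.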

Musk now looks more and more like the "arms dealer" of the AI world: anyone short on compute can come to him, even a former potential competitor.

Mired in a "dumbing-down" controversy, Anthropic finally gets its hero

For Anthropic, this partnership may matter even more than outsiders realize.

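Over the past few months, Claude's reputation has been shifting in subtle ways. On one hand, Claude Opus 4.7 and the mysterious Mythos are still regarded as the best models on the market. On the other hand, complaints that Claude has been "dumbed down" have been surfacing in the community more and more often.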

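The sentiment is especially pronounced among heavy developer users. Some found that Claude's reasoning ability "fell off a cliff" when handling long code and complex engineering tasks. Research reports noted that the "thinking budget" or response length of some Claude models had been sharply cut. Many individual users reported that Claude's hallucinations were getting worse, with the model more prone to "talking nonsense with a straight face" when processing complex information.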

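After the "dumbing-down" controversy escalated, Anthropic published a technical post-mortem acknowledging that between March and April, product-level changes and bugs had caused "performance degradation" in Claude models on complex tasks.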

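That explanation did not convince the market. The prevailing view remains that high inference costs and a compute shortage are the main reasons Claude and other large-model products fluctuate in real-world performance.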

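The more capable the model, the higher the inference cost; the larger the user base, the more staggering the GPU consumption. No AI company can escape one commercial contradiction: users want the model to run "at full power" all the time, but the company has to control costs. Dynamic rate limiting, inference budget adjustments, response length caps, priority scheduling, and similar mechanisms are therefore almost inevitable, and what users ultimately perceive is that "the model got dumber."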

This is why the SpaceX compute deal is so critical for Anthropic.

Anthropic said in the announcement that, with the partnership in place, the experience of Claude's core users will improve directly:

- 首先,Anthropic 会将 Pro、Max、Team 以及按席位计费 Enterprise 方案的 Claude Code 五小时使用额度提升一倍。

- 其次,Anthropic 会取消 Pro 和 Max 账户在高峰时段对 Claude Code 的限流措施。

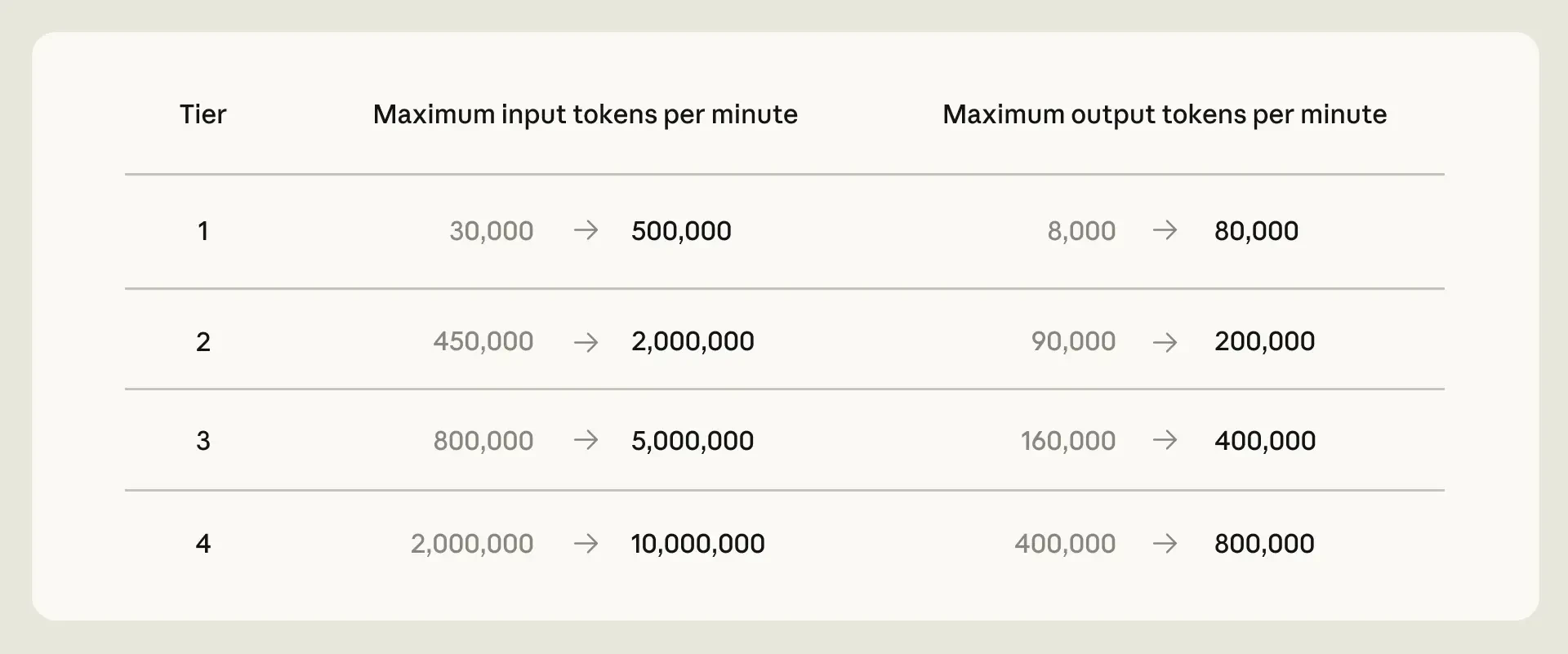

- 第三,Anthropic 将显著提高 Claude Opus 模型的 API 速率限制。

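Commenting on the deal, prominent AI model reviewer Alex Finn said that Anthropic had been sputtering for the past few months, with usage limits falling and models getting dumber, and that Musk has now come to the rescue by giving Anthropic access to the world's largest supercomputing cluster. In his view, the compute crunch has been the company's Achilles' heel all along, dragging down user sentiment and market mood, and Musk solved the problem with a single deal.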

Alex Finn offered an analogy that American sports fans will find easier to grasp: "Musk's help is the equivalent of Anthropic landing Wembanyama!"

Final Fantasy: going to space for power

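The announcement contains a piece of fine print that many people overlooked: "The two sides are also interested in working together to develop multiple gigawatts of orbital AI compute." In plain language, Musk and Anthropic want to move AI data centers into space. It sounds like science fiction, but the problem behind it is very real.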

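Aakash Gupta, the most widely followed analyst in the AI space, explained: "Power, land, and cooling capacity on Earth can no longer keep up with demand fast enough."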

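Anthropic has now locked in roughly 15 gigawatts of compute, equivalent to the electricity use of 11 million households, and it is still not enough. NVIDIA can produce the chips and Anthropic has plenty of money on hand, but what truly cannot be delivered on schedule is power, land, and cooling capacity. Model demand is growing far faster than this infrastructure can be built.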
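As a rough sanity check on the figures quoted in this article, the sketch below derives the per-unit power implied by each comparison. It uses only the article's numbers, which are stated as "more than" or "roughly," so the results are approximations. Treating the 300 megawatts as all-in facility power (cooling and networking included) is an assumption, and the variable names are illustrative.

```python
# Back-of-the-envelope check using only figures quoted in the article.
# The inputs are approximate ("more than", "roughly"), so the outputs are too.

COLOSSUS_POWER_MW = 300      # new compute from Colossus 1, in megawatts
COLOSSUS_GPUS = 220_000      # quoted GPU equivalent
LOCKED_IN_GW = 15            # compute Anthropic has reportedly locked in
HOUSEHOLDS = 11_000_000      # quoted household equivalent


def kw_per_unit(total_kw: float, units: int) -> float:
    """Average power per unit, in kilowatts."""
    return total_kw / units


# Assumption: the 300 MW covers the whole facility, not just the GPU chips.
kw_per_gpu = kw_per_unit(COLOSSUS_POWER_MW * 1_000, COLOSSUS_GPUS)

# Implied average continuous draw per household in the 15 GW comparison.
kw_per_household = kw_per_unit(LOCKED_IN_GW * 1_000_000, HOUSEHOLDS)

# Share of the 15 GW total that the Colossus 1 capacity represents.
colossus_share = COLOSSUS_POWER_MW / (LOCKED_IN_GW * 1_000)

print(f"Implied power per GPU:       {kw_per_gpu:.2f} kW")
print(f"Implied power per household: {kw_per_household:.2f} kW")
print(f"Colossus 1 share of 15 GW:   {colossus_share:.0%}")
```

On these numbers, the Colossus 1 capacity works out to about 2% of the 15 gigawatts Anthropic is said to have locked in, which puts the size of this single deal in context.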

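The frontier of the compute race has now begun to leave Earth. And only one company in the world is truly capable of sending gigawatt-scale solar arrays into orbit at scale: SpaceX.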

If this Interstellar-like story ever becomes reality, the only person who can pull it off may be Musk.