Editor's Note: This article takes a sharp look at the current prosperous facade of prediction markets. The author incisively points out that today's prediction markets are falling into the "local optimum" trap reminiscent of BlackBerry and Yahoo. While the binary options model adopted by mainstream prediction markets has gained massive traffic in the short term, it is plagued by structural issues of liquidity scarcity and capital inefficiency. The article proposes a vision for prediction markets to evolve towards a "perpetual contract" model, offering constructive and in-depth thinking for realizing a true "market for everything".

Why do companies find themselves chasing the wrong goals? Can we fix prediction markets before it's too late?

"Success is like a strong drink, intoxicating. It is not easy to handle the fame and praise that follow. It corrupts your mind, making you start to believe that everyone around you is in awe of you, that everyone desires you, that everyone's thoughts revolve around you all the time." — Ajith Kumar

"The roar of the crowd has always been the most beautiful music." — Vin Scully

Early success is intoxicating. Especially when everyone tells you you won't succeed, the feeling is even stronger. Fuck the haters, you were right, they were wrong!

But early success harbors a unique danger: you might be winning the wrong prize. While we often joke about "play stupid games, win stupid prizes," in reality, the games we play are often evolving in real-time. Therefore, the very factors that led you to win the first stage may become stumbling blocks to winning a bigger prize as the game matures.

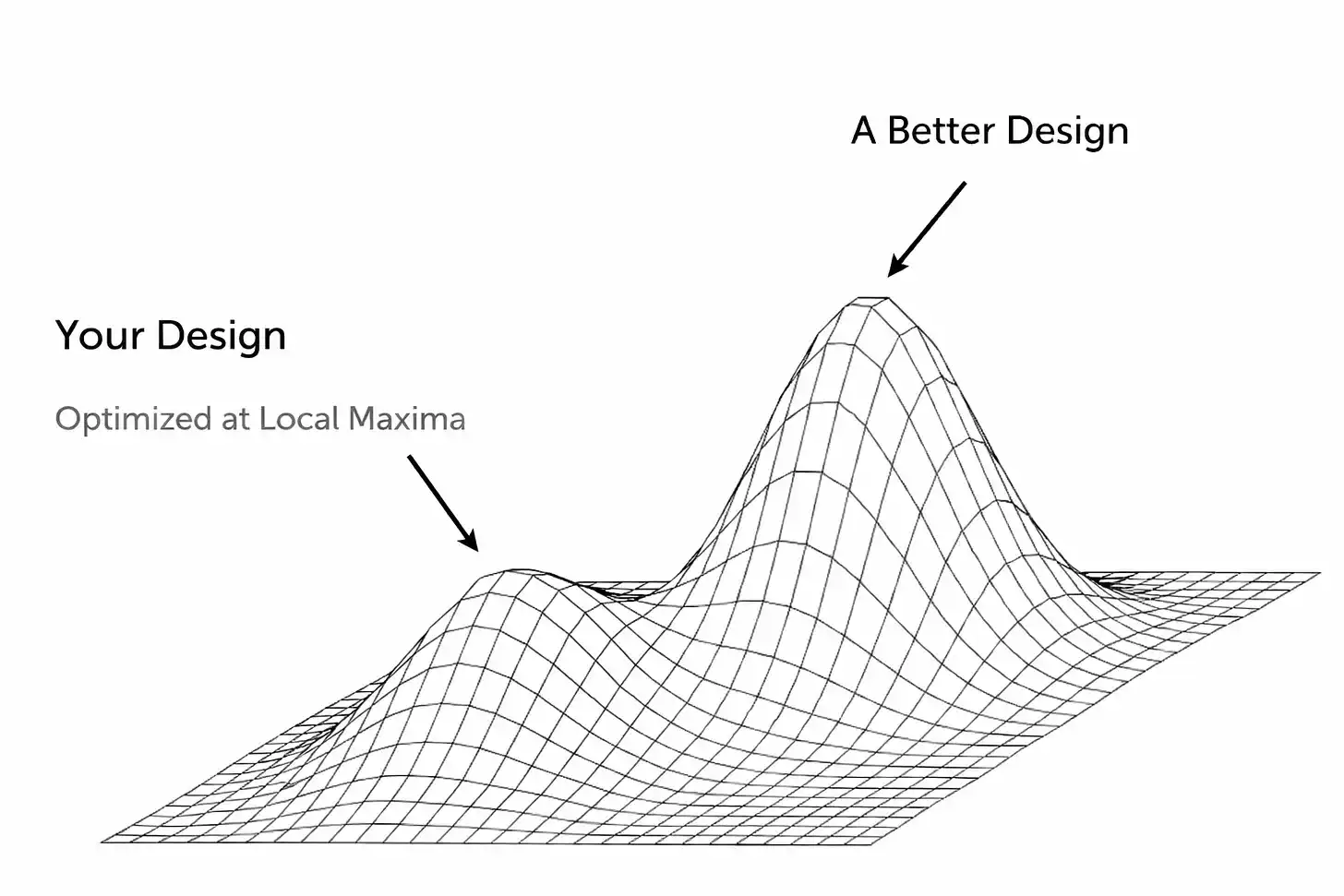

One manifestation of this: a company settles into a "local optimum" without realizing it. Winning feels so good that you not only lose your sense of direction but also lose your self-awareness, leaving you unable to see the situation you are actually in.

In many cases, this might just be a mirage, an illusion propped up by external factors (like an economic boom leading to a flood of disposable income in consumers' hands). Or, the product or service you built does work well, but only within a specific range or under specific conditions, unable to scale to a broader market.

The core conflict here is that to chase the true ultimate prize (the global optimum), you need to come down from your current peak. This requires great humility. It means making tough decisions: abandoning a core feature, completely refactoring the tech stack, or personally overturning the model you once thought was effective. Making this even more challenging is...

Most of the time, you have to make this decision when people (mainly investors and media) are telling you "how great you are"! Many who said you were wrong before are now rushing to validate your success. This is an extremely dangerous position because it breeds complacency at the very time you most need to make radical changes.

This is exactly the position prediction markets are in today. In their current form, they will never achieve mass-market adoption. I don't want to waste words here arguing whether they have already achieved this status (after all, there's a huge gap between knowing something exists and actually having the desire to use it). Maybe you disagree with this premise and are now ready to close the page or read the rest with hate. That's your right. But I will reiterate why this model is broken today and what I think this type of platform should look like.

I don't want to sound too much like a tech bro, and I won't rehash "The Innovator's Dilemma," but the classic cases here are Kodak and Blockbuster. These companies (and many others) achieved massive success, which created an inertia resistant to change. We all know how those stories ended, but just throwing up our hands and saying "do better" isn't constructive. So, what caused these outcomes? Do we see these signs in today's prediction markets?

Sometimes, the obstacle is technical. Startups often build products in a specific, opinionated way that might work well initially (which is an achievement in itself for a startup!), but quickly solidifies into an architectural straitjacket for the future. Wanting to scale after the initial explosion, or adjust the product design, means threatening some core components that seem to work. People naturally tend to fix problems with incremental patches, but this quickly turns the product into a Frankenstein's monster. And it only postpones accepting the brutal truth: what's really needed is a complete rebuild or reimagining of the product.

This happened with early social networks when they hit performance ceilings. Friendster was the pioneer of social networking in 2002, connecting millions of users online to "friends of friends." But trouble came when a specific feature (viewing friends within "three degrees") caused the platform to crash under the load of calculating exponential connections.

The team refused to scale back this feature, instead focusing on new ideas and fancy partnerships, even as existing users threatened to churn to MySpace. Friendster reached a local peak of popularity but couldn't cross it because its core architecture was flawed, and the team refused to acknowledge, dismantle, and fix it. (By the way, MySpace later fell into its own kind of "local optimum" trap: it was built on a unique user experience of highly customizable profile pages and focused on music/pop culture groups. The platform was primarily ad-driven and eventually over-relied on its ad portal model, while Facebook emerged with a cleaner, faster network based on "real" identities. Facebook attracted some early MySpace users but was undoubtedly more appealing to the next large wave of social media users.)

The persistence of this type of behavior is not surprising. We are all human. Achieving some semblance of success, especially as a startup with an extremely high failure rate, naturally inflates the ego. Founders and investors start believing their own hype and double down on the formula that got them here, even as warning signs flash brighter and brighter. It's easy to ignore new information, even refuse to face the reality that the current environment is different from the past. The human brain is so interesting; with enough motivation, we can rationalize many things.

Stagnant "Research In Motion"

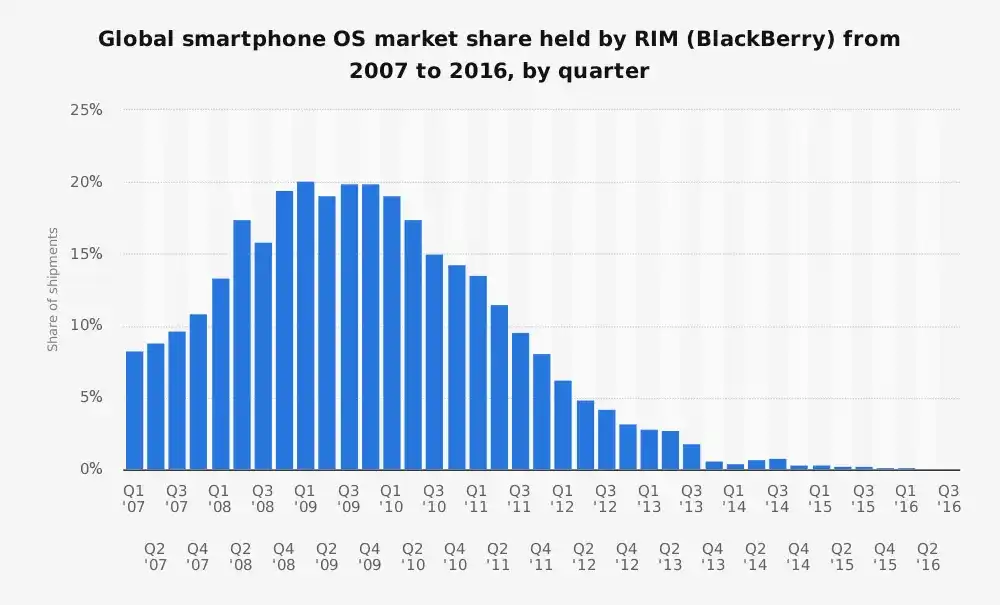

Before the iPhone, Research In Motion (RIM)'s BlackBerry was the king of smartphones, holding over 40% of the US smartphone market. It was built on a specific philosophy of a smartphone: a better PDA (Personal Digital Assistant) optimized for enterprise users, specifically for email, battery life, and that addictive physical keyboard. However...

What might be underestimated today is that BlackBerry served its customers exceptionally well. Precisely because of this, RIM was unable to change as the world transformed around them.

It's well known that its leadership team initially dismissed the iPhone.

"It's insecure. The battery drains extremely fast, and it has a terrible digital keyboard." — Larry Conlee (RIM COO)

They quickly became defensive afterwards.

RIM arrogantly believed this new phone would never attract its enterprise customer base, which wasn't entirely unfounded. But this completely missed the epoch-making shift of smartphones transcending "email machines" to become "universal devices for everyone." The company suffered from severe "technical debt" and "platform debt," common symptoms of companies with early success. Their operating system and infrastructure were optimized for secure messaging and battery efficiency. By the time they accepted reality, it was too late.

There's a view that companies in such situations (the greater the initial success, the harder it is to evolve, which is also why Zuckerberg is the "GOAT/Greatest Of All Time") should operate with an almost schizophrenic mindset: one team dedicated to leveraging current success, another dedicated to disrupting it. Apple might be the prime example here, letting the iPhone cannibalize the iPod, then letting the iPad cannibalize the Mac. But if it were easy, everyone would be doing it.

Yahoo

This is probably the Mount Rushmore level of "missed opportunities." Once upon a time, Yahoo was the internet homepage for millions. It was the portal to the internet (you could even say the original "everything app")—news, email, finance, games, you name it. It treated search as just one of many features, so much so that Yahoo in the early 2000s didn't even use its own search technology (it outsourced search to third-party engines, even using Google at one point).

It's now well known that its leadership team passed up multiple opportunities to deepen search capabilities, most notably the chance to acquire Google for $5 billion in 2002. Obvious in hindsight, but Yahoo failed to understand what Google knew: search is the foundation of the digital experience. Whoever owns search will own internet traffic, and thus advertising revenue. Yahoo over-relied on its brand strength and display ads, while disastrously underestimating the massive shift towards "search-centric" navigation and later, personalized content streams from social networks.

Forgive me for using a cliché, but in bubble markets, "a rising tide lifts all boats." The crypto space knows this all too well (see OpenSea and countless other examples). It's hard to judge if your startup has genuine traction or is just riding a wave of unsustainable momentum. Making it even fuzzier, these periods often overlap with a surge in venture capital and speculative consumer behavior, which masks underlying fundamental issues. WeWork's rapid rise and fall illustrates this well: easy capital led to massive expansion, obscuring a completely broken business model.

Stripping away all the branding and fancy wording, WeWork's core business model was very simple:

Long-term lease office space → Spend money on renovation → Sublet short-term at a premium.

If you're unfamiliar with the story, you might think, hmm, that sounds a lot like a short-term landlord. That's exactly what it was. A real estate arbitrage trade disguised as a software platform.

But WeWork wasn't necessarily interested in building a lasting business; they were optimizing for something completely different: explosive growth and valuation narrative. This worked for a short time because Adam Neumann was extremely charismatic and could sell the vision. Investors bought into it and fueled a specific type of growth completely detached from reality (in WeWork's case, this meant opening as many offices in as many cities as possible regardless of profitability, i.e., "blitzscaling," locking in massive long-term leases, and scoffing at the idea that unit economics were crucial, believing "we can grow out of the losses"). Many outsiders (analysts) saw through it: this was a real estate company with inverted risk profiles, unstable customers, and structural losses built into the business itself.

Most of the above is retrospective analysis of failed companies. In a sense, it's "hindsight is 20/20." But it reflects three distinct failure modes: companies fail because they cannot progress technically, cannot identify and respond to competition, or cannot adjust their business model.

I believe we are now seeing the same play out in prediction markets.

The Promise of Prediction Markets

The theoretical promise of prediction markets is seductive:

Leverage the wisdom of the crowd = better information = turn speculation into collective insight = infinite markets

But today's leading platforms have hit a local peak. They've discovered a model that generates some traction and trading volume, but this design cannot achieve the true vision of "everything predictable and liquid."

On the surface, both leading platforms show signs of success; no one doubts that. Kalshi reports that the industry's annualized trading volume this year will be around $30 billion (more on how much of this is organic growth later). The industry saw a renewed surge of interest in 2024-25, especially as the on-chain finance narrative, coupled with the gamification of trading, further penetrates the cultural zeitgeist. The aggressive marketing pushes by Polymarket and Kalshi probably have something to do with it too (in some cases, brute force marketing does work).

But if we peel back the onion one layer deeper, we find some red flags suggesting growth and product-market fit (PMF) may not be what they appear. The elephant in the room is liquidity.

For these markets to function, they need deep liquidity, meaning lots of people willing to bet on either side of the market so that prices are meaningful and reveal true price discovery.

Kalshi and Polymarket struggle with this outside of a few very high-profile markets.

Huge trading volume is concentrated around major events (US elections, highly anticipated Fed decisions), but most markets exhibit extremely wide bid-ask spreads and little activity. In many cases, market makers don't even want to trade (a Kalshi founder recently admitted their internal market maker isn't even profitable).

This indicates these platforms haven't cracked the code on scaling market breadth and depth. They are stuck at a level: performing decently in a few dozen popular markets, but the long-tail vision of "markets for everything" remains unrealized.

To mask these issues, both companies have resorted to incentives and unsustainable behavior (sound familiar?), a classic sign of hitting a local optimum with insufficient organic growth.

(A side note: in this particular market dynamic, I have a feeling most people think these two are the only main players. I don't think that necessarily matters at this stage, but if the teams believe it, then being perceived as trailing in this supposed "two-horse race" becomes an existential threat to each company. That is a particularly precarious position, and in my view it rests on a false assumption.)

Polymarket launched a liquidity rewards program, trying to narrow spreads (theoretically, you get rewarded for placing orders near the current price). This helps make the order book look tighter and does provide a better experience for traders by reducing slippage to some extent. But this is still a subsidy. Similarly, Kalshi introduced trading volume incentive programs, essentially offering cashback based on user trading volume. They are paying people to use the product.

Now I can feel some of you shouting "Uber subsidized for a long time too!!!". Yes, incentives themselves aren't bad. But that doesn't mean they are good! (I also find it amusing how people always like to point to the exceptions to the rule rather than look at the pile of corpses.) Especially considering the current dynamics of prediction markets, this quickly becomes a hamster wheel you can't step off until it's too late.

Another fact we need to know is that a significant portion of the trading volume is wash trading. I think it's pointless to argue about the exact percentage, but clearly, wash trading makes markets appear more liquid than they are, when in reality it's just a few participants trading frequently to capture rewards or create market hype. This means natural demand is actually weaker than it appears.

"Last Trader Pricing"

In a healthy, well-oiled market, you should be able to place a bet near the current market odds without moving the price much. But that's not the case on these platforms today. Even medium-sized orders significantly impact the odds, a clear sign of insufficient volume. These markets often only reflect the moves of the last trader, which is at the heart of the liquidity issue I mentioned earlier. This status quo shows that while a small core group of users keeps some markets running, the markets overall are neither reliable nor liquid.
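To make the price-impact point concrete, here is a minimal sketch of a market buy walking an order book. Everything in it is an assumption for illustration: the `fill_market_buy` helper is hypothetical, and both books are invented numbers, not data from any platform. The two books share the same best ask and differ only in the depth behind it.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (ask price per YES share in dollars, shares offered)


def fill_market_buy(asks: List[Level], dollars: float) -> Tuple[float, float]:
    """Spend up to `dollars` walking the asks from best to worst.

    Returns (average price paid, last price level touched).
    """
    if not asks or dollars <= 0:
        raise ValueError("need a non-empty book and a positive order size")
    spent, shares, last_price = 0.0, 0.0, asks[0][0]
    for price, size in asks:
        budget = dollars - spent
        if budget <= 1e-9:  # order fully filled
            break
        take = min(size, budget / price)  # shares bought at this level
        spent += take * price
        shares += take
        last_price = price
    return spent / shares, last_price


# Invented books: same best ask (45c), very different depth behind it.
THIN_BOOK = [(0.45, 200.0), (0.48, 200.0), (0.55, 200.0), (0.65, 200.0)]
DEEP_BOOK = [(0.45, 20_000.0), (0.46, 20_000.0)]

for name, book in (("thin", THIN_BOOK), ("deep", DEEP_BOOK)):
    avg, last = fill_market_buy(book, 250.0)
    print(f"{name}: avg fill {avg:.3f}, last level touched {last:.2f}")
```

Under these made-up numbers, a $250 order leaves the deep book quoting 45 cents but pushes the thin book up to the 55-cent level, so the displayed "odds" end up reflecting one trader's order rather than any new information.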

But why is this?

The market structure of pure binary trading cannot compete with perpetual contracts. It's a cumbersome approach that leads to fragmented liquidity, and even though these teams try to work around it with band-aids, the effect is clumsy at best. In many of these markets, you also encounter a weird structure where there's an "other" option representing the unknown, but this creates a problem of its own: whenever a new contender emerges, it has to be split out of that basket into its own separate market.

The binary nature also means you cannot offer true leverage in the way users want, which in turn means you cannot generate valuable trading volume like perpetual contracts do. I see people arguing about this on Twitter, but I'm still shocked they fail to recognize: betting $100 on a prediction market for a 1-cent probability outcome is not the same as opening a $100, 100x leveraged position on a perpetual exchange.
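A rough sketch of why the two positions differ, under simplifying assumptions: the functions `binary_pnl` and `perp_long_pnl` are hypothetical, the perpetual is liquidated the moment losses reach the posted margin, and maintenance margin, fees, and funding are ignored. The price paths are invented.

```python
from typing import Sequence


def binary_pnl(stake: float, price: float, resolves_yes: bool) -> float:
    """PnL of buying `stake` dollars of YES shares at `price`, held to resolution."""
    shares = stake / price  # each share pays $1 if YES, $0 if NO
    return shares - stake if resolves_yes else -stake


def perp_long_pnl(margin: float, leverage: float, path: Sequence[float]) -> float:
    """PnL of a leveraged long along a price path.

    Simplified: the position is liquidated as soon as losses reach the
    margin; maintenance margin, fees, and funding are ignored.
    """
    entry = path[0]
    notional = margin * leverage
    pnl = 0.0
    for price in path[1:]:
        pnl = notional * (price / entry - 1.0)
        if pnl <= -margin:
            return -margin  # wiped out; later prices no longer matter
    return pnl


# $100 on a 1-cent outcome: all-or-nothing at resolution, path-independent.
print(binary_pnl(100, 0.01, True), binary_pnl(100, 0.01, False))

# $100 margin at 100x: linear exposure on $10,000 notional, path-dependent.
print(perp_long_pnl(100, 100, [100, 101]))      # small favorable move
print(perp_long_pnl(100, 100, [100, 98, 120]))  # dip first, rally too late
```

The binary bet can only lose its stake and survives any interim price path; the leveraged position earns or loses on every tick and can be closed out by a small dip even if the price later rallies. Both put $100 at risk, but they are different instruments.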

The dirty secret here is that to solve this fundamental problem, you need to redesign the underlying protocol to allow for generalization and treat dynamic events as first-class citizens. You have to create a perpetual contract-like experience, which means you must solve the jump risk inherent in binary outcome markets. This is obvious to anyone actively using both perpetual exchanges and prediction markets—and unbeknownst to these teams, these are precisely the users you need to attract.

Solving jump risk means redesigning the system to ensure asset prices move continuously, i.e., they don't just arbitrarily jump from, say, 45% probability to 100%. (We've seen how frequently and brazenly these events are manipulated or insider traded, but that's another can of worms I don't want to open right now. Please stop committing crimes.)

Without addressing this core limitation, you can never introduce the kind of leverage needed to make the product attractive to users (the ones who bring real value to your platform). Leverage relies on continuous price movement to safely close positions before losses exceed collateral, preventing sudden spikes (e.g., from 45% to 100% instantly) from wiping out one side of the order book. Without this, you cannot margin call or liquidate in time, and the platform will go bankrupt sooner or later.
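The liquidation argument can be sketched with a toy example. This is an illustration under stated assumptions, not any platform's risk engine: `liquidate_short_yes` is a hypothetical helper, the position is a short of 1,000 YES shares at 45 cents backed by $50 of collateral, and the liquidation engine can close the position at any price it observes.

```python
from typing import Sequence, Tuple


def liquidate_short_yes(entry: float, shares: float, collateral: float,
                        path: Sequence[float], maintenance: float) -> Tuple[float, float]:
    """Walk `path` for a short YES position.

    The position is closed at the first observed price where equity falls to
    or below `maintenance`. Returns (exit price, bad debt left for the platform).
    If no liquidation occurs, the exit price is the last price and bad debt is 0.
    """
    for price in path[1:]:
        equity = collateral - shares * (price - entry)
        if equity <= maintenance:
            return price, max(0.0, -equity)
    return path[-1], 0.0


ENTRY, SHARES, COLLATERAL, MAINTENANCE = 0.45, 1000.0, 50.0, 20.0

# Continuous-looking path: one-cent ticks from 45c up to 100c.
gradual = [round(0.45 + 0.01 * i, 2) for i in range(56)]
# Jump path: the event resolves YES and the price gaps straight to $1.
jump = [0.45, 1.00]

for name, path in (("gradual", gradual), ("jump", jump)):
    exit_price, bad_debt = liquidate_short_yes(ENTRY, SHARES, COLLATERAL, path, MAINTENANCE)
    print(f"{name}: closed at {exit_price:.2f}, bad debt ${bad_debt:.2f}")
```

On the gradual path the engine gets a chance to close the position while it still has positive equity, so the platform absorbs nothing. On the jump path there is no intermediate price to act on, and the loss beyond the collateral (roughly $500 in this toy setup) lands on the platform or its counterparties.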

Another core reason these markets don't work in their current structure is the lack of a native mechanism for multi-outcome hedging. First off, as is, there's no natural way to hedge because these markets resolve YES/NO, and the "underlying" is the outcome itself. In contrast, if I'm long BTC perpetuals, I can short BTC elsewhere to hedge. This concept doesn't exist in today's prediction market structure, making it extremely difficult to provide deep liquidity (or leverage) if market makers are forced to take on direct event risk. This again reinforces why I find the argument "prediction markets are nascent, we're in a high-growth phase" naive.

Prediction markets eventually settle (i.e., they actually close at resolution), whereas perpetual futures obviously do not. They are open-ended. A perpetual contract-like design could change the market by incentivizing active trading, making it function more continuously, thus alleviating some of the common behaviors that make prediction markets unattractive (many participants just hold until resolution instead of actively trading probabilities). Furthermore, the oracle problem is more pronounced in prediction markets: outcomes are one-time, discrete results, whereas the oracle price feeds that perpetuals rely on, while also problematic, are at least continuously updated.

Behind these design issues lies the capital efficiency problem, but this seems well understood by now. Personally, I don't think "earning stablecoin yield" on committed capital moves the needle substantially. Especially considering exchanges offer this yield anyway. So what's the trade-off being made here? Pre-funding every trade is certainly good for eliminating counterparty risk! And you will attract a subset of users.

But it's disastrous for the broader user base you need: it's extremely inefficient from a capital perspective, and it massively increases the cost of participation. This is especially bad when these markets need different types of users to function at scale, as these choices mean the experience is worse for each user group. Market makers need huge amounts of capital to provide liquidity, and retail traders face massive opportunity costs.

There's certainly more to unpack here, especially around how one might try to solve some of these fundamental challenges. More complex and dynamic margining would be necessary, particularly factoring in things like "time to event" (risk is highest when event resolution is near and odds are close to 50/50). Introducing concepts like leverage decay as resolution approaches would also be needed, and early tiered liquidation levels would help.
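One way to picture "time to event" margining and leverage decay is a toy leverage cap. The formula below is purely an assumption made up for illustration (linear decay over a fixed window, plus a haircut that peaks at 50/50 odds); the function names and all parameter values are hypothetical and not taken from any exchange.

```python
def max_leverage(p_yes: float, hours_to_resolution: float,
                 base_leverage: float = 10.0,
                 decay_window_hours: float = 72.0,
                 uncertainty_haircut: float = 0.5) -> float:
    """Toy leverage cap that shrinks near resolution and near 50/50 odds."""
    if not 0.0 < p_yes < 1.0:
        raise ValueError("p_yes must be strictly between 0 and 1")
    if hours_to_resolution < 0:
        raise ValueError("hours_to_resolution must be non-negative")
    # Leverage decay: full leverage outside the window, falling linearly to 0 at resolution.
    time_factor = min(1.0, hours_to_resolution / decay_window_hours)
    # 4p(1-p) equals 1 at p = 0.5 and approaches 0 as the outcome becomes near-certain.
    closeness_to_even = 4.0 * p_yes * (1.0 - p_yes)
    uncertainty_factor = 1.0 - uncertainty_haircut * closeness_to_even
    # Floor at 1x, i.e. fully collateralized, which is today's default model.
    return max(1.0, base_leverage * time_factor * uncertainty_factor)


def initial_margin(notional: float, p_yes: float, hours_to_resolution: float) -> float:
    """Collateral required to open `notional` exposure under the toy cap."""
    return notional / max_leverage(p_yes, hours_to_resolution)


for p, hours in ((0.9, 100.0), (0.5, 100.0), (0.5, 24.0), (0.5, 0.0)):
    print(f"p={p:.2f}, {hours:5.1f}h left: "
          f"cap {max_leverage(p, hours):.2f}x, "
          f"margin on $1,000 = ${initial_margin(1000.0, p, hours):.2f}")
```

The design choice worth noting is the floor: as resolution approaches, the cap converges to 1x, so the riskiest window falls back to the fully pre-funded model while earlier trading gets more capital-efficient treatment. Tiered early liquidation levels would sit on top of a schedule like this.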

Adopting a broker model from traditional finance, enabling instant collateralization, is another step in the right direction. This would free up capital for more efficient use, allow placing orders across markets simultaneously, and update the book after fills. Introducing these mechanisms first in scalar markets, then expanding to binary markets, seems the most logical sequence.

The point is, there's a huge design space here that remains unexplored, partly because people believe today's model is the final form. I just don't see enough people willing to first acknowledge these limitations exist. Perhaps unsurprisingly, those who recognize this are often the very user types these platforms should want to attract (aka perpetual traders).

But what I see is their criticism of prediction markets being mostly waved away by devotees and told to look at the trading volume and growth numbers of these two platforms (absolutely real and organic numbers, ahem). I want prediction markets to evolve, I want them to achieve mass adoption, and I personally think trading everything is a good thing. Much of my frustration stems from a generally accepted view that today's version is the best version, but clearly I don't share that view.