By Xiang Xianzhi

The night before last, Moonshot AI released Kimi K2.6 and raised the API input price from $0.60 per million tokens to $0.95 per million tokens.

A 58% increase. The first price hike since the K2 series launched.

But almost no one seems to be paying attention.

Four months ago, in an internal letter on the last day of 2025, Yang Zhilin wrote that Moonshot AI was "not in a hurry for an IPO in the short term." At that time, Zhipu and MiniMax had already submitted their prospectuses to the Hong Kong Stock Exchange. This was clearly a deliberate positioning strategy.

He also wrote in that letter that the company's cash reserves exceeded $1.4 billion and that the $500 million Series C round was oversubscribed. The subtext: the primary market had not yet been tapped out, so there was no rush to turn to the secondary market.

Three months later, Bloomberg reported that he had begun talks with CICC and Goldman Sachs. Three weeks after that, K2.6 was launched.

A person who dislikes "rushing" did in four months what he previously said he wouldn't do.

K2.6 is certainly not the last product release before Moonshot AI's IPO. But this release is Yang Zhilin's first roadshow since Moonshot AI began planning to go public.

Kimi Has Never Released a Model Version Like This Before

Kimi had a set routine for releasing models in the past.

Publish a technical report, open-source the weights, top the HuggingFace leaderboard, and then await scrutiny from the tech community. K1.5 countered o1 with a reasoning methodology, with technical details outweighing benchmark numbers; K2 Thinking directly dumped the weights on HuggingFace, letting developers run their own tests. These moves were aimed at developers and researchers.

The rhetoric also came from the tech community: here is the problem we solved, here is why our method is better, and you are welcome to reproduce it.

K2.6's moves are somewhat different.

First, the price increase. In RMB terms, the input price for K2.6 is 6.5 yuan per million tokens (cache miss), compared to 4 yuan for K2.5. The output price increased from 21 yuan to 27 yuan. The cache hit price is 1.1 yuan.

This is a structured price increase. On the surface every tier goes up, but the cache hit tier has the smallest increase in absolute terms: from 0.7 yuan to 1.1 yuan, or about $0.16 per million tokens.

This $0.16 is the key to understanding this price hike.

Enterprise users such as code assistants, Agent orchestration frameworks, and smart customer service repeatedly call the same system prompt. Their prefixes are highly reusable, and cache hit rates can reach 75% to 83%. For these customers, Moonshot AI kept the cached price nearly flat in absolute terms.

For scattered customers who use it occasionally with different prompts each time, this price increase falls squarely on them.
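The arithmetic behind this split can be sketched as follows. It is a minimal illustration, not Moonshot AI's billing formula: the prices are the yuan-per-million-token figures quoted above, while the helper names and the assumption that a single hit rate applies uniformly to all input tokens are hypothetical.

```python
# Prices in yuan per million tokens, taken from the figures quoted in this article.
K25 = {"input_miss": 4.0, "input_hit": 0.7, "output": 21.0}
K26 = {"input_miss": 6.5, "input_hit": 1.1, "output": 27.0}


def tier_changes(old, new):
    """Return (absolute change, relative change) for each price tier."""
    return {
        tier: (new[tier] - old[tier], (new[tier] - old[tier]) / old[tier])
        for tier in old
    }


def blended_input_price(prices, hit_rate):
    """Average input price when a fraction `hit_rate` of input tokens hit the cache.

    Assumption: the hit rate applies uniformly to all input tokens.
    """
    return hit_rate * prices["input_hit"] + (1.0 - hit_rate) * prices["input_miss"]


if __name__ == "__main__":
    for tier, (abs_delta, rel_delta) in tier_changes(K25, K26).items():
        print(f"{tier}: +{abs_delta:.2f} yuan ({rel_delta:.1%})")
    # 0% models a customer with no reusable prefix; 75% and 83% are the
    # enterprise hit rates cited in the text.
    for hit_rate in (0.0, 0.75, 0.83):
        old = blended_input_price(K25, hit_rate)
        new = blended_input_price(K26, hit_rate)
        print(f"hit rate {hit_rate:.0%}: {old:.3f} -> {new:.3f} yuan (+{new - old:.3f})")
```

Under these assumptions, the extra input cost per million tokens shrinks as the hit rate rises, which is the sense in which heavy cache users are shielded. The relative increase in the blended input price stays close to 60% at every hit rate, so the shielding is in absolute yuan, not in percentage terms.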

This is a friendly price adjustment for "enterprises already tied to Kimi" and an unfriendly one for "scattered customers still comparing prices". The former are the "enterprise locked-in clients" in the IPO story, the latter are the "long-tail users" that won't appear on the roadshow PPT. Moonshot AI knows very well who its valuation assets are.

The compute profile of the Agent era differs from that of the chat era. A chat exchange is dozens of tokens back and forth; an Agent task involves thousands of tool calls and hundreds of thousands of tokens. The official K2.6 use cases include deploying a Qwen3.5 model locally on a Mac, calling tools more than 4,000 times over 12 hours; refactoring the open-source matching engine exchange-core, with 1,000+ tool calls over 13 hours; and, at the extreme, 5 days of autonomous operation covering monitoring, alerts, and fault response. A single task of this kind consumes hundreds or even thousands of times the tokens of a chat scenario in the K2.5 era.

Of course, these cases are meant to illustrate long-range reasoning capabilities, but coupled with K2.6's 300-agent cluster, the token consumption must be staggering.

At the old price of $0.60, this kind of Agent task might lose money per call. At $0.95, it barely covers the inference cost.

So the price increase isn't confidence, it's necessity. Moonshot AI has raised $2.5 billion cumulatively, with $1.4 billion cash reserve from Series C to C+, but if the next-gen K3 is truly a 3-4 trillion parameter scale, a single pre-training run might eat up half of that.

Without a price increase, the gross margin data for the last few quarters before the IPO would look bad. The prospectus must disclose gross margin.

This could have been explained openly: the Agent era requires a new pricing model. But Moonshot AI didn't explain it, because consumer (C-end) users have only just left the free era of K2 Thinking, and telling them "I raised prices" now is not a good product narrative.

It's a story for another audience—Kimi already has a group of enterprise clients who can't leave it, and they'll use it even if it's more expensive. (Like myself)

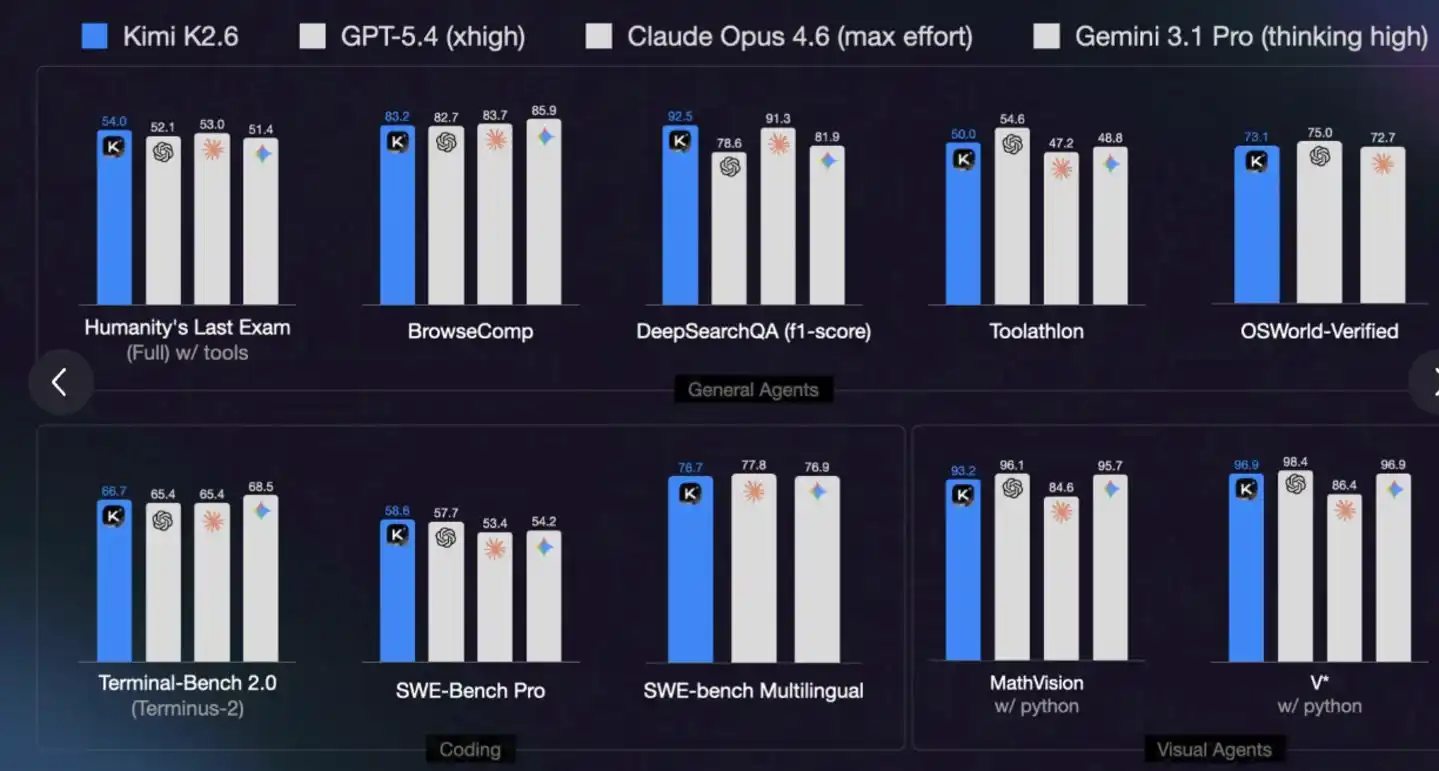

The second thing is benchmark comparisons. The reference models Moonshot AI chose for K2.6 are GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. All three are previous-generation flagships.

The same week, Anthropic released Claude Mythos, and Opus 4.7 just launched—both are a generation stronger than Opus 4.6. K2.6 didn't benchmark against them.

This is actually an active choice. Benchmarking against Mythos, K2.6 falls into the "catch-up" position; benchmarking against Opus 4.6, K2.6 falls into the "first tier" position. An $18 billion valuation needs the latter.

Kimi didn't really do this in the past. When K2 Thinking was released, Moonshot AI ran the full benchmark suite and published every result, good and bad, for developers to judge for themselves. That was the tech community's way: the community understands where you are strong and where you are weak, and is willing to accept a model with obvious shortcomings but a clear roadmap.

Roadshow PPTs do not work that way. A roadshow PPT needs a conclusion a fund manager can understand in 30 seconds: "on par with or superior to top international closed-source models." This sentence is verbatim from the K2.6 official blog.

The third thing is the Agent cluster and open-source dual track. K2.6 upgraded something called Claw Groups—a heterogeneous Agent ecosystem where Agents with different devices, different models, and different toolchains run in a collaborative space, with K2.6 acting as the scheduler. 300 sub-Agents in parallel, 4000 steps of collaboration, 5 days of autonomous operation.

These numbers are written for enterprise clients. Not for developers. For a developer, "300 Agents in parallel" has no practical meaning—they won't run 300 Agents in a local project. This configuration only makes sense for one type of client: large enterprises that need an Agent matrix to automate entire operational processes.

It's targeting the Salesforce story, not the HuggingFace story.

Meanwhile, K2.6 is fully open-sourced. Yang Zhilin said at the Zhongguancun Forum on March 26th that open source will be an absolute victory.

Open source + enterprise Agent clusters—this is a position between DeepSeek and Anthropic, half and half of both models. It sounds like a good story. But occupying both ends means having to prove both.

The capital market doesn't really care if these questions have answers. It only requires you to have a story for each line.

The price increase, the benchmarking, and the Agent cluster have one unusual thing in common: none of them is aimed at the tech community.

Kimi's underlying logic for releasing models in the past was—if developers like us, enterprise clients will eventually follow, and the capital market will follow even later. This playbook has a name: technical sincerity.

K2.6 isn't waiting. The price increase is a direct declaration of pricing power with enterprise (B-end) customers; benchmarking against GPT-5.4 preemptively secures a valuation position; Agent clusters and Claw Groups are the showroom for the enterprise service story.

Each one answers a question on the roadshow PPT: What is your commercialization capability? What is your benchmark position? What is your enterprise moat?

Compressing the time from Preview to GA to 8 days is also this logic. Previous versions of the K2 series all went through 2-3 month preview periods, letting the community test enough, provide feedback, and iterate enough. K2.6 didn't give itself this space. It's not that the technology matured faster; the window won't wait.

An IPO in the second half of 2026 requires 4 to 6 months for filing, inquiry, hearing, roadshow, pricing, and cooling-off period according to HKEX procedures. Starting the roadshow in September means the product must be ready by April.

If GA isn't released in April, there's no window later.

K3 is the Real Grand Finale

But K2.6 is also not the strongest card Moonshot AI can play.

There is a very restrained sentence in the official blog—K2.6 is the "runway prepared for K3".

12-hour long-range coding, 300-Agent cluster, context compressor—these are not the final form of the K2 series; they are the execution layer infrastructure that a larger base model can support. Moonshot AI wouldn't spend effort making this work unless it was certain a larger model would consume these capabilities.

Rumors about K3 surfaced on Reddit earlier, pointing to a target scale of 3-4 trillion parameters. Compared with the trillion-parameter scale of the K2 series, that is a step change in base-model size.

If K3 can be released during the roadshow window—that is the real answer sheet. The runway paved by K2.6 allows K3 to take off.

The question is whether it can make it. How long does it take to train a 3-4 trillion parameter model? GPT-5 and Claude Opus 4.6 both had roughly 6-9 month pre-training cycles, plus several months for post-training and safety evaluation. Can Moonshot AI's existing compute—judging from the Alibaba Cloud cooperation and current cash reserves—compress this cycle to 5-6 months?

This bet is placed on K2.6.

Eight days from Preview to GA, Agent cluster expanding from 100 to 300 in one go, long-range execution stretching from hundreds of steps to 4000 steps—every move compresses time, making room for the possibility of K3.

If K3 can be released before August or September—that's the grand finale on the roadshow.

If it doesn't make it—K3 becomes a "model that can only be released after the IPO," and K2.6 has to shoulder the entire valuation narrative alone.

Moonshot AI is betting it can be done.

What Does the $18 Billion Valuation Anchor?

Back to valuation.

Three months ago, Moonshot AI was valued at $4.3 billion; two months ago, $5.5 billion; now, $18 billion.

It's not that Moonshot AI became four times stronger in these three months. It's that Zhipu and MiniMax went public and rose 4x, pushing the ceiling of the entire sector up. Zhipu's HK market cap is HK$305 billion, MiniMax's is HK$309.2 billion—both exceeding SenseTime's historical peak.

The valuation logic for these two is not "what the next-gen technology can do," but "how highly AI assets can be priced within the Hong Kong market's pool of capital."

Moonshot AI's $18 billion valuation anchors the same thing. It is no longer proving it is the strongest Chinese AI company; it is proving it is a priceable Chinese AI company.

All of K2.6's moves—price increase, benchmarking, Agent cluster, open-source dual track—respond to this proposition.

But there is one thing K2.6 has not yet proven. Will Kimi's consumer users be willing to pay for the more expensive K2.6? Will paying subscribers churn to DeepSeek or MiniMax? How many enterprise clients are actually running Claw Groups, and how many have only signed a proof of concept (POC)?

These are numbers investors will definitely ask during the roadshow. K2.6 can only put the product out now. Whether it turns into numbers depends on the next three months.

When Zhipu went public, it submitted a prospectus where profits weren't yet positive; MiniMax did too. Investors accepted this story because the grand narrative of "Chinese AI assets" had just opened. Moonshot AI is half a year late. For the same question, Zhipu and MiniMax could say "we are validating," Moonshot AI must say "we are monetizing."

This pressure falls entirely on the three months between K2.6 and K3.

So back to the initial question—Is K2.6 Moonshot AI's final roadshow before the IPO?

No.

If K3 catches the roadshow window, K3 is the real grand finale, and K2.6 is just the runway paved for it. If K3 misses the roadshow window, K2.6 has to carry the entire IPO narrative. In that case it is Yang Zhilin's first roadshow session, one he was forced to start early.

Neither outcome was what Yang Zhilin wanted four months ago.

But everything that happened in these four months (the Zhipu and MiniMax IPOs, the valuation ceiling being pushed up, the window being compressed) forced a person who dislikes "rushing" to rush.

When K3 is released, it will be the second act.