Conversation with Mai-Lan from AWS: The Next Battlefield for S3 – How to Handle the Data Consumption Surge in the Agent Era

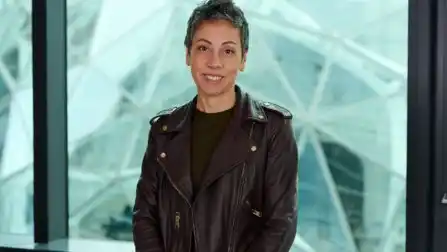

The explosive rise of agentic AI, exemplified by OpenClaw in China, is putting unprecedented pressure on cloud data infrastructure. Unlike human engineers, Agents consume data in an "extremely active and aggressive" parallel fashion, launching tens to hundreds of queries simultaneously, which drives call frequency and throughput far higher. Mai-Lan Tomsen Bukovec, VP of Technology at AWS, emphasizes that the cost-effectiveness of the data layer is now a decisive factor for customers building Agent systems.

To address this, AWS is positioning Amazon S3, its foundational storage service and now 20 years old, as the critical data platform for the Agent era. Recent key innovations include: **S3 Tables**, with native Apache Iceberg support, which lets Agents query structured data using familiar SQL; **S3 Vectors**, which introduces vectors as a native data type for storing contextual data and can serve as a shared "memory space" for AI systems; and the newly launched **S3 Files**, which provides a POSIX-compliant file system interface over S3, letting Agents work with data through the familiar paradigm of files and directories.

These enhancements are designed to match the data interaction patterns of Agents, which are built on models already proficient with SQL, file systems, and vector-based context. By unifying these access methods on the scalable, durable, and cost-efficient S3 foundation, AWS aims to provide a data backbone capable of supporting the next wave of hyper-scale, high-frequency Agent applications.

marsbit, 12 hours ago