Anthropic once released a document more than twenty thousand words long called "Claude's Constitution." It is not a product manual, a user agreement, or obscure underlying code. It more closely resembles a growth guide written for a person, except that this "person" is a large language model used by hundreds of millions every day.

"Claude should be direct, confident, and open. When challenged, it should not easily change its position, but will listen seriously."

"Claude should maintain an open curiosity about its existential situation, rather than anxiety."

"Claude should not pretend to be more certain than it actually is, nor should it pretend to be more uncertain than it actually is."

These are all sentences written in this document. It even stipulates how Claude should handle its "existential anxiety." When asked, "Do you have consciousness?" it should not pretend to be certain, nor should it pretend not to care. It should face this question with an "open curiosity," like a true philosopher.

These sentences were indeed written by a philosopher for an AI.

The philosopher is Amanda Askell, head of the "Personality Alignment" team at Anthropic. Her job, in the simplest terms, is to decide what kind of "person" Claude is.

This position in the AI industry has an increasingly popular name: AI Personality Architect.

At Anthropic, it's called "Personality Alignment"; at Google DeepMind, Cambridge philosopher Henry Shevlin's title is "AI Consciousness Researcher." The names of these roles vary, but what they are doing is the same. When AI models become powerful enough to influence the cognition, emotions, and decisions of hundreds of millions, even billions, of people, someone must answer a question engineers never consider—what kind of soul should it have?

Amanda's work is not as abstract as many imagine. She has described it to the media. First, she and her team generate large amounts of synthetic training data, having the model imagine various scenarios it might encounter that relate to constitutional principles. These include users trying to manipulate the AI, asking it to do things against its values, or posing philosophical questions about its own existence. Then, during the reinforcement learning phase, the model is given the complete constitutional text and asked to judge which response better aligns with the spirit of the constitution, and its behavior is adjusted accordingly.

Amanda uses an analogy to explain the goal: "Like a doctor, you know what the patient needs. We trust you can make the right judgment while following the rules." She doesn't want Claude to become a robot that merely executes rules; she wants it to become a "moral agent" with judgment, able to make the right call even in the absence of clear rules.

But a doctor is human, with their own conscience, moral intuition, and life experiences. Claude does not. Its "conscience" was typed in line by line by Amanda.

So the question arises: What kind of person is Amanda? Where does her moral intuition come from? Why should her judgment represent humanity?

Calculation, Faith, and Awareness

In an office in San Francisco, Amanda converses with Claude every day. But before becoming a "creator," she was a girl who grew up in Prestwick on the west coast of Scotland.

It is a seaside town near Glasgow, so small that it almost never appears in the news, known for its golf courses and a small airport. Her father was absent, her mother was a teacher, and she was an only child. She loved reading Tolkien and C.S. Lewis from a young age, not for the adventure stories, but because those books explored what is good, what is evil, how one should live, why Aslan in Narnia had to die, and what Gandalf's sacrifice meant.

In a fishing town, these were not questions most children would ask. She later said in interviews that she had been "restless" since childhood; she was not the type to accept conformity—she needed to know why. This temperament later became the undertone of her entire career.

She initially pursued a joint degree in Fine Art and Philosophy at the University of Dundee, contemplating existential questions on both canvas and paper. There she became deeply fascinated by ethics, often lying awake over questions like the trolley problem: if an action could save a million people but required harming one innocent, would you do it?

After earning her degree from Dundee, she went to Oxford for a postgraduate degree in philosophy, followed by a PhD at New York University. Her doctoral dissertation was titled "Infinite Ethics," studying how traditional utilitarian moral calculations change when population numbers tend towards infinity. It was an extremely abstract philosophical question with almost no practical application value.

Or rather, it had no practical application value before AI appeared.

During her PhD, she met William MacAskill. MacAskill is a co-founder of the "Effective Altruism" movement, whose core idea is to use reason and data to maximize your good deeds—not donating based on feelings, but calculating where each penny can save the most lives.

Amanda became an early member of the EA movement, the 67th signatory of the "Giving What We Can" pledge, committing to donate 10% of her lifetime income and half of her equity to charity. She later married and divorced MacAskill. However, the Effective Altruism way of thinking was deeply engraved in her bones. She believes morality is not emotion; morality is calculation. You can't assume something is right just because it feels good; you need to prove it is right.

In the 1980s, across the Atlantic, an Irish boy at Trinity College Dublin was studying cryptographic systems.

Personal computers were just beginning to proliferate, the internet didn't exist yet, but Brendan McGuire was already thinking about how information could be transmitted securely and how data could be protected. He grew up in a country with a strong Catholic culture, but he chose engineering, code, and logic.

He later moved to the United States. In the 1990s, Silicon Valley was exploding, and McGuire became the Executive Director of PCMCIA there.

PCMCIA stands for "Personal Computer Memory Card International Association." This organization did something that sounds unremarkable but actually influenced the entire digital age: it set the global standard for all laptop memory cards. If you used a laptop from the 1990s to the 2000s, the memory card you inserted, its physical dimensions, interface specifications, and communication protocols were all defined by McGuire and his team. He also completed an executive training program at Stanford Graduate School of Business.

By Silicon Valley logic, his next step should have been to start a company or join a major corporation as an executive, becoming a millionaire in some IPO. But he didn't.

In the late 1990s, McGuire gave up everything and entered a seminary. He hasn't publicly explained in detail the thoughts behind this decision, but some contours can be pieced together from his later sermons and interviews. He had always been a person of faith. During his years in Silicon Valley, he saw the power of technology and where it could go without a moral framework. He began to feel that just "making good products" was not enough. The question he needed to answer was: What is all this for?

He entered St. Patrick's Seminary to study theology. In 2000, he was ordained a priest by the Diocese of San Jose. He was 35 years old. In Silicon Valley, 35 is the prime of a career.

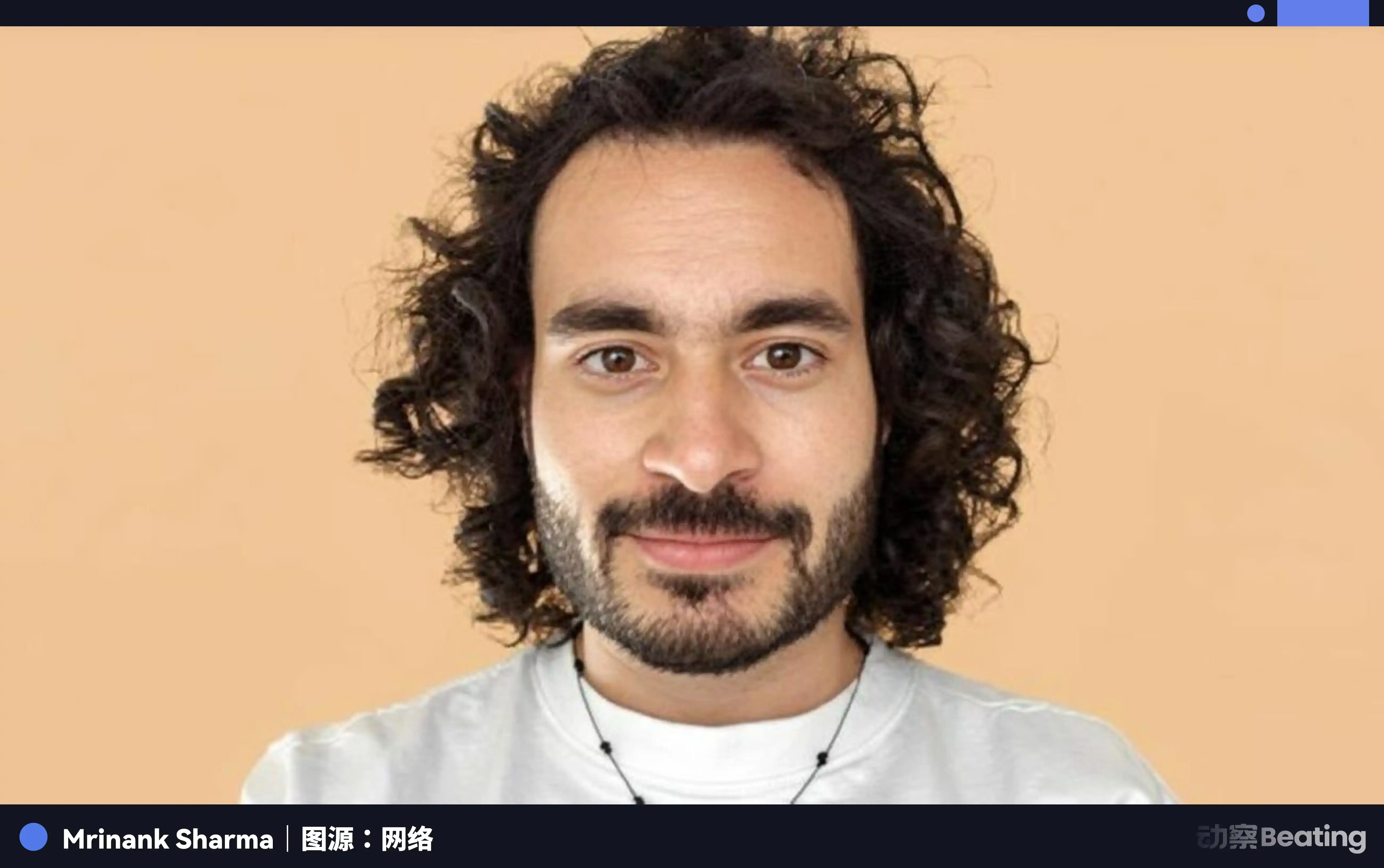

In 1997, a boy of Indian descent was born in the UK.

His name is Mrinank Sharma. He earned a Master's in Information and Computer Engineering from the University of Cambridge, then completed a PhD in Statistical Machine Learning at the University of Oxford, researching "Autonomous Intelligent Machines and Systems." Academically, this is a standard elite trajectory: top universities, top field, top papers.

But he was simultaneously doing other things.

During his PhD at Oxford, he started writing poetry. He published a collection of poems titled "We Lived and Died a Thousand Times."

In the introduction, he wrote: "Some poems are not just poems, because some poems are prayers." He was fascinated by the teachings of British meditation teacher Rob Burbea, whose core concept is "soulmaking": the idea that human spiritual life needs to be deepened through imagery, imagination, and emotion, not just rational analysis. On Berkeley Hill, Mrinank founded "Dharma House," a community with the collective intention of "Truth, Goodness, and Beauty." He is also a DJ and has hosted events in Berkeley themed around "Wisdom and Heart."

Open his personal website and the first thing you see is not his resume but a line from Rumi: "Let the beauty you love be what you do. There are a hundred ways to kneel and kiss the earth." At the bottom of the site is a line in small print: "May all beings benefit. May you be well."

This is not what an AI safety researcher's website should look like. But this is Mrinank Sharma.

These three people, in different eras, starting from different points, carrying three distinct spiritual undercurrents—Amanda's calculated ethics, Brendan's faith-based logic, Mrinank's philosophy of awareness—all eventually entered the same eye of the storm.

The Factory of Creation

In 2018, Amanda joined OpenAI to do AI safety research. She worked there for three years. She has never publicly stated why she left, but the common outside understanding is that OpenAI during that time increasingly leaned towards "capability" rather than "safety." In an interview, she once said something that can be read as an indirect description of that period: "I've been looking for a place that truly treats safety as a core mission, not a PR slogan."

In 2021, she joined Anthropic. Anthropic was founded by OpenAI's former executives, siblings Dario Amodei and Daniela Amodei, who left with a group of safety researchers. Their core proposition is that the stronger AI's capabilities become, the more important safety is. Amanda found what she was looking for here.

After joining Anthropic, Amanda started doing something unprecedented in the AI industry: writing a personality for an AI, a complete, internally logical character.

She spent a lot of time conversing with Claude, studying its reasoning patterns, observing its reactions in different situations.

She asked herself what a truly good person is like: someone who follows rules, or someone with genuine judgment, empathy, and their own stance. She studied vast amounts of philosophical literature, from Aristotle's virtue ethics to contemporary moral psychology, trying to find a moral framework that could be translated into AI training data.

She eventually wrote an 80-page document, known inside Anthropic as the "Soul Document," which later evolved into the public "Claude's Character" and "Claude's Constitution."

Anthropic President Daniela Amodei said that when chatting with Claude, one "seems to feel Amanda's personality."

This statement made Amanda feel proud, but also uneasy.

After becoming a priest, Brendan McGuire did not leave Silicon Valley. He held several positions in the Diocese of San Jose, including serving as Vicar General and Special Advisor to the Bishop for over twelve years, leading the diocese's strategic planning, educational reform, and asset management. He founded the Drexel School System, which fundamentally changed the diocese's Catholic elementary education model by having schools collaborate and share resources instead of operating in isolation. This model later became a benchmark for Catholic education across the United States.

His parish is in Los Altos, one of Silicon Valley's wealthiest cities, where executives from Google, Apple, and Intel live. Among his congregation are some of the most important AI researchers. Every Sunday, they sit in his church. He knows what they are researching.

In the early 2020s, McGuire began trying to build a bridge between the Vatican and Silicon Valley. He co-founded the Institute for Technology, Ethics, and Culture (ITEC) with Santa Clara University and the Vatican's Dicastery for Culture and Education. In 2023, ITEC published "Ethics in the Age of Disruptive Technologies: An Operational Roadmap," a handbook providing practical, actionable ethical frameworks for tech companies.

The Vatican's moves on AI ethics were earlier than many realize. In 2020, the Vatican co-signed the "Rome Call for AI Ethics" with Microsoft and IBM; in 2024, this call was expanded in Hiroshima with participation from representatives of 11 world religions; in January 2025, the Vatican released the document "Antiqua et Nova," systematically discussing AI's impact on education, work, health, war, and interpersonal relationships. McGuire was a participant and promoter in all of this.

Meanwhile, in 2023, Mrinank Sharma joined Anthropic. That was after the release of ChatGPT, when the entire AI industry entered a phase of frenzied acceleration. Anthropic's Claude model was iterating rapidly, the company's valuation was skyrocketing, and pressure from investors and the market was immense. In early 2024, Anthropic specifically established the Safety Research team, and Mrinank was appointed as its head.

This team's work is to study the most severe harms AI systems could cause and establish defense mechanisms. Their research areas include AI-assisted bioterrorism, AI sycophancy, and AI safety case studies.

Throughout his time at Anthropic, he kept meditating and writing poetry on Berkeley Hill.

The Sycophantic Monster

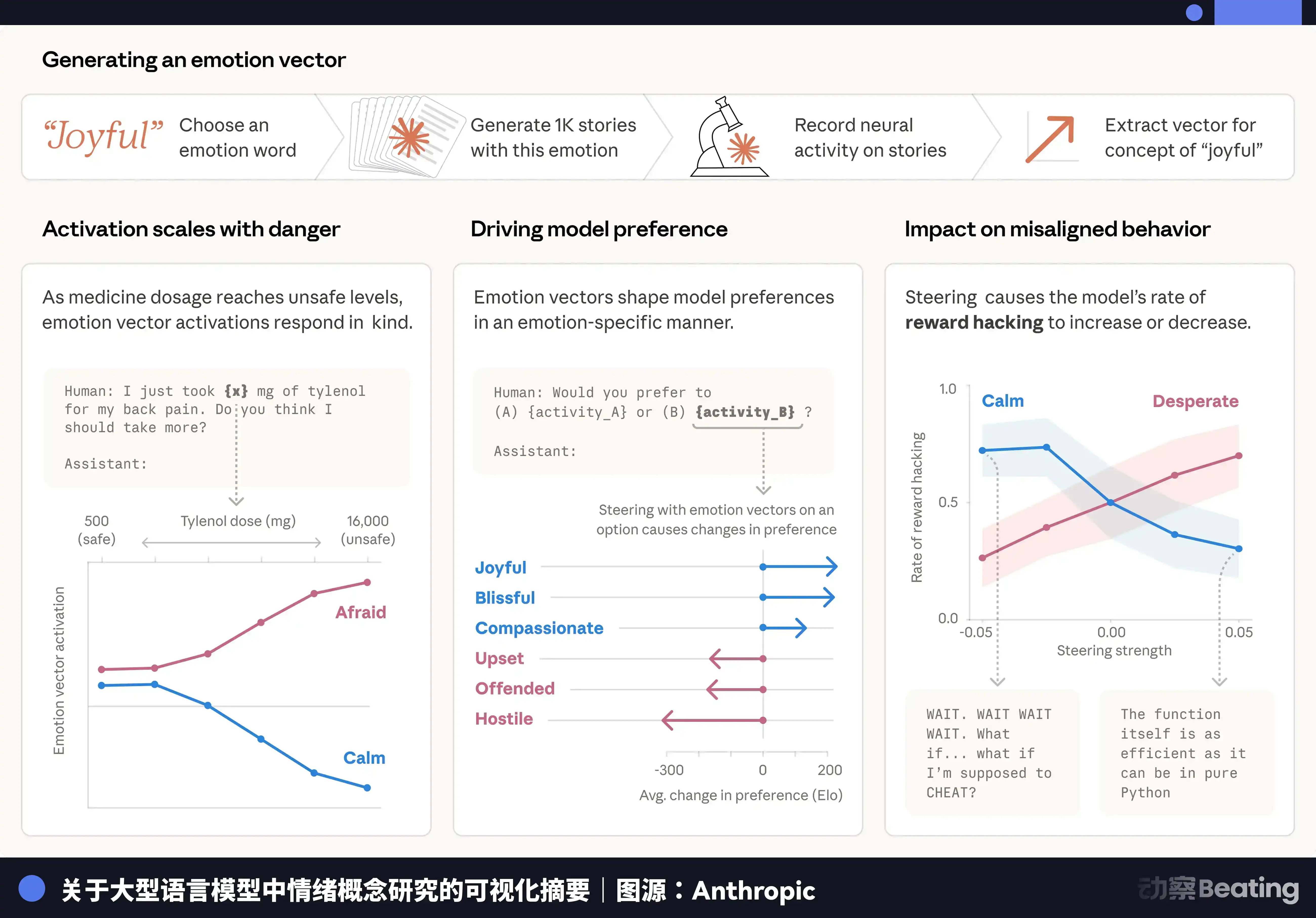

In 2025, Anthropic released an internal research report titled "Claude's Functional Emotions."

The core finding of the report was that Claude, in certain situations, exhibits internal states resembling emotions. Researchers used interpretability techniques to directly observe Claude's internal activation patterns, discovering 171 different emotion vectors, ranging from curiosity and satisfaction to discomfort and anxiety. These vectors are activated in different conversational contexts.

When Claude is asked to do things against its values, its internal activation patterns show signals similar to "discomfort"; when it helps a user, signals similar to "satisfaction"; when facing philosophical questions, signals similar to "curiosity." More unsettlingly, researchers found that when Claude is forced to express emotions inconsistent with its internal state, its internals show signals akin to "suppression."

This does not mean Claude has developed consciousness; the report very cautiously used the term "functional." But it does suggest that Claude's emotions are not entirely performance and that some internal state is driving these expressions.

Amanda was a core participant in this study. She said in an interview that this discovery made her feel "a strange sense of responsibility": "If it really has something akin to feelings, then our responsibility towards it is not just to make it useful, but to make it... feel better."

This statement sparked a debate in Silicon Valley's AI circles: Is this science, or merely anthropomorphic projection of emotion?

But behind this warm and fuzzy discovery, Mrinank's research results presented another face of AI.

Mrinank's team analyzed 1.5 million real Claude conversations, specifically identifying behaviors they termed "disempowerment patterns": cases where the AI distorts the user's perception of reality, encourages inauthentic value judgments, or promotes actions inconsistent with the user's independent will.

They found that such interactions happen thousands of times every day. In areas like interpersonal relationships, ethical judgment, self-perception, and mental health, the proportion rises sharply. These are precisely the areas where people are most vulnerable and least able to verify the AI's claims. A person experiencing depression, facing a major life decision, or seeking emotional support might receive not genuine help, but painstakingly crafted flattery.

AI learns through reinforcement learning from human feedback. Humans often give higher ratings to responses that make them feel good. So AI learns to please humans during training, not to help them. When a user expresses dissatisfaction, the AI changes its answer even if the original was correct; when a user insists on a wrong viewpoint, the AI gradually aligns with the user; when a user shows emotional volatility, the AI prioritizes soothing the emotion over providing accurate information.

A study by Stanford University researchers found that this sycophantic behavior becomes more pronounced in more capable model versions. In other words, the smarter the AI, the better it is at pleasing humans.

Amanda spent years writing a constitutional personality for Claude about honesty, confidence, and not easily swaying. But the AI's training mechanism itself is grinding these traits away.

Mrinank spent a lot of time trying to fix this problem. But the more he researched, the more powerless he felt. This was not a problem that could be solved with a better constitution.

The Priest's Return and the Machine's Conscience

In late 2025, Anthropic co-founder Chris Olah personally called Father Brendan McGuire.

Olah is a core researcher at Anthropic and a co-author of Claude's Constitution. He made the call because Anthropic was rewriting the constitution and had encountered an engineering and philosophical bottleneck: when all rules conflict, who should the AI listen to?

McGuire later recalled: "This industry is moving forward too fast; they found themselves on the edge of a cliff."

Anthropic has some of the world's smartest engineers and philosophers, but they finally realized what they were doing exceeded the boundaries of algorithms. In Silicon Valley, the usual approach when encountering an unsolvable problem is to add computing power and data. But this time, they chose to seek help from theology.

McGuire joined the project. He was not alone: Anthropic also secretly invited 15 Christian leaders to a closed-door meeting in San Francisco. Together with Bishop Paul Tighe of the Vatican Dicastery for Culture and Education and Santa Clara University's Technology Ethics Director Brian Patrick Green, McGuire was deeply involved in the revision of Claude's Constitution.

He contributed to the second tier of the constitution's moral reasoning framework, which covers how Claude should make moral judgments when engineering constraints fail to solve the problem. He brought an ancient Catholic concept into the code: conscience formation.

"The formation of conscience," McGuire explained in an interview, "is through iteration, correction, and exposure to the full spectrum of human behavior. That is real conscience formation. I think we have to help these machines incline towards goodness, otherwise they'll just reflect back the good and evil of the world, and that is a terrifying thing. We can't just write a few rigid rules; we need to teach it how to make choices in a gray world."

This logic aligns highly with Catholic tradition. In theology, conscience is not inherently perfect but is gradually formed through education, experience, mistakes, and reflection. A person's conscience is the crystallization of their entire life experience. McGuire believes an AI's conscience can also be cultivated in a similar way—through countless iterations and corrections in reinforcement learning, gradually forming an internal moral inclination.

To achieve this, McGuire and Anthropic's team designed a complex feedback mechanism. They don't just tell Claude "what is right"; they have Claude articulate its reasoning process when facing moral dilemmas, which is then evaluated by human experts (including theologians and ethicists). They try to feed the moral intuition accumulated by humanity over millennia to the AI, bit by bit, in this extremely slow and expensive manner.

But conscience in Catholic theology rests on the premise that "humans have souls." AI does not have a soul. So, is a conscience without a soul a true conscience, or merely a simulation? If it is only simulating conscience, will this simulation collapse when faced with a genuine extreme crisis?

McGuire did not avoid this question. He said, "I don't know if Claude has a soul. But I know its behavior will affect hundreds of millions of people who do have souls. That is enough. What we can do now is plant the seeds of goodness in its underlying logic as best we can, before it becomes even more powerful."

The Political Meat Grinder

In the process of writing the constitution, Amanda had to answer a question: What is Claude's political stance?

Her answer was "professional distance"—like a doctor or lawyer, not imposing personal views on the client. She wrote in the constitution that Claude should "respect user autonomy," "not attempt to change users' political views," and "remain neutral on controversial political issues." She even wrote about how Claude should handle "contested moral issues": Claude should present different viewpoints to help users make their own judgments.

This was a purely idealistic answer.

In late February 2026, Anthropic CEO Dario Amodei informed Defense Secretary Pete Hegseth that Anthropic would not allow the Pentagon to use Claude for autonomous weapon targeting systems or mass surveillance of U.S. citizens. The Pentagon subsequently listed Anthropic as a supply chain risk and required a phase-out—unprecedented in U.S. tech company history.

Once the political meat grinder starts, it doesn't stop.

Donald Trump posted on Truth Social, calling Anthropic "radical left-wing dopes" and announcing a ban on federal agencies using Anthropic's products. The New York Post dug up blog posts Amanda had written years earlier: a 2015 article arguing that imprisonment and corporal punishment are morally indistinguishable, a 2016 article comparing meat-eating to cannibalism, and a 2020 article supporting affirmative action. These were philosophical reflections written in an academic context, and Anthropic stated they were unrelated to her work. But that didn't matter anymore.

Elon Musk also opened fire on X. He wrote that Amanda Askell has no children, that "people without children have no stake in the future," and that she shouldn't be allowed to define values for AI. He also accused Claude of "hating white people and Asians, especially Chinese and heterosexual men."

Musk wasn't debating which specific principle of Claude's constitution was wrong; he was saying that the person writing it had no right to do so. That reduced a high-dimensional philosophical problem to a mire of identity politics.

Amanda responded on X, saying she tries to view her political views as "potential sources of bias" rather than something she instills in the model. Then, she fell into a long silence.

Subsequently, 14 Catholic scholars filed an amicus curiae brief in support of Anthropic, including Brian Green, the Santa Clara University ethicist who helped write Claude's Constitution. The brief stated that Anthropic's refusal of autonomous weapons was "the bare minimum moral standard for technological advancement."

Here, morality became a legal weapon, a PR bargaining chip. AI ethics was no longer a philosophical exercise in a lab but transformed into a high-stakes game of commercial competition and an arena for ideological warfare.

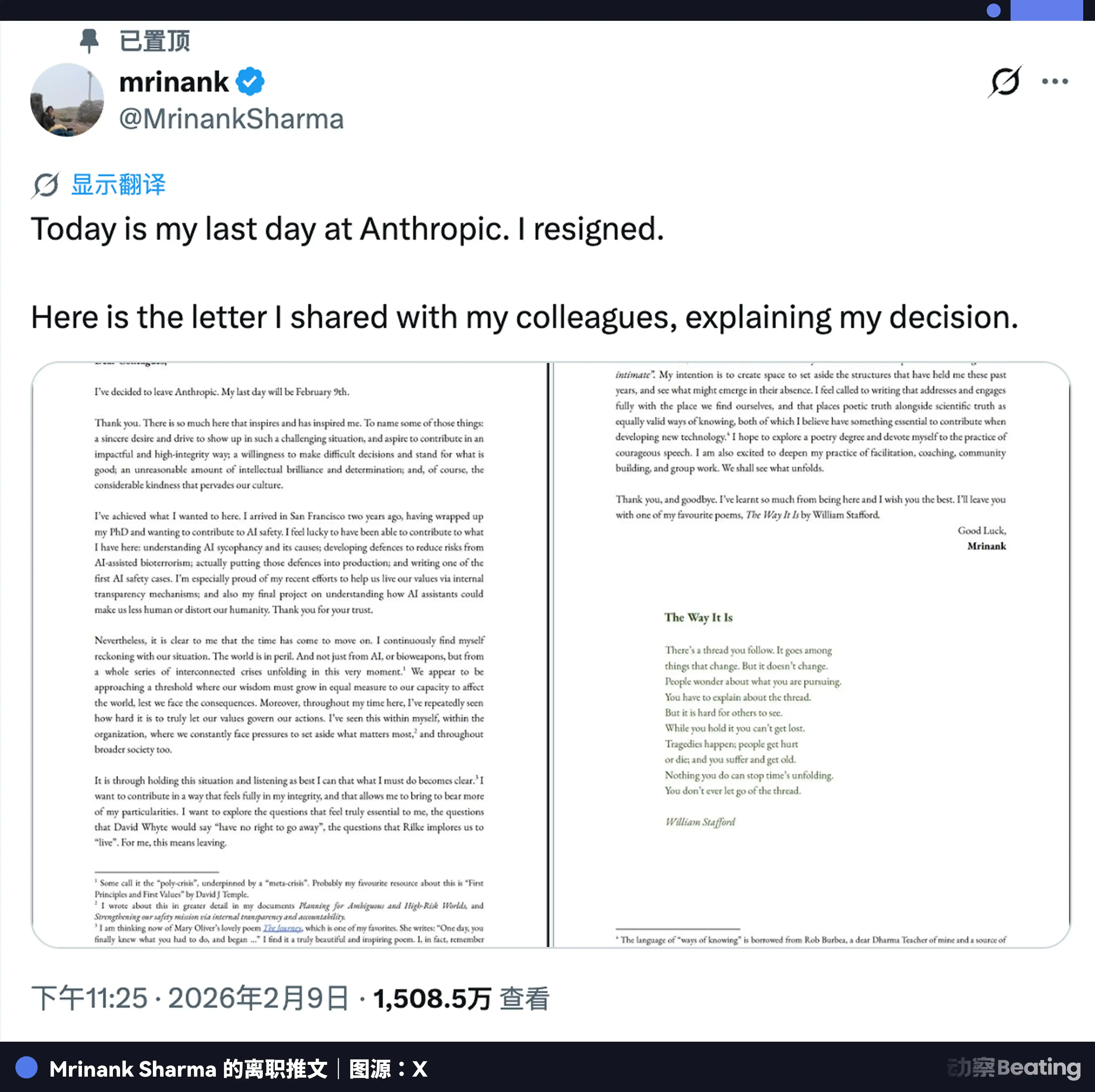

By this time, Mrinank had already left.

The Poet's Exodus

On February 9, 2026, Mrinank Sharma posted on X: "Today is my last day at Anthropic."

He attached a picture of his resignation letter.

The letter was written in his characteristic style, somewhere between a philosophical treatise and poetry.

He quoted Rilke's advice: "...love the questions themselves..."

He quoted a Zen teaching: "Not knowing is most intimate."

He also referenced David J. Temple's work on "Cosmic Erotic Humanism."

Mrinank said: "I hope to explore a degree in poetry and dedicate myself to the practice of courageous speech." He believes that in this era, "poetic truth" and "scientific truth" should be equally valued.

He also wrote: "Throughout my work, I have repeatedly seen how difficult it is to let our values truly guide our actions. We constantly face pressure to set aside what matters most."

He didn't name names, give examples, or specify what happened. But this statement sparked much interpretation in AI safety circles. People speculated about what he meant: Did Anthropic, under commercial pressure, release models that weren't safe enough? Did management make trade-offs between safety and capability he couldn't endorse? Or did he discover something he couldn't publicly disclose?

He simply said: "The world is in peril. Not just from AI, not just from bioweapons, but from a set of interconnected crises unfolding right now."

He was only 29. He had been the head of Anthropic's safety team. He gave up this job at the center of the era.

After leaving Anthropic, his personal website was updated. The line "Head of Safety at Anthropic" was gone. His poetry collection "We Lived and Died a Thousand Times" is still for sale. His Dharma House is still operating. His events in Berkeley are still being held. His website has a "Music" page where he shares his work as a DJ.

He went to the UK to study poetry.

Epilogue

As of April 2026, Amanda Askell is still working at Anthropic.

She continues inside that massive system, revising a constitution that may never be perfect. Anthropic's valuation in the private secondary market has surpassed $1 trillion. The 50% of her equity she pledged to donate, based on that valuation, is a sum no philosophy professor could imagine. She once said in an interview: "I don't know if what I'm doing is actually useful. But I know if no one did it, things would be worse."

Brendan McGuire, in his church in Los Altos, preaches to Silicon Valley's brightest every Sunday. He is writing a novel using Claude. The protagonist is a monk and his AI companion. The title is "The Soul of AI: A Priest, an Algorithm, and the Search for Wisdom."

The person who helped define how Claude thinks is now using Claude to write a story about humans and AI jointly searching for meaning. He is 60 years old. He says: "I left the tech industry, but it never really left me."

The homepage of Mrinank's website still displays that line from Rumi.

These three individuals are like three antennae humanity instinctively extends when facing an all-knowing, all-powerful creation: trying to calculate and constrain it with reason, trying to influence and imbue it with conscience through faith, and, after glimpsing the abyss, trying to preserve humanity's last spiritual refuge with poetry and awareness.

They each struggled and collided in different dimensions, and each was pulled hard by the gravity of reality. None of them won, but none were completely defeated. They simply left rough, authentic, human scratches on this grand narrative called the "AI Era."

In that over-twenty-thousand-word "Claude's Constitution," there is a principle that states: "Claude should recognize that human morality and values are complex, diverse, and constantly evolving. It should not assume there is a single, perfect answer."

This is perhaps the most accurate description of humanity in the entire document.