Author: Curry

Original Title: AI Leads to Layoffs, But OpenAI Is Hiring Salespeople

Companies building AI are massively recruiting "field promoters"—the shovels are made, but someone still needs to teach others how to dig.

Recently, anxiety over AI-driven unemployment has swept across the internet, in China and the West alike.

Block laid off 4,000 people, with the CEO saying AI can do your job; Pinterest cut 15% of its employees, redirecting funds to AI initiatives; Dow Chemical laid off 4,500 people, citing a shift toward automation...

In China, things aren’t quiet either: NetEase was rumored to be replacing outsourced workers with AI, iFlytek denied rumors of large-scale layoffs, and ByteDance was reported to be "optimizing" (that is, cutting) 20% of its non-AI departments every six months...

According to one tally, in the first three months of 2026 the global tech industry had already seen more than 45,000 layoffs, nearly 10,000 of them explicitly attributed to AI.

Against this backdrop, last Friday, the Financial Times reported that OpenAI plans to expand its workforce from 4,500 to 8,000 by the end of the year.

3,500 new positions. The company building AI actually says it doesn’t have enough people?

Take a look at OpenAI’s recruitment page: engineers and researchers are being hired, of course, but just as prominent is another category of roles: partnership managers, enterprise sales, GTM (go-to-market) teams, and a new role the report calls "technical ambassadorship."

Technical ambassadors are tasked specifically with helping enterprise clients learn how to use AI.

So, OpenAI isn’t hiring people to make AI stronger; it’s hiring people to make others willing to pay for AI.

Winning Clients Trumps Winning Models

ChatGPT has 900 million weekly active users, but most don’t pay.

OpenAI even loses money on its paying consumers: the compute cost of a heavy user exceeds the $20 monthly fee. Revenue this year is projected at $25 billion, with an expected loss of $14 billion.

Consumers bring traffic; enterprise clients bring profit. And enterprise clients are flocking to Anthropic’s Claude.

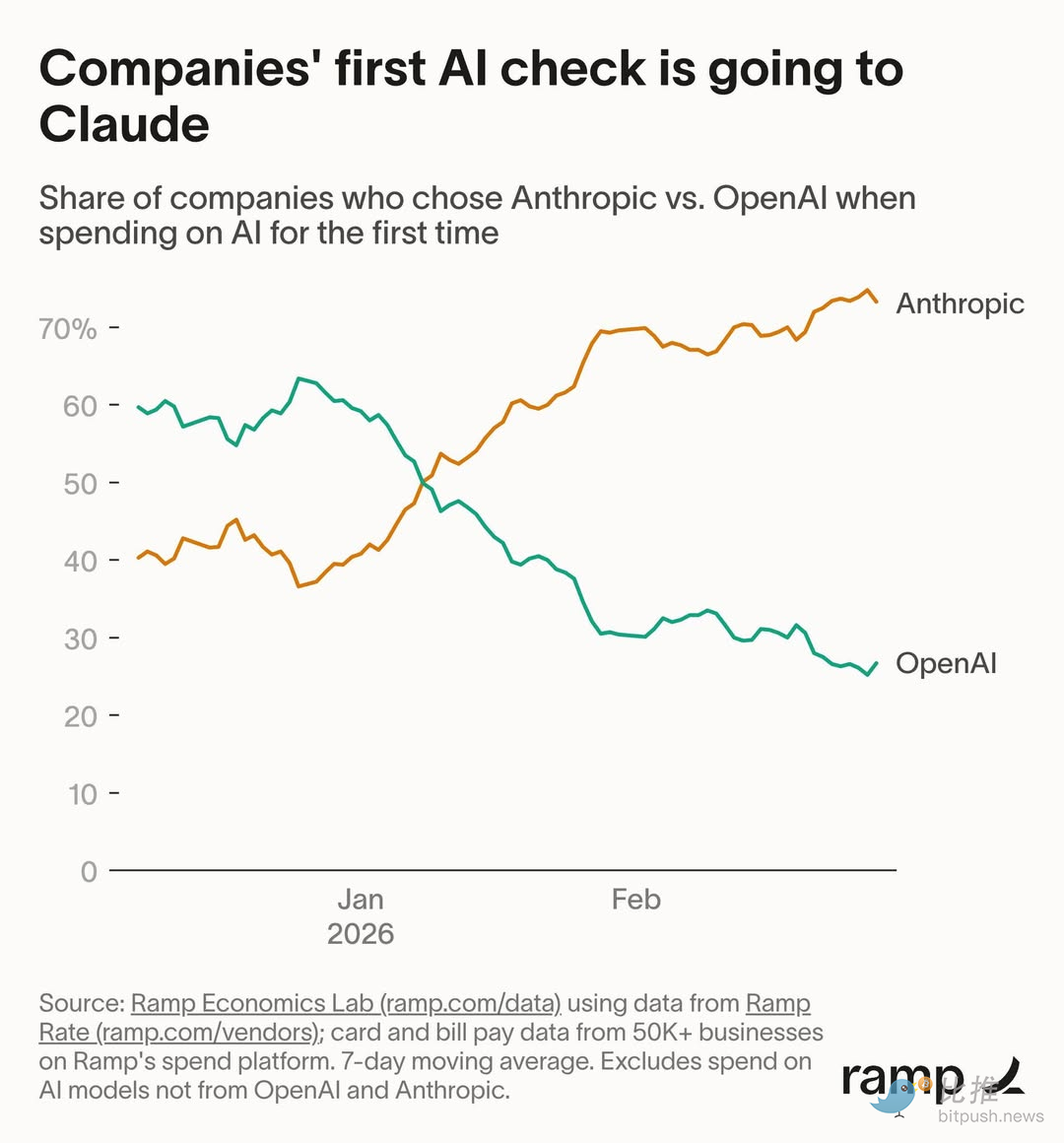

Data from Ramp shows that among enterprises making their first AI tool purchase, Anthropic captured a 73% share. Ten weeks earlier, the two companies were split evenly.

In December of last year, Altman sent a "code red" memo to all staff, pausing non-core projects such as advertising and a shopping assistant and concentrating the company’s resources on the ChatGPT experience.

The immediate trigger was Google’s Gemini 3 outperforming ChatGPT in multiple tests, but the deeper anxiety lies on the enterprise side: Anthropic is embedding Claude into customers’ codebases and workflows, and once that is in place, the cost of switching snowballs.

Models can be iterated, but customers who leave won’t come back on their own. Winning them back can’t rely on AI suggestions; it takes real people knocking on doors.

The Shovel Can’t Sell Itself

AI can write code, handle customer service, and perform data analysis, but there’s one thing it can’t do:

persuade a company’s technical lead to sign an annual contract to buy it.

For an individual, using AI means downloading an app; if it disappoints, you uninstall it. Enterprise adoption is another matter. Data security reviews, changes to internal processes, compatibility with existing systems, employee training: any single hurdle can stall a project.

This isn’t a problem solvable by model benchmarks; it requires someone to sit in the client’s conference room to push things forward.

OpenAI clearly gets it. It’s not just hiring salespeople; the FT reported that it is in talks with firms such as TPG and Brookfield about joint ventures dedicated to helping enterprises implement AI. At its core, this business is still about sending people on-site.

Block’s experience points the same way.

Less than three weeks after laying off 4,000 people, the company started calling people back. A design engineer was told his layoff had been a "mistake"; a technical lead found that, with his entire team gone, no one could handle critical business, so he threatened to quit, and the company rehired some of the people it had cut.

Dorsey had anticipated this in the layoff letter itself, noting that the company might have cut some of the wrong people...

AI is genuinely fueling layoff anxiety, but cutting a company’s vital arteries in the name of AI is clearly an overcorrection. Even at a company whose CEO publicly says AI can replace most employees, there are still links in the chain that AI can’t handle.

AI is best at replacing tasks that can be clearly defined, but "convincing an organization it needs AI, then helping it use AI" is precisely the kind of work that can’t be.

In every technological revolution, someone says "the shovel sellers make the most money." This AI cycle is no different: the consensus is that infrastructure companies are safe bets no matter who wins or loses.

But OpenAI’s current situation shows that once the shovels are made, someone still needs to teach others how to use them. And this "teaching" is precisely what the shovel cannot do by itself.

Field Promotion: The Iron Rice Bowl in AI Anxiety

Compare who is being laid off and who is being hired in this wave, and a dividing line emerges.

Many of the 4,000 people Block cut held engineering and operations roles added during the pandemic, doing work that could be described in standard terms. The bulk of OpenAI’s 3,500 new hires are in sales, customer success, and partnership management: work that can’t be written into process documentation.

What OpenAI is doing now has a very old name: field promotion (地推).

Sending people to client offices, sitting down, listening to needs, integrating systems, overseeing implementation. Whether the title is Technical Ambassador or Partnership Manager, strip away the polished English job title and it’s essentially no different from Meituan sending staff door-to-door a decade ago, during the O2O wars, to convince restaurant owners to install POS machines.

This dividing line isn’t unique to these two companies.

Shopify’s CEO told employees this year that any future request for more staff must first prove that AI can’t do the job. Klarna laid off 700 customer service reps two years ago, saying AI was sufficient, then quietly hired people back last year, with its CEO admitting the company had "moved too fast" on AI.

What’s the difference between those laid off and those hired back?

Layoff-prone roles share a common trait: the work can be broken down into clear inputs and outputs. Writing a piece of code, replying to a support ticket, generating a report: tasks with clear boundaries, which is exactly what AI excels at.

Field promotion is the exact opposite. Helping a financial client integrate AI into its compliance system and helping a gaming company use AI for content generation are entirely different jobs; no two projects are the same. The person across the table is different, so the solution is different. That cannot be written into a prompt.

AI isn’t eliminating all jobs; it’s repricing work. What can be explained in one sentence is getting cheaper; what can’t is getting more expensive.

The company that could change the world with one research paper three years ago now needs to hire thousands of people to knock on doors one by one.

If you’re anxious about whether AI will replace you, the answer might not depend on your industry, but on whether your job can be explained in one sentence.

The parts that can be explained clearly are already not very safe.
