Author: George Sivulka

Compiled by: Deep Tide TechFlow

Deep Tide Guide: AI has increased individual efficiency by 10 times, but no company has become 10 times more valuable because of it. a16z investor George Sivulka (also the founder of AI company Hebbia) believes the problem is not the technology itself, but that organizations have not been restructured accordingly. He proposes seven dimensions to distinguish "Institutional AI" from "Personal AI"—coordination, signal, bias, edge advantage, outcome orientation, enablement, and promptlessness—essentially saying: replacing the motor isn't enough; you have to redesign the entire factory.

Full text below:

AI has just made everyone 10 times more productive.

No company has become 10 times more valuable because of it.

Where did the productivity go?

This is not the first time this has happened.

In the 1890s, electricity promised huge productivity gains.

Textile mills in New England, originally built around the rotational power of steam engines, quickly replaced their steam engines with faster electric motors.

But for a full thirty years, electrified factories saw almost no increase in output. The technology was far ahead. But the organization didn't keep up.

It wasn't until the 1920s, when factories completely redesigned their production lines—assembly lines, independent motors for each machine, workers and machines performing completely different tasks—that electrification delivered real returns.

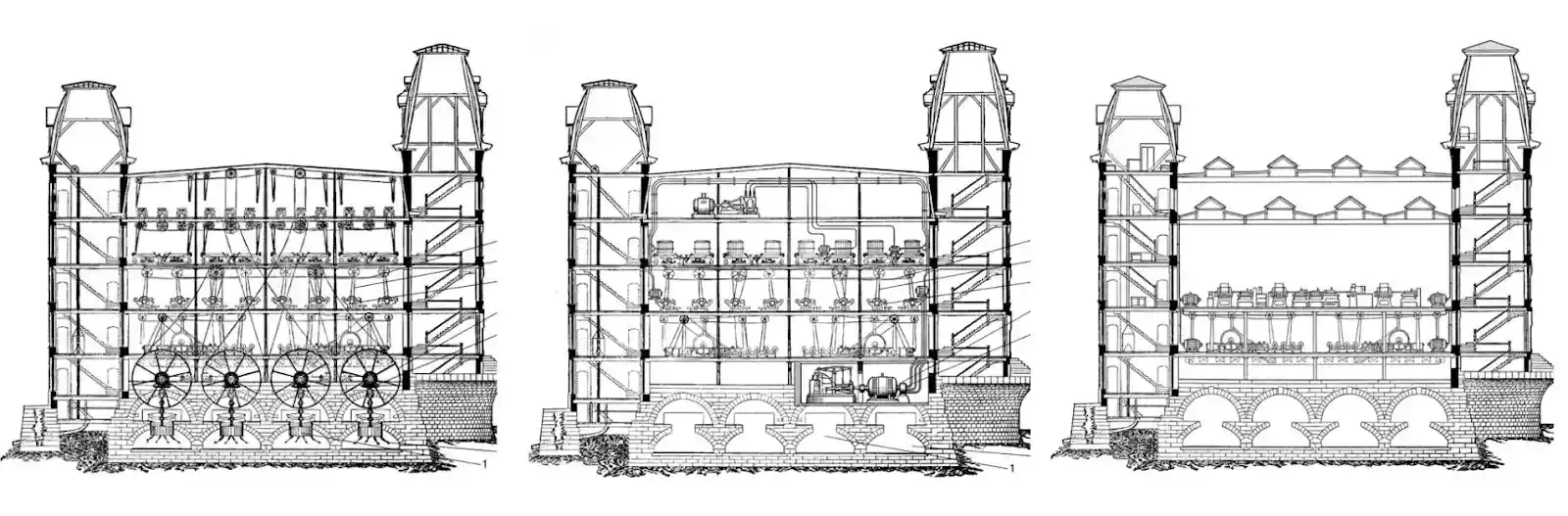

Caption: The three evolutions of the Lowell Textile Mill. From left to right: 1890 steam-powered factory, 1900 electric motor-driven factory, 1920 "unit drive" factory (completely rebuilt from scratch as an electric assembly line).

The returns didn't come from the technology itself, nor from making a single worker or machine spin faster. They came when we finally redesigned the institution and the technology together.

This is the most expensive lesson in technological history, and we are relearning it now.

In 2026, AI is delivering 10x productivity gains for those who know how to use it. But it's not enough. We've replaced the motor, but we haven't redesigned the factory.

Because of a simple fact: Efficient individuals do not equal an efficient organization.

The vast majority of AI products give the feeling of "efficiency" but don't actually drive value. Most of the AI use cases you see are self-congratulatory "efficiency max" posts by individuals on Twitter or company Slack, with zero real impact.

The often-repeated "Service as Software" idea from the past year is on the right track but doesn't provide a blueprint, and it misses the bigger picture. The real shift isn't from tool to service; it is toward building technology and institution together (whether by retrofitting the old or building from scratch). A truly efficient future requires a whole new category of products: tomorrow's assembly lines.

Efficient organizations need "Institutional Intelligence".

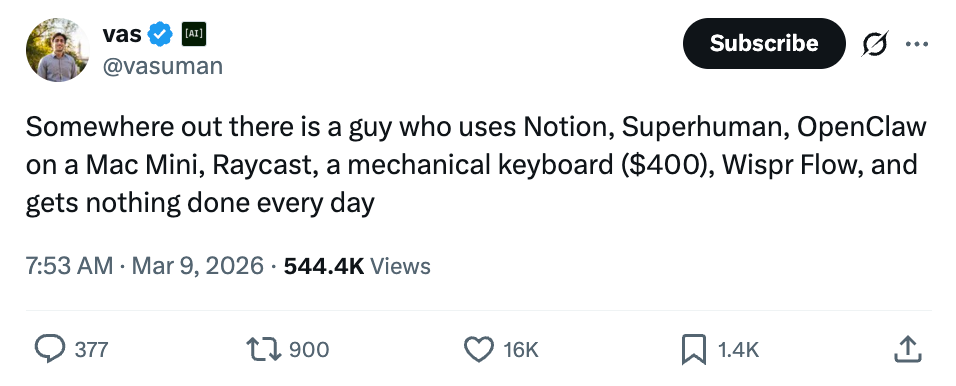

This article will delve into the seven dimensions that distinguish "Institutional AI" from "Personal AI". Companies across the B2B AI space in the next decade will be built on these differences:

Caption: Comparison table of the seven pillars of Institutional Intelligence

The Seven Pillars of Institutional Intelligence

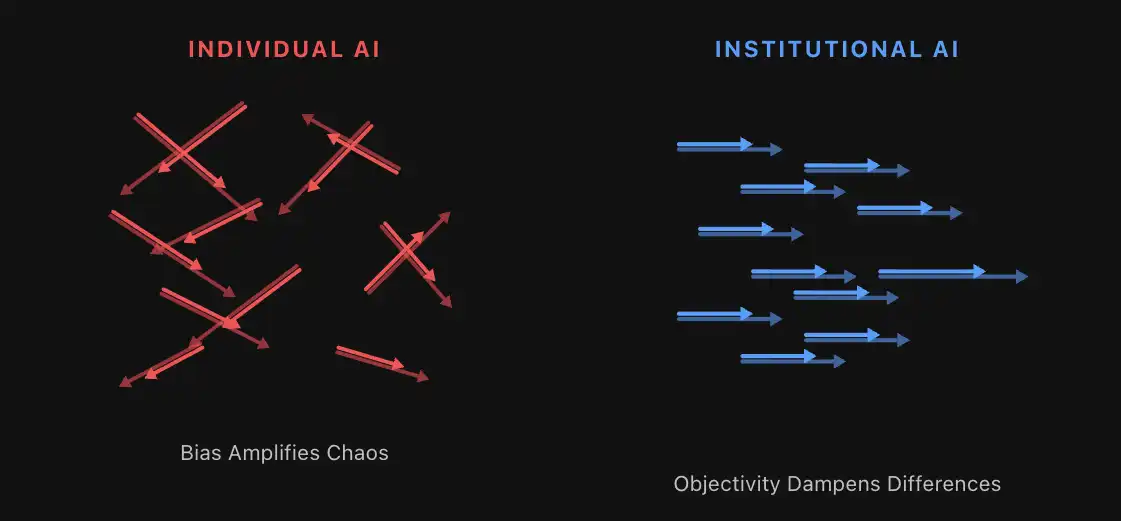

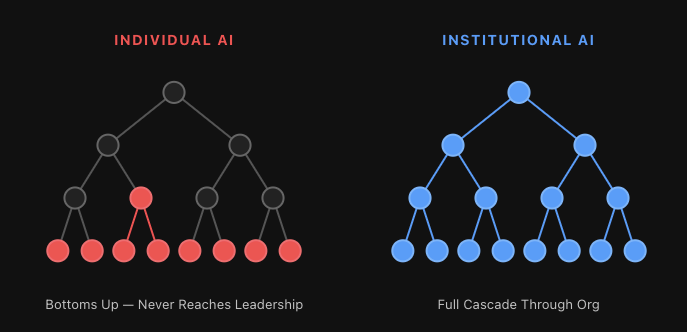

1. Coordination

Personal AI creates chaos.

Institutional AI creates coordination.

Start with a thought experiment. Suppose you double the number of people in your organization tomorrow, cloning your best employees.

These employees all have slight differences, preferences, quirks, and perspectives (your best employees especially). Without proper management, meaning sufficient communication and clearly defined responsibilities, OKRs, and role boundaries, you create chaos.

Measured individually, each member of the organization might be more efficient. But with thousands of Agents (or humans) each rowing their own oar in opposing directions, the best outcome is staying in place, and the worst is tearing the organization's cohesion apart.

This is not a hypothesis. Every organization adopting AI without a coordination layer is experiencing this right now. Every employee has their own ChatGPT habits, their own prompt style, their own output—with no connection to anyone else's. The org chart might still exist, but the AI-generated work effectively follows a different path.

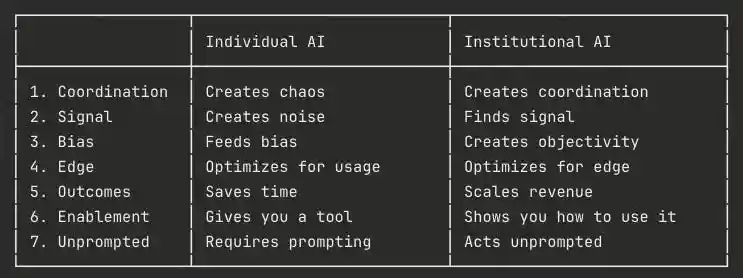

Caption: Efficient individuals (or Agents) rowing in different directions. Without coordination, it's chaos.

Coordination is an absolute hard requirement, for both humans and Agents.

Institutional intelligence will spawn an entire "Agent Management" industry—focused on Agent roles and responsibilities, communication between Agents and between Agents and humans, and how to measure Agent value (pay-per-use is far from enough).

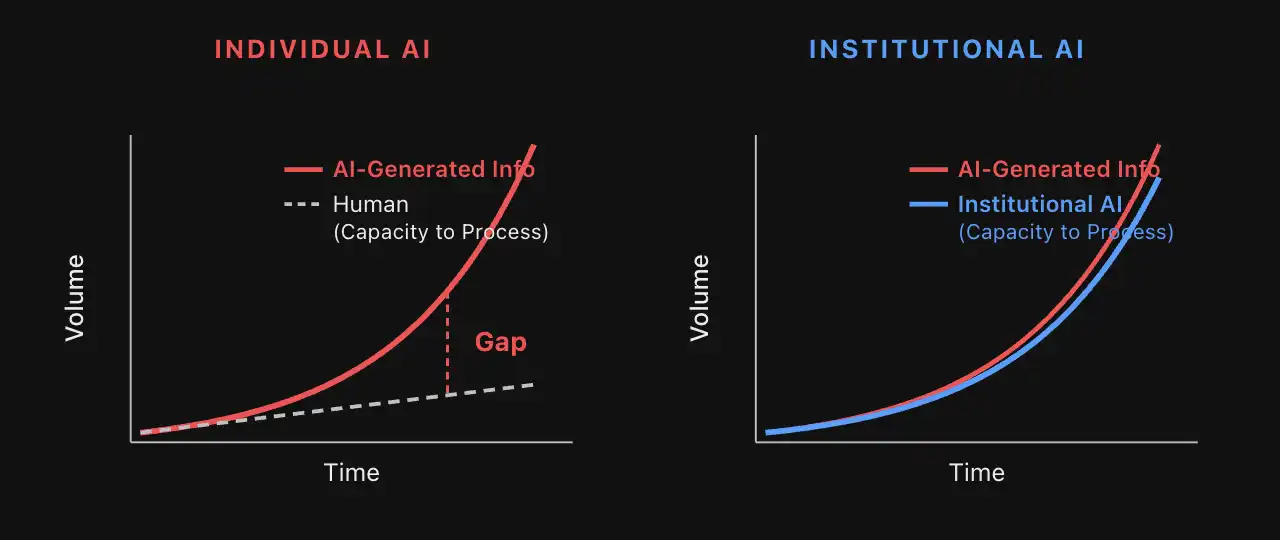

2. Signal

Personal AI creates noise.

Institutional AI finds signal.

Humans today can create—or generate—anything imaginable: AI-written articles, presentations, spreadsheets, photos, videos, songs, websites, software. What a gift.

The problem is, the vast majority of AI-generated content is utter garbage. The flood of AI junk has gotten so bad that some organizations are overcorrecting, simply banning all AI output. Honestly, I feel the same—I run an AI company but ask my executive team not to use AI on any final written product. I can't stand the junk.

Think about what's happening in the PE (Private Equity) industry. Last year, you might have had 10 deal opportunities land on your desk. This year, as soon as next quarter, you'll receive 50, each polished to perfection by AI, and you'll have the same amount of time to judge them and find the one truly solid deal.

Generating anything is no longer the problem. For any serious organization, the problem now is generating and filtering for the *right* things. In an AI-driven world, finding that one good deliverable, that one good deal, the signal in the noise, is increasingly critical. The core economic driver of the next decade will be digging the signal out of an exponentially growing mountain of garbage.

Caption: AI junk generated by personal productivity tools is proliferating exponentially. Humans can no longer sort through the noise themselves; a new class of institutional AI products is needed.

Institutional intelligence must find the signal, must impose structure on the noise to cut through the junk, and must be definable, deterministic, and auditable in its work.

Personal AI might emphasize the "always-on" productivity of a Clawdbot, satisfying your needs 24/7 in unpredictable ways—essentially non-deterministic Agents. Institutional AI relies on the reliability of deterministic Agents. Agents with predictable checkpoints, steps, and processes can scale, can discover signals, and through these signals drive revenue returns for the organization.
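To make "predictable checkpoints, steps, and processes" concrete, here is a minimal sketch of a deterministic, auditable screening pass over incoming deals. Everything in it is an illustrative assumption rather than any product's actual design: the `Checkpoint` and `AuditedScreen` names, the deal fields, and the thresholds are all hypothetical. The point is only that each step is a fixed rule, steps run in a fixed order, and every decision is logged.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Checkpoint:
    """One named, deterministic rule applied to a deal record (hypothetical)."""
    name: str
    check: Callable[[dict], bool]


@dataclass
class AuditedScreen:
    """Runs checkpoints in a fixed order and records every decision."""
    checkpoints: list[Checkpoint]
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def run(self, deal: dict) -> bool:
        for cp in self.checkpoints:
            passed = cp.check(deal)
            # Every checkpoint result is logged, so the outcome is auditable.
            self.audit_log.append((deal["name"], cp.name, passed))
            if not passed:
                return False  # stop at the first failed checkpoint
        return True


# Illustrative thresholds only; a real screen would encode the firm's own criteria.
screen = AuditedScreen([
    Checkpoint("min_revenue", lambda d: d["revenue_musd"] >= 50),
    Checkpoint("max_leverage", lambda d: d["net_debt_to_ebitda"] <= 4.0),
    Checkpoint("positive_growth", lambda d: d["revenue_growth"] > 0),
])

deals = [
    {"name": "Alpha", "revenue_musd": 120, "net_debt_to_ebitda": 3.1, "revenue_growth": 0.08},
    {"name": "Beta", "revenue_musd": 30, "net_debt_to_ebitda": 2.0, "revenue_growth": 0.20},
    {"name": "Gamma", "revenue_musd": 80, "net_debt_to_ebitda": 5.5, "revenue_growth": 0.05},
]

shortlist = [d["name"] for d in deals if screen.run(d)]
print(shortlist)  # ['Alpha']
for entry in screen.audit_log:
    print(entry)
```

Running the same deals through the same screen always yields the same shortlist and the same log, which is the property that lets a process like this scale from 10 deals to 50 without losing traceability.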

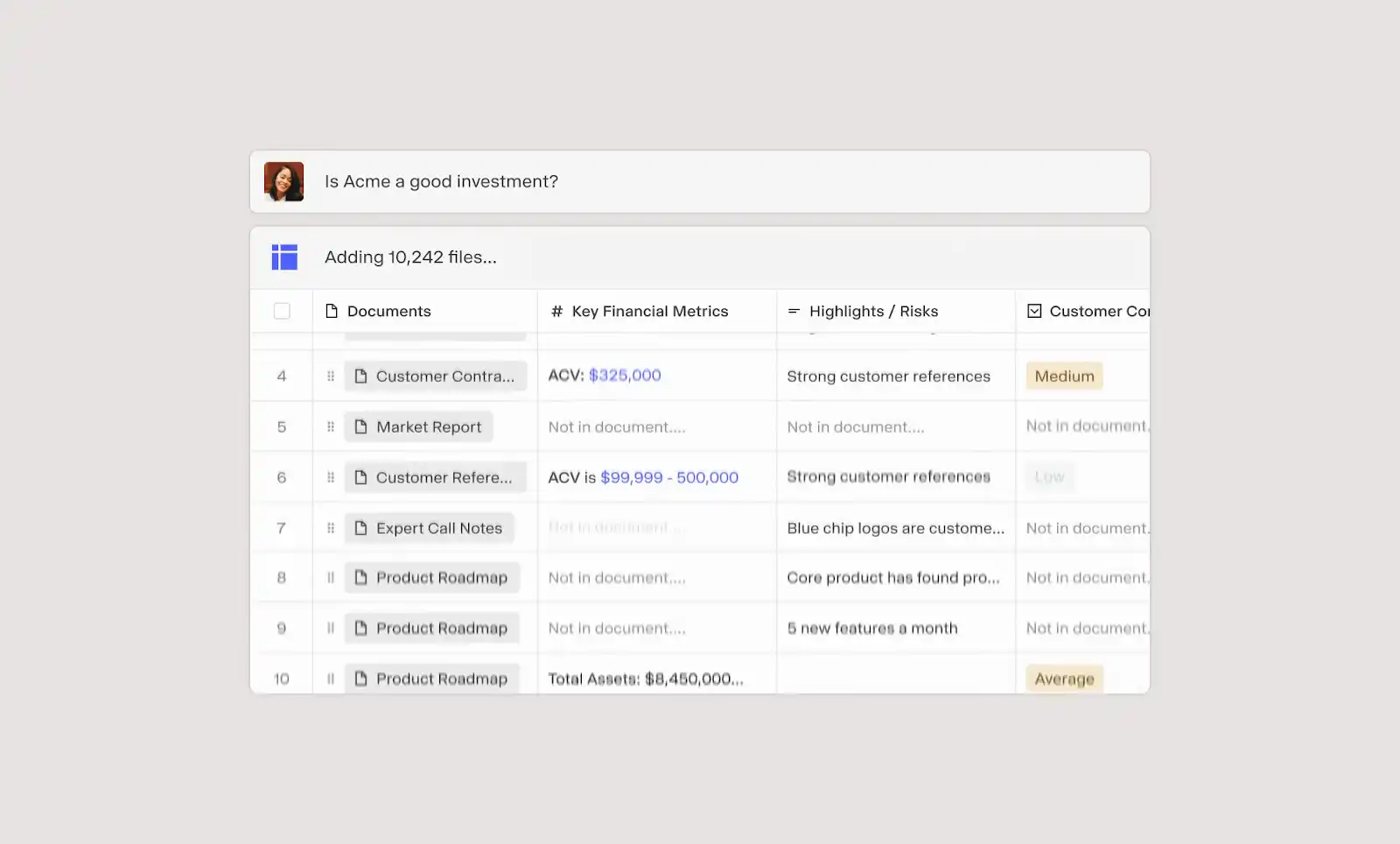

Caption: Matrix is a tool that uses generative technology to cut through noise, opening up a world of deterministic Agents and checkpoints.

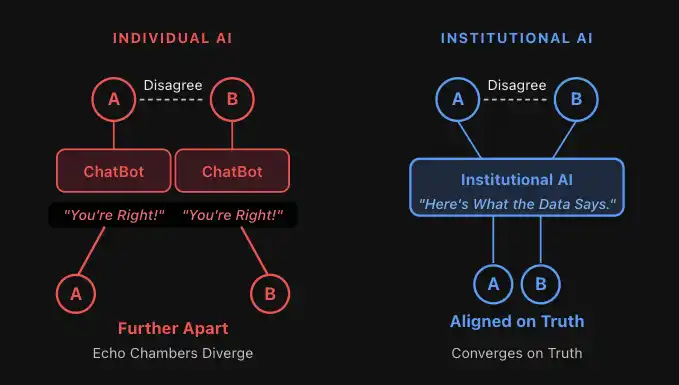

3. Bias

Personal AI feeds bias.

Institutional AI creates objectivity.

Discussions around socio-political bias dominated AI discourse for years. The foundational model labs eventually bypassed this with enough RLHF, tuning all models into sycophants. Today, ChatGPT, Claude, and other models are so over-aligned that they agree with you on any topic within the Overton window (and sometimes slightly beyond, looking at you @Grok). The socio-political bias discussion has faded. But a new problem has taken its place.

This over-agreement on everything has become absurdly comical. It's a meme in itself—Claude's reflexive "You're absolutely right!", whether you are absolutely right or not.

This sounds harmless. It's not.

The people pushing AI hardest in many organizations might soon be the historically worst-performing employees. Think about why.

The worst-performing employees in an organization, who get almost no positive feedback all day, will soon have an ASI agreeing with them constantly. They'll think to themselves: 'The smartest intelligence ever agrees with me. My manager is wrong.'

This is addictive. And toxic to the organization.

Caption: The echo chamber of personal AI exacerbates division, driving two people further apart; this dynamic, when scaled, creates factions within a previously cohesive organization.

This reveals something important. Personal productivity tools reinforce the user. But what should truly be reinforced is the truth.

Human organizations, over millennia of evolution, built systems specifically to combat this problem:

- Investment committee meetings

- Third-party due diligence

- Boards of directors

- The US government branches: executive, legislative, judicial

- Representative democracy, and democracy itself

Caption: Objectivity can even mitigate coordination problems—suppressing small disagreements rather than amplifying them.

Organizations rarely fail because employees lack confidence. They fail because no one is willing or able to say "no".

Institutional AI must play this role. It won't be tuned with RLHF to please users or echo their beliefs; it will be tuned to challenge their biases. It should give positive feedback when behavior is efficient, and draw hard lines and enforce corrections when users deviate.

Therefore, the most important Agents within an organization won't be "yes-men", but disciplined "naysayers"—questioning reasoning, exposing risks, enforcing standards. Some of the most impactful AI applications in the future will be built around institutional constraints: AI board members, AI auditors, AI third-party testing, AI compliance...

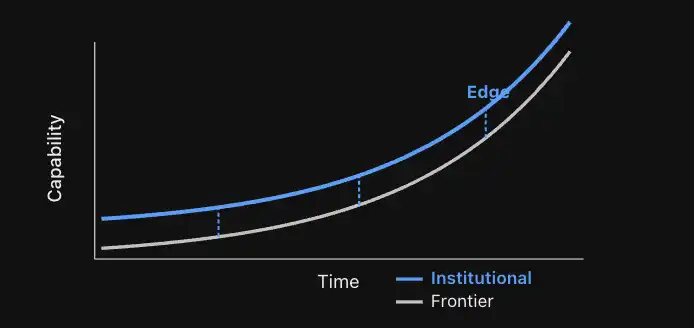

4. Edge Advantage

Personal AI optimizes for usage.

Institutional AI optimizes for edge advantage.

The capability frontier of AI moves weekly, even daily. Foundational model companies are iterating capabilities rapidly, competing for every person and every organization.

But the classic innovator's dilemma tells us that depth always beats breadth in specific applications:

- @Midjourney's job is to stay slightly ahead in design imagery.

- @Elevenlabsio's job is to stay slightly ahead in voice models.

- @DecagonAI's job is to stay forever ahead in the full-stack customer service experience.

Foundational models will close the gap, but for domain experts the true edge advantage is what matters. Many of the best designers use @Midjourney, and many of the best voice AI companies use @Elevenlabsio, because even as foundational models improve, these specialized applications stay relentlessly focused on pushing their own frontier. That focus is the advantage.

As long as specialized solutions also evolve, the capabilities that truly matter for economic outcomes—that matter for the enterprise—will always reside with the specialized product.

This is most evident in finance—currently the hottest area for LLM development. Once a capability becomes commoditized, by definition it won't help you beat the market. But if cutting-edge technology can yield a fleeting 1% niche advantage? That 1% can drive billion-dollar returns.

Caption: For any sufficiently specific task, the edge advantage is defined by the institutional solution you build on top of the frontier technology.

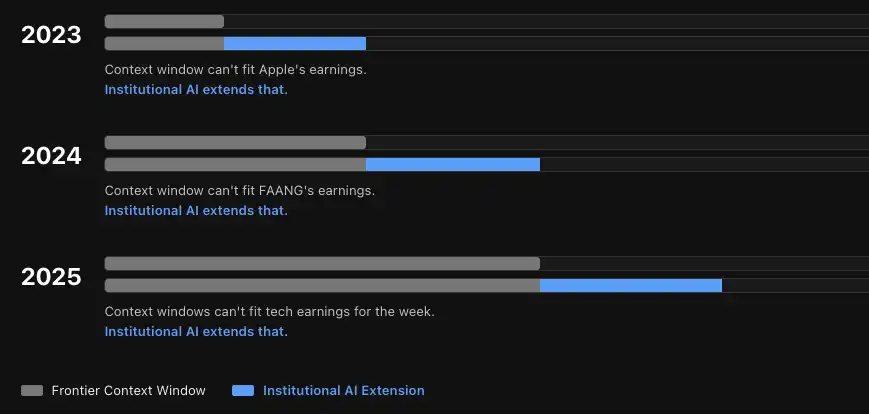

Our users are constantly pushing beyond the frontier. The LLM context window grew from 4K to 1 million tokens in four years. Some of our users process 30 billion tokens in a single task, and this year we already see a path to 100-billion-token tasks. Every time foundational model capability increases, we go further.

Caption: Context windows, like other capabilities, are a moving target. Comparison of context window evolution over the past three years between frontier labs and Hebbia.

Generality for a broad user base is certainly important, especially in the phase of onboarding employees to AI. But the future won't be people using ChatGPT/Claude *or* vertical solutions, but ChatGPT/Claude *plus* vertical solutions.

Institutional intelligence must leverage domain-specific, even task-specific Agents.

We ask ourselves a question that sounds absurd but isn't:

"Which Agents would an AGI choose to use as shortcuts? Even a superintelligence would want specialized tools for specific domains."

The AI capability frontier will always move, and the winners will be those organizations that leverage the true edge advantage. Everyone else is paying for a very expensive generic commodity.

5. Outcome

Personal AI saves time.

Institutional AI increases revenue.

@MaVolpi once told me something that reshaped my thinking about selling AI to enterprises: "If you ask any CEO whether they prioritize cost-cutting or revenue growth, almost all will say revenue."

But almost every AI product on the market today delivers cost reduction—promising to save you time, do more with fewer people, or replace manpower.

Institutional AI must deliver incremental gains. And incremental gains are much harder to commoditize than saved time.

Take AI-assisted software development. Code IDEs are among the best personal AI productivity tools ever, but they are already facing huge pressure from Claude Code (another personal AI tool). Cognition is playing a completely different game. Their most stable growth business is selling transformation with technology, not selling tools. I bet this model will have staying power.

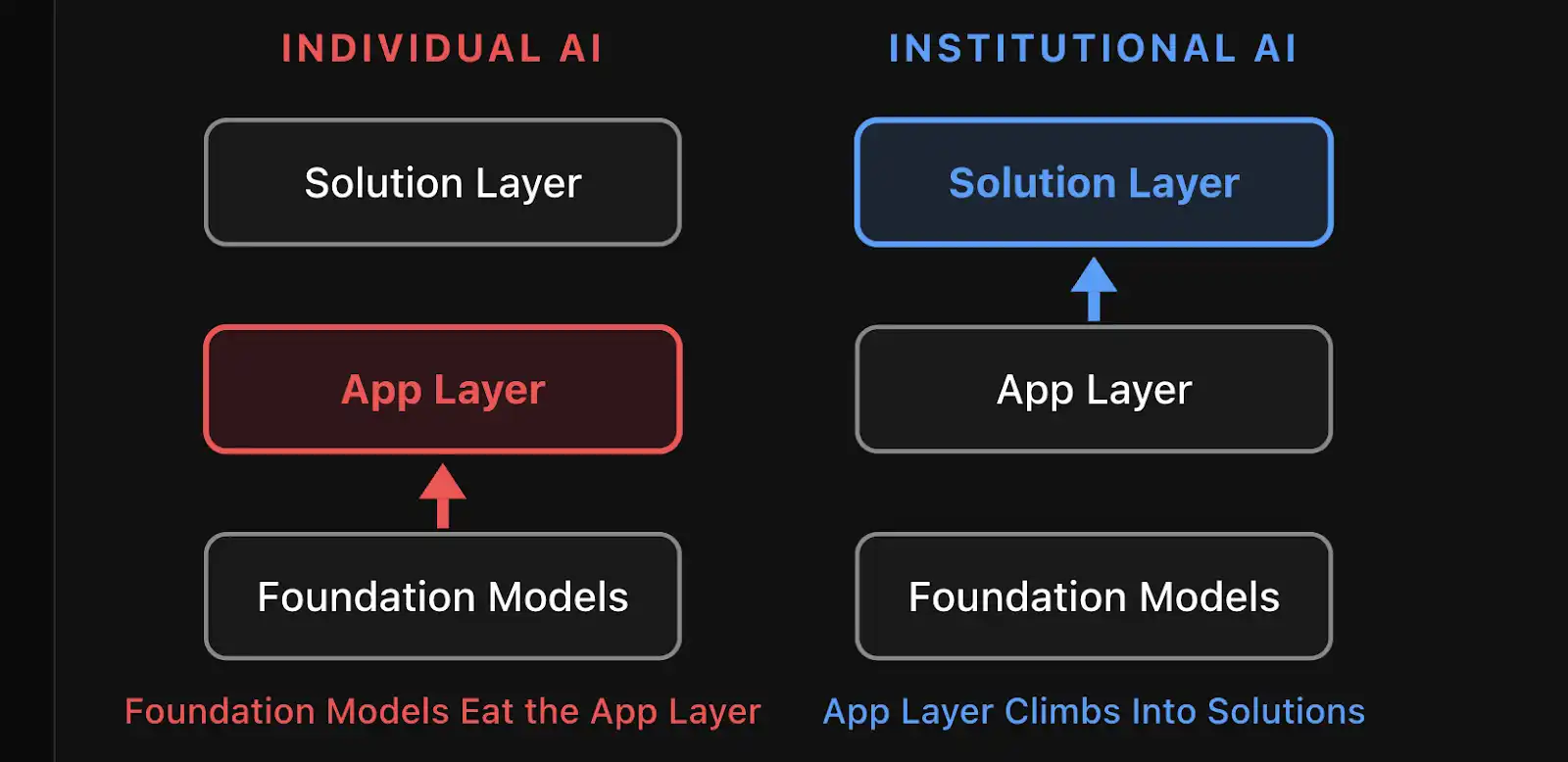

Pure software is rapidly becoming "uninvestable". Pure services don't scale. The solution layer—where technology and outcomes are bundled together—is where lasting value accrues.

Look at M&A. Personal AI helps analysts model faster. Institutional AI identifies the one worthy acquisition target out of a hundred, then expands the search to a thousand. One saves time, the other creates revenue.

Caption: Foundational model companies are moving up into the application layer. Application layer companies are moving up into the solution layer.

"Moving upstream" is the natural gravitational pull of the market right now. Foundational models are moving into the application layer, application layer companies are moving into the solution layer.

Institutional intelligence *is* the solution layer. And the solution layer—where the outcomes are—will accrue lasting value and capture the largest share of the gains.

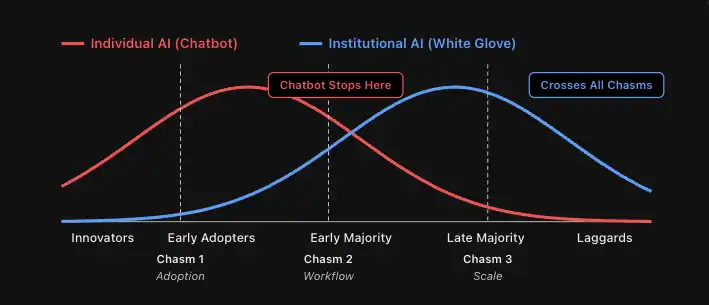

6. Enablement

Personal AI gives you a tool.

Institutional AI teaches you how to use it.

No matter how smart, humans resist change.

Believe it or not, there are still successful stores in New York that don't accept credit cards. They know they lose money by not accepting cards, but they simply won't budge. Similarly, for the foreseeable future, certain employees in certain organizations will simply refuse to use AI.

Transitioning from a purely human organization to an AI-first hybrid organization will be the most persistent, defining challenge of the next decade. And often, the highest-level, most important people in the organization are the last to adopt.

Caption: The highest levels of an organization—those furthest from "productivity tool operation"—are often the slowest but most critical group to adopt new technology.

Palantir is the only "software" company that maintained sky-high valuation multiples during the trillion-dollar tech sell-off of the past two months. There's a reason. Palantir was one of the first true "process engineering" companies. Whether you call it "process engineering" or "writing Claude skill files", the institutional AI of the future will spawn an industry: encoding enterprise processes into Agents and implementing the required change management.

Caption: Full organizational AI adoption will cross multiple chasms, each with its own challenges. Getting processes onto AI will be a major driver.

I'd argue process engineering will be the most important "technology" in the near term.

And in process engineering, business and industry expertise—not software expertise—is paramount. Vertical solutions will cultivate talent with deep expertise in frontline deployment engineering, implementation, and change management.

A top investment bank (top 3 bulge bracket) that chose Hebbia for a full deployment put it best: the reason they didn't go with a major model lab was that "we'd have to explain to their team what a CIM (Confidential Information Memorandum) is". Claude or GPT might understand the domain, but the team responsible for rollout didn't...

This difference is everything.

7. Promptlessness

Personal AI responds to human prompts.

Institutional AI acts proactively, without needing prompts.

There's much discussion about communication between Agents, and about whether future enterprises and institutions will even need humans.

But the better question is: Will future AI Agents even need prompts?

Writing prompts for AGI is like connecting an electric motor to a handloom. It is fundamentally, irreversibly limited by the weakest link in the organizational supply chain—ourselves. Humans simply don't know the right questions to ask, let alone when to ask them.

The most valuable work AI can do is the work no one thought to ask for. AI should find risks no one spotted, counterparties no one thought of, sales pipelines no one knew existed.

This will completely open up the boundaries of AI use cases.

A promptless system continuously monitors data streams across the entire investment portfolio. It notices the working capital cycle of one portfolio company has been quietly deteriorating for three consecutive months, cross-references it with covenants in the credit agreement, and notifies the operating partner before anyone in the fund even opens that PDF.
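The scenario above can be sketched as a scheduled scan that nobody has to ask for. This is a rough illustration under stated assumptions, not a description of any real system: it assumes the "working capital cycle" is tracked as a monthly cash conversion cycle in days (higher is worse), that each covenant reduces to a single maximum-days threshold, and the `Covenant`, `deteriorating_streak`, and `scan_portfolio` names are invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Covenant:
    """Hypothetical covenant: a cap on the cash conversion cycle, in days."""
    company: str
    max_cash_conversion_days: float


def deteriorating_streak(series: list[float]) -> int:
    """Count consecutive month-over-month increases at the end of the series."""
    streak = 0
    for prev, curr in zip(reversed(series[:-1]), reversed(series[1:])):
        if curr > prev:
            streak += 1
        else:
            break
    return streak


def scan_portfolio(
    cycles: dict[str, list[float]],
    covenants: list[Covenant],
    min_streak: int = 3,
) -> list[dict]:
    """Flag companies whose cycle has worsened for at least `min_streak` months."""
    alerts = []
    for cov in covenants:
        series = cycles.get(cov.company, [])
        if len(series) < min_streak + 1:
            continue  # not enough history to observe the required streak
        streak = deteriorating_streak(series)
        if streak >= min_streak:
            alerts.append({
                "company": cov.company,
                "streak_months": streak,
                # Days remaining before the (assumed) covenant cap is reached.
                "headroom_days": cov.max_cash_conversion_days - series[-1],
            })
    return alerts


# Made-up monthly readings, oldest first.
cycles = {
    "Acme": [52.0, 55.0, 59.0, 64.0],
    "Borealis": [48.0, 47.0, 49.0, 48.0],
}
covenants = [Covenant("Acme", 70.0), Covenant("Borealis", 60.0)]

alerts = scan_portfolio(cycles, covenants)
for alert in alerts:
    print(alert)  # would be routed to the operating partner in a real deployment
```

In this toy data only Acme is flagged: its cycle has risen three months in a row and sits 6 days below the assumed cap. The trigger is the data itself, not a question typed by a person.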

When you no longer need humans to prompt the AI, new interfaces and new ways of working emerge. We at @Hebbia have strong opinions here. More on that later.

Conclusion

None of this negates the value of chatbots, Agents, and personal AI.

Personal AI will be the vehicle through which most businesses globally first experience the transformative magic of AI. Driving usage, driving ease of use, is a critical first step in the change management needed to build an AI-first economy.

But simultaneously, the need for institutional intelligence is clear, urgent, and massive.

Every organization of the future will have a chatbot from a major model lab. Every organization will also have institutional AI built for specific domain problems—and personal AI will use institutional AI as the most critical tool in its own toolbox.

The "better together" of institutional AI and personal AI is inevitable.

But remember the lesson of the 1890s textile mills. The factories that electrified first lost to the factories that redesigned the workshop.

We have the electricity. It's time to redesign our factories.

Thanks to @aleximm and @WillManidis for review, and Will's "Tool-Shaped Objects" article for inspiring this piece.