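On May 5, a16z crypto, the crypto-focused venture arm of Andreessen Horowitz (a16z), announced that it had closed its fifth fund at $2.2 billion. At the same time, CTO Eddy Lazzarin was promoted to general partner, joining Chris Dixon, Ali Yahya, and Guy Wuollet as the fund's fourth GP.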

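Most English-language coverage focused on the claim that this is the largest raise of the current crypto winter, stressing the absolute figure of $2.2 billion. But the same number has appeared before: in 2021, a16z crypto closed its third fund, also at $2.2 billion. Five years, one bull-market peak, and two crypto winters later, a16z has bet that same number again.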

The story of this number is not about size. It is about digging in.

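a16z crypto's previous dedicated crypto fund, Fund 4, closed in May 2022 at $4.5 billion. It was the largest single crypto VC fund in history, a record that still stands. Going from $4.5 billion to $2.2 billion means the fund was cut roughly in half. Yet in this winter, a16z is the only firm that has managed to pull together another $2.2 billion to keep betting on crypto.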

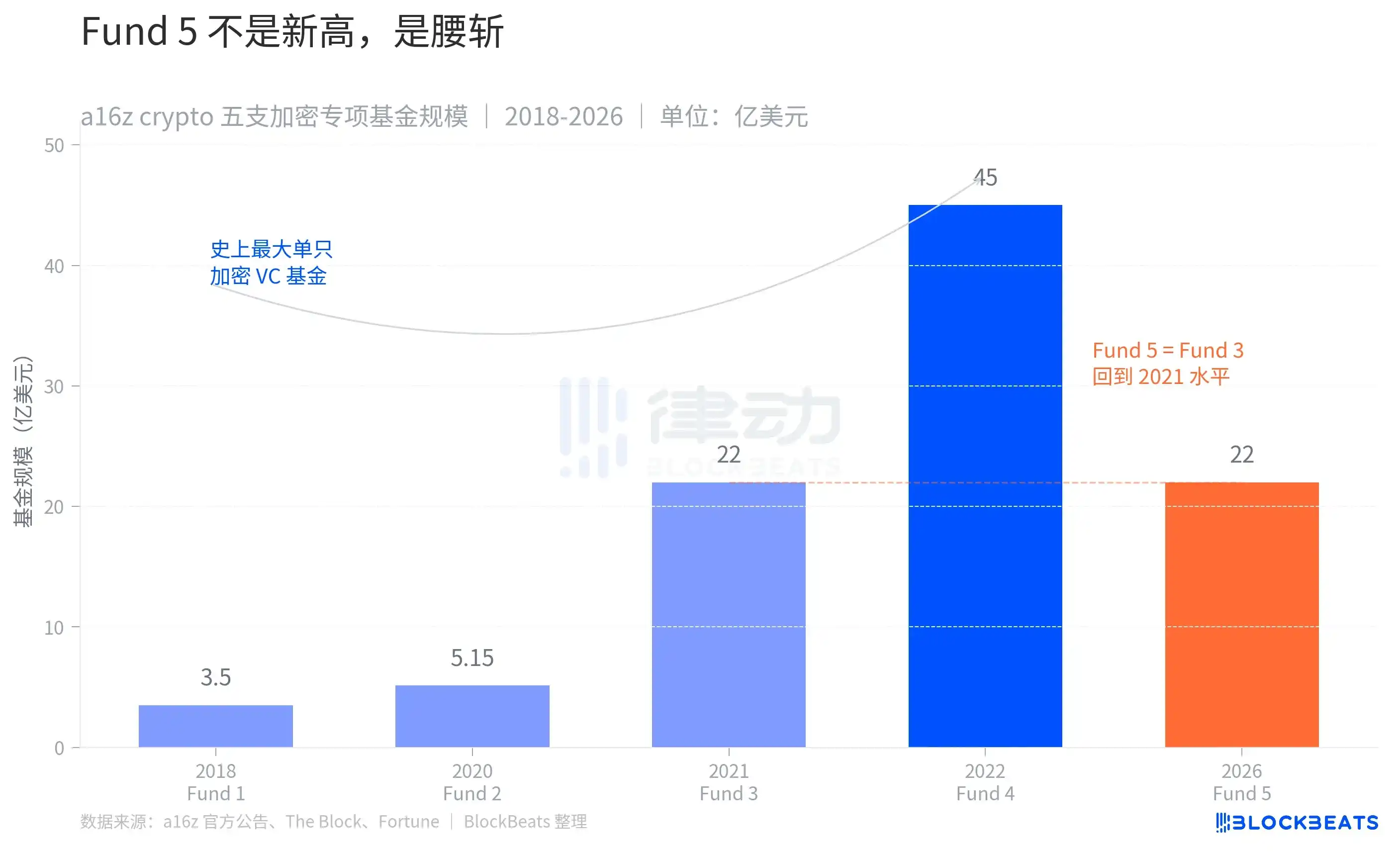

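Lining up the firm's five crypto funds over eight years makes the rhythm clearer. Fund 1 (2018, $350 million) and Fund 2 (2020, $515 million) were early experiments. Fund 3 (2021, $2.2 billion) was the first leg of the bull market, at roughly four times the size of Fund 2. Fund 4 (2022, $4.5 billion) was the peak, doubling the size again. Fund 5 returns to $2.2 billion five years after Fund 3, matching it exactly.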

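Connect the tops of the Fund 3 and Fund 5 bars with a dashed line and the picture is this: within the crypto narrative, a16z crypto has come full circle, back to its 2021 size. Since 2018 the firm has raised about $9.8 billion in cumulative committed capital, nearly half of it ($4.5 billion) in the 2022 Fund 4, which has still not been fully deployed. Fund 5 is not a new wave of doubling down. With Fund 4 not yet spent and the industry cooling once more, it extends the supply of dedicated crypto capital.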

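The chart can be read another way. From Fund 1 to Fund 4, the gap between funds tightened from two years to one and stayed there, while fund sizes ballooned. That was the typical rhythm of the crypto industry from 2018 to 2022. After Fund 4, the gap suddenly stretched to four years.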
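The fund-by-fund arithmetic above can be checked with a short sketch. The sizes and years are the ones quoted in this article; the 2026 closing year for Fund 5 is inferred from the "five years after Fund 3" and "48 months after Fund 4" statements, and the variable names are illustrative only.

```python
# Fund closing years and sizes (billions of USD) as quoted in the article.
# Assumption: Fund 5 closed in 2026, inferred from the article's timeline.
funds = [
    ("Fund 1", 2018, 0.35),
    ("Fund 2", 2020, 0.515),
    ("Fund 3", 2021, 2.2),
    ("Fund 4", 2022, 4.5),
    ("Fund 5", 2026, 2.2),
]

# Cumulative committed capital across all five funds.
total_committed = sum(size for _, _, size in funds)

# Years between consecutive fund closings.
gaps_years = [later[1] - earlier[1] for earlier, later in zip(funds, funds[1:])]

# Size of each fund relative to the one before it.
size_multiples = [later[2] / earlier[2] for earlier, later in zip(funds, funds[1:])]

# Share of cumulative capital that sits in Fund 4.
fund4_fraction = funds[3][2] / total_committed

print(f"Cumulative committed capital: ${total_committed:.2f}B")
print(f"Gaps between funds (years): {gaps_years}")
print("Size multiples:", [f"{m:.2f}x" for m in size_multiples])
print(f"Fund 4 share of cumulative capital: {fund4_fraction:.1%}")
```

On these inputs the cumulative total comes to just under $9.8 billion, and Fund 4 accounts for a bit under half of it, which is consistent with the figures in the text.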

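Over those four years, FTX collapsed, DeFi surged back and receded, Bitcoin ETFs were approved in 2024, and a new bull market rose and then fell back. a16z crypto did not keep raising at the cadence of Funds 1 through 4. It first spent down part of Fund 4 and only then assembled the next fund. On the day Fund 5 closed, a full 48 months had passed since Fund 4.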

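But a16z crypto's own curve is only part of the picture. Whether $2.2 billion is digging in or simply following the market depends on the shape of the industry over the same period.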

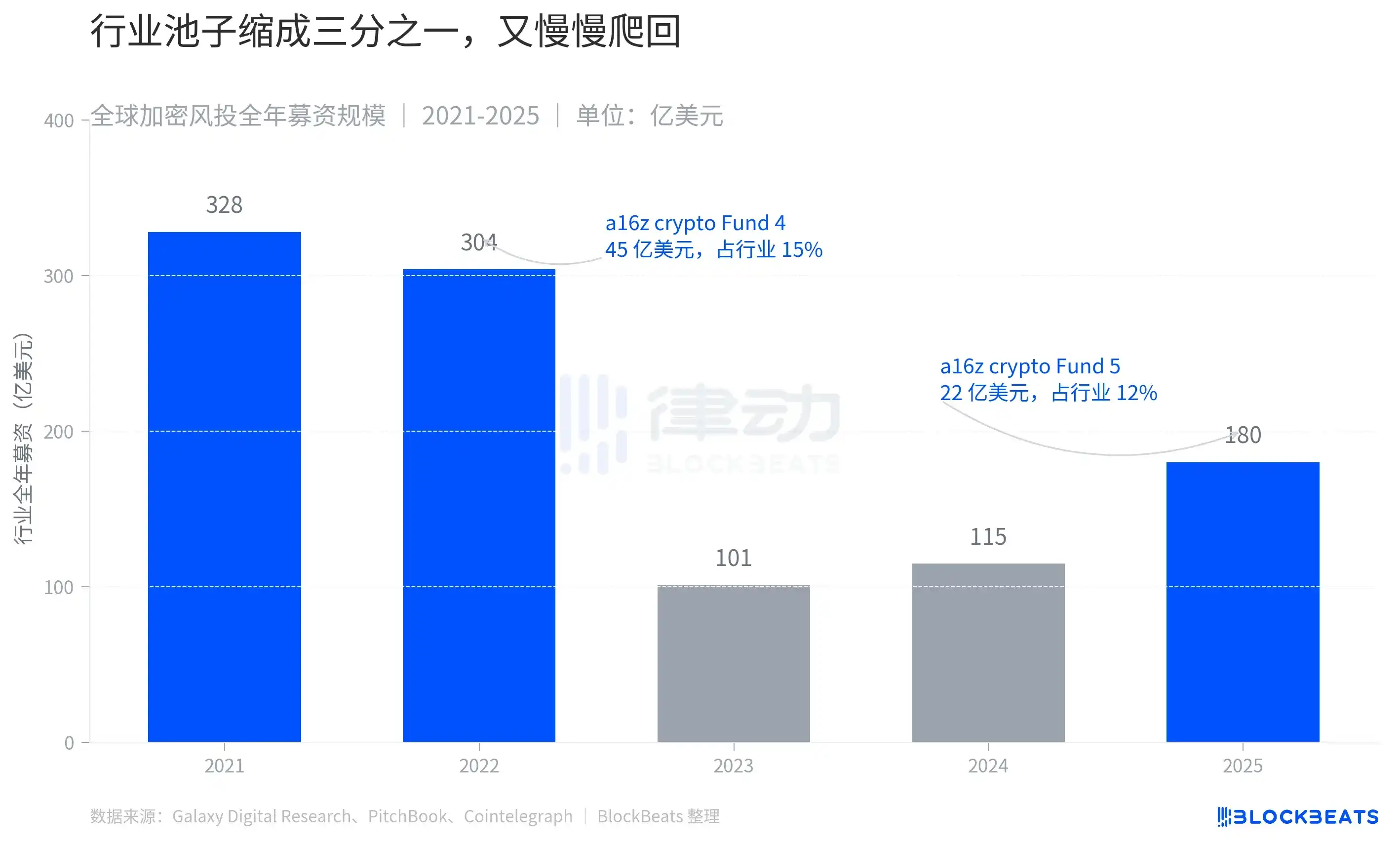

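In fact, the industry collapsed more steeply than a16z crypto did. According to Galaxy Digital, global crypto venture investment was about $32.8 billion in 2021 and still $30.4 billion in 2022. The two-year total of roughly $63.2 billion is the largest injection of venture capital in crypto's history. After FTX collapsed, the figure was cut to $10.1 billion in 2023, a drop of nearly 70%. It edged up to $11.5 billion in 2024 and, by PitchBook's count, recovered to about $18 billion in 2025, which puts it back at roughly its 2020 level.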

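Placing a16z crypto's two large raises on this curve brings out the proportions. Fund 4's $4.5 billion was about 15% of the industry total in 2022, meaning roughly one of every seven dollars of crypto venture capital was managed by a16z crypto alone. Fund 5's $2.2 billion is about 12% of the $18 billion industry pool in 2025. In absolute terms, the money a16z crypto raised was cut in half. In relative terms, its share has barely changed, in a pool that fell to about a third of its peak in 2023 and, at $18 billion, is still roughly 40% below its 2022 level.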
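As a rough check on these proportions, the following sketch recomputes the shares from the figures quoted above. Note that it mixes two sources, as the article does (Galaxy Digital for 2021 through 2024, PitchBook for 2025), so the ratios are indicative rather than exact; the variable names are illustrative.

```python
# Annual crypto venture investment in billions of USD, as quoted in the article.
# Caveat: 2021-2024 are Galaxy Digital figures, 2025 is a PitchBook figure.
industry_pool = {2021: 32.8, 2022: 30.4, 2023: 10.1, 2024: 11.5, 2025: 18.0}

fund4_size = 4.5  # closed in 2022
fund5_size = 2.2  # compared against the 2025 pool, as the article does

# a16z crypto's fund size as a fraction of that year's industry total.
fund4_pool_share = fund4_size / industry_pool[2022]
fund5_pool_share = fund5_size / industry_pool[2025]

# How far the industry fell from 2022 to 2023, and where 2025 stands vs 2022.
drop_2022_to_2023 = 1 - industry_pool[2023] / industry_pool[2022]
pool_2025_vs_2022 = industry_pool[2025] / industry_pool[2022]

print(f"Fund 4 share of 2022 pool: {fund4_pool_share:.1%}")
print(f"Fund 5 share of 2025 pool: {fund5_pool_share:.1%}")
print(f"Industry drop, 2022 to 2023: {drop_2022_to_2023:.1%}")
print(f"2025 pool relative to 2022: {pool_2025_vs_2022:.1%}")
```

The two shares land a few percentage points apart, while the fund itself is about half its former size. That gap between the absolute and the relative change is the point the paragraph is making.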

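This is what locates Fund 5's $2.2 billion. The fund is half the size, but its share of a much smaller pool is nearly intact. For that to happen, LPs must not have cut their crypto allocations to zero over the past three years, and a16z's partners must have convinced themselves to keep spending their capital on crypto.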

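Another set of details deserves a separate look. Between 2024 and 2025, Multicoin's AUM climbed from about $600 million to as much as $6 billion, then was more than halved to $2.7 billion as Bitcoin fell after October. Over the same period, a16z crypto's portfolio valuation shrank by about 40%, while Haun Ventures was up roughly 30% year over year.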

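Pantera, helped by the public listings of five portfolio companies in 2025, including Circle and BitGo, distributed profits to its LPs and began raising its fifth fund. In this winter, peers have done roughly three things: raise new money, return money to LPs, and expand their mandate beyond crypto. a16z crypto chose the first, and only the first. No returning capital, no broadening of scope, just continued investment in crypto.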

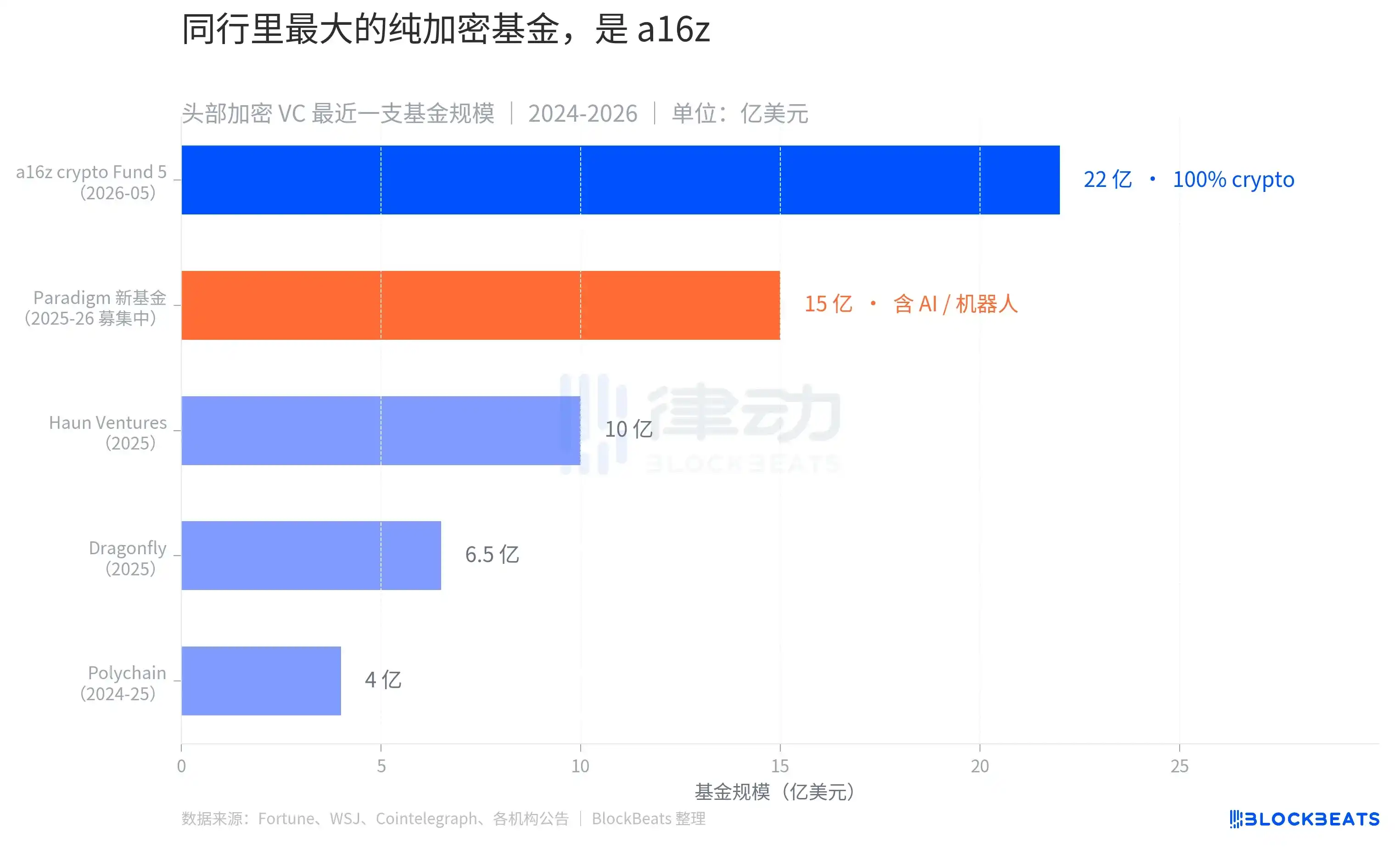

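The third view is of peers. The $2.2 billion versus $4.5 billion comparison is a16z crypto against itself; $18 billion versus $32.8 billion is the industry against itself; the last comparison is among peers.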

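Line up the latest funds from several leading crypto VCs over 2024 to 2026: Polychain at $400 million, Dragonfly at $650 million, Haun Ventures at $1 billion, Paradigm's new fund at $1.5 billion (still being raised), and a16z crypto Fund 5 at $2.2 billion. a16z crypto's is the largest of this round, but the more important detail lies between it and Paradigm.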

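Paradigm, a crypto VC co-founded in 2018 by a former Sequoia Capital partner and a Coinbase co-founder, has long been seen as a16z crypto's most direct competitor in the sector. In 2024 Paradigm closed an $850 million early-stage fund, "Paradigm Three," and later announced a new fund targeting $1.5 billion. According to The Wall Street Journal, the new fund's scope has expanded from pure crypto to AI, robotics, and other frontier computing. In other words, Paradigm's partners have concluded that investing only in crypto would mean missing too many opportunities.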

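a16z crypto's judgment runs the other way. On the day the fund was announced, a spokesperson's response to Fortune was a single line: "Fund 5 will invest 100% in crypto founders." In the VC context of 2026, that is digging in.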

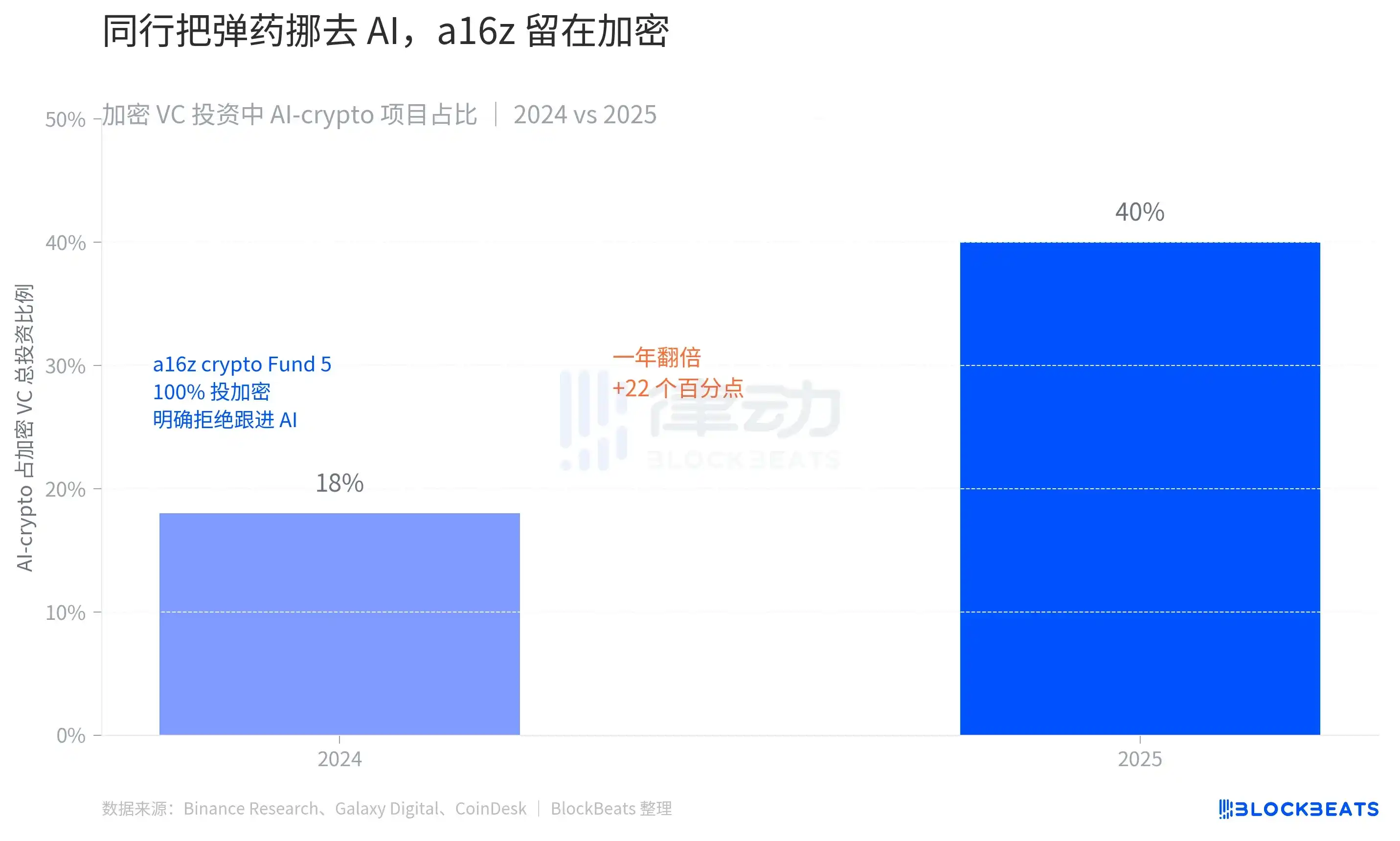

Of every dollar of crypto venture capital deployed in 2024, 18 cents went to projects combining AI and crypto. By 2025 that figure had more than doubled, to 40 cents.

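Behind that 40% is a wholesale change in where the money flows. According to a16z's January announcement, "Why Did We Raise $15B," the parent firm closed a new $15 billion raise in January 2026, spread across Apps ($1.7 billion, AI applications), Infrastructure ($1.7 billion, AI infrastructure), Growth ($6.75 billion), American Dynamism ($1.176 billion), Bio ($700 million), and Other ($3 billion, including crypto, fintech, and enterprise software). The public breakdown has no standalone "Crypto" category. Fund 5's $2.2 billion closed separately, four months later.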

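The parent firm's capital base grew from $42 billion in May 2024 to more than $90 billion in March 2026, while the crypto arm's share fell from 11% in the Fund 4 era to 2.4% in the Fund 5 era. Within the firm's internal structure, crypto has gone from a standalone segment to one bet inside the Other bucket. The parent's center of gravity has moved on; only the a16z crypto line still wants to keep its capital in crypto.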
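The article does not say how the 11% and 2.4% figures were derived. A minimal sketch, assuming each is simply the crypto fund's size divided by the parent's capital base at the time, reproduces both numbers and also sums the January 2026 allocation. All figures are the ones quoted in this article; the variable names are illustrative.

```python
# January 2026 parent raise by category (billions of USD), as quoted in the article.
allocations = {
    "Apps": 1.7,
    "Infrastructure": 1.7,
    "Growth": 6.75,
    "American Dynamism": 1.176,
    "Bio": 0.7,
    "Other": 3.0,
}
raise_total = sum(allocations.values())

# Assumption: crypto share = crypto fund size / parent capital base.
# The article gives the percentages but not the formula behind them.
parent_base_2024 = 42.0  # May 2024
parent_base_2026 = 90.0  # March 2026, described as "more than" this figure
crypto_share_fund4_era = 4.5 / parent_base_2024
crypto_share_fund5_era = 2.2 / parent_base_2026

print(f"Sum of quoted allocations: ${raise_total:.3f}B")
print(f"Crypto share, Fund 4 era: {crypto_share_fund4_era:.1%}")
print(f"Crypto share, Fund 5 era: {crypto_share_fund5_era:.1%}")
```

Under that assumption the two shares round to about 11% and 2.4%, matching the text. Because the 2026 capital base is quoted only as a lower bound, the Fund 5 era share is, if anything, slightly overstated.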

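That is where Fund 5 really sits. It is a concentrated bet on crypto within the a16z system, half the size of the previous round, but in a parent firm where crypto's share has been squeezed to 2.4%, it is the only dedicated crypto fund left. According to Fortune, bets made late in Fund 4 offer a sample of where Fund 5 will be deployed: Babylon (a protocol that lets Bitcoin holders use BTC as collateral), the cross-platform prediction-market tool Kairos, and a $50 million investment in the Solana staking protocol Jito. The deployment goal, as Dixon and his partners put it in the announcement, is to "invest in the part of the cycle that gets overlooked, and turn new infrastructure into products ordinary people use every day."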

The only one left digging in on crypto is a16z itself.