Trump family-backed World Liberty Financial has proposed using 5% of the project’s WLFI token treasury to grow the supply of its stablecoin USD1.

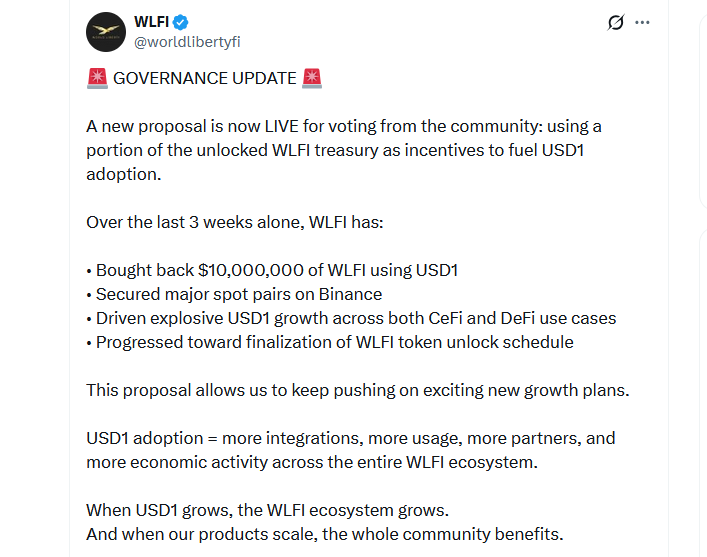

The proposal was posted to the World Liberty Financial governance forum on Wednesday, with the team highlighting the importance of increasing USD1 supply to keep up with “an increasingly competitive stablecoin landscape.”

The proposal states that the additional supply would help expand “USD1 use cases across select high-profile CeFi & DeFi partnerships,” with increased adoption creating more “value capture” opportunities in the WLFI ecosystem.

“As USD1 grows, more users, platforms, institutions, and chains integrate with World Liberty Financial infrastructure. This increases the scale and influence of the network governed by WLFI holders,” the team said.

“More USD1 in circulation leads to more demand for WLFI-governed services, integrations, liquidity incentives, and ecosystem programs,” it added.

World Liberty Financial’s WLFI token started trading on exchanges in September. Leading up to the launch, the project indicated that 19.96 billion WLFI tokens from the total supply would be allocated to the treasury. At current prices, that allocation is worth almost $2.4 billion, so a 5% unlock would equate to around $120 million.
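For readers who want to check the arithmetic, the following is a minimal sketch that reproduces the figures above. It uses only the numbers quoted in this article (19.96 billion treasury tokens, an approximate $2.4 billion valuation and a 5% unlock); the function and variable names are illustrative, and the implied token price is derived from those rounded figures rather than taken from live market data.

```python
# Figures quoted in the article (rounded; not live market data).
TREASURY_TOKENS = 19.96e9      # WLFI tokens allocated to the treasury
TREASURY_VALUE_USD = 2.4e9     # approximate treasury value at current prices
UNLOCK_FRACTION = 0.05         # share of the treasury the proposal would use


def unlock_estimate(tokens: float, value_usd: float, fraction: float):
    """Return (implied price, unlocked tokens, unlocked USD value)."""
    implied_price = value_usd / tokens          # USD per WLFI implied by the totals
    unlocked_tokens = tokens * fraction         # tokens covered by the unlock
    unlocked_value = unlocked_tokens * implied_price
    return implied_price, unlocked_tokens, unlocked_value


price, tokens, value = unlock_estimate(
    TREASURY_TOKENS, TREASURY_VALUE_USD, UNLOCK_FRACTION
)
print(f"Implied WLFI price: ${price:.2f}")
print(f"Tokens unlocked:    {tokens / 1e6:,.0f} million")
print(f"Value unlocked:     ${value / 1e6:,.0f} million")
```

Under these assumptions, a 5% unlock covers roughly 998 million WLFI at an implied price of about $0.12 per token, which matches the article's estimate of around $120 million.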

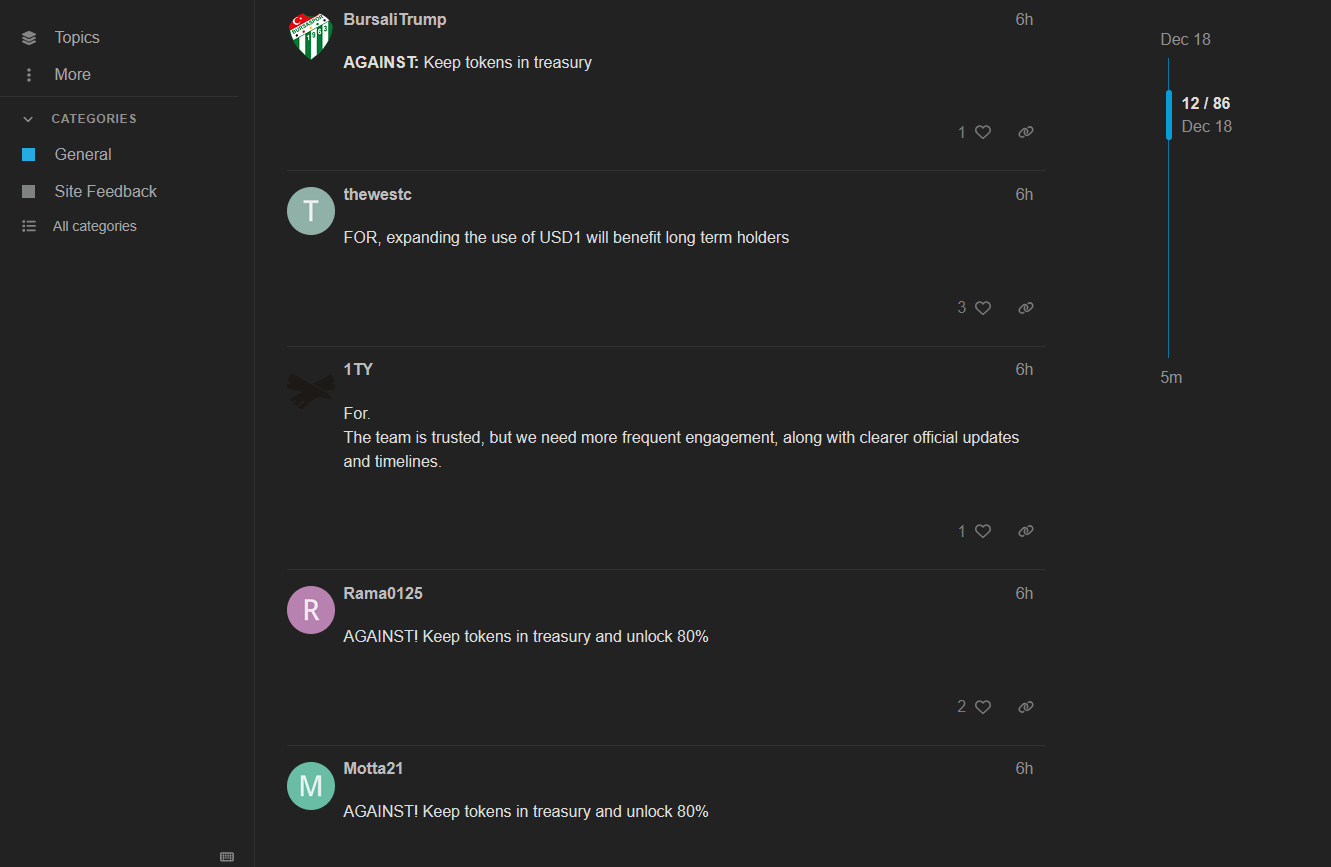

The team outlined three voting options in the proposal: for, against or abstain. The vote is now live, but it is not clear how the voting is being conducted.


The reaction to the proposal is currently mixed, with “against” responses slightly outnumbering those in support.

The project’s stablecoin launched in March and has a market cap of $2.74 billion, according to CoinGecko data, making it the seventh-largest USD-pegged stablecoin on the market.

The 5% treasury unlock may help spur growth of the asset; however, USD1 has a lot of catching up to do if it is to displace competitors, with PayPal’s sixth-placed PYUSD having a market cap $1.1 billion larger than USD1’s.
