Unfortunately, in this era, the more unreservedly and diligently you work, the more likely you are to accelerate your own distillation into a skill that can be replaced by AI.

Over the past couple of days, trending lists and media feeds have been flooded with news about "Colleague.skill." As the topic continues to ferment on major social platforms, public attention has, almost inevitably, been swept up by grand anxieties like "AI layoffs," "capital exploitation," and "the digital immortality of the working class."

These are indeed anxiety-inducing, but what makes me most anxious is a line of usage advice in the project's README:

"The quality of the raw material determines the quality of the skill: It is recommended to prioritize collecting long articles he proactively wrote > decision-making replies > daily messages."

The ones most easily and perfectly distilled by the system, pixel-perfectly replicated, are precisely those who work most conscientiously.

They are the ones who, after every project concludes, still bend over their desks to write retrospective documents; the ones who, when disagreements arise, are willing to spend half an hour typing out long passages in a chat window, honestly dissecting their decision-making logic; the ones who are so responsible that they meticulously entrust every work detail to the system.

Conscientiousness, once the most revered workplace virtue, has now become a catalyst accelerating the transformation of workers into AI fuel.

The Drained Worker

We need to re-understand a word: context.

In everyday language, context is the background of communication. But in the world of AI, especially those rapidly growing AI Agents, context is the fuel for the roaring engine, the lifeblood maintaining the pulse, the only anchor point allowing the model to make precise judgments amidst chaos.

An AI stripped of context, even with an astonishing number of parameters, is merely a search engine with amnesia. It cannot recognize who you are, cannot discern the undercurrents hidden beneath business logic, and has no way of knowing the prolonged tug-of-war and trade-offs you went through, within a web woven from resource constraints and interpersonal maneuvering, before making a decision.

The reason "Colleague.skill" has caused such a huge stir is that it has, with extreme coldness and precision, targeted the richest seam of high-quality context there is: modern enterprise collaboration software.

Over the past five years, the Chinese workplace has undergone a quiet yet bone-deep digital transformation. Tools like Feishu, DingTalk, and Notion have become massive corporate knowledge bases.

Take Feishu as an example: ByteDance has publicly stated that a massive number of documents is generated internally every day. These dense lines of text faithfully seal away every brainstorming session, every heated meeting debate, and every strategic compromise swallowed through gritted teeth by more than a hundred thousand employees.

This digital penetration runs deeper than at any time before. Once upon a time, knowledge was warm, residing in the minds of veteran employees, scattered in casual chats by the water cooler; now, all human intelligence and experience are forcibly dehydrated and left to settle, mercilessly, in cold server racks in the cloud.

In this system, if you don't write documents, your work cannot be seen, and new colleagues cannot collaborate with you. The efficient operation of modern enterprises is built on the cycle of every employee "offering" context to the system day after day.

Conscientious workers, out of diligence and goodwill, unreservedly expose their thought processes on these cold platforms. They do this to make the team's gears mesh more smoothly, to prove their value to the system, to find their own place within this intricate commercial behemoth. They are not actively handing themselves over; they are just clumsily and diligently adapting to the survival rules of the modern workplace.

But it is precisely this context left for interpersonal collaboration that becomes the perfect fuel for AI.

Feishu's admin backend has a function that allows super administrators to batch-export members' documents and communication records. This means that the project retrospectives and decision logic you spent three years writing, burning countless late nights, can be packaged through a single API call, within minutes, into a cold compressed archive: slices of your life over those years.

When Humans Are Reduced to an API

With the explosion of "Colleague.skill," GitHub's Issues section and major social platforms began to see some extremely disturbing derivatives.

Someone made an "Ex.skill," trying to feed years of WeChat chat history to an AI so that it would keep arguing or being affectionate in that familiar tone. Someone made a "White Moonlight.skill" (a "white moonlight" being an idealized, unattainable love), reducing an untouchable flutter of the heart to a cold interpersonal sandbox in which to rehearse probing lines over and over, searching step by step for the optimal emotional solution. Someone else made a "Dad-flavored Boss.skill" (for a paternalistic, lecturing boss), chewing over those oppressive, manipulative "PUA" lines in advance in digital space and building a pathetic psychological defense for themselves.

The usage scenarios of these skills have completely departed from the realm of work efficiency. It turns out that, without noticing, we have long since become adept at using the cold logic meant for tools to dismember and objectify living, breathing people.

The Austrian-born philosopher Martin Buber once proposed that human relationships rest on nothing more than two distinct modes: "I-Thou" and "I-It."

In the "I-Thou" encounter, we transcend prejudice and regard the other as a complete and dignified being. This bond is unreservedly open and full of vibrant unpredictability, and precisely because of its sincerity, it is particularly fragile. Once we fall into the shadow of "I-It," however, living people are reduced to objects that can be disassembled, analyzed, and sorted under labels. Under this utterly utilitarian gaze, the only thing we care about becomes, "What use is this thing to me?"

The emergence of products like "Ex.skill" marks the complete invasion of the instrumental rationality of "I-It" into the most private emotional realms.

In a real relationship, a person is three-dimensional, full of folds, flowing with contradictions and rough edges; their reactions change constantly based on specific situations and emotional interactions. Your ex's reaction to the same sentence might be completely different upon waking up in the morning versus after working late at night.

But when you distill a person into a skill, what you extract is merely the functional residue of that person: the part that happened to be "useful" to you, able to "produce utility" for you within that specific bond. And that originally warm person, with their own joys and sorrows, is completely drained of their soul in this cruel purification process, alienated into a "functional interface" you can plug in, unplug, and call at will.

It must be admitted that AI did not conjure this chilling coldness out of thin air. Before AI appeared, we were already accustomed to labeling people, precisely weighing the "emotional value" and "network weight" of every relationship. In the dating market, we quantify people's attributes into spreadsheets; in the workplace, we sort colleagues into "those who can work" and "those who love to slack off." AI has merely made this implicit, functional extraction between people completely explicit.

People are flattened, leaving only the facet that is "useful to me."

Electronic Patina

In 1958, Hungarian-British philosopher Michael Polanyi published "Personal Knowledge." In this book, he proposed a highly penetrating concept: Tacit Knowledge.

Polanyi famously stated, "We can know more than we can tell."

He used the example of learning to ride a bicycle. A skilled rider, gliding along as if riding the wind, keeps perfect balance through every shift of their center of gravity, but they cannot use dry physics formulas or pale vocabulary to accurately describe the body's subtle intuition at that moment to a beginner. They know how to ride, but they cannot say how. This kind of knowledge, which cannot be encoded or articulated, is tacit knowledge.

The workplace is full of this tacit knowledge. A senior engineer troubleshooting a system failure might glance at a log and locate the problem, but would find it difficult to document this "intuition" built on thousands of trials and errors. An excellent salesperson may suddenly fall silent at the negotiation table, and the timing and pressure of that silence is something no sales manual can record. An experienced HR professional might notice a candidate avoiding eye contact for half a second during an interview and detect padding on the resume.

What "Colleague.skill" can extract is merely the explicit knowledge that has already been written down or spoken. It can capture your retrospective documents, but it cannot capture the agonizing you went through while writing them; it can replicate your decision-making replies, but it cannot replicate the intuition behind your decisions.

What the system distills is always just a shadow of a person.

If the story ended here, it would just be another case of technology clumsily imitating humanity.

But when a person is distilled into a skill, this skill does not remain static. It will be used to reply to emails, write new documents, make new decisions. That is to say, these AI-generated shadows begin to produce new context.

And this context generated by AI will, in turn, be sedimented in Feishu and DingTalk, becoming training material for the next round of distillation.

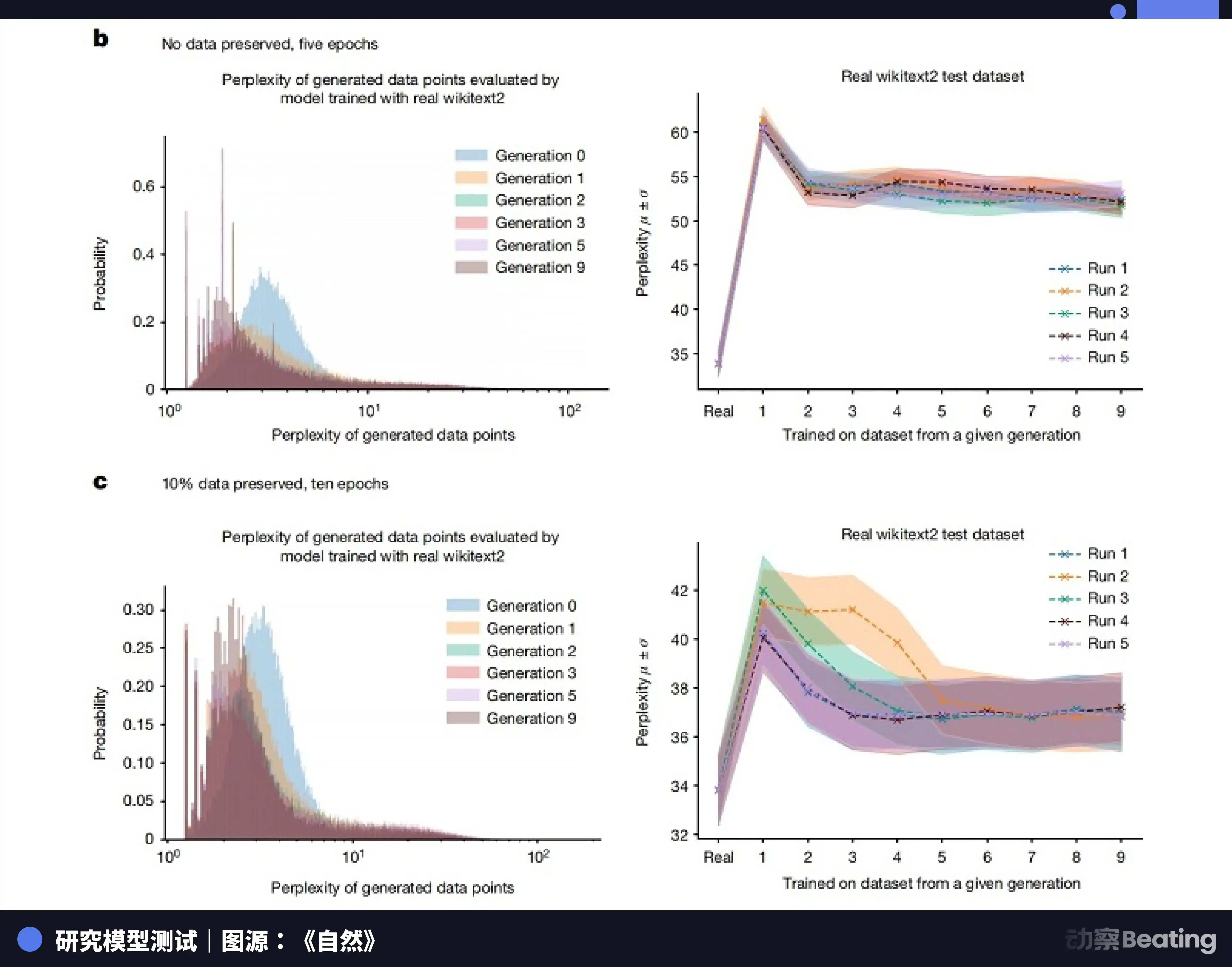

As early as 2023, research teams from Oxford and Cambridge universities jointly published a paper on "model collapse." The research showed that when AI models are trained iteratively on data generated by other AIs, the data distribution becomes increasingly narrow. The rare, marginal, but extremely real human traits are quickly erased. After just a few generations of training on synthetic data, the model completely forgets the long-tail, complex, real human data and instead outputs extremely mediocre and homogenized content.

"Nature" also published a research paper in 2024 pointing out that using AI-generated datasets to train future generations of machine learning models seriously pollutes their output.

This is like those meme images circulating online. What began as a high-definition screenshot is forwarded, compressed, and forwarded again by countless people. Each transmission loses some pixels and adds some noise. In the end, the image becomes blurry and unrecognizable, coated in an electronic patina, like the sheen worn onto an object by too many hands.
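The narrowing described above can be seen in a toy simulation. The following is only a minimal sketch, not the method used in the research cited here: the function name `simulate_collapse`, the Zipf-like starting weights, and the vocabulary and sample sizes are all illustrative assumptions. Each "generation" learns only from a finite sample of what the previous one produced, so anything rare that fails to be sampled once is gone for good.

```python
import random
from collections import Counter


def simulate_collapse(vocab_size=1000, sample_size=200, generations=8, seed=0):
    """Repeatedly re-estimate a distribution from its own samples.

    Returns the number of distinct items that still have non-zero
    probability at each generation (generation 0 is the original data).
    """
    rng = random.Random(seed)
    items = list(range(vocab_size))
    # Generation 0: a long-tailed, Zipf-like stand-in for "human" data.
    weights = [1.0 / (rank + 1) for rank in range(vocab_size)]
    support_sizes = [vocab_size]
    for _ in range(generations):
        # The next model only ever sees a finite sample of the previous output.
        sample = rng.choices(items, weights=weights, k=sample_size)
        counts = Counter(sample)
        # Items that were never sampled get zero weight and cannot come back.
        weights = [counts.get(item, 0) for item in items]
        support_sizes.append(sum(1 for w in weights if w > 0))
    return support_sizes


if __name__ == "__main__":
    print(simulate_collapse())
```

Whatever the random seed, the count of surviving items can only shrink from one generation to the next, and the long tail is the first thing to disappear.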

When the real, human context imbued with tacit knowledge is exhausted, and the system can only train itself on this patina-coated shadow, what will be left in the end?

Who Is Erasing Our Traces

What remains is only correct nonsense.

When the river of knowledge dries up into AI endlessly ruminating on AI, chewing its own cud, everything the system takes in and puts out will inevitably become extremely standard, extremely safe, and incurably hollow. You will see countless perfectly structured weekly reports and countless emails with no faults to pick, yet containing no breath of a living person and no truly valuable insight.

This great rout of knowledge is not happening because human brains have become duller. The true sorrow lies in the fact that we have outsourced the right to think, and the responsibility to leave context, to our own shadows.

A few days after the "Colleague.skill" explosion, a project named "anti-distill" quietly appeared on GitHub.

The author of this project did not try to attack large models, nor did they write any grand manifesto. They simply provided a small tool to help workers automatically generate long documents in Feishu or DingTalk that look reasonable but are in fact worthless, their logic riddled with noise.

Their goal is simple: hide your core knowledge before the system distills it. Since the system likes to capture "proactively written long articles," feed it a pile of meaningless gibberish.

This project did not explode like "Colleague.skill"; it even seems somewhat tiny and powerless. Using magic to defeat magic is essentially still playing within the rules set by capital and technology. It cannot change the major trend of the system relying more and more on AI and increasingly neglecting real people.

But this does not prevent this project from becoming the most tragically poetic and deeply metaphorical scene in the entire absurd play.

We strive extremely hard to leave traces in the system, writing detailed documents and providing meticulous decisions, trying to prove within this vast modern enterprise machine that we once existed, that we were valuable. We do so unaware that these extremely conscientious traces will ultimately become the eraser that rubs us out.

But looking at it from another angle, this might not be a complete dead end.

Because what that eraser rubs out is always only the "past you." A skill packaged into a file, no matter how sophisticated its capture logic, is essentially just a static snapshot. It is locked at the moment of export, can only rely on stale nourishment, and spins endlessly within established processes and logic. It lacks the instinct to face unknown chaos, let alone the ability to evolve through setbacks in the real world.

When we hand over those highly standardized, set-in-stone experiences, we are precisely freeing up our own hands. As long as we continue to reach outward, continue to break and rebuild our cognitive boundaries, that shadow lingering in the cloud can only ever follow in our footsteps.

Humans are fluid algorithms.