By Alphabet AI

It has been about ten months since Wang Tao (Alexandr Wang) joined Meta. The world is about to move from one summer to another, and Meta's "Avocado" is finally ripe.

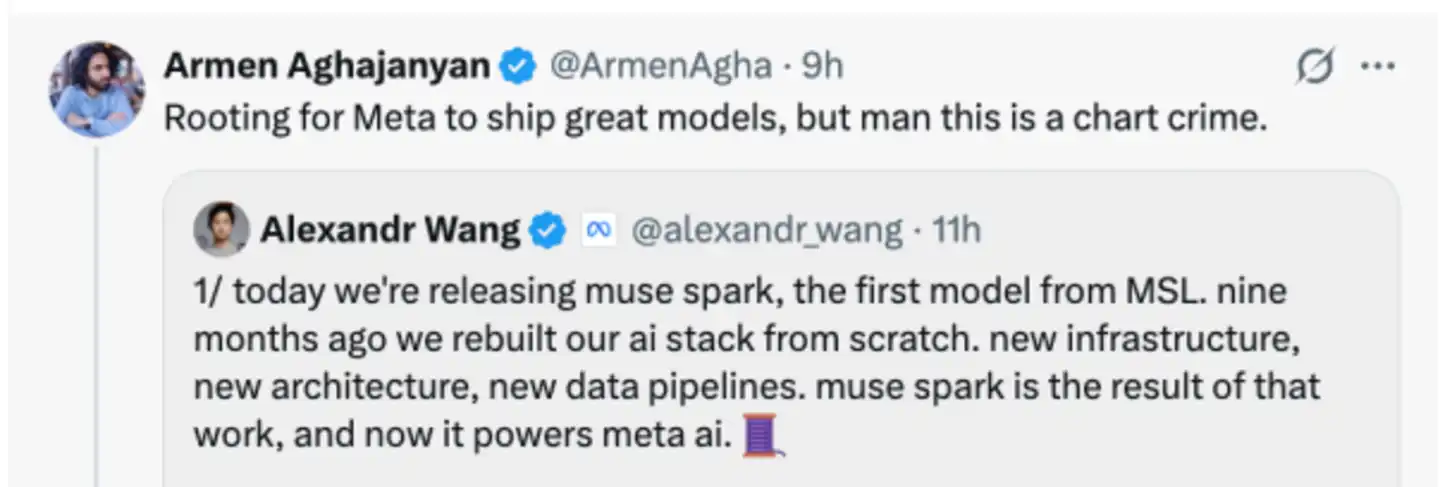

On April 8 local time, Meta officially announced the release of Spark, the first model in the Muse series. This is also the first dish served by Meta after recruiting Wang Tao and establishing the "Meta Superintelligence Labs (MSL)".

Wang Tao posted several messages on X to introduce the new model, stating: "Nine months ago, we rebuilt the AI technology stack from scratch, including new infrastructure, architecture, and data pipelines. Muse Spark is the result of this work."

Even Yann LeCun, Meta's former chief scientist, who was rumored to have clashed with Wang Tao, offered his congratulations, and the mood was cordial.

Meta emphasized that Spark was designed to be "small and fast." Leading with such a model, rather than holding back until it had something overwhelming to release, shows that Meta knows it is short on time.

The move seems to have worked: Meta's stock rose by as much as roughly 9% during the day.

01 New Model Muse Spark

First, let's look at what Meta has actually released.

The new model is called Muse Spark, with Muse being the name of the model series. The name is a deliberate choice: Muse refers to the Muses, the goddesses of inspiration in Greek mythology, and Spark suggests the spark that sets things off.

Meta stated that Muse Spark is its most powerful model to date. It currently powers the Meta AI app and website and will be rolled out to WhatsApp, Instagram, Facebook, Messenger, and AI glasses in the coming weeks. Meta will also offer a private preview of the model via API to select partners.

Clearly, Meta wants to fully leverage its platform advantages, explicitly stating that Muse Spark is specifically built for Meta's products.

It will provide smarter and faster support for Meta AI and unlock new features over time, such as referencing recommended content and information shared by users on Instagram, Facebook, and Threads.

"We are moving toward the goal of personal superintelligence: creating an intelligent assistant that can help anyone, anytime, with the things they care about most."

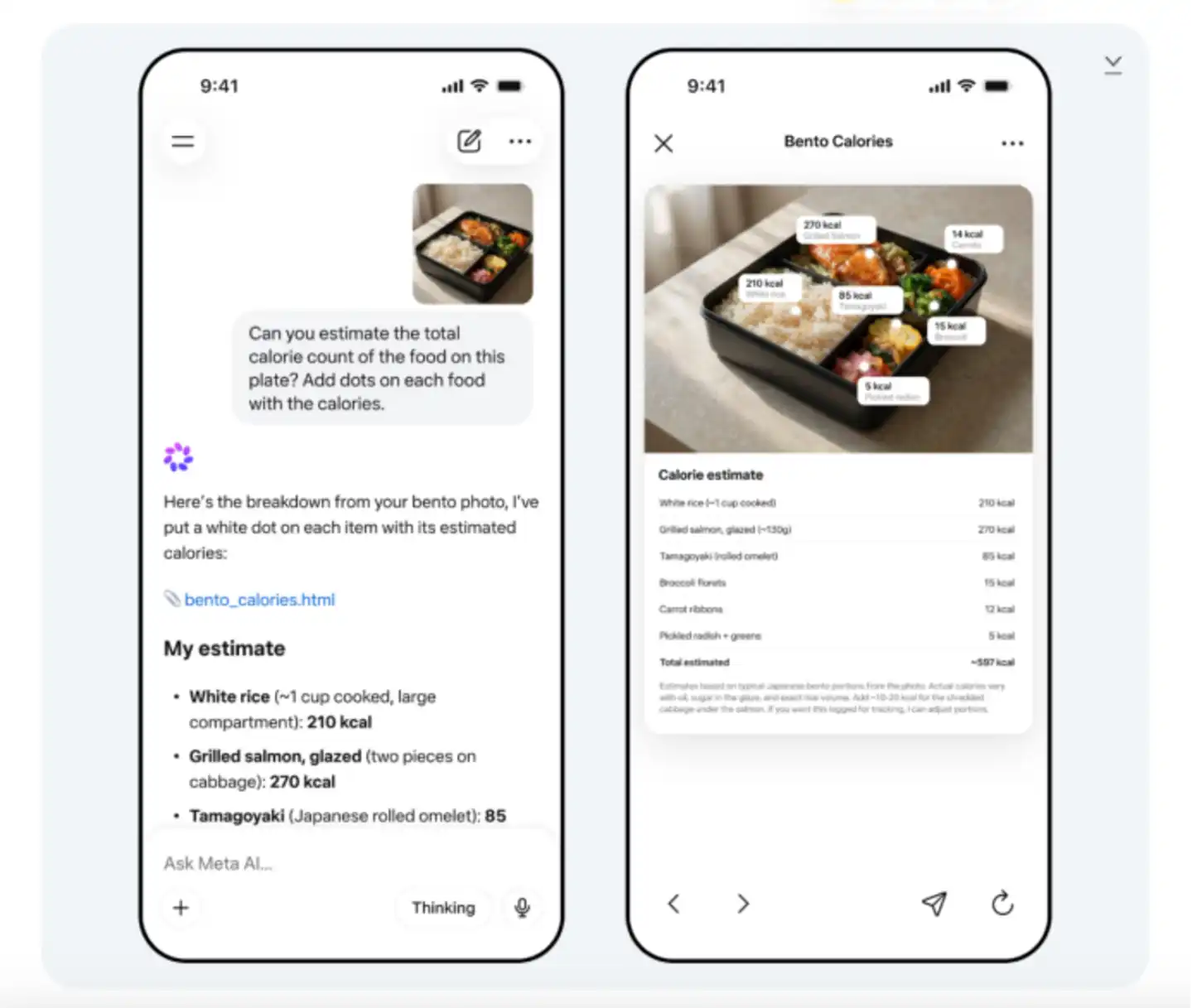

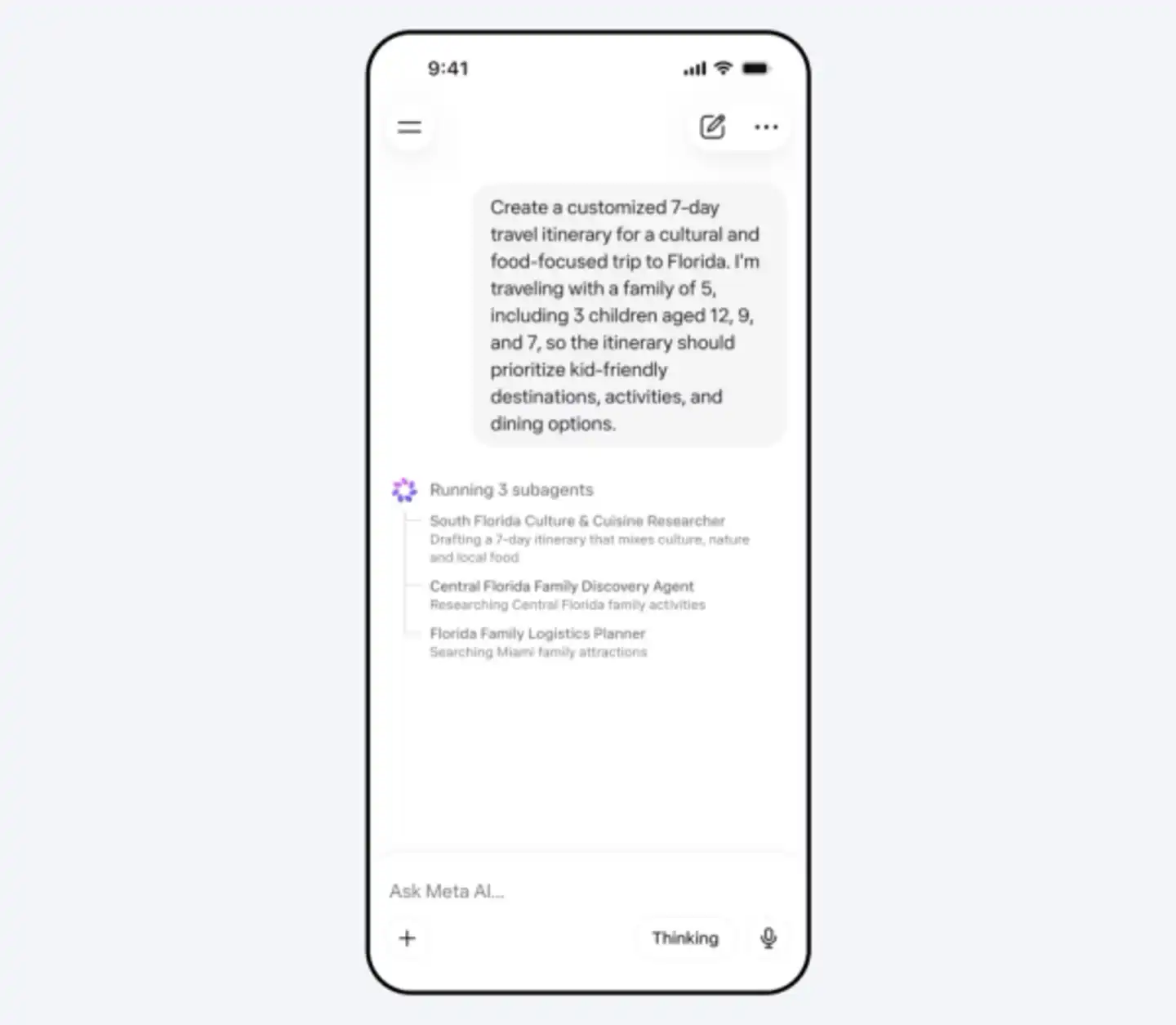

Muse Spark is designed to be small and fast yet capable of handling complex problems in science, mathematics, and health. At its core, it is a natively multimodal reasoning model.

Unlike previous versions that "stitched" vision and text together, Muse Spark was rebuilt from the ground up, integrating visual information into its internal logic. This architectural shift enables a "visual chain of thought," allowing the model to annotate dynamic environments, for example by identifying the components of a complex coffee machine or correcting a user's yoga posture through side-by-side video analysis.

However, the most important technical leap is the new "Contemplating" mode.

Meta claims that this feature coordinates multiple sub-agents for parallel reasoning, enabling Muse Spark to compete with extreme reasoning models like Google's Gemini Deep Think and OpenAI's GPT-5.4 Pro.
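Meta has not published how Contemplating mode works internally, so the following is only a toy sketch of the general idea of "several sub-agents reasoning in parallel, then combining their answers." Everything in it is an assumption made for illustration: the names `make_sub_agent` and `contemplate` are hypothetical, the sub-agents are stand-in functions rather than model calls, and majority voting is just one plausible way to aggregate.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def make_sub_agent(answer: str):
    """Build a hypothetical sub-agent (a stand-in for a real model call)."""
    def sub_agent(question: str) -> str:
        # A real sub-agent would reason about `question`; this one returns
        # a fixed candidate answer so the example stays deterministic.
        return answer
    return sub_agent


def contemplate(question: str, agents) -> str:
    """Run all sub-agents in parallel and return the most common answer."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        answers = list(pool.map(lambda agent: agent(question), agents))
    # Majority vote is an assumed aggregation rule, not Meta's documented one.
    return Counter(answers).most_common(1)[0][0]


# Three illustrative sub-agents, two of which agree.
agents = [make_sub_agent("4"), make_sub_agent("4"), make_sub_agent("5")]
final_answer = contemplate("What is 2 + 2?", agents)
print(final_answer)
```

The point of the sketch is the shape of the computation: independent attempts run concurrently, and a separate step reconciles them into one answer.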

In terms of single-model test results:

- PhD-level scientific reasoning (GPQA Diamond): Muse Spark achieved an accuracy of 89.5%, which is strong but still behind Gemini 3.1 Pro (94.3%), GPT-5.4 (92.8%), and Claude Opus 4.6 (92.7%).

- Chart and visual understanding (CharXiv Reasoning, in Contemplating mode): Scored 86.4, ahead of GPT-5.4 (82.8), Gemini 3.1 Pro (80.2), and Claude Opus 4.6 (65.3). Visual understanding and chart reasoning are among Muse Spark's standout strengths.

- Hard medical reasoning (HealthBench Hard): Scored 42.8%, narrowly ahead of GPT-5.4 (40.1%) and far ahead of Gemini 3.1 Pro (20.6%) and Claude Opus 4.6 (14.8%). Meta attributed this to targeted training in collaboration with over 1,000 doctors. Medical capability is one of the model's highlights.

- Software engineering and coding (SWE-Bench Verified): Scored 77.4%, behind Claude Opus 4.6 (80.8%) and Gemini 3.1 Pro (80.6%). Meta itself admitted that there is still a gap in long-horizon, multi-step autonomous (agentic) tasks and complex coding workflows, which will require continued investment.

- Multimodal multidisciplinary understanding (MMMU Pro): Scored approximately 80.4–80.5%, second only to Gemini 3.1 Pro (83.9%).

Overall, Muse Spark excels in visual multimodal reasoning, medical fields, and efficient reasoning, making it particularly suitable for Meta's own social, content, and health ecosystems. However, it still has room for improvement in pure coding and long-chain autonomous tasks.
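To make the standings above easier to compare, here is a small sketch that tabulates the scores quoted in this article and computes Muse Spark's rank and its gap to the best competitor on each benchmark. The numbers are copied from the list above; the function name `standing` is hypothetical, ranks are computed only among the models quoted here (not every model on the real leaderboard), and the MMMU Pro entry uses 80.4, the lower end of the quoted range.

```python
# Scores as quoted in this article; models not quoted for a benchmark are omitted.
SCORES = {
    "GPQA Diamond": {"Muse Spark": 89.5, "Gemini 3.1 Pro": 94.3,
                     "GPT-5.4": 92.8, "Claude Opus 4.6": 92.7},
    "CharXiv Reasoning": {"Muse Spark": 86.4, "Gemini 3.1 Pro": 80.2,
                          "GPT-5.4": 82.8, "Claude Opus 4.6": 65.3},
    "HealthBench Hard": {"Muse Spark": 42.8, "GPT-5.4": 40.1,
                         "Gemini 3.1 Pro": 20.6, "Claude Opus 4.6": 14.8},
    "SWE-Bench Verified": {"Muse Spark": 77.4, "Claude Opus 4.6": 80.8,
                           "Gemini 3.1 Pro": 80.6},
    # The article gives "approximately 80.4-80.5"; the lower bound is used here.
    "MMMU Pro": {"Muse Spark": 80.4, "Gemini 3.1 Pro": 83.9},
}


def standing(results: dict, model: str = "Muse Spark"):
    """Return (rank, number of quoted models, gap to the best other model)."""
    ordered = sorted(results, key=results.get, reverse=True)
    rank = ordered.index(model) + 1
    best_other = max(v for name, v in results.items() if name != model)
    gap = round(results[model] - best_other, 1)  # positive means leading
    return rank, len(results), gap


standings = {bench: standing(results) for bench, results in SCORES.items()}
for bench, (rank, total, gap) in standings.items():
    print(f"{bench}: rank {rank} of {total}, gap {gap:+.1f}")
```

On these figures, Muse Spark leads on two of the five benchmarks (CharXiv Reasoning and HealthBench Hard) and trails the top quoted score by roughly 3 to 5 points on the other three.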

02 The Much-Delayed "Avocado"

An interesting side note occurred on X.

As Meta's current AI leader, Wang Tao (Alexandr Wang) posted several messages on X promoting the new model.

Someone then pointed out that the benchmark chart he shared was misleading, "almost criminal." In the chart, Muse Spark's scores were placed in the first column and all highlighted in a prominent color. At first glance the model seemed to lead across the board, but on closer inspection some of its scores were actually lower than competitors'.

Playing tricks with charts is not new; OpenAI has been criticized for this multiple times before.

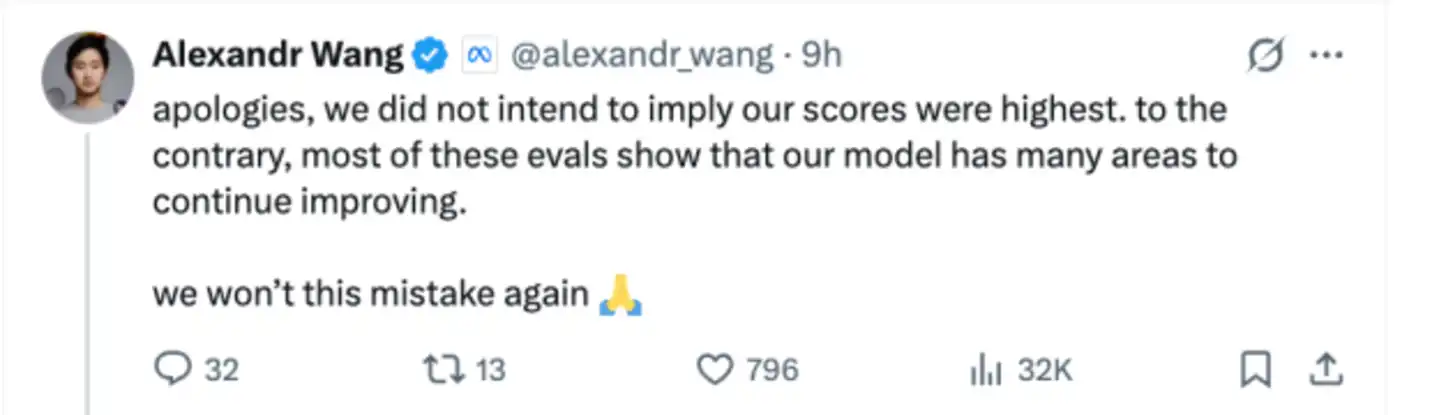

Interestingly, Wang Tao chose to immediately "apologize" in response to the criticism:

"Sorry, we did not mean to imply that our scores are the highest. On the contrary, most evaluation results show that our model still has much room for improvement. We will not make the same mistake again."

It is not hard to see that Meta does not expect Muse Spark to dominate outright; the goal is to get back into the AI race.

By all indications, the Muse series is the project internally code-named "Avocado."

Avocado has been delayed for too long, and Meta has now adopted a "small first, then big" strategy. Meta emphasized in its official blog post that Spark focuses on being fast and small, and this is just the beginning:

"Our models are developing as expected. Muse Spark is an early data point in our development journey, and we are working on larger-scale models."

This departs from the AI industry habit, especially among the top players, of launching with a splash meant to shock, but Meta simply has no time to take things slowly.

Early last year, Meta released the Llama 4 series, but its performance fell short of expectations (the large Behemoth model in particular underperformed), and further open-source development of the Llama series was paused.

By last summer, Meta had invested $14.3 billion in Scale AI (acquiring a 49% stake) and directly recruited Scale AI's founder and CEO, 28-year-old Wang Tao (Alexandr Wang), as Chief AI Officer, formally establishing Meta Superintelligence Labs (MSL).

At the same time, Meta went on a poaching spree, hiring dozens of top researchers from OpenAI, Google, and other companies with lavish pay packages, with some offers ranging from millions to hundreds of millions of dollars.

In terms of costs, Meta's full-year AI-related capital expenditure in 2025 reached $72.22 billion. Its financial guidance in January 2026 indicated that this figure would rise sharply to $115–135 billion, an increase of roughly 59% to 87% that approaches a doubling at the top end, mainly for MSL's model training and data center expansion.

Over the past ten months, Meta, Zuckerberg, and Wang Tao as Meta's AI head have all been under tremendous pressure. People were eager to see what dish would be served after Wang Tao joined and Meta reorganized.
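As a quick check on how close the guidance comes to "doubling," the arithmetic can be worked through directly. This is a minimal sketch using only the figures quoted above; the helper name `pct_increase` is hypothetical.

```python
# Figures quoted in the article, in billions of US dollars.
CAPEX_2025 = 72.22
GUIDANCE_LOW, GUIDANCE_HIGH = 115.0, 135.0


def pct_increase(old: float, new: float) -> float:
    """Percentage increase from `old` to `new`."""
    return (new - old) / old * 100


low = pct_increase(CAPEX_2025, GUIDANCE_LOW)
high = pct_increase(CAPEX_2025, GUIDANCE_HIGH)
print(f"Increase over 2025: {low:.1f}% to {high:.1f}%")
print(f"Multiple of 2025 spend: {GUIDANCE_LOW / CAPEX_2025:.2f}x "
      f"to {GUIDANCE_HIGH / CAPEX_2025:.2f}x")
```

The guidance works out to about 1.6 to 1.9 times 2025 spending, so "almost doubling" describes the upper end of the range rather than the whole of it.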

At least judging by the market's initial reaction, Meta's strategy of serving a small dish first, rather than holding back for a big move, is working. Meta's stock surged nearly 9% during the day, its largest single-day gain since January, and closed up 6.5%.

One noteworthy detail: outside observers had long assumed that "Avocado" would shift entirely to closed source, but Meta did not close the door this time. In the future, Meta may adopt a hybrid strategy that runs open source and closed source in parallel, keeping flagship models and exclusive technologies internal while continuing to make new models available as open source to the broad developer community.

Meta has finally put "Avocado" on the table, but this is far from the end. For Wang Tao and Zuckerberg, Muse Spark is more like a starting gun. How the future unfolds depends on whether the promise of "getting stronger and stronger" can be fulfilled.