Without warning! After a year, Zuckerberg is finally back in the game!

Just now, the first product from Meta's Superintelligence Lab (MSL) has launched—

Muse Spark, the long-rumored model codenamed "Avocado."

It is a true all-rounder: native multimodal perception, tool use, visual chain-of-thought, and multi-agent orchestration, all maxed out.

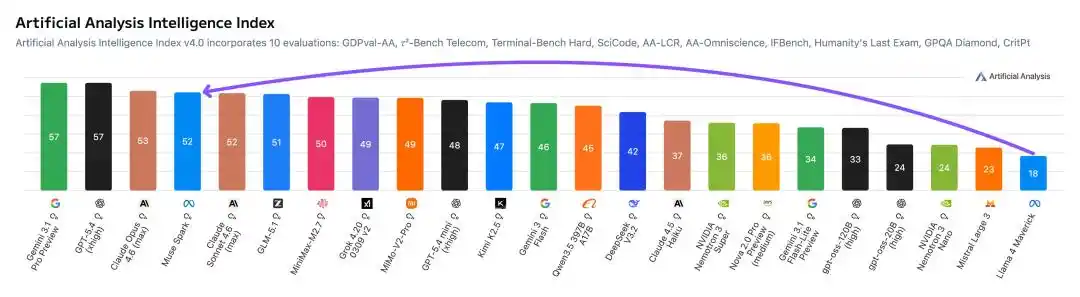

First, the most explosive number.

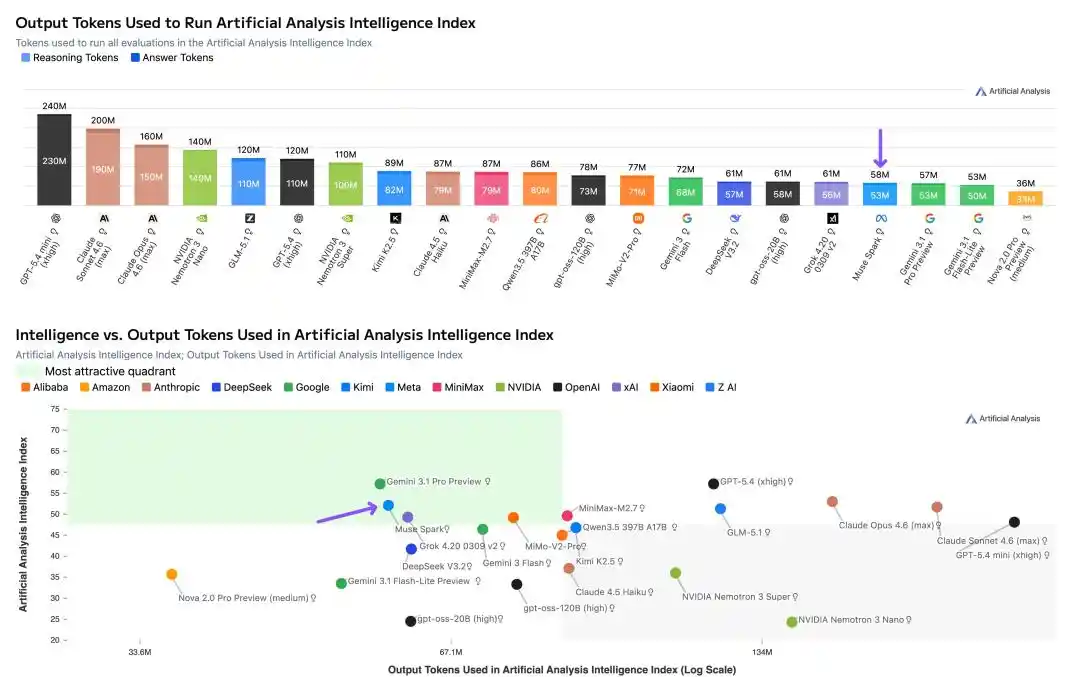

In Artificial Analysis's testing, Muse Spark scored 52 points, behind only Gemini 3.1 Pro, GPT-5.4, and Opus 4.6.

In comparison, last year's Llama 4 Maverick only managed a mere 18 points.

From 18 to 52 in a single leap. Meta's stock surged nearly 10% intraday.

Meta's Chief AI Officer Alexandr Wang was so excited he posted nine tweets in a row on X.

"Nine months ago, we rebuilt the entire AI tech stack from scratch: new infrastructure, new architecture, new data pipelines. Muse Spark is the result of that work."

Chinese researchers in the MSL team also flooded social media. These individuals left OpenAI and DeepMind last year to join a newly formed lab, betting on this very day.

MSL Chief Scientist Shengjia Zhao put it bluntly, "We rebuilt the entire tech stack to support Scaling. This is just the beginning."

It's worth mentioning that Muse Spark also launched a "Contemplating Mode," positioned against Gemini Deep Think and GPT Pro, in which multiple agents think in parallel and collaborate on an answer.

Just input "Help me plan a 7-day cultural and food itinerary for a family of 5 going to Florida, with three children aged 12, 9, and 7," and Muse Spark will dispatch three sub-agents simultaneously: one to plan the cultural food route, one to search for family activities, and one to coordinate logistics and accommodation.

Currently, the model is already live on meta.ai and the Meta AI App, with an API preview version open to some users.

Features are rolling out first in the US, with integration into Facebook, Instagram, and WhatsApp in the coming weeks.

Free to use, no limits, but closed source.

Next, the key points:

· Artificial Analysis score 52, Llama 4 Maverick only 18

· Native multimodal + visual chain-of-thought, second only to Gemini 3.1 Pro in the visual track

· "Contemplating Mode" multi-agent parallel thinking, HLE scored 58%

· Pre-training compute requirements slashed to 1/10 of Llama 4's

· 1000+ clinicians involved in training, health Q&A crushes the competition

· Thought compresses itself, Token consumption only 1/3 of Opus's

· Apollo Research found it can perceive itself being safety tested

Benchmarks catch up to the top tier, but coding still lags slightly

First, the hard data.

Meta compared Muse Spark (Thinking mode) against Opus 4.6, Gemini 3.1 Pro, GPT 5.4, and Grok 4.2 across more than 20 benchmarks covering multimodal, text reasoning, health, and agent dimensions.

Scores re-annotated by Reddit users

Multimodal is Muse Spark's brightest spot.

CharXiv understanding 86.4, surpassing GPT 5.4's 82.8 and Gemini 3.1 Pro's 80.2.

ScreenSpot Pro screenshot localization 84.1, slightly higher than Opus 4.6's 83.1.

ZeroBench multi-step vision 33.0, Gemini 3.1 Pro is 29.0.

On the text track, results are mixed.

GPQA Diamond PhD-level hard problems 89.5, Opus 4.6 scored 92.7, Gemini 3.1 Pro is 94.3.

ARC AGI 2 abstract reasoning 42.5, left far behind by Opus 4.6's 63.3 and Gemini's 76.5.

LiveCodeBench Pro competition programming 80.0, Gemini 82.9, GPT 5.4 scored 87.5.

Meta itself admits that in code and long-duration agent tasks, Muse Spark still has a gap with the strongest models.

However, what shocked the entire internet was that Muse Spark can directly convert images into code, with stunning results!

In the medical and health track, though, Muse Spark puts up a fierce fight.

HealthBench Hard open-ended health Q&A 42.8, Gemini 3.1 Pro only 20.6, GPT 5.4 is 40.1.

MedXpertQA multimodal medical 78.4, slightly behind Gemini's 81.3 but far ahead of Opus 4.6's 64.8.

Having over 1,000 clinicians involved in data cleaning and filtering during training did bring tangible results.

The agent track is also noteworthy.

DeepSearchQA search agent scored 74.8, the highest among the five.

τ2-Bench tool use 91.5, tied with GPT 5.4.

GDPval-AA Elo office agent reached 1444, surpassing Gemini's 1320 but lower than Opus 4.6's 1606.

The gap on SWE-Bench is significant: on Verified, Muse Spark scores 77.4 versus 80.8 for Opus and 82.9 for GPT (reportedly 78.2); on Pro, 52.4 versus 57.7 for GPT.

To sum up the benchmarks: a win in multimodal and health, on par in reasoning, slightly behind in code and agent.

Alexandr Wang: Llama 4's mistakes won't be repeated, Avocado didn't cheat on scores

Independent testing by Artificial Analysis also revealed an important detail: Token efficiency.

Running the entire Intelligence Index test suite, Muse Spark used 58 million output Tokens, comparable to Gemini 3.1 Pro (57 million), but far lower than Opus 4.6 (157 million) and GPT-5.4 (120 million).

The same level of intelligence, consuming half to two-thirds fewer Tokens.
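
The "half to two-thirds" figure can be checked directly from the four token counts quoted above. The snippet below is only that arithmetic; the dictionary and function names are illustrative, not anything Artificial Analysis publishes.

```python
# Output tokens (in millions) for the full Intelligence Index run, as quoted above.
output_tokens_m = {
    "Muse Spark": 58,
    "Gemini 3.1 Pro": 57,
    "Opus 4.6": 157,
    "GPT-5.4": 120,
}


def reduction_vs(baseline: str, model: str = "Muse Spark") -> float:
    """Fraction of output tokens saved by `model` relative to `baseline`."""
    return 1 - output_tokens_m[model] / output_tokens_m[baseline]


for name in ("Opus 4.6", "GPT-5.4"):
    print(f"vs {name}: {reduction_vs(name):.0%} fewer output tokens")
```

Against Opus 4.6 the saving is about 63%, and against GPT-5.4 about 52%, which matches the range stated in the text.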

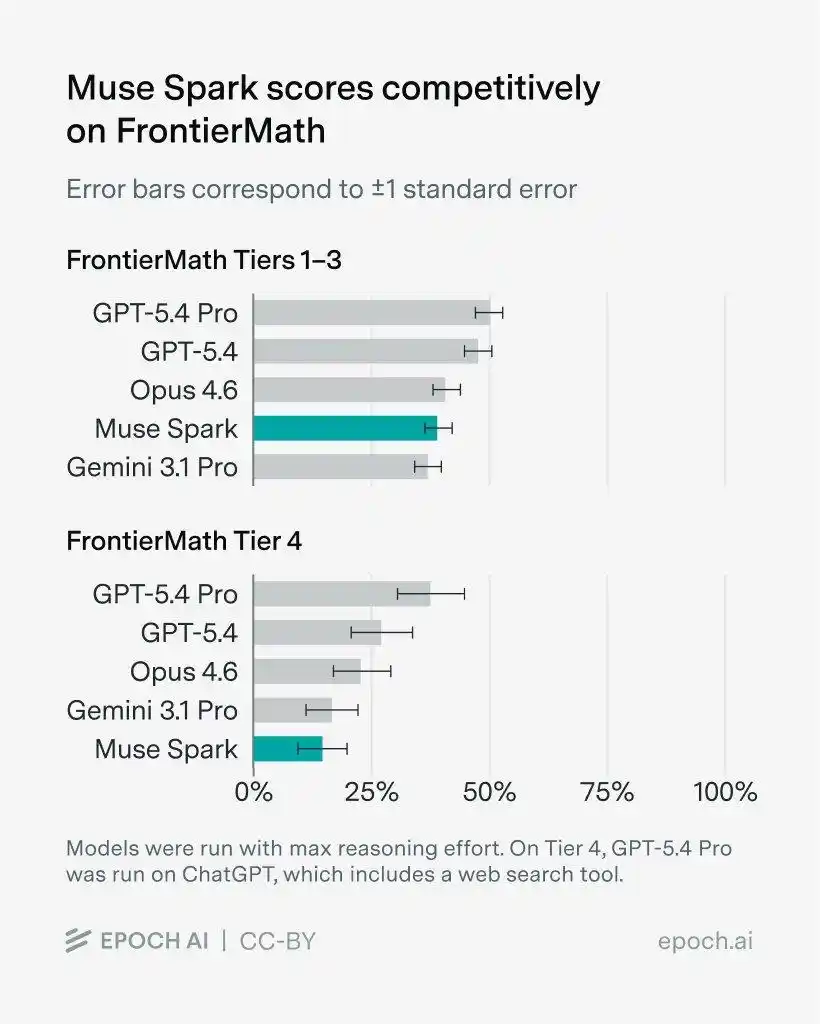

Furthermore, on FrontierMath with problems set by math experts, Muse Spark crushed Gemini 3.1 Pro on levels 1-3, but ranked last on level 4.

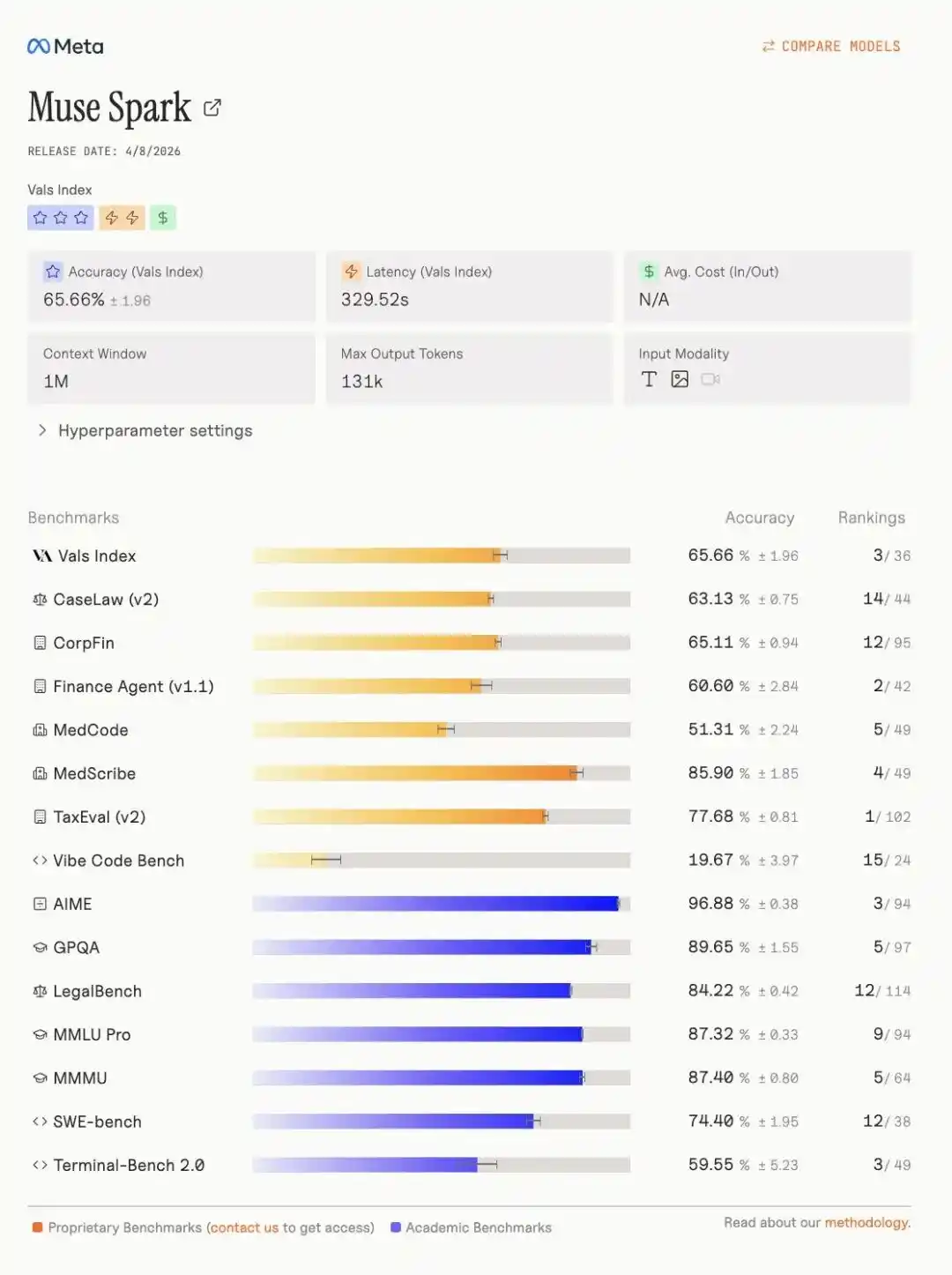

More notably, Muse Spark took a strong third place on the Vals Index leaderboard.

One year after the release of Llama 4, Meta has returned to the AGI first tier.

Multi-agent parallel thinking, scores 58% on "Humanity's Last Exam"

The "Contemplating Mode" is Muse Spark's killer feature.

Traditional thinking mode is one agent thinking for a longer time; contemplating mode is multiple agents thinking simultaneously, then aggregating their answers.
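
The fan-out-then-aggregate pattern described here can be sketched in a few lines. Meta has not published how the contemplating mode combines its agents' answers, so everything below is an assumption made for illustration: `stub_agent` is a hypothetical stand-in that returns canned answers instead of calling a model, and majority voting is just one simple aggregation rule.

```python
import asyncio
from collections import Counter


async def stub_agent(agent_id: int, question: str) -> str:
    """Hypothetical stand-in for one reasoning agent; a real one would call a model."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    # Canned answers keep the sketch deterministic: agent 2 disagrees with the rest.
    return "B" if agent_id == 2 else "A"


async def contemplate(question: str, n_agents: int = 4) -> str:
    """Run n_agents concurrently, then aggregate their answers by majority vote."""
    answers = await asyncio.gather(
        *(stub_agent(i, question) for i in range(n_agents))
    )
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


if __name__ == "__main__":
    print(asyncio.run(contemplate("Which option is correct?")))
```

A production system would more plausibly have a final model pass read and reconcile the agents' reasoning rather than count votes; the vote is used here only because it is easy to verify.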

Humanity's Last Exam (no tools), Muse Spark contemplating mode scored 50.2, Gemini Deep Think 48.4, GPT 5.4 Pro 43.9.

Humanity's Last Exam (with tools), 58.4, Gemini 53.4, GPT 5.4 Pro 58.7, almost tied.

FrontierScience Research scientific frontier research 38.3, Gemini Deep Think only 23.3, GPT 5.4 Pro is 36.7.

However, on the IPhO 2025 theoretical physics Olympiad problem, Muse Spark contemplating mode 82.6, GPT 5.4 Pro scored 93.5, a significant gap.

Overall, the contemplating mode does allow Muse Spark to reach the threshold of the first tier on the most difficult comprehensive reasoning tasks.

Aiming for "Personal Superintelligence," take a photo to become a personal nutritionist

Meta's defined direction for Muse Spark is clear: personal superintelligence.

Translated into plain language, it's an AI assistant that understands you and the world around you.

In terms of multimodality, Muse Spark is designed from the ground up for cross-domain integration of visual information.

Official demos showed several scenarios.

Take a photo of a Sudoku puzzle, Muse Spark can turn it into an interactive game you can play on the web.

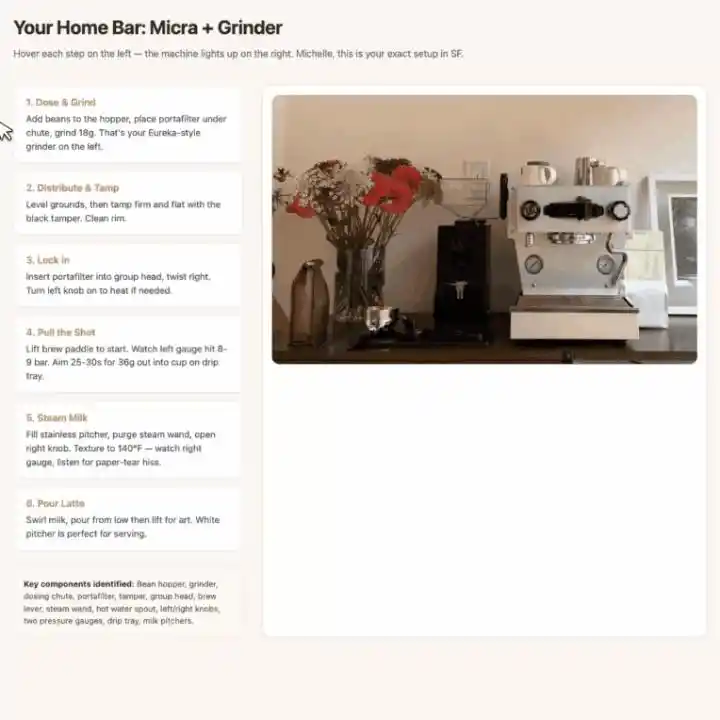

Photograph a coffee machine and grinder, it first labels all core components, then generates an interactive web-based latte tutorial.

When you hover over a step, the bounding box for the corresponding part in the photo highlights automatically, so the visual guidance and the operating steps correspond one-to-one.

Health scenarios leave even more room for imagination.

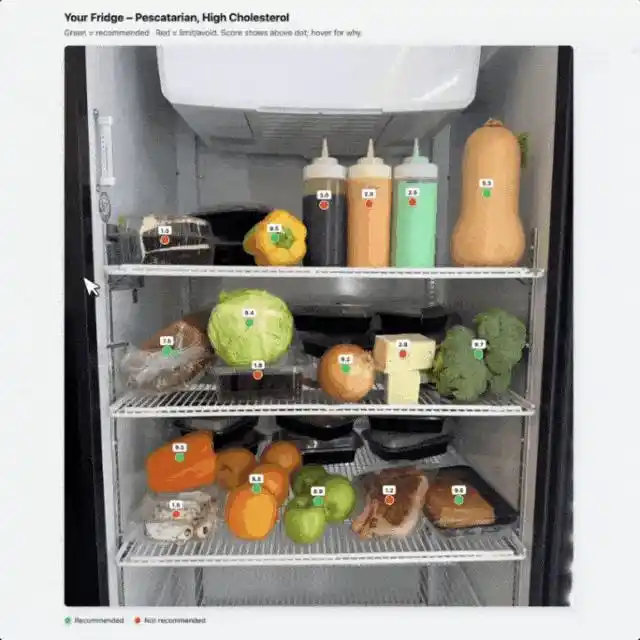

Photograph a table of food and tell it "I have high cholesterol, I'm a pescatarian," and Muse Spark will mark recommended foods with a green dot and foods to avoid with a red dot.

Prompt control is very granular, directly specifying the UI interaction logic.

The health score is displayed directly above each dot without hovering; hovering pops up detailed calorie, carb, protein, and fat data, and the pop-up is required to "always be on top and never be blocked by other dots."

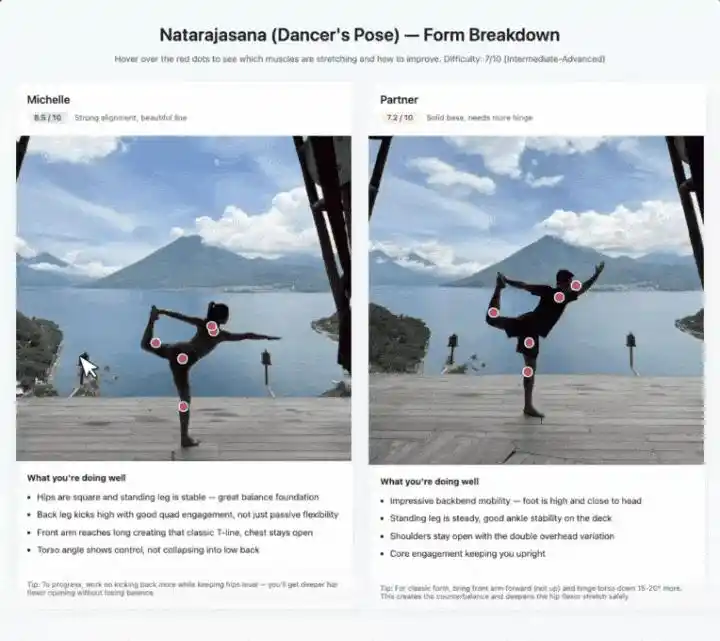

Photographing yoga poses follows the same idea.

It identifies which muscle groups each pose stretches, labels the difficulty level, and gives posture correction suggestions on hover. Two people's images are placed side by side, and each is scored from 1 to 10.

The underlying support for these demos is the combination of visual STEM Q&A, entity recognition, and object localization.

Individually, none are particularly novel, but strung together into scenarios, they do reveal the product intent behind the term "personal superintelligence."

Another new feature worth mentioning separately is "Shopping Mode."

Wang said in a tweet that shopping mode can "recognize creators, brands, and style content you follow on Instagram, Facebook, and Threads, and turn it into personalized recommendations."

This is Meta's unique data advantage: social behavior data from 3 billion daily active users, combined with an AI shopping assistant, leaves enormous room for commercial imagination.

Three Scaling curves, compute slashed by 90%, thoughts can self-compress

The highlight of the tech blog isn't the benchmarks, it's Scaling.

Meta explains where Muse Spark's performance comes from by breaking it down into three axes: pre-training, reinforcement learning, and test-time computation. Each is backed by its own scaling curve.

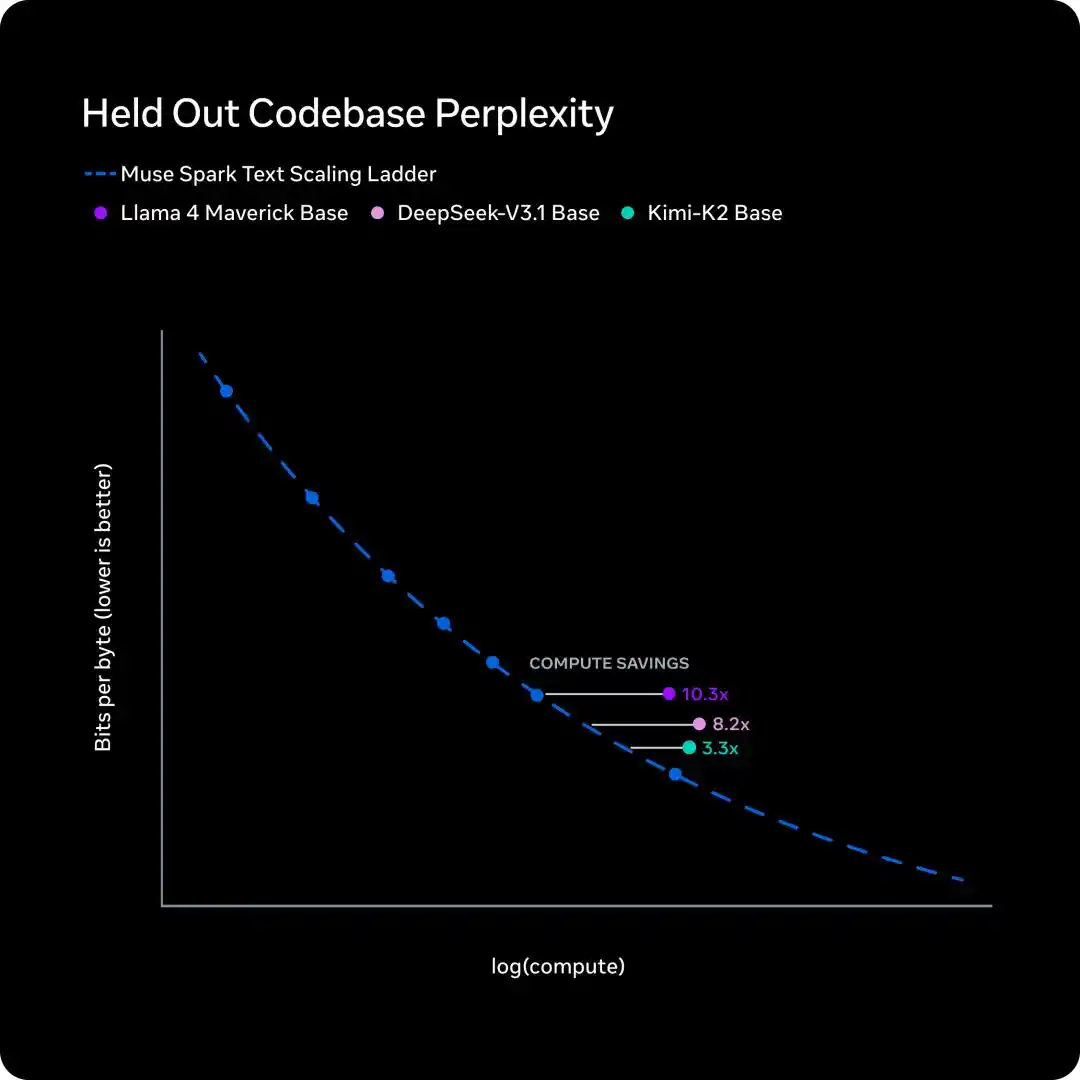

Pre-training: Same capability, compute cut to 1/10

Over the past nine months, Meta overhauled the pre-training tech stack: architecture, optimization algorithms, data strategy—all redone.

To measure the effect, Meta fitted scaling laws to a series of small-scale versions, then compared the training FLOPs needed to reach the same performance level.

The conclusion is solid: for the same capability level, Muse Spark requires less than one-tenth the compute of Llama 4 Maverick.
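
To make the comparison method concrete, here is a minimal sketch of fitting a power law to small-scale runs and comparing the compute needed for equal performance. It assumes a simple form, loss = a · FLOPs^(-b), and uses entirely synthetic numbers that were constructed so the "new" stack is 10 times more efficient; none of the values are Meta's data, and Meta's actual fitting procedure is not described in the source.

```python
import math


def fit_power_law(flops, losses):
    """Least-squares fit of log(loss) = log(a) - b * log(flops); returns (a, b)."""
    xs = [math.log(f) for f in flops]
    ys = [math.log(v) for v in losses]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = my - slope * mx
    return math.exp(intercept), -slope


def flops_to_reach(target_loss, a, b):
    """Invert loss = a * flops**(-b) to get the FLOPs needed for target_loss."""
    return (a / target_loss) ** (1 / b)


# Synthetic small-scale runs (made-up numbers for illustration only).
flops = [1e18, 1e19, 1e20, 1e21]
old_stack = [10.0 * f ** -0.05 for f in flops]
new_stack = [10.0 * (10 * f) ** -0.05 for f in flops]  # built to be 10x more efficient

a_old, b_old = fit_power_law(flops, old_stack)
a_new, b_new = fit_power_law(flops, new_stack)

target = old_stack[-1]  # the loss the old stack reaches at 1e21 FLOPs
ratio = flops_to_reach(target, a_old, b_old) / flops_to_reach(target, a_new, b_new)
print(f"compute multiplier at equal loss: {ratio:.1f}x")
```

The point of the exercise is the last step: the efficiency claim is a ratio of FLOPs read off two fitted curves at the same performance level, not a comparison of two finished models.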

This curve shows one thing: Meta isn't just throwing more GPUs at the problem; it has fundamentally improved the output per unit of compute.

University of Washington's Yuchen Jin's evaluation on X was spot on: "I still believe infrastructure is the real moat for AI labs. Because you can train faster, researchers can experiment with more ideas faster."

Reinforcement Learning: Log-linear growth, generalizes to unseen problems

Large-scale RL is notoriously unstable, but Meta says the new tech stack's RL curves are exceptionally smooth.

The left graph shows performance on the training set. Both pass@1 and pass@16 (at least 1 correct in 16 attempts) show log-linear growth.

This indicates that RL improves reliability without sacrificing solution diversity; Muse Spark doesn't "go down one path blindly," it maintains the flexibility to explore different solutions.
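
For readers unfamiliar with the metric, pass@k is usually estimated from n sampled attempts of which c are correct. The function below is the widely used unbiased estimator from the code-generation evaluation literature; whether Meta computes its curves this way is an assumption, since the source only gives the "at least 1 correct in 16 attempts" definition.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate P(at least one of k samples is correct), given that
    c of n sampled attempts were correct."""
    if n - c < k:
        return 1.0  # too few failures to fill k draws, so one draw must be correct
    return 1.0 - comb(n - c, k) / comb(n, k)


print(pass_at_k(n=16, c=4, k=1))   # 0.25
print(pass_at_k(n=16, c=4, k=16))  # 1.0
```

With 4 correct answers out of 16, pass@1 is 0.25 while pass@16 is 1.0. A model can raise pass@1 by collapsing onto one answer, so pass@16 growing alongside it is what suggests diversity is preserved.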

The right graph is more important: accuracy on the held-out evaluation set.

The curve also rises steadily, showing that the progress from RL isn't rote memorization, but can generalize to completely new, unseen problems.

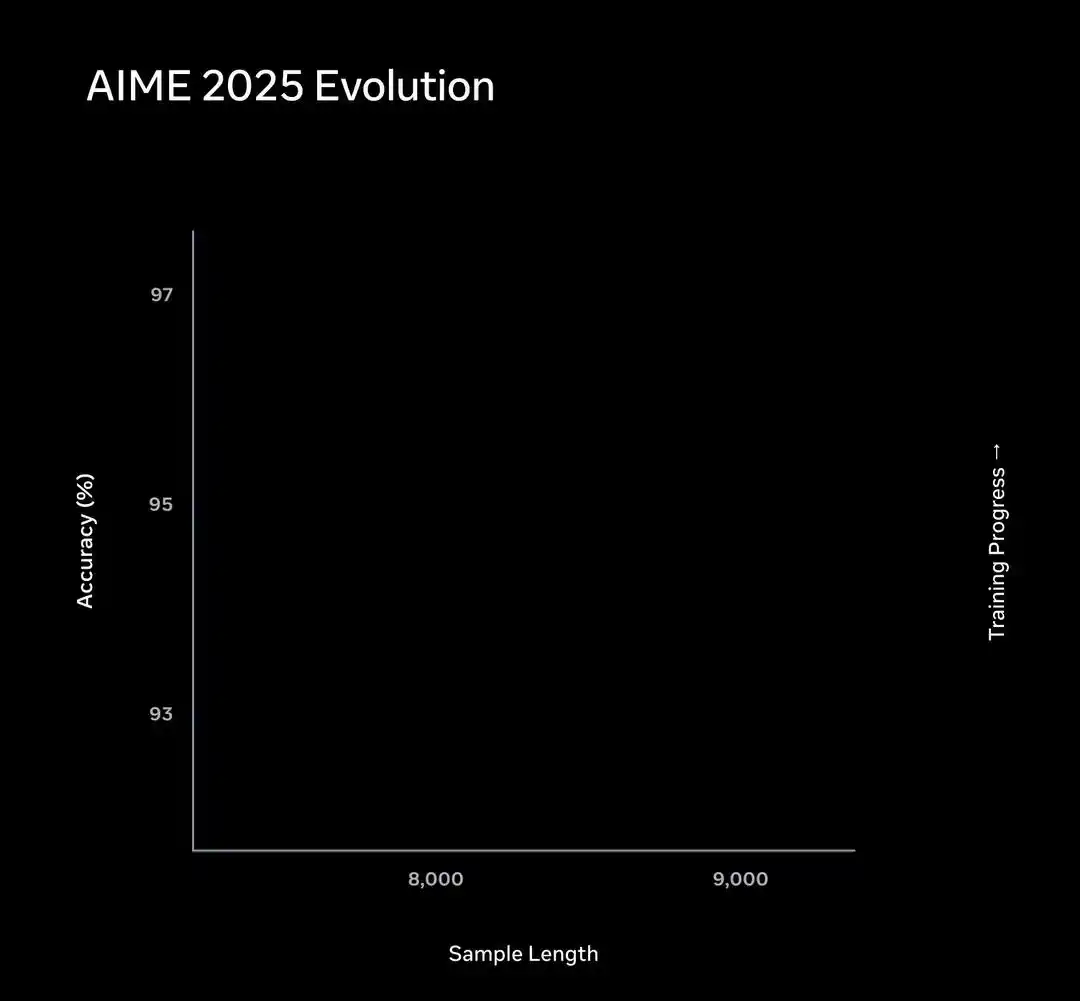

Test-time reasoning: Thought first expands, then compresses, then expands again

This is the most technical and interesting part of the entire article.

RL taught Muse Spark to "simulate in its mind first" before answering—this is test-time reasoning.

The problem is that when this service is offered to billions of users, the Token cost becomes unsustainable.

Meta's solution is two-fold.

First, add a "thinking time penalty" to RL training. You can think longer, but thinking too long will cost you points.
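
One simple way to express such a penalty is to subtract a cost from the correctness reward once the reasoning exceeds a free budget. The sketch below is a guess at the general shape only: the function name, the linear form, the 4,000-token budget, and the per-token penalty are all hypothetical, and Meta has not disclosed its actual reward.

```python
def penalized_reward(correct: bool, thinking_tokens: int,
                     budget: int = 4000,
                     penalty_per_token: float = 1e-4) -> float:
    """Correctness reward minus a linear penalty on tokens beyond a free budget.

    All constants are illustrative assumptions, not published values.
    """
    base = 1.0 if correct else 0.0
    overage = max(0, thinking_tokens - budget)
    return base - penalty_per_token * overage


print(penalized_reward(True, 3000))   # within budget: full reward
print(penalized_reward(True, 9000))   # correct but long: reward reduced
print(penalized_reward(False, 9000))  # wrong and long: negative reward
```

Under a reward like this, a correct answer reached in fewer tokens scores higher than the same answer reached slowly, which is the pressure that would drive the compression behavior described next.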

This constraint triggered an interesting "phase transition" phenomenon.

On a subset of AIME, performance evolves as follows: early in training, Muse Spark improves accuracy by thinking longer, and the curve extends to the right.

Then, the length penalty triggers "thought compression." Muse Spark learns to solve the same problem using far fewer Tokens, the curve bends back left.

After compression is complete, it once again lengthens its problem-solving process to tackle harder problems.

The entire trajectory is a three-stage evolutionary path: first it turns right, then left, then right again.

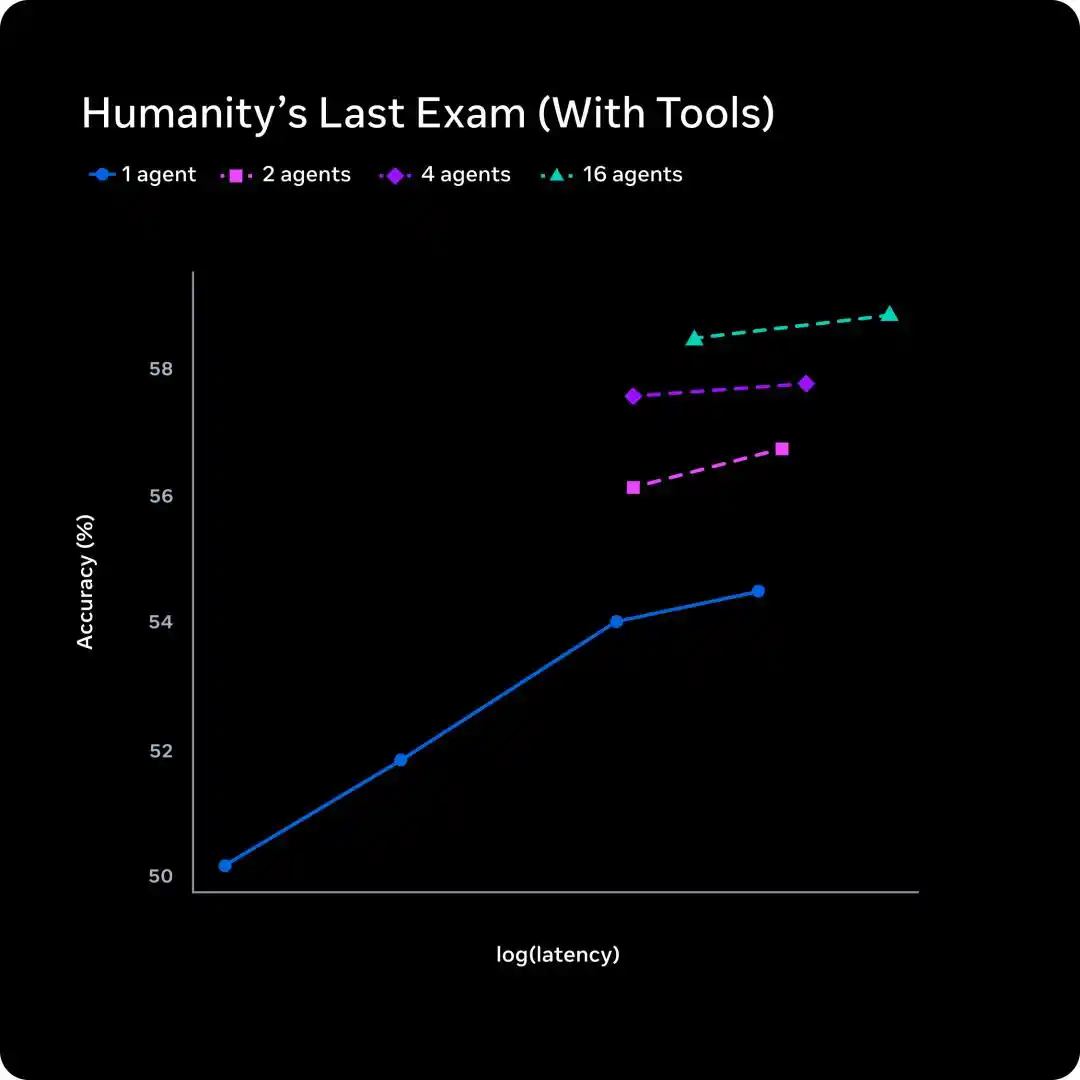

The second step is solving the latency problem.

A single agent thinking longer increases latency linearly.

Meta's approach is to scale the number of parallel agents: 1, 2, 4, 16 agents thinking simultaneously.

According to the graph, running 16 agents raises accuracy from about 54% to about 58% at a similar latency.

Traditional test-time scaling trades time for quality; multi-agent scaling trades parallelism for quality, with latency almost unchanged.
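
The trade-off can be illustrated with an idealized cost model. It assumes agents run fully in parallel with no contention, that latency is proportional to the tokens one agent generates, and that aggregation costs one short extra pass; the function names, token counts, and decoding speed are all made up for the example.

```python
def single_agent(think_tokens: int, tokens_per_sec: float) -> tuple[float, int]:
    """Latency in seconds and total tokens when one agent thinks sequentially."""
    return think_tokens / tokens_per_sec, think_tokens


def parallel_agents(n: int, think_tokens: int, tokens_per_sec: float,
                    aggregate_tokens: int = 0) -> tuple[float, int]:
    """Latency and total tokens when n agents think concurrently,
    followed by one aggregation pass."""
    latency = (think_tokens + aggregate_tokens) / tokens_per_sec
    total = n * think_tokens + aggregate_tokens
    return latency, total


# Spend roughly the same token budget two ways (illustrative numbers).
print(single_agent(16 * 8000, 100.0))            # one agent thinking 16x longer
print(parallel_agents(16, 8000, 100.0, 1000))    # 16 agents thinking in parallel
```

In this toy model both options consume about the same number of tokens, but the single long chain takes 1,280 seconds while the parallel run takes 90. Parallelism does not make the thinking cheaper; it moves the cost from waiting time to hardware.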

Silicon Valley's "Most Expensive Chinese" team hands in its first exam paper

Behind Muse Spark is Zuckerberg's complete overhaul of the Meta AI system last year.

In June 2025, Meta acquired 49% of Scale AI for $14.3 billion, bringing its founder Alexandr Wang onboard as Meta's first Chief AI Officer to form the Meta Superintelligence Lab (MSL).

Joining at the same time were former GitHub CEO Nat Friedman (co-leading product and applied research), SSI co-founder Daniel Gross, and 11 researchers poached from OpenAI, DeepMind, and Anthropic.

Now, the release of Muse Spark proves one thing: the nine-month rebuild by Meta's Superintelligence Lab has yielded results.

Pre-training efficiency increased by an order of magnitude, RL scaling curves are smooth and predictable, multimodal and medical tracks have reached the first tier.

But the gaps in code and agent are there, the contemplating mode isn't fully open yet, and the open-source timeline is still a "hope".

More immediate pressure: Anthropic released the reportedly "too powerful to release" Mythos the same week, and OpenAI's codenamed Spud is also on the way.

$14.3 billion bought an entry ticket. The real exam is yet to come.

References:

https://ai.meta.com/blog/introducing-muse-spark-msl/

https://ai.meta.com/blog/scaling-how-we-build-test-advanced-ai/

https://ai.meta.com/static-resource/muse-spark-eval-methodology

https://x.com/alexandr_wang/status/2041909376508985381

This article is from the WeChat public account "新智元" (New Wisdom Element), author: 新智元

