By | Emphasizing Next

Throughout history, so-called "all-staff letters" have always been meant for external audiences.

Last weekend, the foreign media outlet The Verge published a strategy memo that OpenAI's Chief Revenue Officer, Denise Dresser, had sent to all employees. It runs four pages, sets out five priorities, and includes a rather scathing analysis of competitors.

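This is not just an internal mobilization order; it reads more like a carefully designed release of signals to the outside world. It attacks competitors strategically, deliberately conveys a market narrative, and gives the internal team a shot of adrenaline along the way.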

01 "Capability Alone Is No Longer Enough"

The tone set at the beginning of the memo is intriguing. Dresser writes that enterprise AI is entering a "more mature phase," and what customers want is no longer just how smart the model is, but fit. Specifically, whether AI can truly integrate into their workflows, knowledge systems, and daily operations.

Over the past two years, OpenAI has swept the market with the capability advantages of the GPT series. But the era of competing on basic model capabilities is ending. As the capability gap between all major players narrows rapidly, the marginal utility of the "our model is the strongest" card is diminishing.

The procurement logic of enterprise customers is returning to the classic path of B2B software. They care more about "whether this AI can truly run, run stably, and run long-term within my organization."

This is a sign of market maturity and a strategic shift in focus that OpenAI must complete.

02 Five Priorities

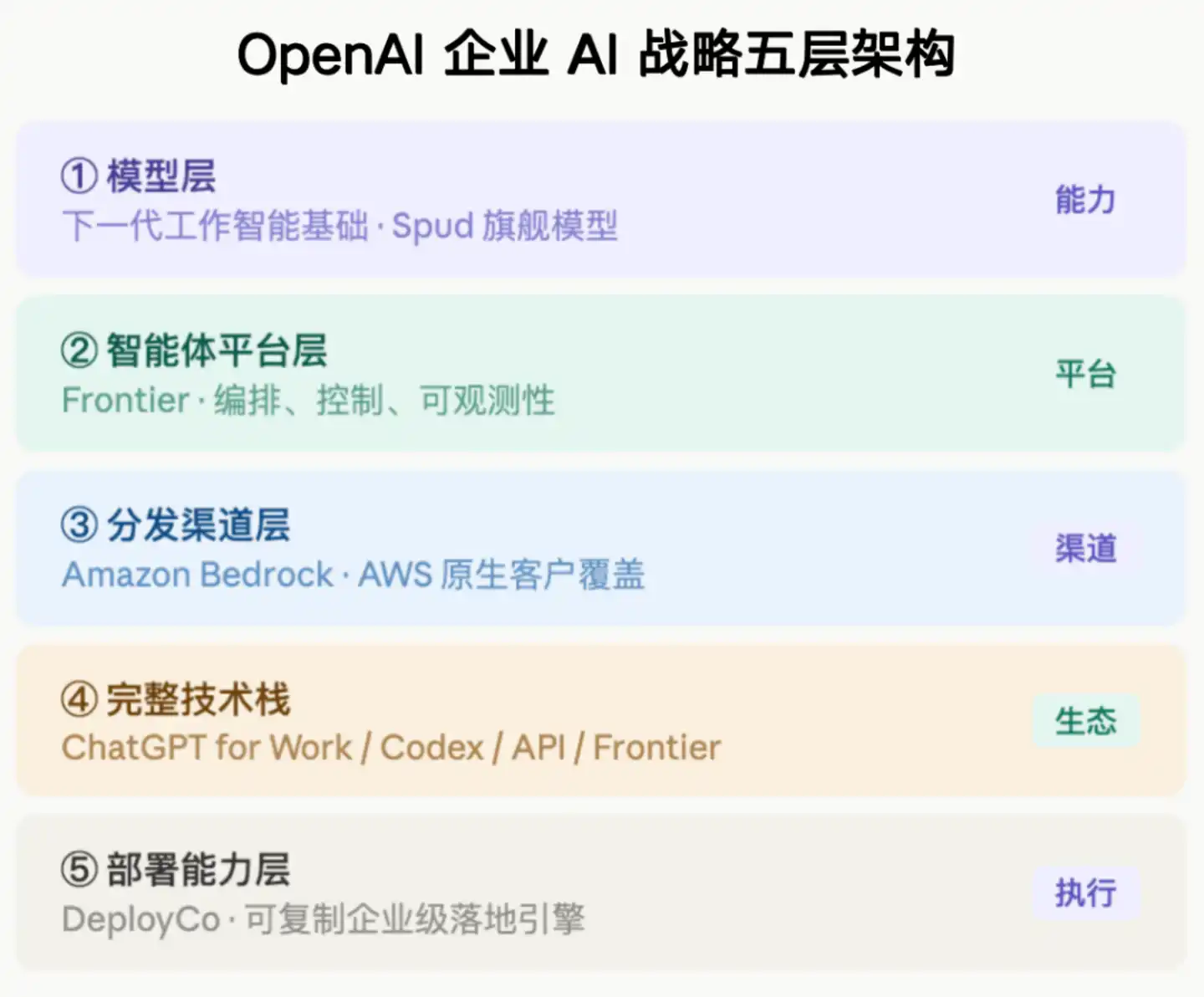

The memo lists five Q2 priorities: win the model layer, win the agent platform, expand the market with Amazon, sell the full technology stack, and master deployment rights.

At first glance, these are five lines, but they point to the same goal: transitioning from the "most usable AI" to the "hardest to replace AI".

This is a typical platform transformation logic. Single-point products compete on performance; platforms compete on ecosystem and switching costs. One sentence in the memo is quite blunt: As customers connect more workflows to this system, "OpenAI will become harder to replace and will be more at the core of work."

OpenAI wants to be the operating system for enterprise AI, much as Microsoft made Windows the underlying layer of enterprise IT infrastructure. The word Dresser repeatedly emphasizes in the text is "platform." She says customers want a platform, not point solutions. ChatGPT for Work is the entry point for knowledge work, Codex covers the developer side, the API is the integration engine, Frontier is the agent orchestration layer, and the Amazon runtime is the production-grade stateful runtime. Five products, five entry points: in theory, a customer who enters through any one door will eventually be guided into the complete ecosystem.

The problem is that the story of a "platform company" sounds good, but few have truly succeeded at it historically. Platforms require network effects, switching costs, and ecosystem lock-in, none of which a memo alone can build.

The memo mentions that "multi-year, multi-product, nine-figure deals are increasing." Nine figures means at least one hundred million dollars. The growth in such deals indicates that enterprise customers' bets on OpenAI are no longer at the "try it out" level but involve real strategic commitment.

03 The Passage Attacking Anthropic

There is a passage in the memo that directly names Anthropic, and the wording is quite strong. It says their narrative is built on "fear, restrictions, and the notion that a select few should control AI." It says their strategic mistakes in computing power are already showing up in the product: customers are experiencing rate limiting, low availability, and unstable performance. The weightiest claim is that the $30 billion in annualized revenue Anthropic cites publicly is overstated by $8 billion, because it books its revenue sharing with Amazon and Google on a "gross" rather than a "net" basis.

This practice of directly naming competitors is indeed rare in Chinese corporate culture. When executives of domestic giants speak publicly, they usually use roundabout phrasing such as "some players in the industry" or "certain products." Naming a rival and criticizing it with specific figures would be considered too aggressive in the domestic context and could easily trigger public backlash.

But in Silicon Valley this is normal practice, and there are several layers of logic behind it.

First, the need for investor narratives. A company valued at tens of billions of dollars will be questioned if it cannot clearly explain why it is a better bet than its competitors. Pointing out specific weaknesses of competitors is an effective way to signal to the outside world that "we have the confidence to back this up."

Second, the need for internal mobilization. The audience of the memo is all employees. Specific comparisons like "their computing power is insufficient, customers have felt the rate limiting" are far more effective than preaching grand principles, because they give the sales team something concrete to say in the next client meeting.

Third, this memo was likely expected to leak. Dresser must have known that in a company of several hundred people, a four-page strategic document would eventually appear in the media. "External messaging" itself might have been the purpose: to let the market know that OpenAI believes Anthropic's $30 billion figure is inflated, that it has evidence, and that verification is welcome.

That financial accusation deserves separate mention. The core of the argument that Anthropic's annualized revenue is overstated by $8 billion is a dispute over accounting standards: should revenue sharing be recorded on a gross or a net basis? There is no absolute right or wrong on this issue, but if Anthropic does use gross numbers in its external messaging, there is a misleading component. Such disputes are often amplified before a company goes public; if Anthropic later pursues an IPO, investor due diligence will scrutinize this area carefully.
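The gross-versus-net arithmetic behind that accusation can be sketched in a few lines. This is only an illustration using the two figures the memo alleges ($30 billion claimed, $8 billion overstated); the function name, variable names, and the assumption that the entire $8 billion gap is the partners' share are hypothetical, and none of these numbers are audited.

```python
# Illustrative arithmetic only: the figures are the memo's allegations as
# reported in this article, not audited numbers.

def net_basis_revenue(gross_bn: float, partner_share_bn: float) -> float:
    """Return revenue on a net basis, in billions of dollars.

    gross_bn: revenue booked in full, including amounts passed to partners.
    partner_share_bn: the portion that belongs to the revenue-sharing partners.
    """
    if not 0 <= partner_share_bn <= gross_bn:
        raise ValueError("partner share must be between 0 and gross revenue")
    return gross_bn - partner_share_bn


claimed_gross_bn = 30.0         # annualized revenue figure cited in the memo
alleged_overstatement_bn = 8.0  # assumed here to equal the partners' share

implied_net_bn = net_basis_revenue(claimed_gross_bn, alleged_overstatement_bn)
inflation_vs_net = alleged_overstatement_bn / implied_net_bn

print(f"Implied net-basis revenue: ${implied_net_bn:.0f}B")
print(f"Gross figure exceeds net by {inflation_vs_net:.0%}")
```

Under those assumptions the net-basis figure would be $22 billion, so the gross number would sit roughly 36% above it. Whether gross or net is the appropriate presentation is exactly the accounting question the memo raises.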

04 Insights for the Chinese Market

The value of this memo for China's AI industry lies in its clear depiction of what the next battlefield for enterprise AI competition looks like.

The first dimension: The gap in competition stages.

Competition among domestic large models is still mostly at the stage of "chasing leaderboards, comparing parameters, and fighting price wars." This document shows what the next stage looks like: a move from competing on capability to competing on deployment, platform, and ecosystem. Whoever can help enterprise customers reach replicable, scaled deployment the fastest, and whoever can embed its products most deeply into customers' actual workflows, will truly win the enterprise market.

Dresser specifically mentioned DeployCo in the memo, a deployment engine dedicated to helping enterprises put AI into production. This is almost a blank slate in China. Many domestic AI companies sell a model API or a set of platforms, but no company has systematically taken on the task of "helping customers truly use it and make it work." Many enterprise AI projects stall at the POC (Proof of Concept) stage and cannot scale, essentially because of this gap in deployment capability.

Domestically, the closest to this logic might be Alibaba's DingTalk + Tongyi combination (embedding directly into enterprise workflows through DingTalk), and Huawei's overall IT solution capabilities on the government and enterprise side. But overall, the "enterprise deployment capability" and "platform ecosystem construction" of domestic AI products still have considerable room for improvement.

The second dimension: The particularity of data sovereignty and trust.

A word the memo emphasizes repeatedly is "trust." Enterprises need systems that are trustworthy, reliable, and sustainable to build upon. A large number of domestic enterprises, especially in fields like finance, government affairs, and healthcare, are far more sensitive to data sovereignty than their counterparts in the European and American markets. For them, transmitting data to third-party cloud services is itself a policy red line.

The model established through OpenAI's cooperation with Amazon, "running within the customer's own AWS environment, within the existing governance framework," responds precisely to this concern. It offers an idea for domestic AI vendors: differentiating in the direction of "true private deployment, auditable data pipelines, and compliant industry solutions" might have more commercial value than "my model is smarter than yours." In highly regulated industries, the ability to deploy compliantly is itself a barrier to entry.

05 Finally: A Signal Worth Heeding

There is a sentence in the memo: "The opportunity ahead is enormous, and our biggest constraint right now is not demand, but capacity."

This situation is almost the reverse in China. The dilemma many domestic AI companies face is "having computing power, models, and technology, but being unable to find enterprise customers who are actually willing to pay." The chasm between "customers are willing to try" and "customers are willing to make multi-year, large-scale payments" is the real gulf that the domestic enterprise AI market needs to cross.

When OpenAI says "the biggest constraint is capacity," domestic peers might need to ask themselves: What is our biggest constraint? Is it technology? The product? Sales capability? Or the confidence and willingness of the entire market to invest in AI commercialization?