The Allen Institute for Artificial Intelligence (AI2) recently released MolmoWeb, a fully open-source web agent. Unlike traditional agents that rely on a webpage's underlying code (the DOM), MolmoWeb makes decisions solely by reading screenshots, marking a significant step forward in "vision-driven" web navigation technology.

Core Technology: "Seeing" Web Pages Like a Human

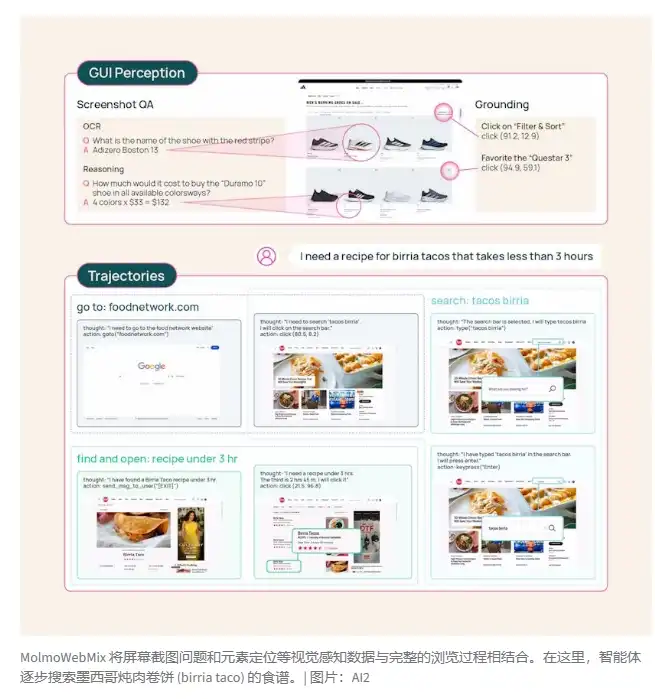

MolmoWeb's operating logic is intuitive: it captures a screenshot of the current browser window, decides the next action (such as clicking, scrolling, or paging) through visual analysis, executes that action, and then repeats the cycle. This "what you see is what you get" model makes it more robust than traditional agents, because the visual layout of a webpage is generally more stable than its underlying code. It also makes the decision-making process transparent and explainable, since the agent acts on the same view a human user sees.
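The screenshot-decide-act cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not MolmoWeb's actual code: `FakeBrowser`, `Action`, `run_agent`, and `scripted_policy` are hypothetical names, and the scripted policy stands in for the vision model that would normally read the screenshot.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    kind: str  # "click", "scroll", or "done" (hypothetical action set)
    x: int = 0
    y: int = 0


class FakeBrowser:
    """Stand-in for a real browser driver; it only records requested actions."""

    def __init__(self) -> None:
        self.log: List[Action] = []

    def screenshot(self) -> bytes:
        # A real driver would return image bytes of the current viewport.
        return f"frame-{len(self.log)}".encode()

    def execute(self, action: Action) -> None:
        self.log.append(action)


def run_agent(
    browser: FakeBrowser,
    policy: Callable[[str, bytes], Action],
    task: str,
    max_steps: int = 10,
) -> int:
    """Repeat screenshot -> decide -> act until the policy reports 'done'.

    Returns the number of actions executed.
    """
    for step in range(max_steps):
        image = browser.screenshot()   # observe pixels only, never the DOM
        action = policy(task, image)   # decide the next action from the image
        if action.kind == "done":
            return step
        browser.execute(action)        # act, then loop back to observe again
    return max_steps


def scripted_policy(task: str, image: bytes) -> Action:
    # Hypothetical placeholder: a real policy would query the vision model.
    if image == b"frame-0":
        return Action("scroll")
    if image == b"frame-1":
        return Action("click", x=120, y=340)
    return Action("done")


browser = FakeBrowser()
steps = run_agent(browser, scripted_policy, "find the pricing page")
print(steps, [a.kind for a in browser.log])
```

Because the policy only ever receives the task text and an image, any model that maps a screenshot to an action could be swapped in without touching the loop.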

Performance Leap: Small Model Outperforms Giants

Despite coming in only 4B- and 8B-parameter versions, MolmoWeb delivers "small but mighty" performance:

Topping the Charts: In the WebVoyager test, the 8B version scored an impressive 78.2%, not only ranking among the top open-source models but also approaching the performance of OpenAI's proprietary model o3 (79.3%).

Huge Potential: Research found that by running tasks multiple times and selecting the optimal result, its success rate could further jump to 94.7%.

Precise Localization: On UI element localization benchmarks, it even surpassed Anthropic's Claude 3.7.
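The "run several times and keep the best result" idea behind the 94.7% figure can be sketched as a best-of-k loop. The article does not say how many runs were used or how the best result was chosen, so everything here is an assumption: `best_of_k`, `attempt`, and `judge` are hypothetical names, and the toy scorer stands in for whatever selection method was actually used.

```python
from typing import Callable, Optional, Tuple, TypeVar

T = TypeVar("T")


def best_of_k(
    attempt: Callable[[int], T],
    judge: Callable[[T], float],
    k: int,
) -> Tuple[T, float]:
    """Run `attempt` k times and return the highest-scoring result and its score."""
    if k < 1:
        raise ValueError("k must be at least 1")
    best: Optional[Tuple[T, float]] = None
    for seed in range(k):
        result = attempt(seed)       # one independent run of the task
        score = judge(result)        # hypothetical scorer for that run
        if best is None or score > best[1]:
            best = (result, score)
    assert best is not None
    return best


# Toy stand-ins: only the run with seed 2 "succeeds".
def toy_attempt(seed: int) -> str:
    return "ok" if seed == 2 else "fail"


def toy_judge(result: str) -> float:
    return 1.0 if result == "ok" else 0.0


print(best_of_k(toy_attempt, toy_judge, k=2))
print(best_of_k(toy_attempt, toy_judge, k=4))
```

The sketch shows why more attempts can raise the success rate: a task counts as solved if any one of the k runs is judged successful, at the cost of k times the compute.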

Data Support: The Largest Open Dataset to Date

AI2 has not only open-sourced the model weights but also contributed a massive dataset named MolmoWebMix. This dataset contains:

36,000 real browsing tasks completed by human volunteers.

Over 2.2 million screenshot-question-answer pairs.

Automated synthetic data verified by GPT-4o. Experiments show that synthetic data is even better than human trajectories at guiding the agent to find the "optimal path".

Open-Source Spirit and Future Challenges

Currently, MolmoWeb is fully available under the Apache 2.0 license on Hugging Face and GitHub. Although it still faces challenges in handling complex instructions, login authentication, and legal compliance (such as terms of service), AI2 firmly believes that only through complete transparency and community collaboration can we truly counter the data monopoly of large tech companies.