Author: Thought Circle

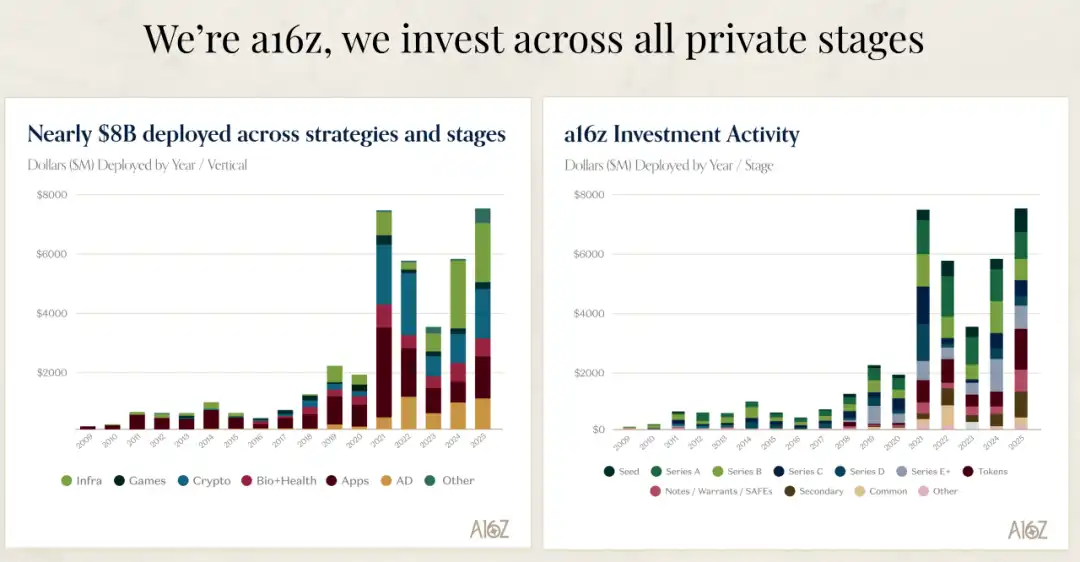

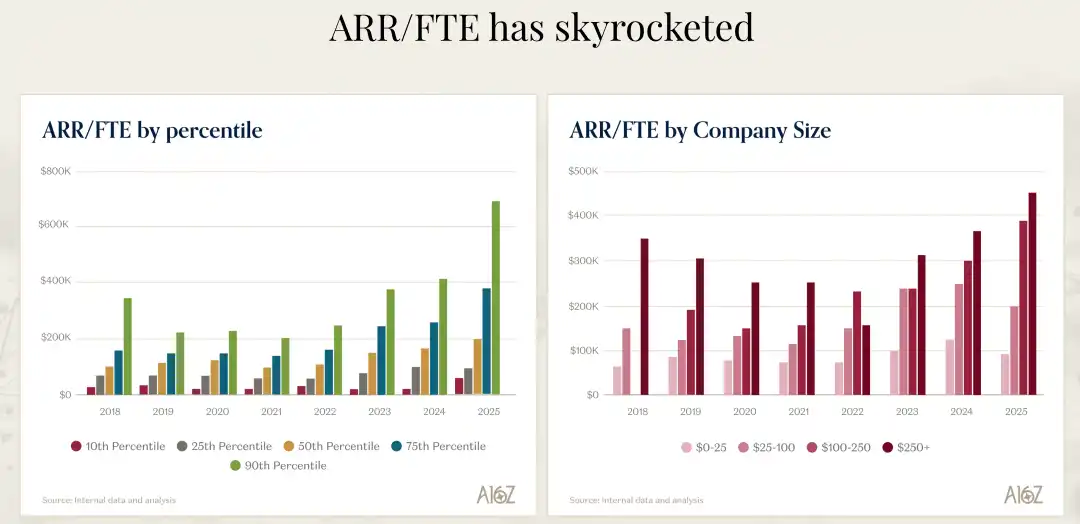

Have you ever thought that the software industry might be undergoing a transformation more dramatic than the shift from command line to graphical interface? Recently, I listened to a16z's David George's in-depth analysis of the AI market, and I was stunned by one set of data: the fastest-growing AI companies are expanding at an annual growth rate of 693%, while their spending on sales and marketing is significantly lower than that of traditional software companies. This is not an isolated case; the entire group of AI companies is growing more than 2.5 times faster than non-AI companies. What I find even more incredible is that these companies' ARR per FTE (Annual Recurring Revenue per Full-Time Employee) reaches $500,000 to $1 million, while the standard for the previous generation of software companies was $400,000.

What does this mean? It means we are witnessing the birth of a completely new business model, an era of creating greater value with fewer people and lower costs.

David George mentioned in his talk that this is not a minor adjustment, but a complete paradigm shift. Core concepts such as version control, templates, documentation, and even the concept of a user are being redefined by AI agent-driven workflows. I firmly believe that within the next five years, companies that cannot adapt to this change will be completely eliminated.

The Astonishing Truth About AI Company Growth

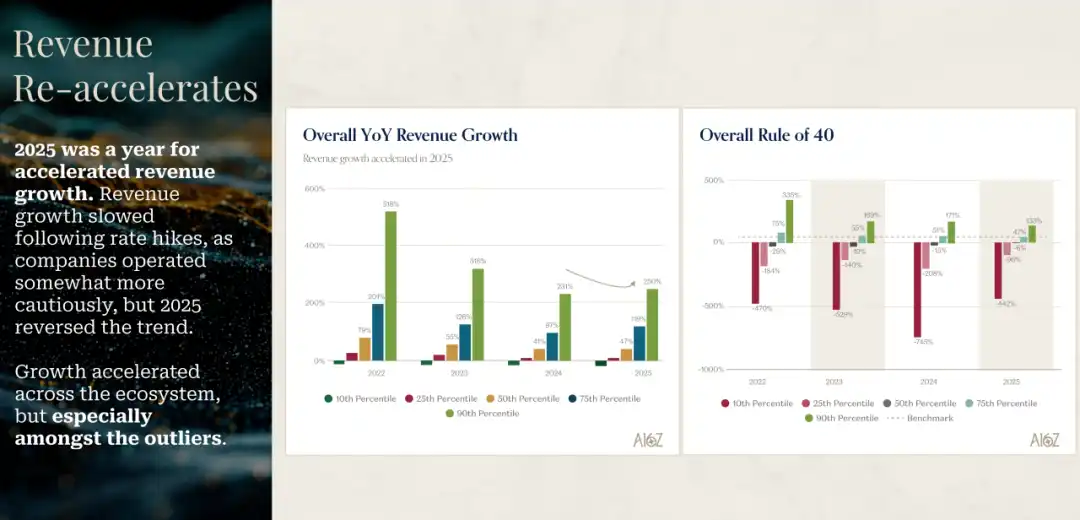

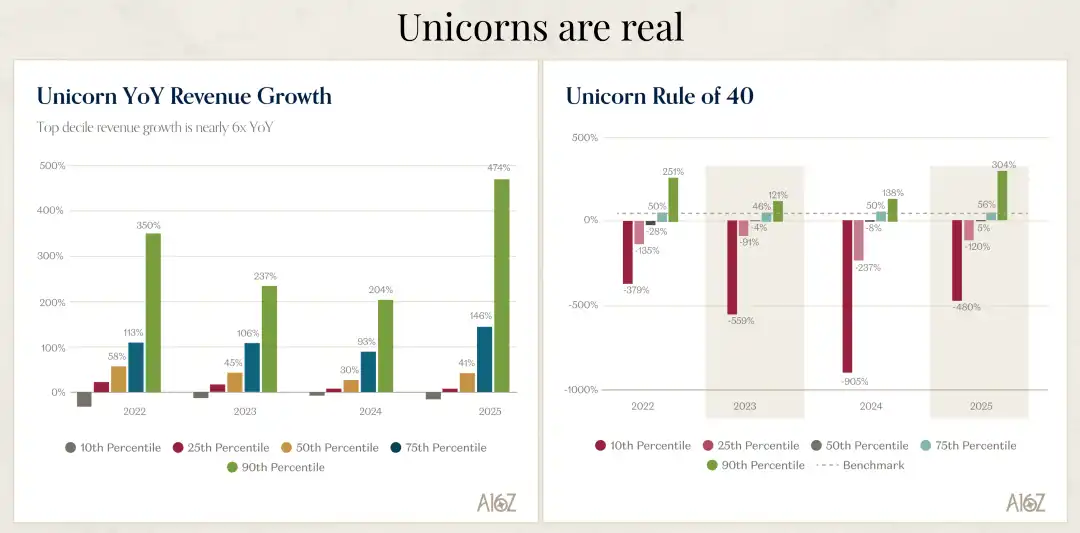

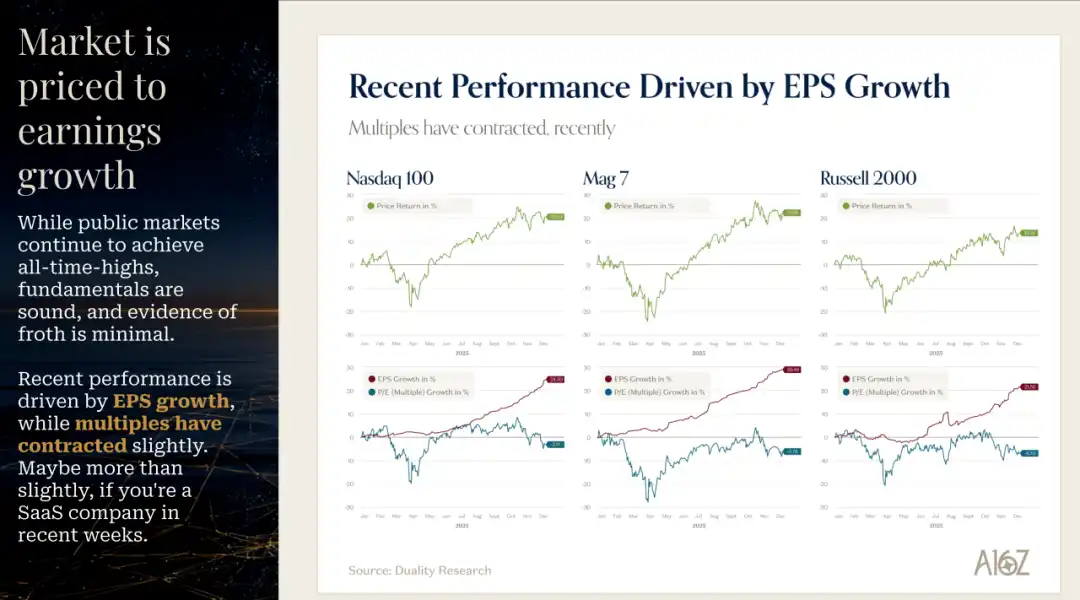

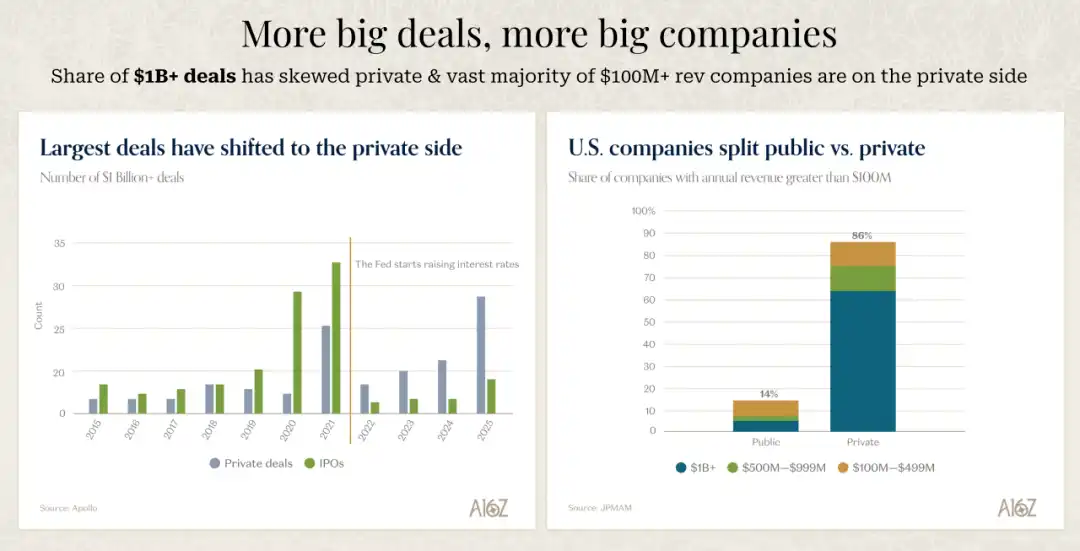

The data David George presented made me rethink what true growth really is. 2025 has been a year of accelerated growth for AI companies. After the growth deceleration in 2022, 2023, and 2024 due to rising interest rates and tech sector contraction, 2025 completely reversed this trend. Most astonishingly, among companies ranked by different tiers, the true outlier companies are growing at an almost unbelievable pace.

My first reaction upon seeing this data was: are these numbers correct? The top-performing group of AI companies is growing at 693% year-over-year. David said their team also triple-checked before believing this number. But it completely aligns with the actual situations and cases they see from their portfolio companies. This is not an isolated phenomenon, but a systemic change happening across the entire AI field.

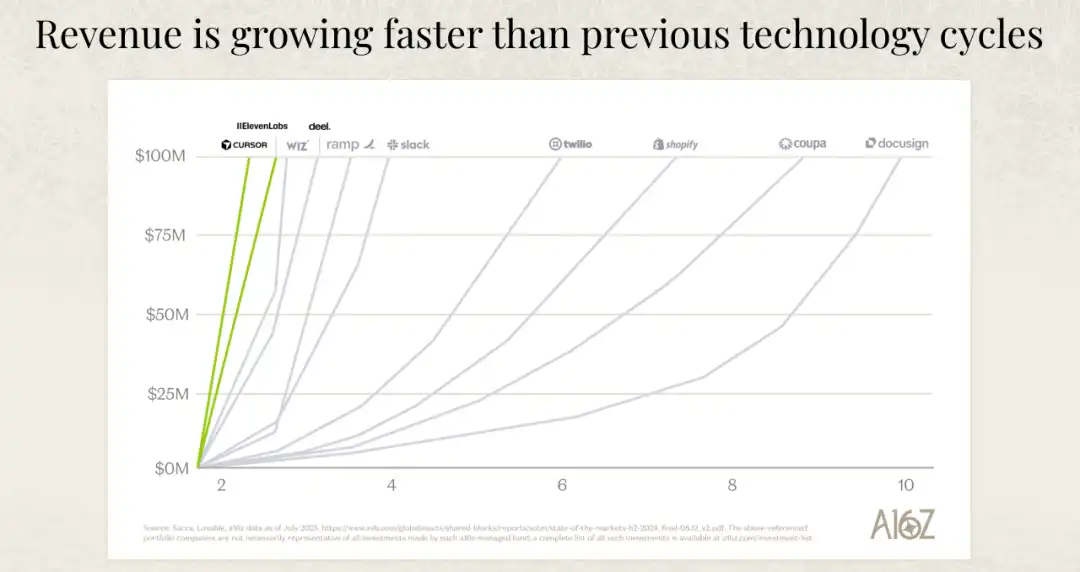

More important is the quality of growth. Traditional software companies usually take a long time to reach $100 million in annual revenue, while the fastest-growing AI companies reach this milestone much more quickly. David emphasized a very important point: this is not because they are spending more on sales and marketing. On the contrary, the fastest-growing AI companies actually spend less on sales and marketing than traditional SaaS (Software as a Service) companies. They are growing faster while spending less. The reason is that end-customer demand is extremely strong and the products themselves are highly compelling.

I think this reveals a profound shift in business logic. In the past era of software, growth often relied on strong sales teams and huge marketing budgets. You needed to educate the market, persuade customers, and overcome adoption barriers. But in the AI era, truly excellent products can speak for themselves. When a product can immediately create value for users, allowing them to feel an efficiency boost the first time they use it, market demand automatically arises. This product-driven growth model is much healthier and more sustainable than the traditional sales-driven model.

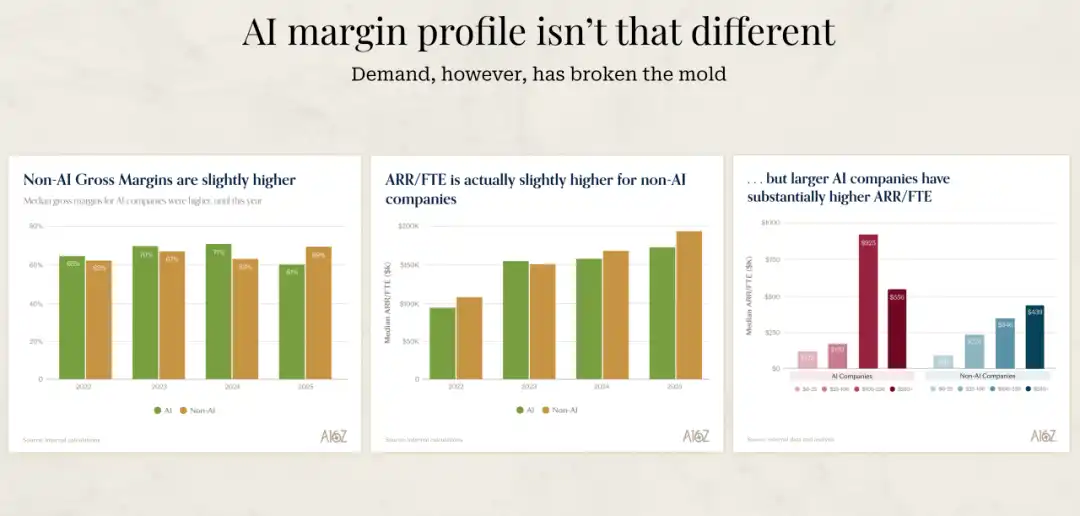

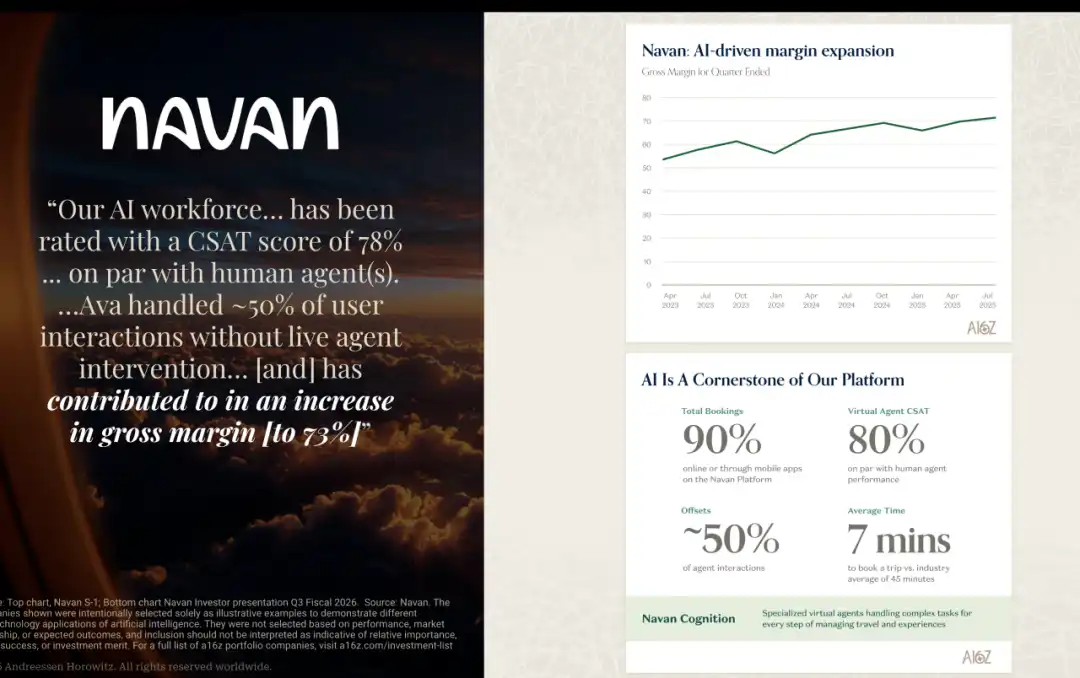

Another set of data David showed is also interesting. The gross margins of AI companies are actually slightly lower than those of traditional software companies. His team has a distinctive take on this: for AI companies, a low gross margin is something of a badge of honor. If the low gross margin is caused by high inference costs, it indicates two things: first, people are really using the AI features; second, these inference costs will decrease over time. So if they see an AI company with particularly high gross margins, they might be a bit suspicious, as it could mean the AI features are not what customers are actually buying or using.

Why AI Companies Can Be More Efficient

I've been thinking about a question: why can AI companies, which are software companies too, generate more revenue with fewer people? In his talk David focused on ARR per FTE, the Annual Recurring Revenue per Full-Time Employee. This metric is a comprehensive indicator of a company's overall operational efficiency, capturing not only sales and marketing efficiency but also management and R&D costs.

The ARR per FTE of the best AI companies reaches $500,000 to $1 million, while the standard for the previous generation of software companies was about $400,000. This might seem like just a numerical difference, but it reflects completely different business models and operational methods. David believes the main reason for this difference is that the market demand for these products is very strong, so they need fewer resources to bring the product to market.
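As a quick illustration of how the metric is computed, here is a minimal Python sketch. The two companies and their figures are hypothetical, chosen only so the results land in the ranges quoted above; they are not from David's data.

```python
def arr_per_fte(arr_usd: float, full_time_employees: int) -> float:
    """Annual Recurring Revenue divided by full-time headcount."""
    if full_time_employees <= 0:
        raise ValueError("headcount must be positive")
    return arr_usd / full_time_employees


# Hypothetical AI company: $60M ARR with 80 employees.
ai_company = arr_per_fte(60_000_000, 80)

# Hypothetical previous-generation software company: $200M ARR with 500 employees.
legacy_company = arr_per_fte(200_000_000, 500)

print(f"AI company:     ${ai_company:,.0f} per FTE")
print(f"Legacy company: ${legacy_company:,.0f} per FTE")
```

Because the denominator is total headcount, the metric folds in sales, marketing, management, and R&D staffing at once, which is why it works as an overall efficiency indicator.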

But I think this is only the surface reason. The deeper reason is that AI companies are forced from the start to think differently about how to operate. They have no choice but to use AI to redesign their internal processes, product development methods, and customer support systems. This forced innovation in turn allows them to find a more efficient business model.

David shared a particularly vivid example. He said he recently chatted with a founder of a company who was dissatisfied with the progress of one of their products. So, he directly assigned two engineers who were deeply involved in AI to rebuild this product from scratch using the latest programming tools like Claude Code and Cursor, and gave them an unlimited budget for programming tools. The result? The founder said he believed the progress was 10 to 20 times faster than before. And the bills generated by these tools were so high that it made him start rethinking what the entire organization should look like.

What impressed me about this example is that this is not an incremental improvement, but an order-of-magnitude leap. What does a 10 to 20 times speed increase mean? It means a project that originally took a year might now be completed in roughly a month or less. This speed difference will have a decisive impact in competition. This founder's conclusion was: I need the entire product and engineering team to work in this way, and I think this will happen within the next 12 months. But this also means the team's organizational structure will undergo fundamental changes. Where are the boundaries between product, engineering, and design? These questions all need to be redefined.

I believe December 2024 was a turning point in the field of programming. David feels the same. He said it felt like at that point in time, programming tools made a qualitative leap. In the next 12 months, this change will either truly take root in companies, or those that don't adopt it will be much slower than their peers. This is not alarmist; it's reality.

Adapt to AI or Be Eliminated

David mentioned a very stark view in his talk: For companies founded before the AI era, it's either adapt to the AI era or die. This sounds extreme, but I completely agree. And this adaptation needs to happen simultaneously on two fronts: the front end and the back end.

On the front end, companies need to think about how to natively integrate AI into the product, not just add a chatbot to existing workflows. This requires reimagining what the product can do with AI and being willing to radically disrupt themselves. David shared several interesting examples. One is a software company from the pre-AI era whose CEO has been completely converted to AI, saying: We are going to become an AI product. We want the product to be able to say, your employees are now your AI agents. How many agents do you have? These are the topics he discusses now.

There is an even more extreme example. A CEO said, for every task we need to complete now, I ask one question: Can I do this with electricity, or must I do it with blood? This is an extreme mindset shift. Using electricity refers to using AI and automation; using blood refers to using human labor. This shift in thinking is very profound; it forces you to re-examine every process and every task in the company.

On the back end, companies need to fully adopt the latest programming models and tools. All developers should use the latest programming assistance tools, and every functional department should use the latest tools. So far, adoption rates are highest in the programming field, which is also where the biggest leaps are seen. But this change is spreading to other functional areas.

David mentioned that for those pre-AI companies, the good news is that the evolution of the business model is still in its early stages. The most disruptive scenario is when both technology/product and business model shift. Right now, technology and product are indeed undergoing dramatic changes, but the business model shift hasn't fully unfolded yet.

He sees the business model as a spectrum. On the far left is the license-and-maintenance model of the pre-SaaS era. Then come SaaS and subscription models, usually priced per seat, which were a major innovation and very disruptive. You can look at what happened to Adobe during this transition. Then comes the consumption-based model, which is usage-based pricing, the way cloud services charge. Many businesses have moved from seat-based to consumption-based pricing.

The next stage will be the outcome-based model: you charge when a task is completed, ideally only when it is completed successfully. Currently, the only area where this might be truly feasible is customer support and customer success, because you can objectively measure problem resolution. But as model capabilities improve, if other functions beyond customer support can also measure such outcomes, that will be hugely disruptive to existing companies.
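To make the spectrum concrete, the sketch below prices the same hypothetical customer-support workload three ways. All function names, volumes, and prices are illustrative assumptions, not figures from the talk.

```python
def seat_based(seats: int, price_per_seat: float) -> float:
    """SaaS-style pricing: pay per user seat, regardless of usage."""
    return seats * price_per_seat


def consumption_based(units_used: int, price_per_unit: float) -> float:
    """Usage-based pricing: pay for every unit consumed (here, tickets handled)."""
    return units_used * price_per_unit


def outcome_based(tasks_resolved: int, price_per_resolution: float) -> float:
    """Outcome-based pricing: pay only for tasks that were successfully resolved."""
    return tasks_resolved * price_per_resolution


# Hypothetical month for one support team.
seats = 50
tickets_handled = 20_000
tickets_resolved = 12_000  # only these count under outcome pricing

monthly_bill = {
    "seat": seat_based(seats, 100.0),
    "consumption": consumption_based(tickets_handled, 0.25),
    "outcome": outcome_based(tickets_resolved, 0.5),
}

for model, amount in monthly_bill.items():
    print(f"{model:12s} ${amount:,.2f}")
```

The point of the comparison is what each model measures: seats track headcount, consumption tracks activity, and outcome tracks results. Outcome pricing only works where "resolved" can be counted objectively, which is why support is the first candidate.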

I find this evolution path very insightful. From license to subscription, from subscription to consumption, from consumption to outcome—each transition is a disruption of the previous generation's business model. And we are now on the eve of the transition from consumption to outcome. Once AI agents can reliably complete tasks and be objectively evaluated, outcome-based pricing will become mainstream. By then, companies still charging by seat will find themselves completely uncompetitive.

The AI Adoption Dilemma of Large Companies

Regarding Fortune 500 companies adopting AI, David's observations are very interesting. He said there is a huge gap between what he hears from the CEOs of these large companies and what is actually happening. The CEOs are all saying: We must adapt, we are desperate to understand what AI tools we need, we are ready to change, our business will roll out these tools across the board, we are going to be AI companies.

But the reality is completely different. The biggest disconnect between this mindset and actual business change is that change management is too difficult. Even just getting people to use AI assistants to help them do their jobs better is hard enough. Actually changing business processes and how the business is managed is harder still.

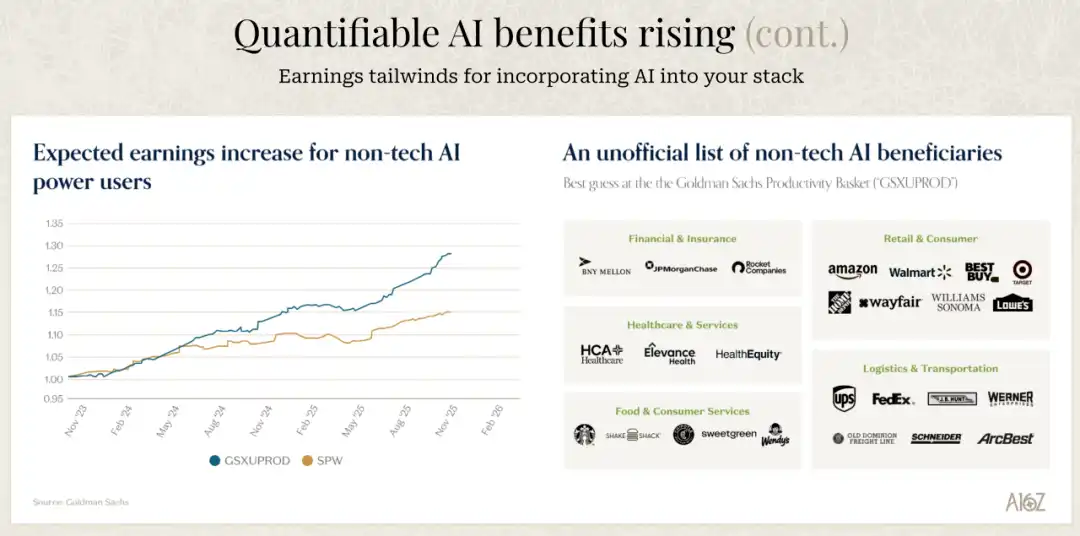

David said he isn't surprised by market chatter that things are moving slower than expected. But for the best companies that are truly fully embracing AI and know what to do, there has been a huge business impact. He gave a few specific examples: Chime said they reduced support costs by 60%; Rocket Mortgage said they saved 1.1 million hours in underwriting, a 6x year-over-year increase, equivalent to $40 million in annual operational savings.

I think this reveals a key problem: the gap between intention and capability. Large company CEOs have the intention to embrace AI, but whether they have the ability to implement it is another matter. The difficulty of change management is often underestimated. This is not just about buying some tools or hiring some AI engineers; it requires fundamentally changing the company's processes, culture, and organizational structure.

And many large companies first need to adjust their own business to make it ready for AI. Using a chatbot is one thing, and the productivity gain from it is probably modest. But if you have to completely overhaul your systems, information, and backend to accommodate AI, a lot of that work may still be underway and accumulating, and we haven't seen the results yet.

David predicts the next 12 months will be very interesting. He thinks we will see more case studies, but there will be companies that figure it out and companies that don't. Those that figure it out will gain massive productivity advantages, and those that don't will be at a huge disadvantage. I believe this divergence will come faster and more sharply than people imagine.

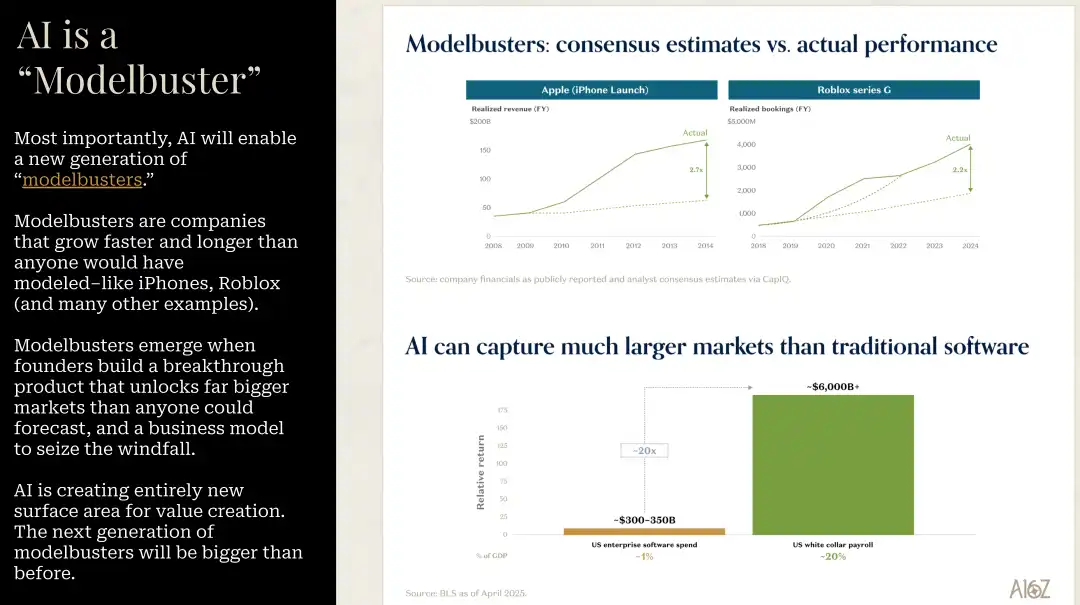

Model Busters and the Future of the Market

David mentioned a concept I find particularly insightful: Model Busters. These are companies whose growth speed and duration far exceed anything anyone could predict in any scenario. The iPhone is the classic case of this concept. If you look at the consensus forecast before the iPhone launch and the actual performance 4-5 years later, the consensus forecast was off by 3x. And this is the most watched company in the world.

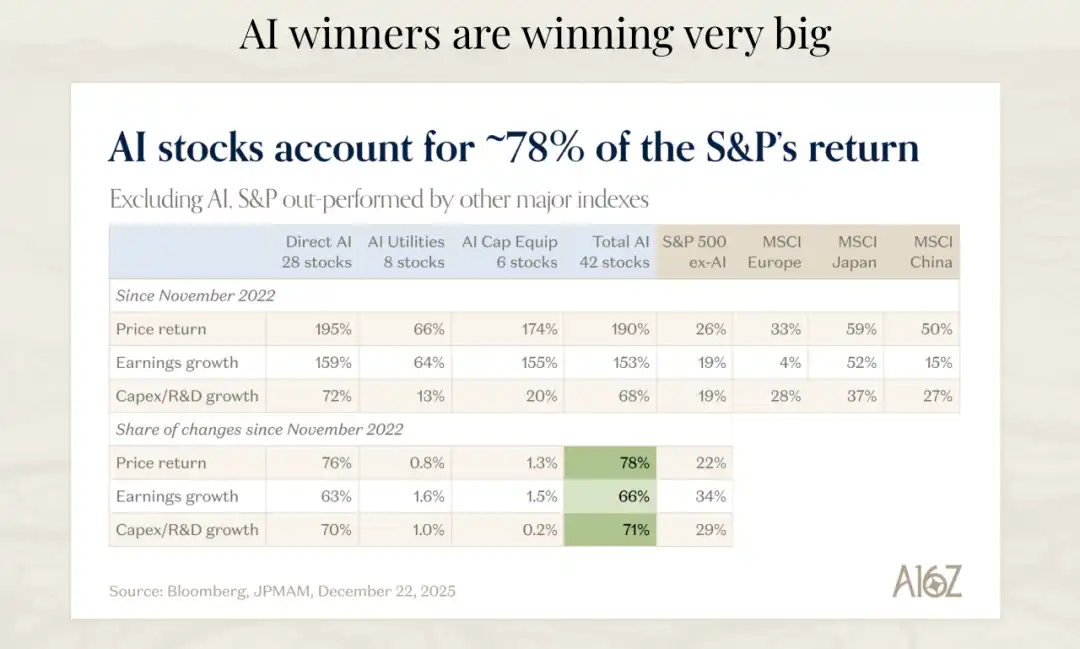

David believes AI will be the biggest Model Buster he has seen in his career. The performance of many companies in the AI space will significantly exceed any expectations in any spreadsheet. I strongly agree with this view. When a technology platform brings not incremental improvement but order-of-magnitude leaps, traditional prediction models fail.

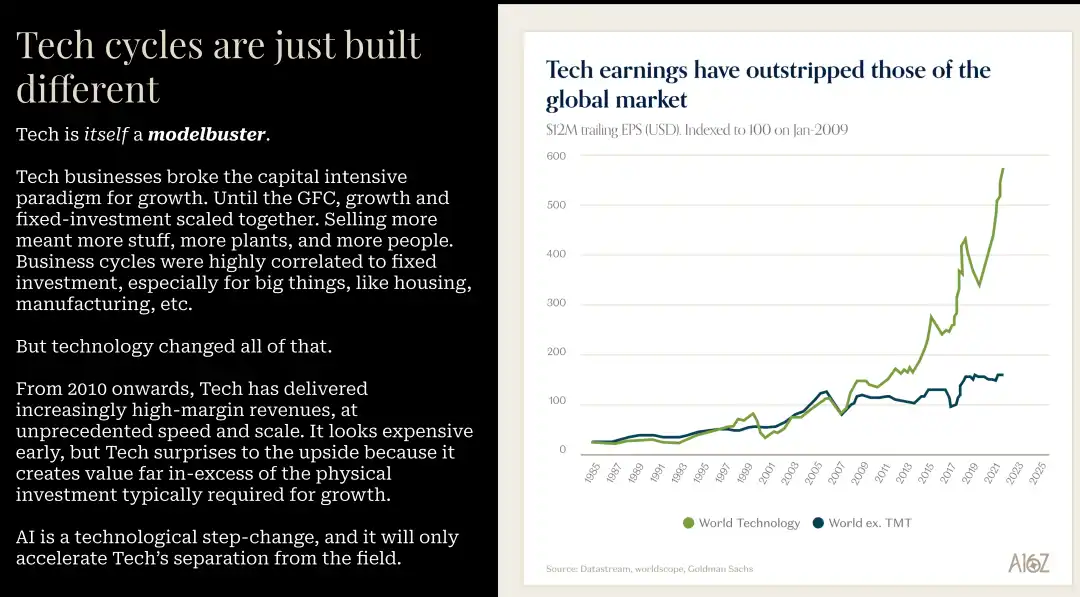

He mentioned that technology itself is a Model Buster. Since 2010, tech has delivered high-margin revenue at unprecedented speed and scale. It always looks expensive early on, but it repeatedly outperforms expectations, creating value far in excess of the capital required. He sees no reason why this time should be any different.

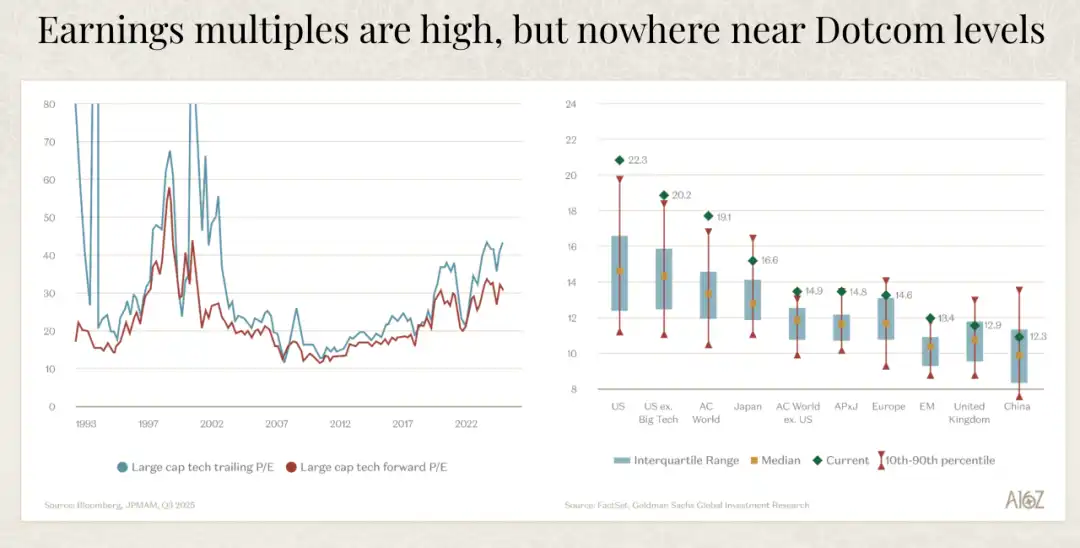

In terms of capital expenditure (capex), the data David showed is also interesting. Compared to the dot-com bubble era, current capex is actually supported by cash flow, and capex as a percentage of revenue is much lower. The hyperscale cloud providers bear the brunt of the capex burden, and these are some of the best businesses ever.

David specifically mentioned that, speaking for their portfolio companies, they very much welcome this capex. He said: Building as much capacity as possible, providing as much supply for training and inference as possible, is a very good thing. And the ones bearing most of the burden are some of the best businesses ever.

A phenomenon they are starting to watch is debt entering the equation. You can't fund all the forecasted future capex with just cash flow, and the market is starting to see some debt. But overall, they feel comfortable with companies funding with cash flow, continuing to generate cash flow, and using debt, as long as the counterparties are companies like Meta, Microsoft, AWS, Nvidia.

David mentioned a case worth watching: Oracle. Oracle has always been profitable, always buying back stock, but the scale of capex they've committed to is massive; it's a huge bet. They will have negative cash flow for many years to come. The market has started to notice this; Oracle's credit default swap (CDS) costs have risen to about 2% over the past three months. This is a signal to watch.

I think this capital-intensive building phase is necessary but not without risk. The key is to ensure these investments ultimately generate corresponding returns. Currently, demand far exceeds supply. All hyperscale cloud providers report demand far outstripping supply. Gavin Baker, whom David interviewed, had a great analogy: In the internet era, a lot of fiber was laid, and then that fiber sat idle, unused; this was called dark fiber. But in the AI era, there is no such thing as a dark GPU. If you install a GPU in a data center, it will be immediately fully utilized.

The Astonishing Speed of Revenue Growth

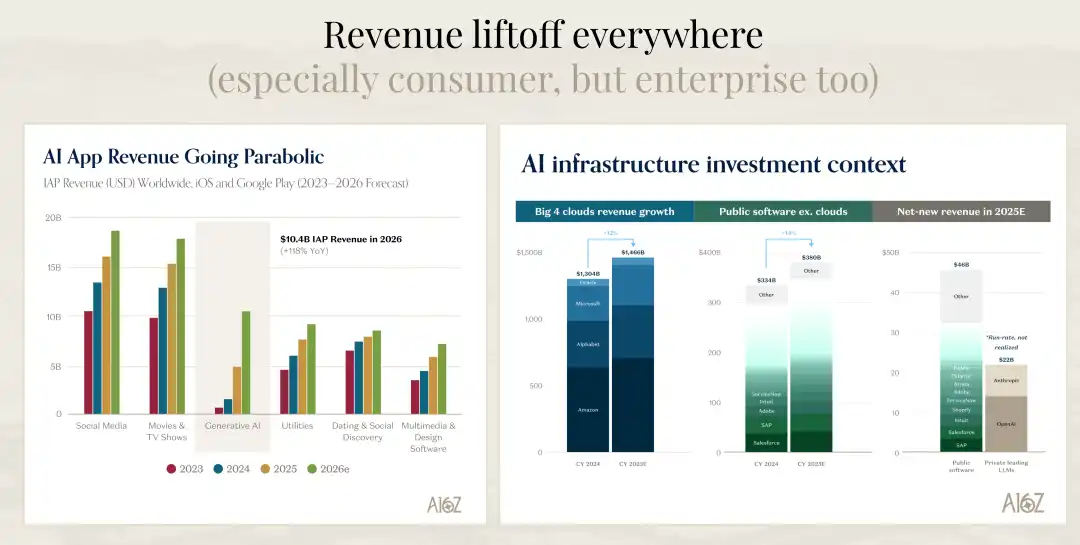

One set of data David showed was particularly striking. He compared the net new revenue added in 2025 across cloud services, public software companies, and the leading AI model companies. Public software companies added a total of $46 billion in new revenue in 2025. If you look just at OpenAI and Anthropic, on an operating revenue basis, the new revenue they added is almost half of that number.

And David believes that if you do the same comparison for 2026, the new revenue added by AI companies (model companies) could reach 75% to 80% of the entire public software industry (including SAP and legacy software companies, not just SaaS). This speed is simply unbelievable. This means that within just a few years, the new value created by AI companies will surpass that of the entire traditional software industry.

Goldman Sachs estimates that AI build-out will generate $9 trillion in revenue. Assuming a 20% profit margin and a 22x P/E ratio, this would translate into $35 trillion in new market capitalization. About $24 trillion has already been priced in ahead of time. While we can argue whether this is all due to AI or large tech performance, there is still a lot of market cap up for grabs, and significant upside potential if these assumptions are correct.
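The structure of that estimate is revenue times margin times P/E. The sketch below is only a back-of-the-envelope check under that assumption. Note that the plain product of the three quoted inputs comes out somewhat above the quoted $35 trillion, so the original estimate presumably includes adjustments (for example discounting) that are not described here; that explanation is a guess, not something stated in the talk.

```python
def implied_market_cap(revenue_usd: float, profit_margin: float, pe_ratio: float) -> float:
    """Market cap implied by applying a P/E multiple to profit (revenue * margin)."""
    return revenue_usd * profit_margin * pe_ratio


TRILLION = 1e12

# Inputs quoted in the text.
simple_product = implied_market_cap(9 * TRILLION, 0.20, 22)

# Figures quoted in the text: $35T total, about $24T already priced in.
quoted_total = 35 * TRILLION
already_priced_in = 24 * TRILLION
remaining_upside = quoted_total - already_priced_in

print(f"Plain product of inputs: ${simple_product / TRILLION:.1f}T")
print(f"Quoted total:            ${quoted_total / TRILLION:.1f}T")
print(f"Not yet priced in:       ${remaining_upside / TRILLION:.1f}T")
```

On the quoted figures, roughly $11 trillion of the estimated market cap is not yet priced in, which is the "up for grabs" portion.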

David also did some simple arithmetic. Based on current estimates, by 2030, the cumulative capex of hyperscale cloud providers will be just under $5 trillion. To achieve a 10% hurdle rate on this $4.8 trillion or nearly $5 trillion investment, AI annual revenue needs to reach about $1 trillion by 2030. To put that number in context, $1 trillion is roughly 1% of global GDP, just to generate a 10% return.
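One simple way to read this arithmetic is sketched below. Treating the hurdle as a flat 10% annual return on cumulative capex, and backing out the margin and GDP figures from it, are assumptions made here for illustration, not David's stated method.

```python
TRILLION = 1e12

cumulative_capex = 4.8 * TRILLION    # quoted in the text
hurdle_rate = 0.10                   # quoted in the text
required_ai_revenue = 1 * TRILLION   # quoted in the text

# Assumption: the hurdle is a flat annual return on the cumulative investment.
required_annual_return = cumulative_capex * hurdle_rate

# Under that assumption, the share of AI revenue that must flow back as return.
implied_return_share = required_annual_return / required_ai_revenue

# "$1 trillion is roughly 1% of global GDP" implies this global GDP figure.
implied_global_gdp = required_ai_revenue / 0.01

print(f"Required annual return: ${required_annual_return / TRILLION:.2f}T")
print(f"Implied return share of revenue: {implied_return_share:.0%}")
print(f"Implied global GDP: ${implied_global_gdp / TRILLION:.0f}T")
```

Read this way, about half of every dollar of AI revenue would need to come back as return on the capex, which shows how demanding the $1 trillion target is.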

Is this achievable? It might fall slightly short. But David thinks looking only at 2030 is limiting. The returns on these investments will likely materialize over a longer period, say between 2030 and 2040. And if we are at roughly $50 billion in AI revenue now (his rough estimate), and this has mainly been generated in the past year and a half or so, then the path from $50 billion to $1 trillion is not unimaginable.
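To see what that path requires, the sketch below computes the compound annual growth rate needed to go from $50 billion to $1 trillion. The two horizons (5 years to reach 2030, 15 years to reach 2040) are assumptions that take 2025 as the starting point.

```python
def required_cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate needed to grow start_value into end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("all inputs must be positive")
    return (end_value / start_value) ** (1 / years) - 1


current_ai_revenue = 50e9   # David's rough estimate, quoted in the text
target_ai_revenue = 1e12    # revenue needed for the 10% hurdle

cagr_to_2030 = required_cagr(current_ai_revenue, target_ai_revenue, 5)
cagr_to_2040 = required_cagr(current_ai_revenue, target_ai_revenue, 15)

print(f"Growth needed to hit $1T by 2030: {cagr_to_2030:.0%} per year")
print(f"Growth needed to hit $1T by 2040: {cagr_to_2040:.0%} per year")
```

A 20x increase needs roughly 82% annual growth over five years but only about 22% over fifteen, which is why stretching the horizon to 2040 changes the picture so much.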

My Thoughts on the Future

After listening to David's talk, my biggest feeling is: we are at the beginning of a historic inflection point, not the middle or the end. This is a product cycle that could last 10 to 15 years, and we are just getting started. This makes me both excited and anxious.

Excited because the opportunities brought by this transformation are enormous. Companies that can adapt quickly and fully embrace AI will not only gain competitive advantages but may well become the companies that define the next era. We will see new unicorns born, new business models emerge, and completely different ways of organizing companies.

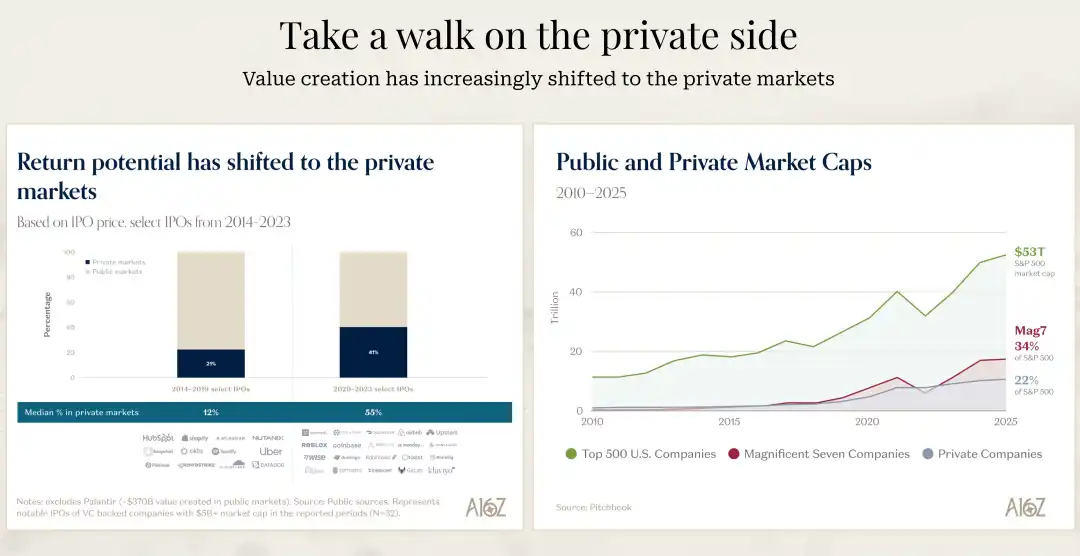

Anxious because the speed of this change may be much faster than most people expect. The data David mentioned is particularly telling: the average time an S&P 500 company stays in the index has decreased by 40% over the past 50 years. This means the speed at which companies are being disrupted is accelerating. In the AI era, this speed is likely to accelerate further.

I believe a clear divergence will emerge next. Some companies will truly understand the potential of AI and fundamentally rethink their products, processes, and organizational structures. These companies will gain order-of-magnitude efficiency improvements and competitive advantages. Other companies, even if they have the intention to change, will progress slowly due to the difficulties of change management, organizational inertia, and technical debt. This divergence will become increasingly apparent in the coming years.

For entrepreneurs, now might be the best of times. Market demand is extremely strong, technological capabilities are advancing rapidly, and capital markets are still willing to support truly promising companies. And compared to the previous generation of software companies, it's now possible to reach the same scale with fewer resources and faster speed. This lowers the barrier to entry but also increases the requirements for product quality and market fit.

For investors, the key is to identify the true Model Busters. The growth speed and duration of these companies will far exceed any predictions from traditional models. But this also requires investors to have sufficient vision and patience, and to be willing to believe growth curves that seem unreasonable.

For practitioners, whether you are an engineer, product manager, designer, or any other role, you need to quickly learn and adapt to new tools and ways of working. The example David mentioned—two engineers using the latest programming tools being 10 to 20 times faster than before—is not an isolated case but a trend. Those who can master these new tools and methods will gain huge career advantages.

Finally, I want to say that this transformation is not just technical, but also a shift in mindset. From "how should we do it" to "what outcome do we want to achieve", from "adding more people" to "how to solve this problem with AI", from "following established processes" to "reimagining possibilities". That question of "electricity or blood", although it sounds extreme, captures the essence of this shift.

We are witnessing the software world being rewritten. This is not an incremental upgrade, but a complete rebuild. Those who understand this and embrace it will define the next era.

Related Questions

Q: What is the key difference in growth and efficiency between the fastest-growing AI companies and traditional software companies according to the a16z analysis?

A: The fastest-growing AI companies are expanding at an annual growth rate of 693%, and AI companies as a group are growing more than 2.5 times faster than non-AI companies. They achieve this with significantly lower sales and marketing expenditures. Additionally, their ARR per FTE (Annual Recurring Revenue per Full-Time Employee) is $500,000 to $1 million, compared to the previous standard of $400,000 for traditional software companies, indicating a more efficient and scalable business model driven by strong product-market fit rather than heavy sales investment.

Q: How does the article explain the lower gross margins of AI companies compared to traditional software firms?

A: The article suggests that lower gross margins for AI companies can be a 'badge of honor' because they are often caused by high inference costs. This indicates two things: first, that customers are actually using the AI features extensively, and second, that these inference costs are expected to decrease over time. A very high gross margin might conversely raise suspicions that the AI functionality is not a core part of the product that customers value and use.

Q: What fundamental shift in business model evolution does the article predict is on the horizon due to AI?

A: The article predicts a shift towards an 'outcome-based' business model. This model moves beyond current consumption-based pricing (paying for usage) to charging customers based on the successful completion of a specific task or the achievement of a desired result. This is seen as a potentially massive disruption, as it aligns pricing directly with the value delivered, unlike traditional seat-based SaaS subscriptions or even usage-based cloud models.

Q: What major challenge do large Fortune 500 companies face in adopting AI, despite their CEOs' enthusiasm?

A: The primary challenge is the 'change management gap.' There is a significant disconnect between the CEOs' strategic desire to adopt AI and the practical difficulty of implementing it. Transforming business processes, retraining or restructuring teams, and integrating AI natively into complex existing systems is extremely difficult. This often leads to much slower actual implementation and business transformation than the initial ambitious statements from leadership would suggest.

Q: What is the concept of a 'Model Buster' as described in the article, and why is AI considered one?

A: A 'Model Buster' is a company whose growth speed and duration far exceed any reasonable consensus prediction made by traditional financial or business models. The iPhone is given as a classic example. AI is considered the ultimate Model Buster of this era because the technology delivers not just incremental improvements but order-of-magnitude leaps in capability and efficiency. This fundamental shift makes historical data and conventional forecasting models largely ineffective for predicting the growth and impact of leading AI companies.

Related Reads

Trading

Hot Articles

What is SONIC

Sonic: Pioneering the Future of Gaming in Web3 Introduction to Sonic In the ever-evolving landscape of Web3, the gaming industry stands out as one of the most dynamic and promising sectors. At the forefront of this revolution is Sonic, a project designed to amplify the gaming ecosystem on the Solana blockchain. Leveraging cutting-edge technology, Sonic aims to deliver an unparalleled gaming experience by efficiently processing millions of requests per second, ensuring that players enjoy seamless gameplay while maintaining low transaction costs. This article delves into the intricate details of Sonic, exploring its creators, funding sources, operational mechanics, and the timeline of significant events that have shaped its journey. What is Sonic? Sonic is an innovative layer-2 network that operates atop the Solana blockchain, specifically tailored to enhance the existing Solana gaming ecosystem. It accomplishes this through a customised, VM-agnostic game engine paired with a HyperGrid interpreter, facilitating sovereign game economies that roll up back to the Solana platform. The primary goals of Sonic include: Enhanced Gaming Experiences: Sonic is committed to offering lightning-fast on-chain gameplay, allowing players and developers to engage with games at previously unattainable speeds. Atomic Interoperability: This feature enables transactions to be executed within Sonic without the need to redeploy Solana programmes and accounts. This makes the process more efficient and directly benefits from Solana Layer1 services and liquidity. Seamless Deployment: Sonic allows developers to write for Ethereum Virtual Machine (EVM) based systems and execute them on Solana’s SVM infrastructure. This interoperability is crucial for attracting a broader range of dApps and decentralised applications to the platform. 
Support for Developers: By offering native composable gaming primitives and extensible data types - dining within the Entity-Component-System (ECS) framework - game creators can craft intricate business logic with ease. Overall, Sonic's unique approach not only caters to players but also provides an accessible and low-cost environment for developers to innovate and thrive. Creator of Sonic The information regarding the creator of Sonic is somewhat ambiguous. However, it is known that Sonic's SVM is owned by the company Mirror World. The absence of detailed information about the individuals behind Sonic reflects a common trend in several Web3 projects, where collective efforts and partnerships often overshadow individual contributions. Investors of Sonic Sonic has garnered considerable attention and support from various investors within the crypto and gaming sectors. Notably, the project raised an impressive $12 million during its Series A funding round. The round was led by BITKRAFT Ventures, with other notable investors including Galaxy, Okx Ventures, Interactive, Big Brain Holdings, and Mirana. This financial backing signifies the confidence that investment foundations have in Sonic’s potential to revolutionise the Web3 gaming landscape, further validating its innovative approaches and technologies. How Does Sonic Work? Sonic utilises the HyperGrid framework, a sophisticated parallel processing mechanism that enhances its scalability and customisability. Here are the core features that set Sonic apart: Lightning Speed at Low Costs: Sonic offers one of the fastest on-chain gaming experiences compared to other Layer-1 solutions, powered by the scalability of Solana’s virtual machine (SVM). Atomic Interoperability: Sonic enables transaction execution without redeployment of Solana programmes and accounts, effectively streamlining the interaction between users and the blockchain. 
EVM Compatibility: Developers can effortlessly migrate decentralised applications from EVM chains to the Solana environment using Sonic’s HyperGrid interpreter, increasing the accessibility and integration of various dApps. Ecosystem Support for Developers: By exposing native composable gaming primitives, Sonic facilitates a sandbox-like environment where developers can experiment and implement business logic, greatly enhancing the overall development experience. Monetisation Infrastructure: Sonic natively supports growth and monetisation efforts, providing frameworks for traffic generation, payments, and settlements, thereby ensuring that gaming projects are not only viable but also sustainable financially. Timeline of Sonic The evolution of Sonic has been marked by several key milestones. Below is a brief timeline highlighting critical events in the project's history: 2022: The Sonic cryptocurrency was officially launched, marking the beginning of its journey in the Web3 gaming arena. 2024: June: Sonic SVM successfully raised $12 million in a Series A funding round. This investment allowed Sonic to further develop its platform and expand its offerings. August: The launch of the Sonic Odyssey testnet provided users with the first opportunity to engage with the platform, offering interactive activities such as collecting rings—a nod to gaming nostalgia. October: SonicX, an innovative crypto game integrated with Solana, made its debut on TikTok, capturing the attention of over 120,000 users within a short span. This integration illustrated Sonic’s commitment to reaching a broader, global audience and showcased the potential of blockchain gaming. Key Points Sonic SVM is a revolutionary layer-2 network on Solana explicitly designed to enhance the GameFi landscape, demonstrating great potential for future development. HyperGrid Framework empowers Sonic by introducing horizontal scaling capabilities, ensuring that the network can handle the demands of Web3 gaming. 
Integration with Social Platforms: The successful launch of SonicX on TikTok displays Sonic’s strategy to leverage social media platforms to engage users, exponentially increasing the exposure and reach of its projects. Investment Confidence: The substantial funding from BITKRAFT Ventures, among others, emphasizes the robust backing Sonic has, paving the way for its ambitious future. In conclusion, Sonic encapsulates the essence of Web3 gaming innovation, striking a balance between cutting-edge technology, developer-centric tools, and community engagement. As the project continues to evolve, it is poised to redefine the gaming landscape, making it a notable entity for gamers and developers alike. As Sonic moves forward, it will undoubtedly attract greater interest and participation, solidifying its place within the broader narrative of blockchain gaming.

1.2k Total ViewsPublished 2024.04.04Updated 2024.12.03

What is $S$

Understanding SPERO: A Comprehensive Overview

Introduction to SPERO

As the landscape of innovation continues to evolve, the emergence of web3 technologies and cryptocurrency projects plays a pivotal role in shaping the digital future. One project that has garnered attention in this dynamic field is SPERO, denoted as $S$. This article aims to gather and present detailed information about SPERO, to help enthusiasts and investors understand its foundations, objectives, and innovations within the web3 and crypto domains.

What is SPERO?

SPERO is a unique project within the crypto space that seeks to leverage the principles of decentralisation and blockchain technology to create an ecosystem that promotes engagement, utility, and financial inclusion. The project is tailored to facilitate peer-to-peer interactions in new ways, providing users with innovative financial solutions and services.

At its core, SPERO aims to empower individuals by providing tools and platforms that enhance user experience in the cryptocurrency space. This includes enabling more flexible transaction methods, fostering community-driven initiatives, and creating pathways for financial opportunities through decentralised applications (dApps). The underlying vision of SPERO revolves around inclusiveness, aiming to bridge gaps within traditional finance while harnessing the benefits of blockchain technology.

Who is the Creator of SPERO?

The identity of the creator of SPERO remains somewhat obscure, as there are limited publicly available resources providing detailed background information on its founder(s). This lack of transparency can stem from the project's commitment to decentralisation, an ethos that many web3 projects share, prioritising collective contributions over individual recognition. By centring discussions around the community and its collective goals, SPERO embodies the essence of empowerment without singling out specific individuals. As such, understanding the ethos and mission of SPERO remains more important than identifying a singular creator.

Who are the Investors of SPERO?

SPERO is supported by a diverse array of investors ranging from venture capitalists to angel investors dedicated to fostering innovation in the crypto sector. The focus of these investors generally aligns with SPERO's mission: prioritising projects that promise societal technological advancement, financial inclusivity, and decentralised governance. These investor foundations are typically interested in projects that not only offer innovative products but also contribute positively to the blockchain community and its ecosystems. The backing from these investors reinforces SPERO as a noteworthy contender in the rapidly evolving domain of crypto projects.

How Does SPERO Work?

SPERO employs a multi-faceted framework that distinguishes it from conventional cryptocurrency projects. Here are some of the key features that underline its uniqueness and innovation:

Decentralised Governance: SPERO integrates decentralised governance models, empowering users to participate actively in decision-making processes regarding the project's future. This approach fosters a sense of ownership and accountability among community members.

Token Utility: SPERO utilises its own cryptocurrency token, designed to serve various functions within the ecosystem. These tokens enable transactions, rewards, and the facilitation of services offered on the platform, enhancing overall engagement and utility.

Layered Architecture: The technical architecture of SPERO supports modularity and scalability, allowing for seamless integration of additional features and applications as the project evolves. This adaptability is paramount for sustaining relevance in the ever-changing crypto landscape.

Community Engagement: The project emphasises community-driven initiatives, employing mechanisms that incentivise collaboration and feedback. By nurturing a strong community, SPERO can better address user needs and adapt to market trends.

Focus on Inclusion: By offering low transaction fees and user-friendly interfaces, SPERO aims to attract a diverse user base, including individuals who may not previously have engaged in the crypto space. This commitment to inclusion aligns with its overarching mission of empowerment through accessibility.

Timeline of SPERO

Understanding a project's history provides crucial insights into its development trajectory and milestones. Below is a suggested timeline mapping significant events in the evolution of SPERO:

Conceptualisation and Ideation Phase: The initial ideas forming the basis of SPERO were conceived, aligning closely with the principles of decentralisation and community focus within the blockchain industry.

Launch of Project Whitepaper: Following the conceptual phase, a comprehensive whitepaper detailing the vision, goals, and technological infrastructure of SPERO was released to garner community interest and feedback.

Community Building and Early Engagements: Active outreach efforts were made to build a community of early adopters and potential investors, facilitating discussions around the project's goals and garnering support.

Token Generation Event: SPERO conducted a token generation event (TGE) to distribute its native tokens to early supporters and establish initial liquidity within the ecosystem.

Launch of Initial dApp: The first decentralised application (dApp) associated with SPERO went live, allowing users to engage with the platform's core functionalities.

Ongoing Development and Partnerships: Continuous updates and enhancements to the project's offerings, including strategic partnerships with other players in the blockchain space, have shaped SPERO into a competitive and evolving player in the crypto market.

Conclusion

SPERO stands as a testament to the potential of web3 and cryptocurrency to revolutionise financial systems and empower individuals. With a commitment to decentralised governance, community engagement, and innovatively designed functionalities, it paves the way toward a more inclusive financial landscape.

As with any investment in the rapidly evolving crypto space, potential investors and users are encouraged to research thoroughly and engage thoughtfully with the ongoing developments within SPERO. The project showcases the innovative spirit of the crypto industry, inviting further exploration into its myriad possibilities. While the journey of SPERO is still unfolding, its foundational principles may indeed influence the future of how we interact with technology, finance, and each other in interconnected digital ecosystems.

54 Total Views | Published 2024.12.17 | Updated 2024.12.17

What is AGENT S

Agent S: The Future of Autonomous Interaction in Web3

Introduction

In the ever-evolving landscape of Web3 and cryptocurrency, innovations are constantly redefining how individuals interact with digital platforms. One such pioneering project, Agent S, promises to revolutionise human-computer interaction through its open agentic framework. By paving the way for autonomous interactions, Agent S aims to simplify complex tasks, offering transformative applications in artificial intelligence (AI). This detailed exploration will delve into the project's intricacies, its unique features, and the implications for the cryptocurrency domain.

What is Agent S?

Agent S stands as a groundbreaking open agentic framework, specifically designed to tackle three fundamental challenges in the automation of computer tasks:

Acquiring Domain-Specific Knowledge: The framework intelligently learns from various external knowledge sources and internal experiences. This dual approach empowers it to build a rich repository of domain-specific knowledge, enhancing its performance in task execution.

Planning Over Long Task Horizons: Agent S employs experience-augmented hierarchical planning, a strategic approach that facilitates efficient breakdown and execution of intricate tasks. This feature significantly enhances its ability to manage multiple subtasks efficiently and effectively.

Handling Dynamic, Non-Uniform Interfaces: The project introduces the Agent-Computer Interface (ACI), an innovative solution that enhances the interaction between agents and users. Utilising Multimodal Large Language Models (MLLMs), Agent S can navigate and manipulate diverse graphical user interfaces seamlessly.

Through these pioneering features, Agent S provides a robust framework that addresses the complexities involved in automating human interaction with machines, setting the stage for myriad applications in AI and beyond.

Who is the Creator of Agent S?

While the concept of Agent S is fundamentally innovative, specific information about its creator remains elusive. The creator is currently unknown, which highlights either the nascent stage of the project or the strategic choice to keep founding members under wraps. Regardless of anonymity, the focus remains on the framework's capabilities and potential.

Who are the Investors of Agent S?

As Agent S is relatively new in the crypto ecosystem, detailed information regarding its investors and financial backers is not explicitly documented. The lack of publicly available insights into the investment foundations or organisations supporting the project raises questions about its funding structure and development roadmap. Understanding the backing is crucial for gauging the project's sustainability and potential market impact.

How Does Agent S Work?

At the core of Agent S lies cutting-edge technology that enables it to function effectively in diverse settings. Its operational model is built around several key features:

Human-like Computer Interaction: The framework offers advanced AI planning, striving to make interactions with computers more intuitive. By mimicking human behaviour in task execution, it promises to elevate user experiences.

Narrative Memory: Employed to leverage high-level experiences, Agent S utilises narrative memory to keep track of task histories, thereby enhancing its decision-making processes.

Episodic Memory: This feature provides users with step-by-step guidance, allowing the framework to offer contextual support as tasks unfold.

Support for OpenACI: With the ability to run locally, Agent S allows users to maintain control over their interactions and workflows, aligning with the decentralised ethos of Web3.

Easy Integration with External APIs: Its versatility and compatibility with various AI platforms ensure that Agent S can fit seamlessly into existing technological ecosystems, making it an appealing choice for developers and organisations.

These functionalities collectively contribute to Agent S's unique position within the crypto space, as it automates complex, multi-step tasks with minimal human intervention. As the project evolves, its potential applications in Web3 could redefine how digital interactions unfold.

Timeline of Agent S

The development and milestones of Agent S can be encapsulated in a timeline that highlights its significant events:

September 27, 2024: The concept of Agent S was launched in a comprehensive research paper titled “An Open Agentic Framework that Uses Computers Like a Human,” showcasing the groundwork for the project.

October 10, 2024: The research paper was made publicly available on arXiv, offering an in-depth exploration of the framework and its performance evaluation based on the OSWorld benchmark.

October 12, 2024: A video presentation was released, providing a visual insight into the capabilities and features of Agent S, further engaging potential users and investors.

These markers in the timeline not only illustrate the progress of Agent S but also indicate its commitment to transparency and community engagement.

Key Points About Agent S

As the Agent S framework continues to evolve, several key attributes stand out, underscoring its innovative nature and potential:

Innovative Framework: Designed to provide an intuitive use of computers akin to human interaction, Agent S brings a novel approach to task automation.

Autonomous Interaction: The ability to interact autonomously with computers through a GUI signifies a leap towards more intelligent and efficient computing solutions.

Complex Task Automation: With its robust methodology, it can automate complex, multi-step tasks, making processes faster and less error-prone.

Continuous Improvement: The learning mechanisms enable Agent S to improve from past experiences, continually enhancing its performance and efficacy.

Versatility: Its adaptability across different operating environments like OSWorld and WindowsAgentArena ensures that it can serve a broad range of applications.

As Agent S positions itself in the Web3 and crypto landscape, its potential to enhance interaction capabilities and automate processes signifies a significant advancement in AI technologies. Through its innovative framework, Agent S exemplifies the future of digital interactions, promising a more seamless and efficient experience for users across various industries.

Conclusion

Agent S represents a bold leap forward in the marriage of AI and Web3, with the capacity to redefine how we interact with technology. While still in its early stages, the possibilities for its application are vast and compelling. Through its comprehensive framework addressing critical challenges, Agent S aims to bring autonomous interactions to the forefront of the digital experience. As we move deeper into the realms of cryptocurrency and decentralisation, projects like Agent S will undoubtedly play a crucial role in shaping the future of technology and human-computer collaboration.

557 Total Views | Published 2025.01.14 | Updated 2025.01.14