AI inference costs have dropped by over 80% in 18 months, yet China's three major cloud providers announced price hikes in the same week. This will be a structural price game lasting at least two to three years. This article attempts to answer a more important question: when will it end?

Tomorrow (April 18), Alibaba Cloud and Baidu AI Cloud will officially begin price adjustments. Three weeks later, Tencent Cloud will also usher in a new round of price increases. Globally, OpenAI and Anthropic have reduced API prices by over 80% in the past 18 months, and the emergence of DeepSeek-R1 has further led the outside world to believe that inference costs are about to hit zero.

Yet in the same week, China's three major cloud providers announced price increases of 20% to 30%.

Figure | Timeline of global cloud computing price increase events in 2026

The media's initial reaction was "the price war is over, and the big players are starting to reap profits." That assessment is not wrong, but it stops at the surface. It explains why cloud providers are raising prices without answering the more critical question: is this price hike a temporary correction or the start of a sustained trend? The answer lies in an economic paradox more than 150 years old.

01.

Jevons Paradox: The Cheaper It Gets, the More It Burns

In 1865, British economist William Stanley Jevons observed a counterintuitive phenomenon: after steam engines became more efficient, total coal consumption in the UK increased dramatically. Lower usage costs triggered an explosion in demand. This is the Jevons Paradox, and it has reappeared almost exactly in the computing power market of 2026.

DeepSeek-R1 has indeed significantly reduced the cost per token for inference. But it has also opened a floodgate of demand: many enterprises that previously found "AI too expensive" have begun integrating AI into their business processes. Once integrated, token consumption expands at a nonlinear rate.

A more critical change is that AI applications have moved from "dialogue" to "doing things" as Agents and Reasoning Models enter the scene. A task that previously burned 1,000 tokens now burns 5,000 once a reasoning chain is attached, and because Reasoning Models "think" on their own, heavier workloads can consume 10 to 50 times more tokens than they would on standard models.

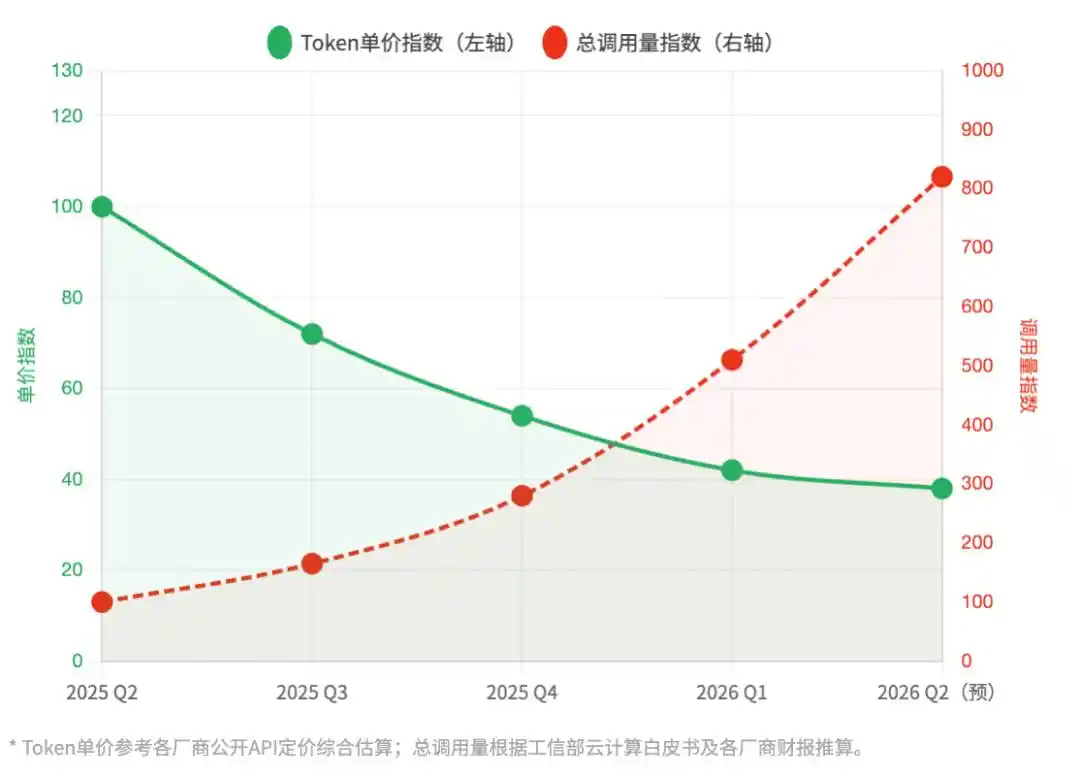

Figure | Before and after DeepSeek's release: Token unit price vs. total call volume trend

Note: 2025Q2 = Baseline 100 | Comprehensive estimate of inference APIs from major Chinese cloud providers

DeepSeek lowered the entry barrier but broke through the computing power ceiling. Each unit token is becoming cheaper, but each business task is becoming more expensive. This is the real foundation on which this round of price increases is built.
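The arithmetic behind "cheaper tokens, more expensive tasks" can be checked with a small sketch. It uses only the figures quoted above (an 80% drop in per-token price and token multipliers of 5x, 10x, and 50x). The function names are illustrative, and the model assumes that task cost is simply price times tokens.

```python
def relative_task_cost(price_drop: float, token_multiplier: float) -> float:
    """Cost of one task after the change, relative to 1.0 before it.

    price_drop: fractional fall in per-token price (0.80 means 80% cheaper).
    token_multiplier: how many times more tokens the task now consumes.
    """
    return (1.0 - price_drop) * token_multiplier


def break_even_multiplier(price_drop: float) -> float:
    """Token multiplier at which the per-task cost is unchanged."""
    return 1.0 / (1.0 - price_drop)


if __name__ == "__main__":
    drop = 0.80  # per-token price decline cited in the article
    for multiplier in (5, 10, 50):  # token multipliers cited in the article
        ratio = relative_task_cost(drop, multiplier)
        print(f"{multiplier}x tokens -> task cost is {ratio:.1f}x the old cost")
    print(f"break-even multiplier: {break_even_multiplier(drop):.1f}x")
```

Under this simplified model, an 80% price cut is exactly cancelled by a 5x increase in tokens per task. Anything beyond that makes the task more expensive than before: 10x tokens doubles the cost, and 50x raises it tenfold.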

02.

Weights Open-Sourced, Inference Stack Not Open-Sourced

Another detail largely overlooked in reports: DeepSeek open-sourced the model weights but not its inference optimization stack. The difference between the two is like being given the design blueprints for an engine but not being told how to tune it for F1 performance.

What truly determines inference cost is not just the model architecture but the engineering capabilities hidden beneath the surface: the hit rate of speculative decoding, memory scheduling strategies for KV Cache, optimization separation between Prefill and Decode phases, and the network topology of ten-thousand-card clusters. These hard skills remain the moat of a few leading cloud providers.

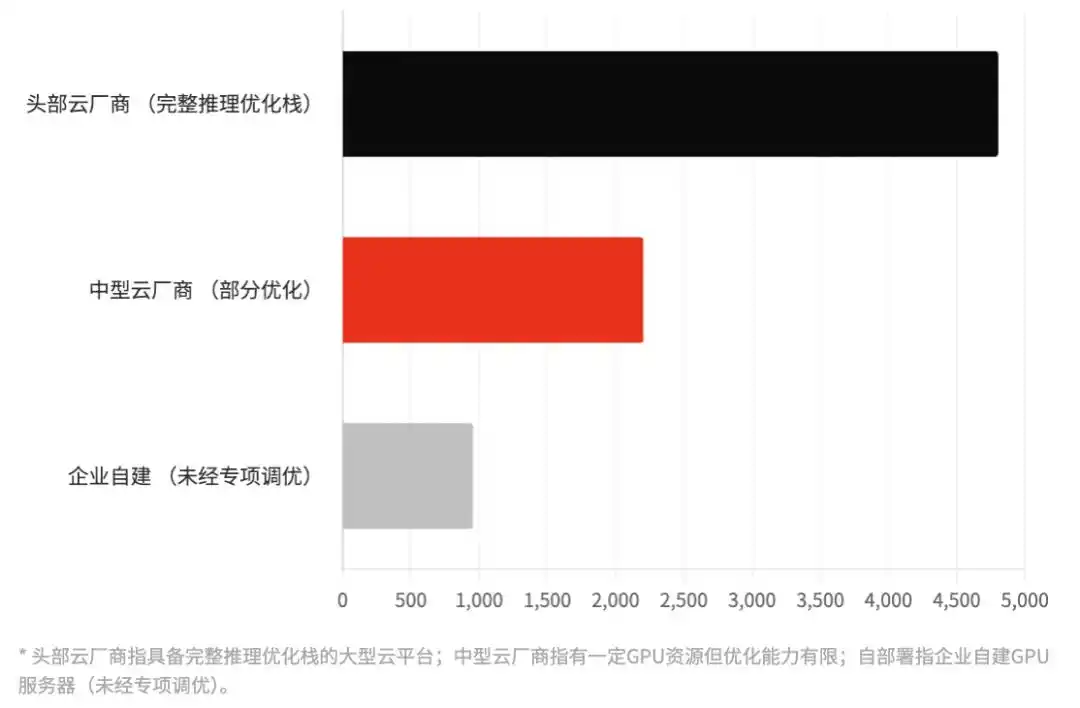

Figure | Actual efficiency gap in inference under equivalent model scale

Using DeepSeek-R1-67B as a benchmark, comparison of tokens processed per second (TPS) under different deployment conditions | Values are comprehensive industry estimates

Running the same DeepSeek-R1, the inference efficiency of leading cloud providers can be 3 to 5 times higher than that of self-built enterprise deployments. This means that with the same computing power investment, cloud providers can serve more concurrency, resulting in lower unit costs.

This efficiency gap is one source of the "premium" charged by cloud providers. It is a tangible engineering barrier. Therefore, this price increase is, to some extent, also about pricing their technical advantage.
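A rough sketch shows how a throughput gap turns into a unit-cost gap. The hourly cost and the baseline throughput below are hypothetical placeholders, not measured values. Only the 3x to 5x efficiency range comes from the text.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(hourly_cluster_cost: float, tokens_per_second: float) -> float:
    """Unit cost of inference given a fixed hourly hardware cost and sustained throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * SECONDS_PER_HOUR
    return hourly_cluster_cost / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    hourly_cost = 100.0       # hypothetical: yuan per hour for identical hardware
    self_built_tps = 1_000.0  # hypothetical: throughput of an untuned self-built stack
    for gain in (1, 3, 5):    # 1x = self-built; 3x and 5x = the range cited in the article
        unit_cost = cost_per_million_tokens(hourly_cost, self_built_tps * gain)
        print(f"{gain}x throughput -> {unit_cost:.2f} yuan per million tokens")
```

With hardware spend held constant, unit cost falls in inverse proportion to throughput. A 3x to 5x efficiency lead therefore means a unit cost of one third to one fifth of the self-built figure, which is the room a provider has to price in a premium.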

03.

Battle of the Giants: The Ledgers and Ambitions of Four Major Players

In this wave of collective price adjustments, the stances of the four core giants vary, reflecting different commercial calculations.

Alibaba Cloud: Wu Yongming-style "Profit Quality" Defense. Alibaba's adjustment is the most resolute, with increases focused mainly on high-end GPU instances and storage (CPFS). Against the backdrop of Alibaba's full return to "efficiency first," Alibaba Cloud is no longer pursuing so-called "cloud market share first" but is instead aiming for "AI computing power profit margin first." The subtext is that Alibaba Cloud is establishing a "VIP computing power zone." If you cannot cover this 30% premium, you might not be on Alibaba's core target customer list.

Baidu AI Cloud: "User Filter." As the earliest player to bet big on large models, Baidu faces pressure from the step change in inference costs for its ERNIE model as call volumes scale. Baidu's price hike is therefore more like a "user reshuffle." It is actively weeding out small individual developers who only seek free benefits without building a viable business, and turning instead to fully serve large enterprise customers who are not price-sensitive. Baidu needs to prove through price adjustments that its AI growth no longer relies on subsidies but on "selling intelligence at a premium."

Tencent Cloud: "ROI Correction" After Ecosystem Lock-in. Tencent Cloud's move came three weeks later than Alibaba's, a typical "follow-the-leader strategy." Tencent's confidence lies in the deep integration with the WeChat ecosystem and Enterprise WeChat. When enterprise workflows are deeply embedded in Tencent's social/collaboration ecosystem, the migration cost is extremely high. Tencent Cloud's price increase is more like a "catch-up increase," used to correct the ROI sacrificed over the past two years to seize the ecosystem, making the AI business look more "respectable" in financial reports.

Volcano Engine: Strategic "Unbalanced Followership" and Customer Grab Plan. Volcano Engine (ByteDance) is the wild card in this wave of price hikes. Although it has also adjusted some prices, its increases on many core APIs are significantly lower than those of Alibaba and Baidu. ByteDance is using this window to intercept competitors' existing customers. Backed by the enormous computing power that Douyin and TikTok absorb internally, Volcano can amortize its costs across a far larger base. While competitors are "driving away customers" to protect profits, Volcano is waiting for those left behind, trying to use the price gap to make one last overtaking move on installed base.

04.

The Biggest Surprise: Large Enterprises Start "Leaving"

This price increase has triggered an unintended counterforce: it has substantially strengthened large enterprises' determination to build their own computing power.

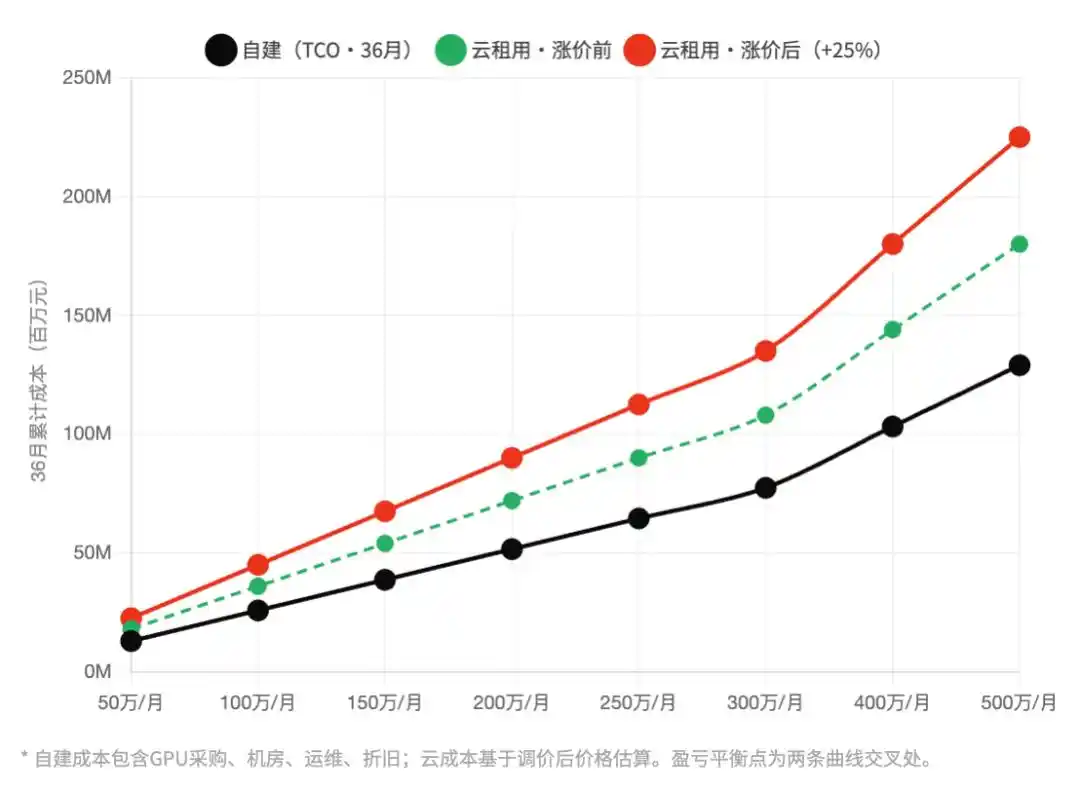

The cloud computing industry has a hidden rule: when the monthly bill exceeds a certain threshold, the financial model of "self-build vs. rent" flips. For banks, central state-owned enterprises, and large manufacturers, this threshold is roughly at a monthly cloud computing expenditure of 3 million to 5 million yuan.

In 2024, most large enterprises were below this threshold, making self-building uneconomical. In 2025, as AI projects rolled out, some enterprises began to touch the line. And this round of 20% to 30% price increases in 2026 has directly pushed a group of customers who were originally just on the line into the zone where they "must seriously consider self-building."

Figure | Cloud rental vs. self-build: Total Cost of Ownership (TCO) break-even point calculation

Horizontal axis: average monthly computing power expenditure (10k yuan/month), Vertical axis: 36-month cumulative cost (million yuan) | Comparison before and after price increase
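The break-even logic can be sketched with a deliberately simple linear model. Every parameter in it is a hypothetical assumption chosen for illustration: a fixed self-build overhead of 72 million yuan over 36 months, and a variable self-build cost equal to half of the pre-hike cloud bill. Only the 36-month horizon and the 20% to 30% price increase come from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SelfBuildModel:
    """Hypothetical self-build cost model. All amounts are in million yuan."""
    fixed_cost: float       # overhead over the whole horizon (team, facility, integration)
    variable_ratio: float   # variable cost as a fraction of the pre-hike cloud bill
    months: int = 36        # horizon used in the article's TCO figure


def cloud_cost(monthly_spend: float, price_increase: float, months: int = 36) -> float:
    """Cumulative cloud bill when the pre-hike monthly spend is marked up by price_increase."""
    return monthly_spend * (1.0 + price_increase) * months


def self_build_cost(monthly_spend: float, model: SelfBuildModel) -> float:
    """Cumulative self-build cost for the same workload."""
    return model.fixed_cost + model.variable_ratio * monthly_spend * model.months


def break_even_monthly_spend(model: SelfBuildModel, price_increase: float) -> float:
    """Pre-hike monthly cloud spend above which self-building is cheaper."""
    margin = (1.0 + price_increase) - model.variable_ratio
    if margin <= 0:
        raise ValueError("self-build never breaks even under these assumptions")
    return model.fixed_cost / (model.months * margin)


if __name__ == "__main__":
    model = SelfBuildModel(fixed_cost=72.0, variable_ratio=0.5)  # hypothetical values
    for hike in (0.0, 0.20, 0.30):
        threshold = break_even_monthly_spend(model, hike)
        print(f"price increase {hike:.0%}: break-even at {threshold:.2f} million yuan/month")
```

Under these assumed parameters the threshold sits at 4 million yuan per month before any increase and falls as prices rise. That is the mechanism described above: customers who were just under the line before the hike end up above it afterwards.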

The beneficiaries of this self-building wave are not the cloud providers' competitors but more peripheral players: GPU rental platforms saw inquiry volumes triple year-on-year in March; Huawei Ascend's delivery schedule for large customers has been extended to 6 months; integrators specializing in helping enterprises build "private inference clusters" have suddenly become highly sought after.

Cloud providers intended to raise prices to harvest high-end customers but inadvertently pushed away a group of large customers capable of building their own infrastructure. The risk in that decision may be reassessed when earnings season arrives.

05.

Who Wins? The Truth About Benefit Distribution

The price increases by the three cloud providers are seen by the media as "big players harvesting." But from the perspective of the entire industry chain, the distribution of real winners is much more complex.

There is an ironic reality: those hurt most are the small and medium-sized AI startups that supply much of the innovation. If they fail en masse under cost pressure, the cloud providers' own ecosystems will wither with them.

This is not without precedent. In the early 2010s, Amazon AWS's aggressive price increases on some services accelerated the shift of some developers to Google Cloud, indirectly helping GCP complete its early ecosystem accumulation. History does not repeat itself simply, but it rhymes.

06.

How Long Will the Era of Price Hikes Last?

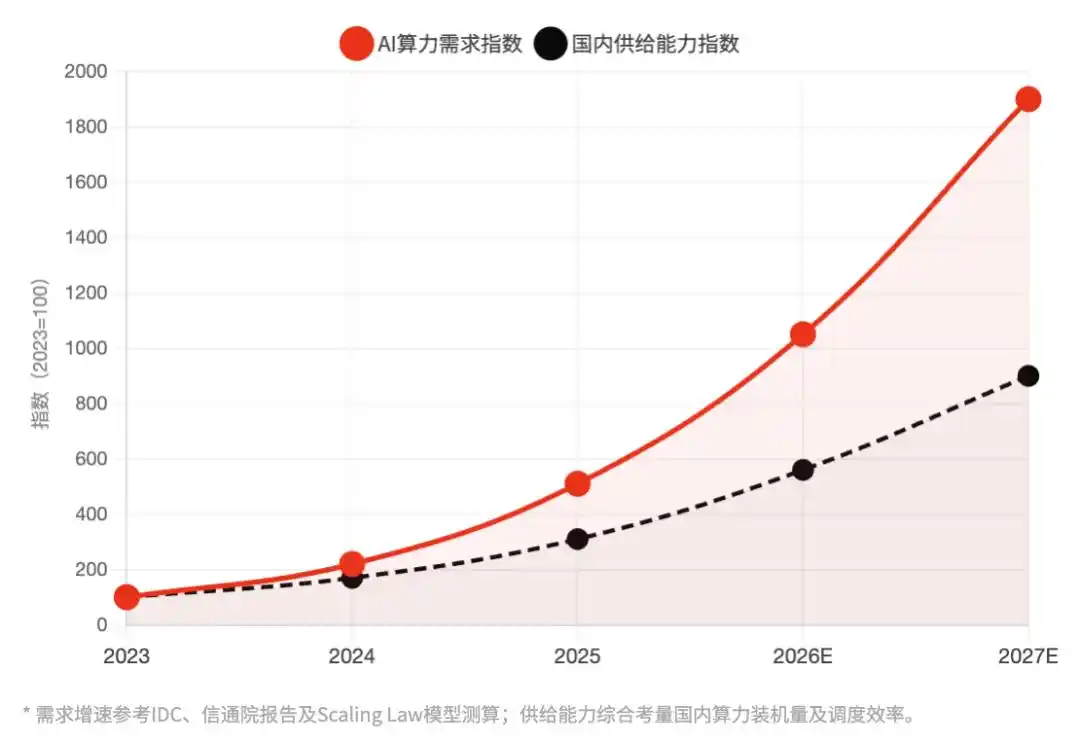

Simply put, the essence of this round of price increases is a pressure release in China's AI computing power market amidst exploding demand and supply constraints. Squeezed from both sides, prices can only move upward. This is not entirely an active choice by cloud providers; in a sense, it is also a forced pricing correction.

Figure | China's high-performance AI computing power: Demand growth rate vs. domestic supply capacity expansion rate

Index: 2023 = 100 | The continuously expanding supply-demand gap is the underlying logic of this price increase

None of the three structural factors supporting this round of price increases will substantially disappear within 12 months: the step change in token consumption brought by the adoption of Reasoning Models, the accelerated large-scale deployment of AI Agents, and the supply constraints caused by US export controls on Nvidia chips.

The B2B software market has a repeatedly verified rule: the Price Ratchet Effect. None of AWS's several price increases in the early 2010s were fully rolled back after supply improved. Google Cloud storage pricing has only seen one downward step since 2021, accompanied by tightened storage limits. Cloud providers understand this rule: this price increase is not just "harvesting during a window" but also about locking in a new price baseline.
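As a toy illustration of the ratchet idea, the sketch below passes increases in an underlying "fair" price through in full but passes only a fraction of any decrease. The `pass_down` parameter and the sample price path are hypothetical and are not calibrated to any provider's actual pricing.

```python
from typing import List, Sequence


def ratchet_path(target_prices: Sequence[float], pass_down: float = 0.3) -> List[float]:
    """Listed prices under asymmetric pass-through.

    target_prices: the price each period if supply and demand were passed through fully.
    pass_down: fraction of any decrease that actually reaches the listed price (0 to 1).
    """
    if not 0.0 <= pass_down <= 1.0:
        raise ValueError("pass_down must be between 0 and 1")
    if not target_prices:
        return []
    path = [float(target_prices[0])]
    for target in target_prices[1:]:
        previous = path[-1]
        if target >= previous:
            path.append(float(target))  # increases are passed through in full
        else:
            path.append(previous - pass_down * (previous - target))  # partial rollback only
    return path


if __name__ == "__main__":
    # Hypothetical index: baseline 100, a 25% hike, then supply recovers to baseline.
    print(ratchet_path([100, 125, 100, 100]))
```

In this sketch the listed price drifts back toward the baseline only gradually after supply recovers, so each period of scarcity leaves the price floor higher than it was before.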

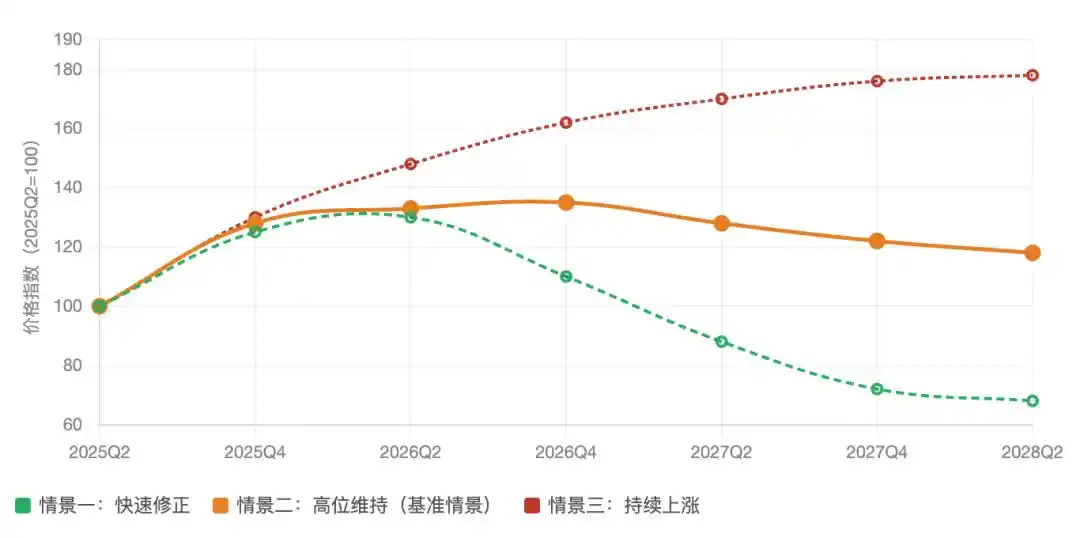

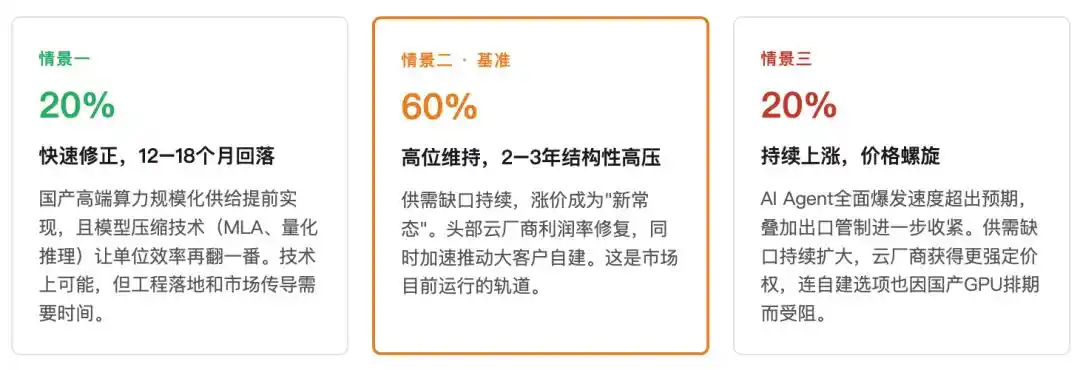

Computing power price index trend: Three scenario predictions (2025Q2–2028Q2)

2025Q2 = Baseline 100 | Comprehensive inference API average price index estimate, including price increase effects

Therefore, "computing power to zero" will not become a reality before 2027. What really determines the price inflection point is when the scheduling efficiency of domestic computing power can substantially catch up with that of Nvidia's H100. Judging from current engineering progress, that point most likely falls between 2027 and 2028.

During this window, cloud providers have every reason to raise prices while they can, because they know the window will not stay open forever.

07.

Conclusion: A Structural Game on the Supply Side

What this round of price increases reveals is not the grand narrative of "AI commercialization's coming of age" but a more specific industrial reality: when an efficiency revolution and a demand explosion occur simultaneously, prices may rise rather than fall. The Jevons Paradox held true in the coal era, and it holds equally true in the computing power era.

For small and medium-sized AI application companies, rather than arguing about who is harvesting whom, it is better to seriously calculate how many tokens are being wasted in their own business scenarios.

Saving tokens is the hardest moat in this era.

This article is from the WeChat public account "EmphasizeNext" (ID: leo89203898), author: Wen Xin, editor: Xiao Bai