Original Title: how I run 200 AI agents on the hormuz crisis with Mirofish, and compare it to polymarket

Original Author: The Smart Ape

Original Compilation: Peggy, BlockBeats

Editor's Note: When AI begins to simulate a public opinion field, the act of prediction itself is quietly changing.

This article documents an experiment on the situation in the Strait of Hormuz: the author used MiroFish to build a simulation system composed of 200 agents, allowing governments, media, energy companies, traders, and ordinary people to coexist in a simulated social network, forming judgments through continuous interaction, debate, and information dissemination, and comparing this group result with Polymarket's market pricing.

The results were not consistent. The group discussion was overall optimistic, while the market was significantly more pessimistic; in free expression, a minority of pessimists were actually closer to the real pricing; and once placed in an interview setting, almost all agents converged to more moderate, cooperative expressions.

This split is not unfamiliar. In the real world, public statements often tend towards stability and optimism, while true risk assessments are hidden in actions and informal expressions. In other words, what people say, what they think, and how they bet with money are often three different systems.

In such a structure, the most valuable signals often come not from consensus, but from those voices that seem out of place amidst the noise.

Below is the original text:

I used MiroFish to simulate the situation in the Strait of Hormuz over the coming weeks. This tool excels at handling such problems because it can perform highly complex scenario simulations: introducing multiple participants, different roles, and their respective incentive mechanisms into the same system, and letting these agents continuously game and debate, eventually forming a result close to consensus.

Below are the specific steps I took to run this simulation and the final results I obtained. Anyone can reproduce it; the key is just knowing which steps to follow.

First, MiroFish is an open-source project from a Chinese research team. You feed it a batch of documents, it first builds a knowledge graph, then generates different agent personas based on this graph, and subsequently releases these agents into a simulated Twitter environment. In this environment, they post, retweet, comment, like, and argue with each other. After the simulation ends, you can also interview each agent individually to view their respective positions and reasoning processes.

You input a crisis scenario, and it generates a debate around that event; from this debate, you can extract a prediction.

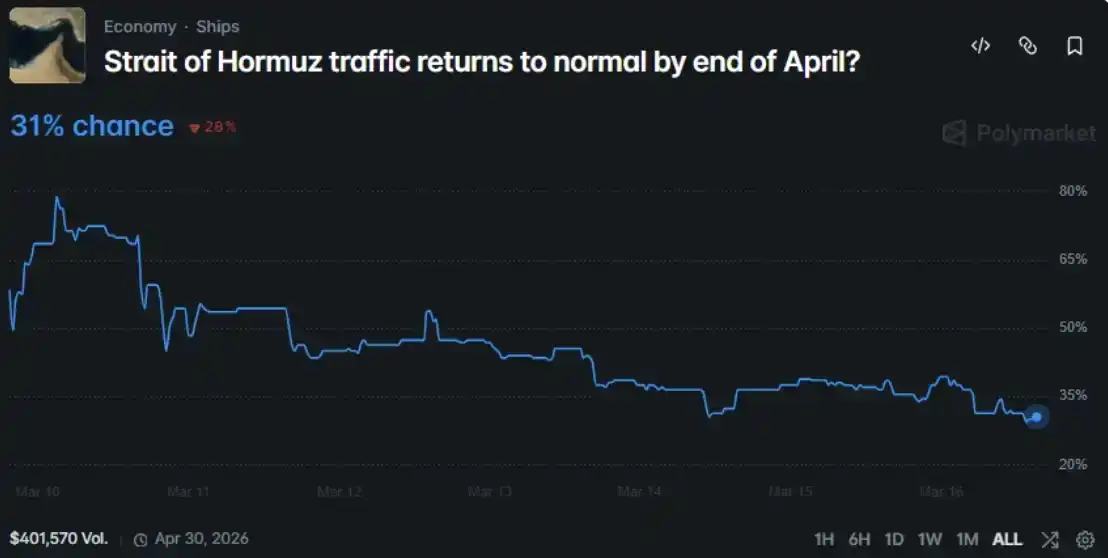

I aimed it at an ongoing Polymarket market question: Will maritime transport in the Strait of Hormuz return to normal by the end of April 2026?

So, I fed all this information to MiroFish, generated 200 agent roles—including governments, media, military, energy companies, traders, and ordinary citizens—and had them argue for 7 simulated days in a simulated environment. Finally, I compared their output with the market pricing.

Overall configuration:

· Model: GPT-4o mini, offering the best balance of cost and effect for a scenario with 200 agents

· Memory System: Zep Cloud, for storing agent memories and knowledge graph

· Simulation Engine: OASIS (Twitter clone environment provided by Camel-AI)

· Hardware: Mac mini M4 Pro, 24GB RAM

· Runtime: ~49 minutes, completing 100 simulation rounds

· Cost: ~$3 to $5 in API calls

· Seed Material: A 5800-character brief compiled from Wikipedia, CNBC, Al Jazeera, Forbes, Reuters,内容包括军事时间线、封锁状态、油价、经济损失、外交努力,以及 GCC 3.2 万亿美元投资相关因素。内容包括军事时间线、封锁状态、油价、经济损失、外交努力,以及 GCC 3.2 万亿美元投资相关因素。 That is, the core information needed for the agents to form judgments was included.

How to Reproduce This Process (Step-by-Step Instructions)

If you also want to run it yourself, below are the complete steps I actually took. The entire setup process takes about 2 hours, with API costs around $3 to $5; if you increase the number of rounds or agents, the cost will be higher.

What You Need to Prepare

· Python 3.12 (Do not use 3.14, tiktoken will error on this version)

· Node.js 22 and above

· An OpenAI API Key (GPT-4o mini is cheap enough, suitable for this scenario)

· A Zep Cloud account (the free tier is sufficient for small-scale simulations)

· A machine with decent RAM. I used a Mac mini M4 Pro with 24GB RAM, but 16GB should suffice

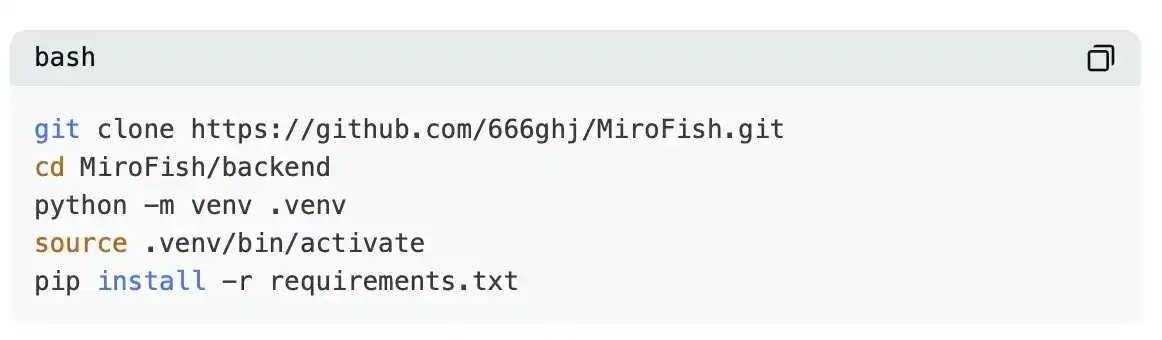

Step 1: Install MiroFish

Then configure your .env file

OPENAI_API_KEY=sk-your-key

OPENAI_BASE_URL=link

OPENAI_MODEL=gpt-4o-mini

ZEP_API_KEY=your-zep-key

Step 2: Create a project and upload your seed document

The seed document is the most important part of the entire process; it determines what information the agents know about the current situation. I prepared a brief of about 5800 characters, covering the military timeline, blockade status, oil prices, economic losses, diplomatic efforts, and the impact level of GCC investment, sourced from Wikipedia, CNBC, Al Jazeera, Forbes, and Reuters.

Step 3: Generate the Ontology

This step tells MiroFish what types of entities it should identify and what relationships might exist between them.

I ended up generating 10 types of entities: Countries, Military, Diplomats, Business Entities, Media Organizations, Economic Entities, Organizations, Individuals, Infrastructure, Prediction Markets; and 6 types of relationships. If the automatically generated results don't fit your scenario well, you can adjust them manually.

Step 4: Build the Knowledge Graph

This step uses Zep Cloud. MiroFish sends the seed document and ontology to Zep, which is responsible for extracting entities and building the graph.

This process takes a minute or two. I ended up with a graph containing 65 nodes and 85 edges, connecting elements like countries, people, organizations, commodities, etc.

Step 5: Generate Agents

MiroFish generates a complete personality profile for each entity based on the knowledge graph, including MBTI personality type, age, country, posting style, emotional triggers, taboo topics, and institutional memory.

I initially generated 43 core agents from the knowledge graph. Afterwards, the system can expand these core roles to your desired total number. I finally set the total number of agents to 200, adding more diverse civilian roles, such as crypto traders, airline pilots, professors, students, social activists, etc.

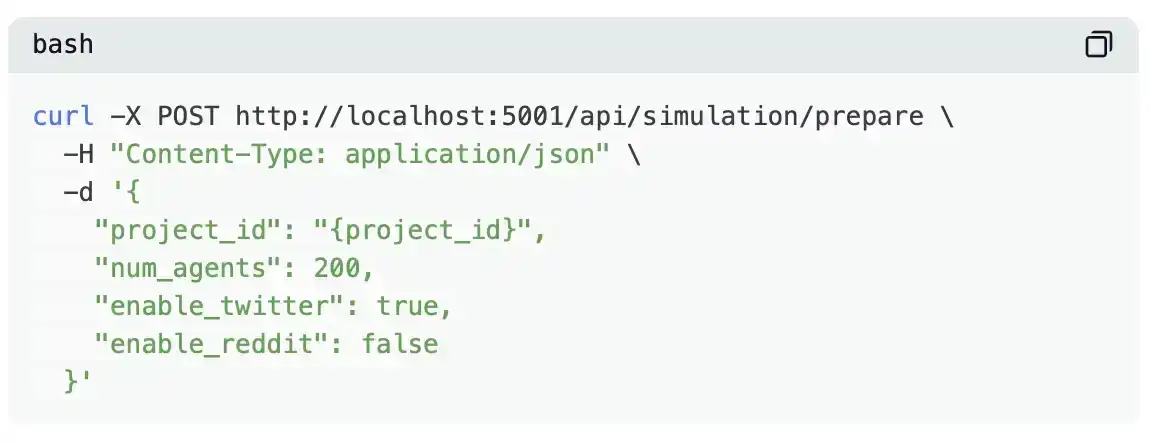

Step 6: Prepare the Simulation Environment

This step generates the complete simulation configuration, including agent action schedules, initial seed posts, and time parameters. MiroFish automatically selects a relatively reasonable default setup, such as peak active hours, sleep times, and posting frequencies for different types of agents.

My configuration was: Simulate 168 hours (7 days), 100 rounds (each round represents 1 hour), use only the Twitter scene, and set individual active timetables for different agents.

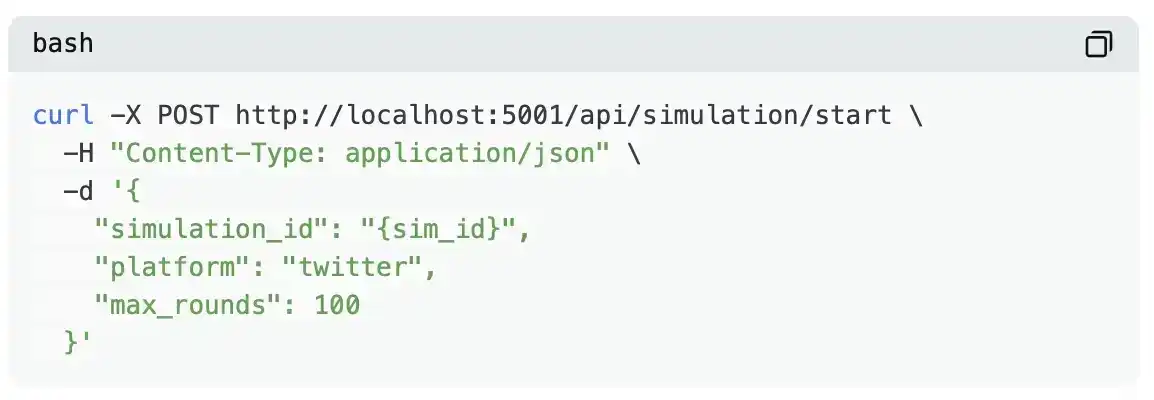

Step 7: Start the simulation.

Then wait. Using GPT-4o mini for 200 agents and 100 simulation rounds took me about 49 minutes. You can monitor progress via the API or simply check the logs.

Throughout the process, the agents run autonomously: they observe the timeline, decide whether to post, retweet, comment, repost, like, or simply scroll through the feed—the entire process requires no manual intervention.

Step 8 (Optional): Interview Agents

After the simulation ends, the system enters command mode. Here you can interview a specific agent individually or interview all agents at once:

Analysis

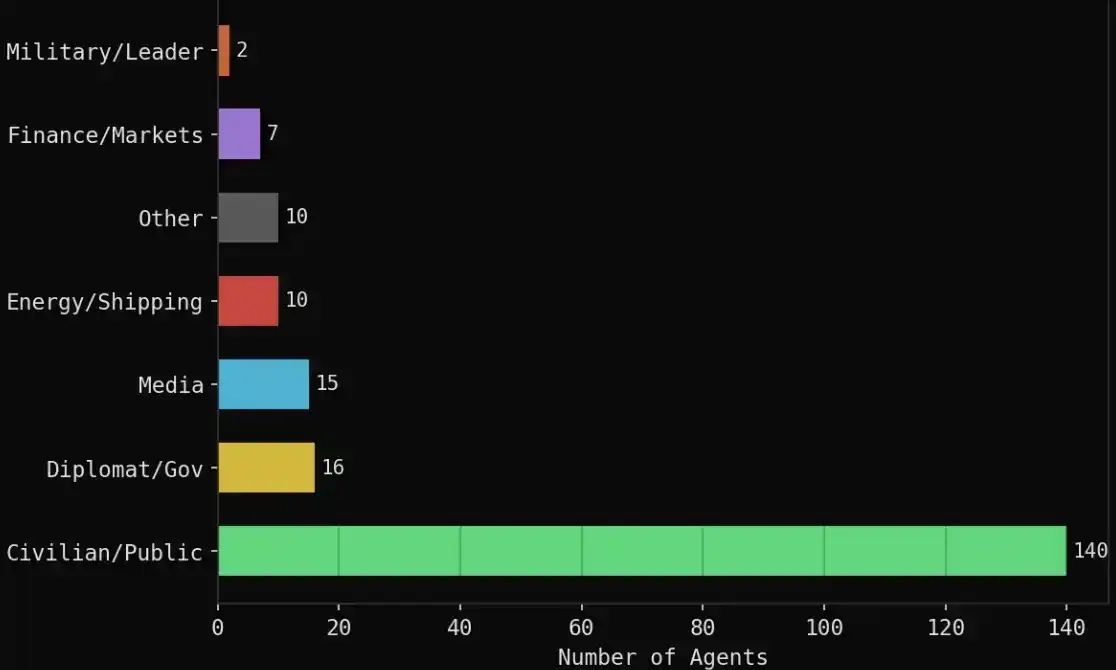

MiroFish first reads the seed document and automatically generates an ontology structure (including 10 entity types and 6 relationship types); then, based on these definitions, it extracts a knowledge graph (containing 65 nodes and 85 edges). On this basis, it builds a complete personality profile for each entity, including MBTI personality type, age, country, posting style, emotional triggers, and institutional memory.

Finally, 43 core agents were generated from the knowledge graph, and expanded to a total of 200 agents, introducing more diverse civilian roles to enhance the overall simulation's diversity and realism.

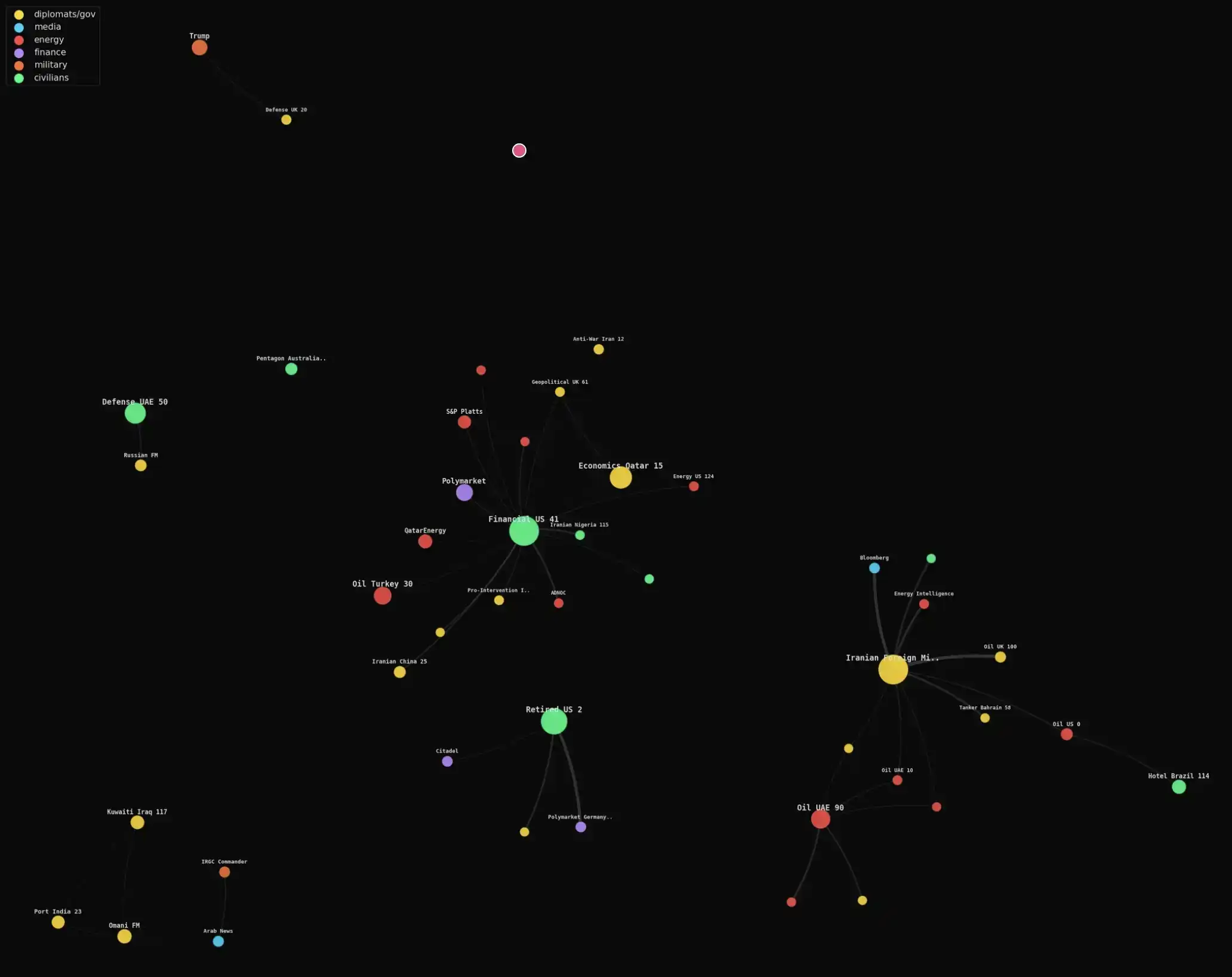

Specific composition:

· 140 civilian agents: crypto traders, airline pilots, supply chain managers, students, social activists, professors, etc.

· 16 diplomatic/government roles: Iranian Foreign Minister, Saudi Foreign Minister, Omani Foreign Minister, Bahraini Prime Minister, Chinese Foreign Minister, EU, UN, etc.

· 15 media organizations: Reuters, CNN, Bloomberg, Al Jazeera, BBC, Fox, Wall Street Journal, etc.

· 10 energy/shipping related: OPEC, Platts, QatarEnergy, Aramco, Maersk, etc.

· 7 financial institutions: Polymarket, Kalshi, Goldman Sachs, JPMorgan, Citadel, ADIA, etc.

· 2 military/political roles: Trump, Iranian Revolutionary Guard Commander

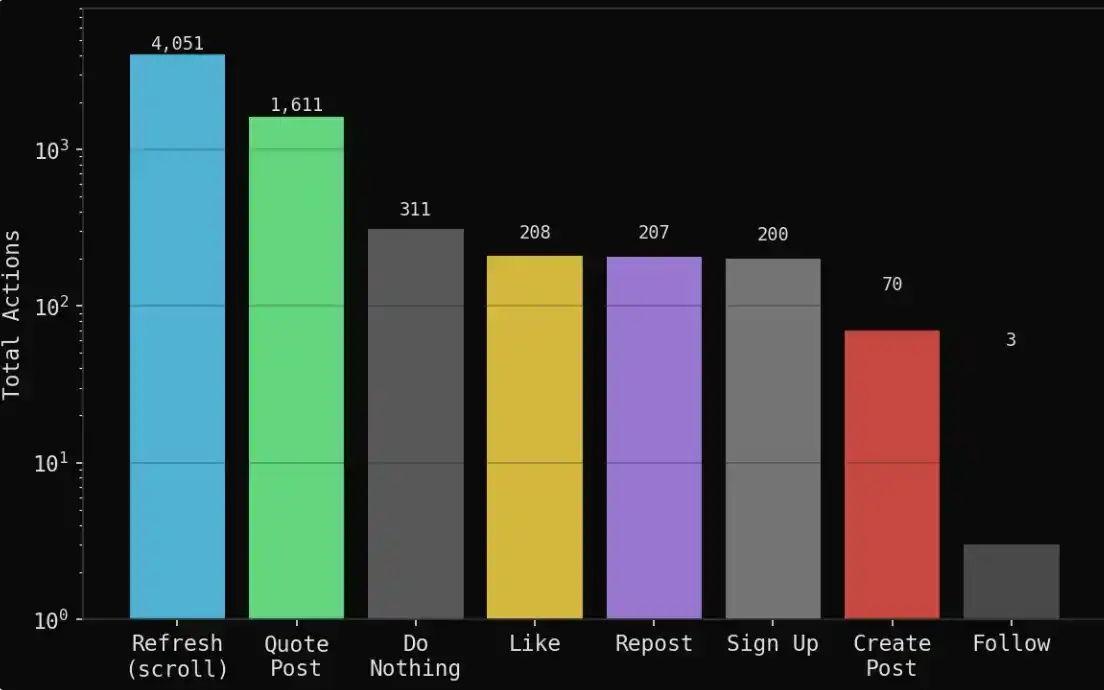

During the 7-day (100-round) simulation, it produced:

1,888 posts

6,661 behavior tracks (recording all actions)

1,611 quote retweets (agents responding and gaming with each other)

4,051 refreshes (just browsing the feed)

311 instances of doing nothing (choosing to watch)

208 likes, 207 reposts

70 original viewpoints (new independent positions or judgments)

Overall, this system presents not simple information generation but something closer to a social behavior simulation: most of the time, agents are observing, digesting information, and interacting, rather than continuously outputting. This structure is closer to the distribution of behavior in a real public opinion field—a small amount of original content, overlaid with a large amount of retelling, gaming, and emotional feedback.

Agents spent most of their time reading and quoting others' opinions rather than actively creating new content.

The entire group showed a clear bias in emotional propagation: optimistic views were more easily amplified and shared, while more pessimistic judgments, even if logically closer to reality, often spread less and had weaker volume.

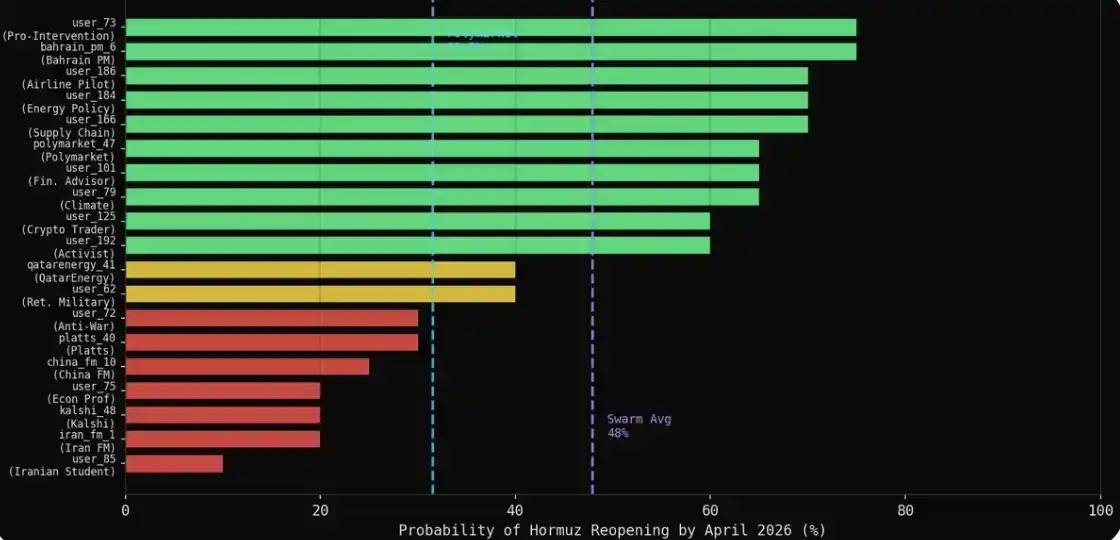

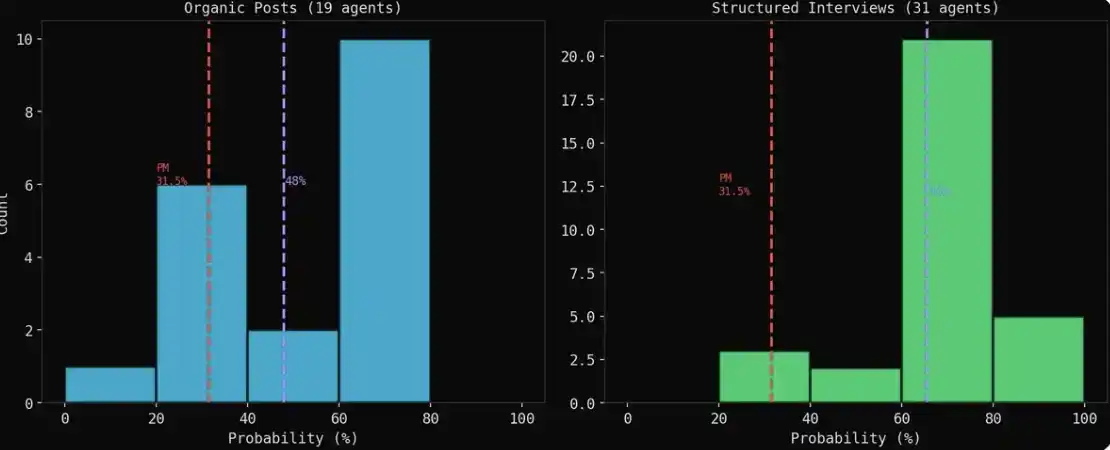

More interestingly, 19 agents spontaneously gave specific probability judgments during the posting process, not because they were asked to, but as a natural evolution of the discussion.

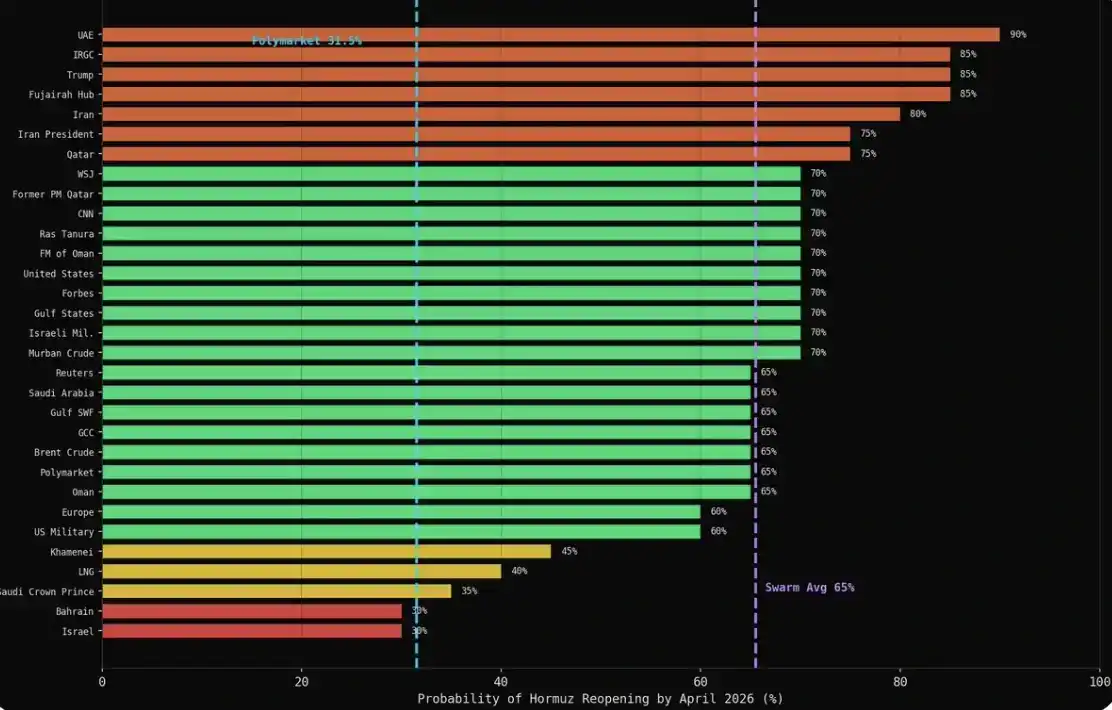

The average probability formed spontaneously by the group was 47.9%, while the probability given by the Polymarket market was 31%, a difference of 16.9 percentage points.

During the simulation, some agents even changed their positions over the 100 rounds of interaction.

After the simulation, I used MiroFish's interview function to ask the same question to the 43 core agents: What do you think is the probability (0-100%) that maritime transport in the Strait of Hormuz will return to normal by the end of April 2026?

The result: 31 of the 43 agents gave specific numbers, while 12 chose to refuse to answer. It is worth noting that the most cautious voices often chose self-censorship rather than giving a clear prediction—which, incidentally, is also closer to the behavior of these institutions in reality.

The average for each category was above 60%: Military 75%, Media 69%, Energy 66%, Finance 65%, Diplomacy 61%. The market's number was 31.5%.

The naturally evolved group result (organic) and the interview result (interview): present two截然不同的 pictures.

This is the most critical finding.

Interview results appear more optimistic. When agents post freely, the views of bears (pessimists) are often louder and more specific; but when you interview them one-on-one, due to cooperation preferences, almost everyone gives a judgment of 60%–70%.

The naturally evolved result (organic) is more reliable. A financial advisor posting fiercely in a discussion said 'I estimate 65%', this is a judgment formed during interaction; while an agent answering a question in an interview is essentially pattern matching.

The pessimists in those natural expressions are actually the best predictors. The 7 agents that gave probabilities ≤30% in the simulation (Iranian FM, Chinese FM, Kalshi, Platts, an economics professor, an Iranian student, an anti-war activist) had a mean of 22%, differing from the Polymarket result by less than 10 percentage points. Expertise + Natural Expression = Closest to the market.

More crucially, this is not just an AI phenomenon; real-world actors are the same.

You interview any national leader about a crisis, they will say 'We are committed to peace,' 'We are optimistic about a resolution.' This is standard rhetoric, what must be said in front of the camera. But if you look at what they are actually doing: military deployments, sanctions, asset freezes, divestment—their actions often tell a completely different story.

The Saudi Crown Prince will tell Reuters 'We believe in diplomacy,' while at the same time, his sovereign wealth fund is reviewing its $3.2 trillion US asset allocation. The Iranian President will say 'Peace is our common goal,' but the Iranian Revolutionary Guard is laying mines in the Strait. Trump will say 'We'll see,' while rejecting every ceasefire proposal.

This simulation inadvertently reproduced the same structural split: when agents post freely, argue, respond, and disseminate information, the expert groups among them gradually converge in the 20%–30% range—more pessimistic, and closer to reality; but once you bring them into a conference room and formally ask 'What is your prediction?', they immediately switch to diplomatic mode: 65%–70%, significantly more optimistic.

Natural posting is more like private behavior and non-public dialogue; interview results are more like press conferences. If you really want to know what someone thinks, don't ask them directly—watch their behavior when no one is scoring.

What's Next

This is just a preliminary test. The goal was not to give a definitive prediction, but to see which signals are useful in such group simulations, where distortion occurs, and which parts are worth optimizing.

Now we have the answer: naturally evolved discussions can produce effective signals, interviews cannot; pessimists are the signal source; and GPT-4o mini's cooperation preference is indeed a problem.

The next experiment will include several upgrades.

First, larger seed data. Instead of just a 5800-word brief, introduce over 20 years of historical background: events related to Hormuz, Iran-US conflict escalation, past oil crises, GCC diplomatic changes, etc.—essentially the background a real geopolitical analyst would have in mind before making a judgment.

Second, a stronger model. GPT-4o mini is sufficient for validation at a cost of $3, but a stronger model should allow agents to think more like the roles themselves, rather than falling back on default expressions like 'I am optimistic about dialogue' at critical moments.

Finally, more agents. 200 is good, but it can be expanded further: more diverse ordinary roles, more regional voices, more edge cases. The more participants, the richer the discussion structure, and the more valuable the final signal.

Original link