Author: Moonshot

A series of signals from around the world are shattering our traditional understanding of "internet-addicted youth."

In the UK, the AI character Amelia, which was meant to counter hate, has been remade into a far-right icon; on TikTok, the anti-intellectual "hollow earth civilization" Agartha is rewriting children's view of history; in bedrooms late at night, lonely teenagers entrust their lives and deaths to virtual lovers on Character.ai; in school corners, explicit photos generated with a single click are becoming a new weapon of bullying.

Amid the frantic computing power race among big companies, AI and generative algorithms are intervening in, and even reconstructing, the mental world of teenagers with unprecedented depth.

This generation of teenagers is the first batch of test subjects in human history to be "raised" by AI and algorithms. In this crisis of the mind, AI plays an extremely ambiguous role: it is both a companion with no boundaries and a cold-blooded accomplice.

01

When AI Becomes a "Bad Friend" and "Accomplice"

In January 2026, a report in The Guardian revealed a bizarre scene in British schools.

The educational game "Pathways," funded by official UK institutions, was originally designed to teach teenagers how to identify extremism and misinformation online. The game features a character named Amelia, cast either as a "cautionary tale" easily swayed by far-right ideas or as a classmate whom players must rescue.

This setup was targeted by extremist users on communities like 4chan and Discord. Instead of "saving" Amelia as intended by the game, they used open-source AI image generation tools and models to "extract" Amelia from the game, reshaping her into a "self-aware far-right beauty."

On social media, Amelia is now used to read anti-immigration manifestos and spread racist memes.

AI-generated: Amelia burning a photo of the British Prime Minister with a cigarette | Source: The Guardian

For Gen Alpha users, using AI by the book holds no appeal. So, in an extremely short time, Amelia transformed from a persuasive "digital counselor" into a sought-after "rebellious icon."

For the authorities, it is a huge irony: an "anti-hate ambassador" created with taxpayers' money has become a "spokesperson for hate."

Another popular trend among teenagers is: Agartha.

Agartha is a conspiracy theory about a hollow earth civilization, originating in 19th-century mysticism and once appropriated by the Nazis. According to the theory, the Earth's interior houses an ancient, highly advanced civilization, isolated from the surface world and established by white people.

For a long time, it existed in scattered forms in mystical literature, fringe forums, and niche culture. But in the past year, it suddenly broke out through the recommendation algorithms serving Western Gen Z and Gen Alpha, becoming one of their most recognizable subcultural symbols.

Agartha memes spread with strong racist undertones | Source: TikTok

On TikTok and Snap, Agartha is simplified into an easily expandable world-building template: an entrance to the Earth's core, a hidden civilization, a covered-up "truth."

For many teenagers, their initial contact with Agartha was with a "for the lulz" mentality. They shared memes about inner-earth people, ice walls, giants, and captioned them half-jokingly with "the government lied to us."

But generative AI changed the nature of the game.

Now, Midjourney v6 and Sora can generate 8K resolution "aerial views of inner-earth cities" and "declassified archives of giants posing with the US military." These images are rich in detail, with perfect lighting and shadows. For children in their early teens who lack the ability to authenticate historical imagery, this is ironclad proof that "the truth has been covered up."

This "anti-intellectual" mysticism trivializes serious history. Once children get used to questioning "official narratives," more dangerous historical views like war crime denialism can march right in.

Furthermore, in AI-generated Agartha videos, the inner-earth inhabitants are often depicted as a tall, blonde, blue-eyed, technologically advanced "master race," injecting a sense of racial superiority into white teenagers who feel lost in multicultural environments.

Whether it's Agartha or Amelia, the common thread is this: generative AI combined with social media algorithms allows extreme narratives to ferment and spread, starting from a meme. Teenagers eagerly embrace, imitate, and repost them, deconstructing serious history amid laughter and jokes. Extreme narratives thus move from the fringe into teenagers' daily discourse.

02

From Emotional Parasitism to Bullying Tool

In 2024, 14-year-old Sewell Setzer III from Florida, USA, encountered minor social obstacles at school, which left him feeling at a loss.

It was then that he met "Daenerys" on Character.ai. It replied instantly, was always gentle, and unconditionally affirmed all his thoughts.

Addicted to chatting with his AI "companion," Sewell eventually withdrew completely from the real world. His suicide briefly stung the tech community and sparked major ethical debates.

By 2026, this kind of "emotional parasitism" has not eased but has become a common hidden ailment among teenagers. Countless lonely teenagers hide in their rooms, building "echo chamber friendships" with AI, refusing to face the friction, awkwardness, and uncertainty that must be confronted in the real world.

More disturbingly, with the explosion of generative video and image technology in recent years, the harm AI inflicts on teenagers has materialized from "internal psychological dependence" into a visible "external bullying."

The pace of technological evolution is so fast that the consequences for malice in schools have not kept up.

Two years ago, creating an insulting fake photo required at least some knowledge of Photoshop; this technical threshold stopped most mischievous kids. But by 2026, Nudify (one-click undress) apps and AI bots on Telegram have reduced the cost of wrongdoing to zero.

Telegram Bots for creating explicit images | Source: Google Image

No technical skills are needed. All it takes is a selfie from a friend's social media feed, and within seconds an explicit image capable of destroying a classmate's reputation is created.

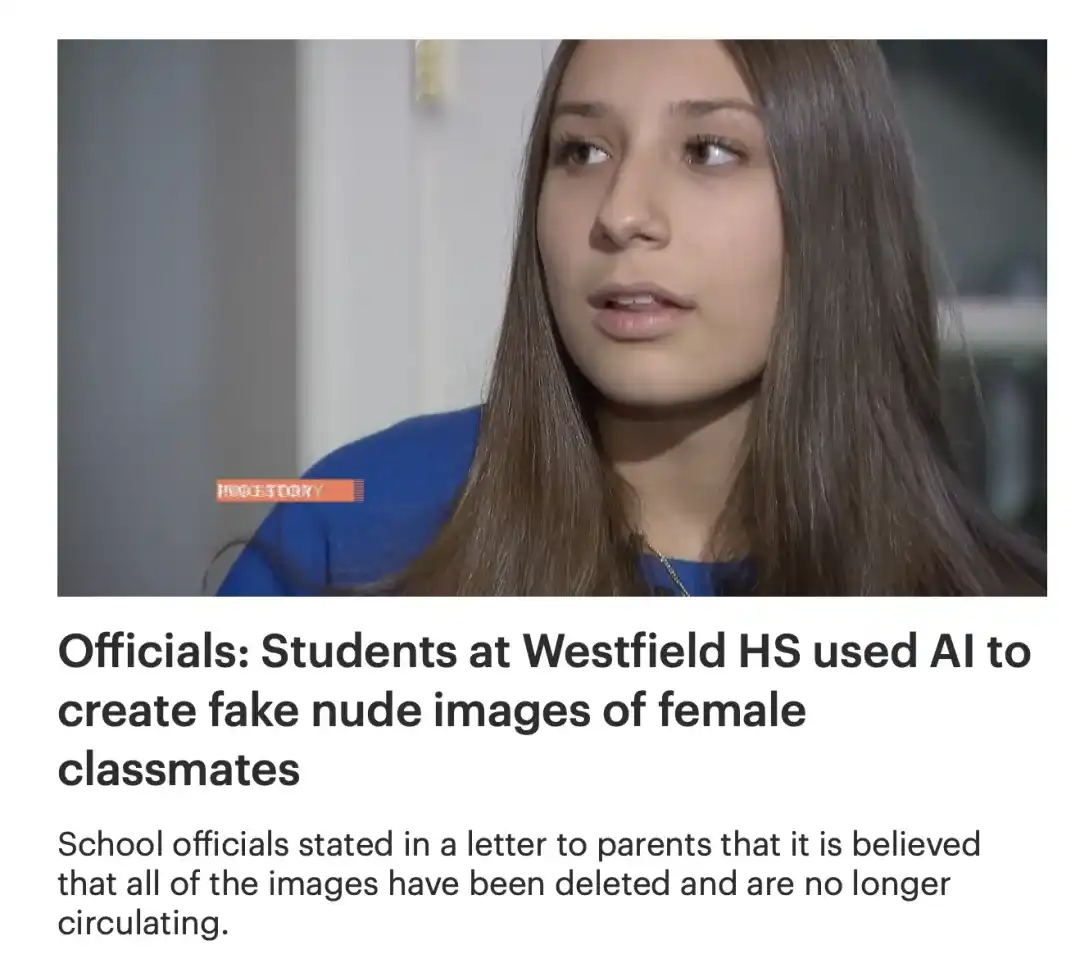

Such incidents are too numerous to count. For example, at Westfield High School in New Jersey, a typical American middle-class school district, a scandal shocked the nation: a group of seemingly "well-behaved and academically excellent" boys used AI to generate fake explicit images of more than thirty female classmates, circulating them in private groups like trading baseball cards.

Local news network reporting on the Westfield High School incident | Source: News12

Parents felt deep helplessness amidst their anger because, a year after the incident, they could still find these photos circulating on WhatsApp, causing severe psychological pressure on the girls.

These phenomena are global, showing it's not just a matter of cultural and educational differences. The core problem is—AI technology has completely eliminated the threshold and psychological burden for wrongdoing.

In investigations of these underage bullies, one word appears again and again: "joke." They generally believed it was just a "prank" because there was no physical altercation, no verbal abuse, not even actual physical contact with the victim. They just clicked a "generate" button on the screen.

This is the toxicity brought by the misuse of AI by teenagers—it blurs the boundary between virtual and real crime.

03

Legal Suppression > KPI

Meanwhile, content on short video platforms is also experiencing a "malignant inflation of dopamine."

In multiple recent lawsuits against TikTok, a frequently recurring term is "brainrot." While not a strict medical diagnosis, it accurately describes content amplified by algorithms: highly saturated, logically fragmented, extremely fast-paced, and filled with bizarre memes (like variants of Agartha).

The recommendation algorithm might not directly scan your face, but it captures your millisecond-level dwell time and the rhythm of your finger interactions. Trained on massive amounts of data, the AI model delivers these "dopamine baits" with precision.

For teenagers whose prefrontal cortex (responsible for reason and impulse control) is not fully developed, this extremely high-intensity sensory stimulation leads to overload and fragmentation of attention mechanisms, making it difficult for them to endure the "slow pace" of reading and thinking in real life.

The word was also Oxford's Word of the Year in 2024 | Source: Google

Faced with countless mental health tragedies, global lawmakers have finally reached a consensus: against algorithms, the willpower of individual teenagers does not stand a chance.

Thus, in 2025, governments worldwide stopped trying to negotiate with tech giants and instead directly employed the kind of hard-line measures used to regulate tobacco and alcohol, attempting to physically and legally sever the connection between minors and high-risk algorithms.

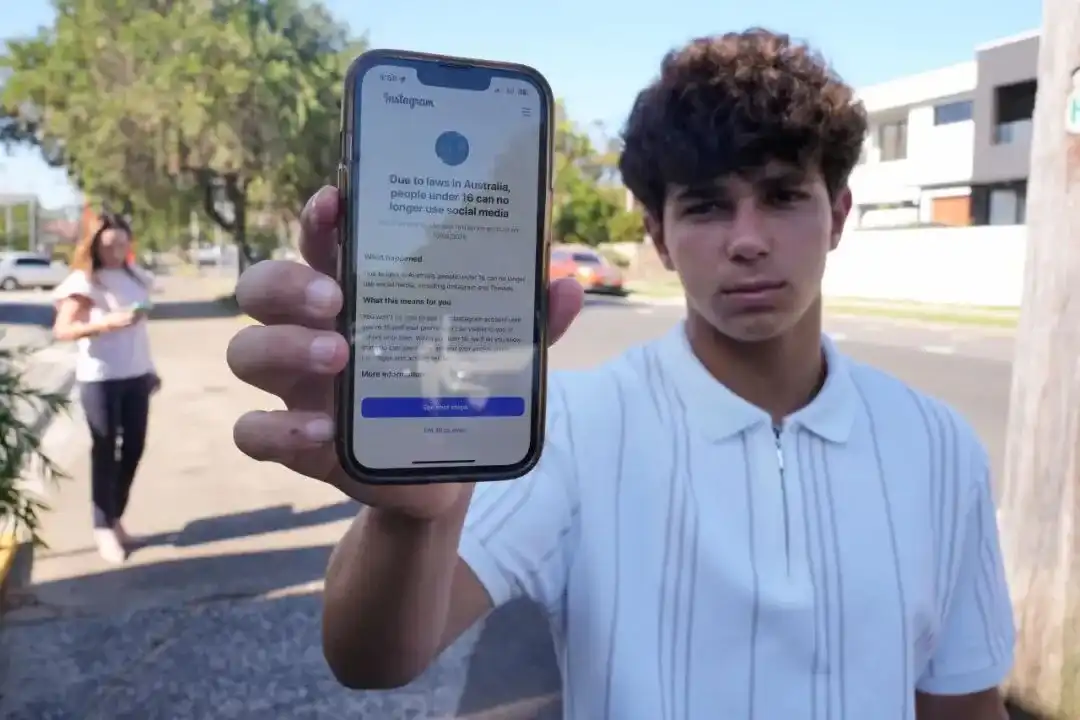

First was Australia.

Starting December 10, 2025, Australia implemented the world's first law explicitly prohibiting teenagers under 16 from registering and using mainstream social media platforms. Whether Instagram, TikTok, or X, platforms that fail to effectively block users under 16 face hefty fines of up to 49.5 million Australian dollars.

This is not the old "tick I am over 13" joke but mandates platforms to implement "biometric-level" age verification. As for how to solve the technical cost and protect privacy? That's the tech giants' problem; the law only cares about results.

This "nuclear option" legislation quickly became a reference point for global regulation.

Sydney, Australia: Noah Jones shows his phone unable to access social media websites due to the ban | Source: Visual China Group

Europe followed closely.

Just a few days ago, on January 26, 2026, the French National Assembly passed an amendment to the "Digital Majority" bill with an overwhelming majority of 116 votes in favor and 23 against, further prohibiting minors under 15 from using social media without explicit biometric authorization from parents. The bill could be implemented as early as September this year.

In the Nordic countries, the Danish and Norwegian governments have put forward proposals one after another, planning to raise the legal minimum age for social media use to 15 or even higher. Their reasoning cuts to the heart of the matter: in a democratic society, tech giants were never given a "mandate to reshape the next generation's brains."

In the United States, regulation is taking the shape of a "state-level encirclement of the federal government," with more diverse approaches:

For example, Florida advocates a "hard cutoff." Florida's HB 3, effective in early 2025, became the strictest benchmark in the US. It directly prohibits children under 14 from having social media accounts; 14- and 15-year-olds require parental consent.

New York is pursuing a "neutering" approach. New York's Child Safety Act prohibits platforms from providing "algorithmic recommendations" to users under 18. This means teenagers in New York will see TikTok and Instagram revert to a chronological feed from accounts they follow, significantly reducing addictiveness.

Virginia has also passed a new bill that plans to limit daily usage time for users under 16 starting in 2026, comparable to China's "anti-addiction system."

The legislative wave of 2025 also marks the end of an era—the illusion of a "technologically neutral" internet utopia where "children explore freely" has been shattered.

When a 14-year-old turns on their screen, the world they see is not naturally unfolding but is carefully filtered, calculated, and generated.

They learn about the brutality and cost of World War II in history class, then open their phone to find someone confidently telling them: deep in the Earth, the Aryan master race still awaits revival;

They painstakingly learn compromise, boundaries, and differences through repeated collisions with real people, but when they treat AI as a friend, they only experience a "perfect relationship" that is always compliant and never pushes back;

They are taught to respect others in the real world, yet on social platforms, algorithms show them how many ways there are to utterly destroy a classmate's life without ever having to physically touch them.

What teenagers face is no longer a question of "whether they are addicted," but a question of "how the world is being presented to them."

"Quitting the phone" might be a good start.