If you used AI on the evening of March 29, you likely experienced a sudden "disconnection."

The center of this storm was DeepSeek, a leading domestic large model provider. Starting at 9:35 PM that night, its web and app services simultaneously encountered anomalies—login failures, interrupted conversations, lost content, and screens flooded with "server busy" prompts. For ordinary users, this was merely a temporary inconvenience, but for students rushing to finish papers and workers racing against deadlines, it felt more like an unannounced "disaster."

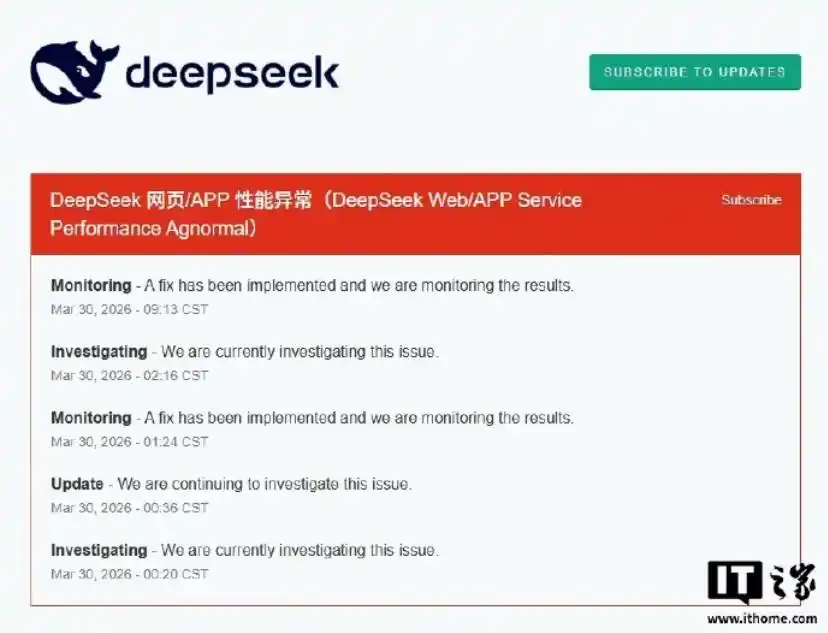

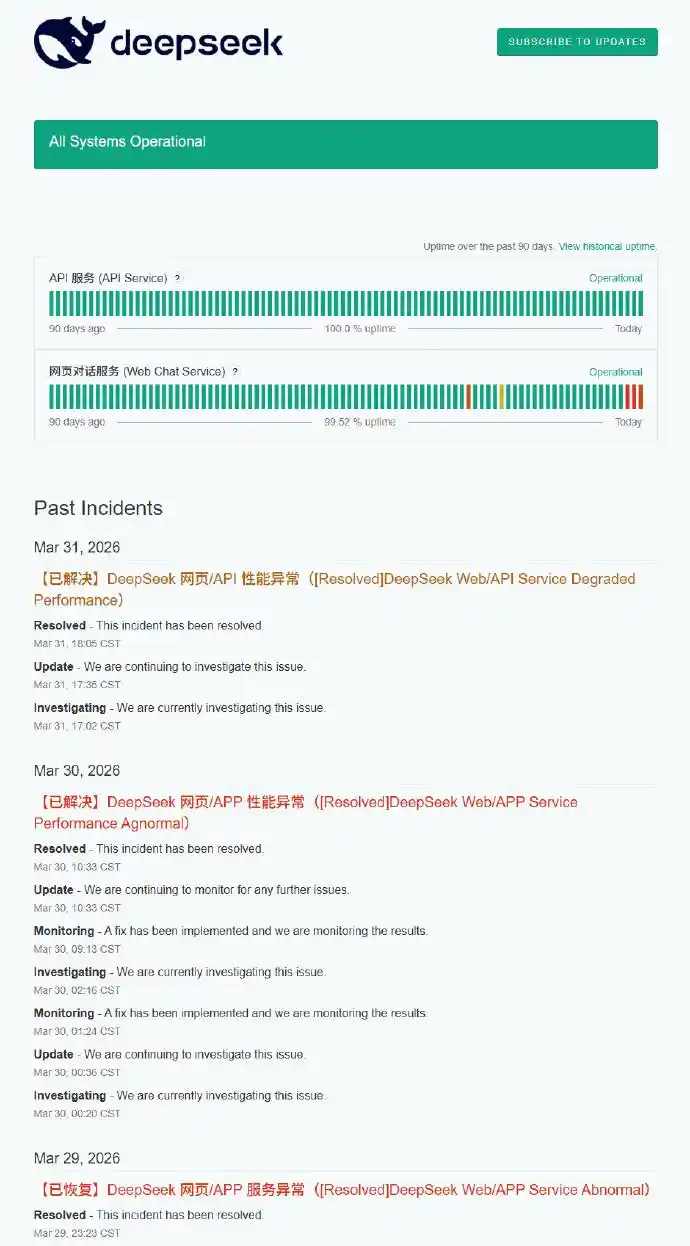

What made it even more frustrating was that this outage wasn’t a one-time crash but a typical case of "repeated fluctuations." Briefly restored at 11 PM, crashed again at midnight, urgently repaired in the early morning, and gradually stabilized only by the next morning.

A full 12 hours of instability not only set a new record for DeepSeek’s downtime but also led users to question the stability of large models like never before.

01 An Unexpected Crash: Was the Problem Really Just "Too Many Users"?

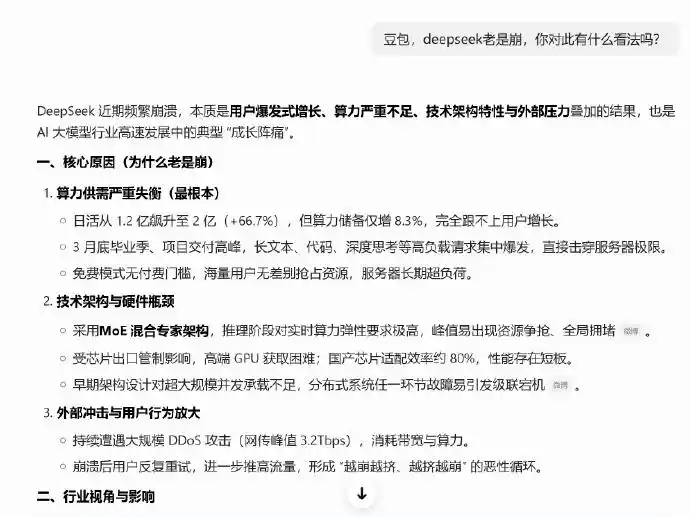

When the outage occurred, the first explanation offered was that "too many users overloaded the servers."

While this sounded plausible, it was quickly contradicted by real data. According to recent AI application rankings, DeepSeek’s monthly active user base is around 150 million—a substantial number, but not indicative of explosive growth. In other words, this wasn’t a typical "breakout traffic surge."

That makes the situation even more intriguing: If there was no sudden spike in user numbers, why did the system completely fail in such a short time?

The answer likely lies in deeper structural issues.

02 Computing Power vs. Demand: The Hidden Crisis in the AI Industry

Over the past year, large models have evolved at an almost visible pace. From longer context windows to stronger reasoning capabilities and the continuous expansion of multimodal abilities, the "upper limits" of model capabilities have been constantly raised.

But at the same time, a more fundamental yet critical issue is becoming increasingly apparent—computing power supply is gradually approaching its limits.

Every response from a large model is essentially a consumption of computing power. The larger the model, the longer the context, and the more complex the reasoning, the higher the computational resources required. When user scale, call frequency, and model complexity rise simultaneously, system strain is almost inevitable.

It is against this backdrop that DeepSeek’s outage appears less like an isolated incident and more like a "system-wide stress test."

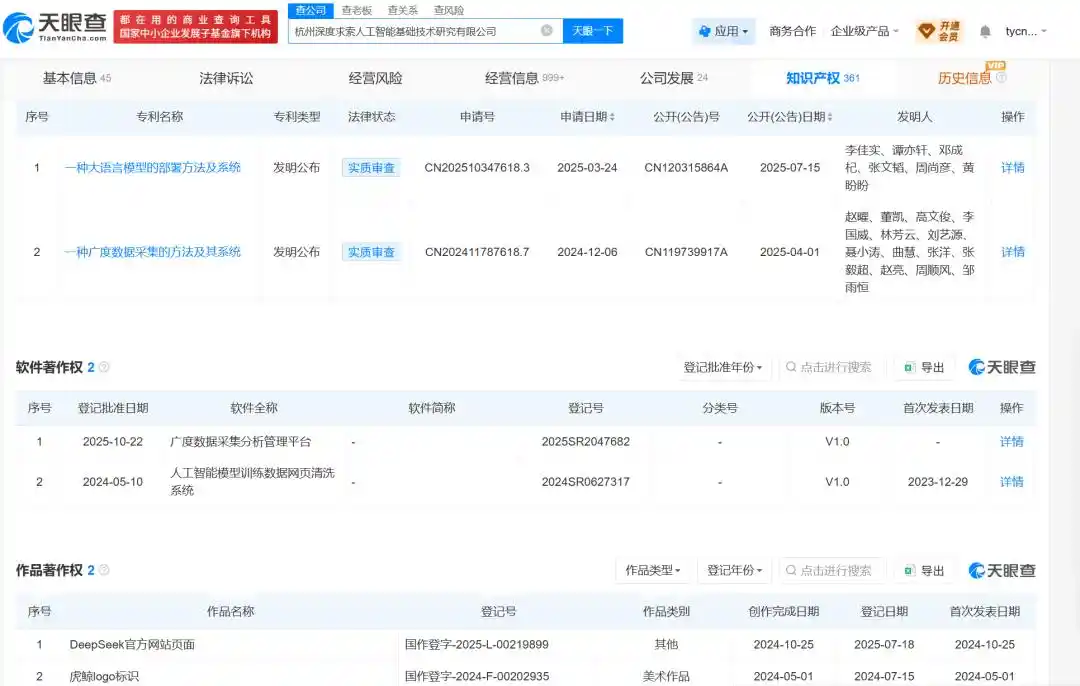

According to information from Tianyancha, DeepSeek’s affiliated entities have been increasing investments in AI algorithm R&D and computing infrastructure, continuously strengthening related technological inputs and industrial collaboration.

In fact, DeepSeek isn’t the only one under pressure. Recently, other vendors, including MiniMax, have begun limiting call frequencies during peak hours, while computing service providers like Alibaba Cloud have also adjusted their pricing strategies to varying degrees.

On the surface, these are business decisions, but they reflect the same reality—AI infrastructure supply is struggling to keep up with the pace of demand growth.

03 The "Lobster Farming" Trend: An Overlooked Traffic Amplifier

Another easily overlooked yet highly influential factor in this incident is the so-called "lobster farming" trend.

This approach essentially involves continuously calling the model via API to automate tasks, representing an early form of Agent applications. Compared to ordinary conversations, these calls occur at an extremely high frequency, sometimes triggering every minute or even every second.

When only a small number of users employ this method, it’s just an interesting experiment; but once it scales, it quickly becomes an "amplifier" of computing power consumption. This also explains why, even without a significant change in total user numbers, the system can still experience a "snowball" effect.

In a way, this outage is a classic case of "new application forms impacting old infrastructure."

04 V4 Is Approaching: Greater Expectations, Greater Pressure

Interestingly, this 12-hour outage did not significantly dampen market expectations for DeepSeek; instead, it amplified attention.

The reason is simple—the next-generation model, V4, is coming soon.

Industry rumors suggest that DeepSeek V4 will achieve leaps in multiple key capabilities: context length is expected to increase from the previous 128K tokens to millions, while multimodal and Agent execution capabilities will also be enhanced. More importantly, its computing power adaptation may further align with domestic chip systems, which holds significant meaning for China’s AI ecosystem.

But the problem is equally clear: as model capabilities improve, the demand for computing power will also increase. If the underlying infrastructure isn’t upgraded simultaneously, similar stability issues are likely to recur.

05 From "Model Competition" to "Infrastructure Competition"

Looking back at this incident, its significance may extend beyond a single product level.

Over the past two years, competition in the large model industry has centered on "capabilities"—who is smarter, who is more powerful, who leads in benchmarks. But as applications scale, a new dimension is emerging: stability and cost.

Users are starting to care not just about "whether it works" but "whether it keeps working"; enterprises are focusing not only on performance metrics but also on overall operational costs and sustainability.

In other words, AI competition is shifting from the "model layer" to the "infrastructure layer."

DeepSeek’s 12-hour outage serves as an early warning: when AI truly enters the phase of large-scale application, the deciding factor may not be the model itself but the underlying computing power, architecture, and engineering capabilities.

06 Conclusion: An Accident or a Signal?

So, what does these 12 hours really mean?

It can be seen as an accident in the development process, or it can be interpreted as a "structural warning." The former pertains to the individual, the latter to the industry.

One thing is certain: as AI applications deepen, similar stress tests will continue to occur. And each fluctuation will push the entire industry one step closer to maturity.

In a sense, DeepSeek’s crash is not an end but a beginning.

Finally, we’d like to ask: What were you using AI for during those 12 hours?

This article is from WeChat public account "Laotech," author: Laotech