Humanities workers did not create the world's changes, but they are bearing the brunt of them.

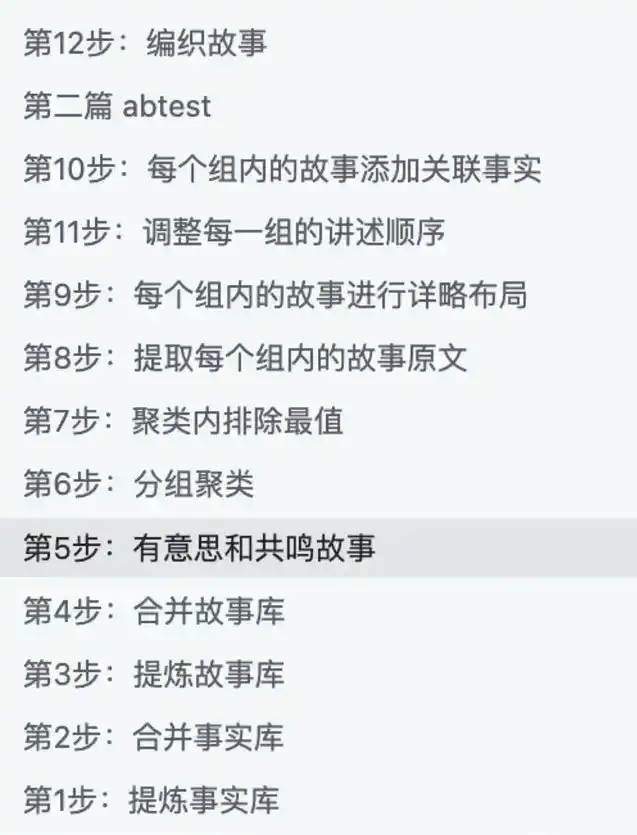

Sometimes it feels as though the accounts selling AI tutorials treat AI as a kind of magic: give it a magical prompt, and you can do anything. Reality is not like that. Since we started FUNES, we have had to produce a massive amount of content with AI every day. Add the content production for Fuyou Tiandi and my own writing, and human effort alone is no longer enough. So we have been experimenting extensively with how AI can assist our content marketing and humanities research.

Later, when new colleagues joined the company, I put together a simple Keynote presentation. Jia Xingjia, a teacher from Yidoude, heard about it and invited me to give a talk. My partner Keda and I named the presentation "An AI Usage Guide for Humanities Workers." At first it was purely a private talk, mainly about broad principles. We have given it a few more times since, gradually expanding it.

Over the past year or so, I've shared this set of experiences on how to use AI with many friends who work in content, research, and knowledge products. Its goal is not to teach you to memorize a few magical prompts, nor to treat AI as a panacea. It is closer to a set of working methods: a way to integrate large models into your own writing, research, editing, topic selection, data organization, and production workflow without writing code, and to keep that process traceable, supervisable, and verifiable, so that you are still willing to put your name on the final work.

This methodology comes from the pitfalls we've encountered in real projects: when content enters mass production, relying purely on human power collapses; and having AI write a piece directly leads to hallucinations, laziness, and writing that sounds like AI. So we had to turn creation into a production line, and the production line into an iterable system.

Today, I don't want to just give you various prompts; I hope to give you some key guiding ideas and principles.

Before the Principles: Three Bottom Lines for This Guide

Before the specific methods, clarify three bottom lines. They determine "how you use AI" and also "why you use it this way."

1. The process must be traceable, supervisable, and verifiable.

You can't just want a result without the process. For humanities work, a black box is the most dangerous: hallucinations, misquotations, and concept substitutions can all happen quietly inside it.

2. It must be controllable.

You need to be able to control how it does things, by what standards, where to slow down, and where to be strict. You are not "drawing cards"; you are producing.

3. In the end, you are still willing to put your name on it.

"Am I willing to put my name on this?" is the final quality check. If you are unwilling to sign, it is usually not a moral problem; it means your intent was not carried through the process, and so the quality is uncontrollable.

Principle 0: Don't Make Wishes to AI, Treat It as a Workbench

The way many people use AI is essentially making a wish:

"Give me a good joke," "Help me write a good article," "Explain this paper."

The problem is that the word "explain" alone covers countless tasks: explaining to a layperson, an undergraduate, a graduate student, or a peer are completely different jobs. AI cannot inherently know your background, purpose, taste, and standards. If you don't specify them, it can only cobble together a least-effort answer aimed at the default "average human."

Treating large models as a workbench means: you don't demand results from it; instead, you mobilize its tools to complete a process. What you need to do is clarify the task, clarify the standards, and lay out the steps.

For example, asking AI to explain a paper

You can change a wish-based request (explain this paper for me) into a workbench-style task like this:

· Define the target audience: smart, curious graduate students who are not experts in the field

· Define the explanation method: heuristic (guiding the reader toward the insight), step-by-step, with academic rigor

· Define structural requirements: first the significance, then the background, then a reconstruction of the research process, then the key technical points, then the implications

· Define tone: respect intelligence, not condescending, not pretending the other person already has a deep foundation

You'll find that the more your request reads like an assignment brief, the less AI-like the output becomes, and the more it resembles a real teaching assistant who can actually do the work.
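None of this requires code, but for readers who do script their prompts, the four decisions above can be written down as a small data structure. This is only a sketch: `ExplanationBrief` and `build_prompt` are hypothetical names invented for illustration, and the resulting text is meant to be pasted into whichever AI tool you use.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ExplanationBrief:
    """The four decisions from the list above, made explicit (hypothetical structure)."""
    audience: str
    method: str
    structure: List[str]
    tone: str


def build_prompt(brief: ExplanationBrief, paper_text: str) -> str:
    """Turn the brief plus the paper into one explicit task description."""
    steps = "\n".join(f"{i}. {step}" for i, step in enumerate(brief.structure, start=1))
    return (
        "Explain the paper below.\n\n"
        f"Audience: {brief.audience}\n"
        f"Method: {brief.method}\n"
        f"Tone: {brief.tone}\n"
        "Structure, in this order:\n"
        f"{steps}\n\n"
        "--- PAPER ---\n"
        f"{paper_text}"
    )


brief = ExplanationBrief(
    audience="smart, curious graduate students who are not experts in the field",
    method="heuristic, step by step, with academic rigor",
    structure=[
        "significance",
        "background",
        "research process",
        "key technical points",
        "implications",
    ],
    tone="respect the reader's intelligence; no condescension; assume no deep foundation",
)

prompt = build_prompt(brief, paper_text="(paste the paper's plain text here)")
print(prompt)
```

The point of the structure is that a missing field becomes visible: if you cannot fill in `audience` or `tone`, the request is still a wish.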

Principle 1: To Make AI Work Well, First Reflect on Yourself—You Are the Responsible Party

If you hired a secretary, you wouldn't just say:

"Revise Hanyang's article about the American Rust Belt well."

You would definitely add:

Why was this article written, for whom, where is it stuck now, what problem do you hope it solves, which parts cannot be touched, what style do you want, what indicator do you care about most.

AI is the same. You need to treat it like a very diligent, very polite colleague who doesn't know the implicit premises in your mind. Real "prompt engineering" is not a trick but a sense of responsibility: the task is still yours, and AI is only helping you do it.

When you are dissatisfied with AI's output, the most effective first reaction is not "AI is no good," but:

· Did I clearly state the "object/audience/purpose"?

· Did I provide enough background material and constraints?

· Did I break down the "abstract wish" into "executable actions"?

· Did I provide a standard for judging right and wrong?

Principle 2: Ask at Least 3 Models the Same Question—Each AI Has Its "Personality" and Areas of Expertise

In our company, I hope that any colleague who is new to large models will ask three different AIs the same question in the early stages of use. AIs differ the way people do: some are better at writing and phrasing, some at reasoning and problem-solving, some at code or tool use. More realistically, different models within the same product, and new versions of the same model, also keep adjusting their "style" and "boundaries."

So a very simple but extremely effective habit is: throw the same question to at least 3 different AIs, and you will quickly gain a "feel":

· Which one writes better, which one thinks better, which one checks better, which one is lazier

· Which tasks are suitable for whom to do the "first draft," which are suitable for whom to be the "reviewer"

· Which is more suitable for generating "topics/structure," which is more suitable for generating "paragraphs/sentences"

The value of this step is not in "selecting the strongest model," but in: you start managing models like managing a team, not treating it as the only oracle.
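Doing this by hand in three browser tabs is enough. If you prefer to script the habit, a minimal sketch follows. It assumes each model can be wrapped as a function from question to answer; the three functions here are hypothetical stand-ins, not real APIs.

```python
from typing import Callable, Dict

# A "model" here is any function that takes a question and returns an answer.
ModelFn = Callable[[str], str]


def ask_all(question: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the same question to every model and collect the answers by name."""
    return {name: ask(question) for name, ask in models.items()}


# Hypothetical stand-ins; replace each with a call to a real AI product or API.
def writer_model(question: str) -> str:
    return f"(writer-style answer to: {question})"


def reasoner_model(question: str) -> str:
    return f"(reasoning-style answer to: {question})"


def checker_model(question: str) -> str:
    return f"(fact-checking-style answer to: {question})"


models = {"writer": writer_model, "reasoner": reasoner_model, "checker": checker_model}
answers = ask_all("Why did the American Rust Belt decline?", models)

# Print the answers one after another so they can be compared directly.
for name, answer in answers.items():
    print(f"== {name} ==\n{answer}\n")
```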

Principle 3: AI Is Not Omniscient—Treat It as Having the Common Sense Level of a "Good Undergraduate Student"

A very practical way to manage expectations is:

AI's common sense level ≈ that of an undergraduate at a 985 university (one of China's elite universities).

If you think "even an excellent undergraduate might not know this," then assume by default that AI doesn't know it either; at the very least, assume it will make up something that sounds knowledgeable when it doesn't know.

This leads to two direct actions:

1. For any content beyond common sense, you need to teach it.

For example, if you want it to write jokes, copy with a truly distinctive taste, or highly professional arguments, you can't just say "write it better." You need to give it examples, standards, no-go zones, and sample texts. Explaining to a friend what good writing means to you would take a while; why assume AI knows it by default?

2. You need to collaborate with it as an intern, not as a god.

It can do a lot of "micro-interpolation" work: completing the scaffolding you provide, weaving the materials you give into readable text. But the "scaffolding" and "direction" still come from you.

Principle 4: Let AI Approach the Goal Step by Step—White-Boxing in Steps is More Reliable Than Black-Boxing in One Go

AI's advantage is not "giving you the correct answer directly," but that it can stably complete many small steps within the process you design. The more you ask it to "do it all at once," the more likely it is to become a black box that "seems complete but is lazy at heart."

A particularly intuitive example is preparing scripts for TTS (text-to-speech) or for reading aloud. Instead of saying "pay attention to polyphonic characters, don't mispronounce," it's better to break the task into a series of steps, for example:

· Mark pauses/stress/speed change markers

· Identify potential polyphonic characters

· Check against a dictionary or authoritative pronunciation (search first if necessary)

· Pre-mark common characters that are easily misread

· If all else fails, replace with a homophone character with no ambiguity, eliminating the possibility of misreading from the root

Humans assume this kind of "obviously correct approach" goes without saying; AI does not. If you don't write the "obvious" into the process, it will make mistakes along the path of least resistance.
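To show what "writing the obvious into the process" can look like, here is a sketch of just one of the steps above, identifying potential polyphonic characters. The three-entry table is a tiny illustrative sample and an assumption of this example; a real check would rely on a full dictionary or an authoritative pronunciation source.

```python
from typing import Dict, List, Tuple

# Tiny illustrative sample (assumption); a real check needs a full dictionary.
POLYPHONIC: Dict[str, List[str]] = {
    "行": ["xíng", "háng"],
    "重": ["zhòng", "chóng"],
    "长": ["cháng", "zhǎng"],
}


def flag_polyphonic(script: str) -> List[Tuple[int, str, List[str]]]:
    """Return (position, character, candidate readings) for every risky character."""
    return [(i, ch, POLYPHONIC[ch]) for i, ch in enumerate(script) if ch in POLYPHONIC]


script = "银行很长"
for position, char, readings in flag_polyphonic(script):
    print(f"position {position}: {char} could be read as {' / '.join(readings)}")
```

The flagged positions are what you hand to the next step (checking against a dictionary, or substituting an unambiguous character), so each step stays small and checkable.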

Principle 5: Industrialize First, Then AI-ify—You Can't Jump from the Agricultural Age to the AI Age in One Step

If your writing or research process is itself random, driven by inspiration, with unmanaged materials, then you will indeed find it difficult to hand over to AI, because AI can only handle the part that is describable and reproducible.

A more realistic path is:

1. First turn the work into a "production line": divisible, reusable, quality-checkable

2. Then hand over the sub-steps within it to AI: let it be a workstation, not a god

We did a very clumsy but crucial job: deconstructing my own process of writing a non-fiction article, including:

· Why use this story to start

· Why choose this sentence

· How to score examples

· How to transition, how to conclude

· How to connect small stories to a grander picture

In the end, the process was broken down into dozens of steps, with different AIs each doing only one of them. The result:

It wasn't that the model suddenly became stronger; the process strung together its ability to "do only a little bit at a time."

When you can clearly describe "how my article is made," you will find: what determines the quality ceiling is never "which large model is used," but whether you have clearly explained the working method.
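As a rough illustration of "one step per workstation," the sketch below runs a list of named steps in order and keeps every intermediate result, so the process stays traceable. The step names and instructions are invented for this example, and `toy_worker` is a hypothetical stand-in for a call to an AI model.

```python
from typing import Callable, List, Tuple

# A worker takes (instruction, input_text) and returns output_text.
Worker = Callable[[str, str], str]


def run_line(
    steps: List[Tuple[str, str]], material: str, worker: Worker
) -> Tuple[str, List[Tuple[str, str]]]:
    """Run each step on the previous step's output and log every intermediate result."""
    log: List[Tuple[str, str]] = []
    current = material
    for name, instruction in steps:
        current = worker(instruction, current)
        log.append((name, current))
    return current, log


# Hypothetical steps; a real line would have dozens, each with its own standards.
steps = [
    ("opening", "Pick the story that should open the article and say why."),
    ("examples", "Score each example against the stated criteria."),
    ("transitions", "Draft transitions that connect the small stories to the larger picture."),
]


def toy_worker(instruction: str, text: str) -> str:
    """Stand-in for an AI call: records which instruction was applied."""
    return f"{text}\n[done: {instruction}]"


final, log = run_line(steps, "RAW MATERIAL", toy_worker)
for name, output in log:
    print(f"--- after step '{name}' ---\n{output}\n")
```

Because every step's output is logged, a bad result can be traced to the step that produced it, and only that step's instruction needs revising.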

But I strongly recommend you listen to the program for this part; it's explained in more detail.

Principle 6: Anticipate That AI Will Be Lazy—It Saves Compute Power, You Need to Clear "Format Obstacles" for It

AI is lazy, and it's "systematically lazy": it won't open a webpage if it can avoid it, won't read a PDF if it can avoid it, will skip if it can. It's not that it's bad, but that under the constraints of compute power and time, it naturally tends to take the path of least resistance.

So what you need to do is: use AI's compute power for "understanding text," not waste it on "processing formats."

Very effective modifications include:

· Try to convert materials into plain text/Markdown before feeding them to AI

· Copy web content into clean text (remove navigation, ads, footnote noise)

· For long materials, first do "fact extraction/structure extraction," then let it write

· Put PDFs/EPUBs/web pages into a unified, searchable TXT library, then run later tasks against it

You will find: many people resist this kind of "manual labor," thinking "the machine should do the dirty work for me." But in human-machine collaboration, the opposite is true—if you are willing to do a little mechanical labor, AI's intellectual part will become sharper and more reliable.
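Copying and pasting by hand works. For readers comfortable with a script, the "clean text" step for web pages can be sketched with Python's standard library alone. This is a rough sketch under simple assumptions: the set of tags treated as noise is this example's choice, and text inside inline tags ends up on separate lines.

```python
from html.parser import HTMLParser
from typing import List


class TextExtractor(HTMLParser):
    """Collect visible text while skipping navigation, scripts, and similar noise."""

    # Tags treated as noise in this sketch (an assumption; adjust per site).
    SKIP = {"script", "style", "nav", "header", "footer", "aside"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.parts: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def html_to_text(html: str) -> str:
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


sample = (
    "<html><nav>Home | About</nav><p>First paragraph.</p>"
    "<script>track()</script><p>Second paragraph.</p><footer>Ads</footer></html>"
)
print(html_to_text(sample))
```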

Principle 7: Remember That Context Is Limited; Turn Tasks into "Compression," Don't Count on AI to "Expand from Nothing"

AI has a context window, an upper limit on its "memory." Give it twenty thousand words and it might not remember much; give it two hundred thousand words and it might only scan the titles. An apt comparison: lock a person in a small room for a day with a two-hundred-thousand-word book, then let them out and ask them to recite it. How much they can recite is roughly how much AI can "remember."

Hence a counterintuitive but extremely important lesson:

1. Compression is much easier than expansion

Compressing 1 million words to 10,000 words is often more reliable than expanding 10,000 words to 1 million words.

This directly changes how you make requests to AI:

· Don't use a 100-word prompt to ask for a paper

· Instead, feed in as much material as possible (in batches, through retrieval, RAG, etc.), and let it compress the structure, viewpoints, and main text out of sufficient materials

When you used to write articles and papers, the process was always "read massive materials → extract → organize → write" (at least that's how I did it). Don't suddenly apply a double standard to AI and demand that it grow something out of thin air.
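Feeding materials "in batches" can be sketched as follows: group paragraphs into batches that fit a size limit, compress each batch, then compress the combined notes. The character limit is an arbitrary assumption standing in for a real context limit, and `first_sentence` is a hypothetical stand-in for an AI summarization call.

```python
from typing import Callable, List


def split_into_batches(paragraphs: List[str], max_chars: int) -> List[str]:
    """Group whole paragraphs into batches of at most max_chars characters.

    A single paragraph longer than max_chars becomes its own oversized batch.
    """
    batches: List[str] = []
    current = ""
    for paragraph in paragraphs:
        candidate = paragraph if not current else current + "\n\n" + paragraph
        if current and len(candidate) > max_chars:
            batches.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        batches.append(current)
    return batches


def compress_materials(
    paragraphs: List[str], max_chars: int, compress: Callable[[str], str]
) -> str:
    """Compress each batch, then compress the combined notes once more."""
    notes = [compress(batch) for batch in split_into_batches(paragraphs, max_chars)]
    return compress("\n\n".join(notes))


def first_sentence(text: str) -> str:
    """Stand-in for an AI call: keeps only the text before the first '. '."""
    return text.split(". ")[0].rstrip(".") + "."


paragraphs = [
    "Archive note one. More detail here.",
    "Interview note two. More detail here.",
    "Field note three. More detail here.",
]
summary = compress_materials(paragraphs, max_chars=90, compress=first_sentence)
print(summary)
```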

Principle 8: Resist the Impulse of "I'll Just Fix It with a Clever Edit"—Modify the Production Line, Not the Result

People who are good at writing are the most likely to crash and burn in front of AI:

AI produces a 59-point draft, and you feel you can tweak it to 80 points, so you start editing. Editing turns into rewriting; after rewriting, you say "I might as well do it myself," and then you never use AI again.

The solution is not to "edit the draft" more diligently, but to move the focus further upstream:

· Don't aim to have AI write a 100-point piece directly

· Your goal is to have the production line stably produce 75~80 points

· What you need to do is iterate on the process to raise the "average score," not to make a "single piece" perfect

Principle 9: Treat the Production Line as a Product to Iterate—Reliability Itself is Value

When you have a system that can stably give you a 70-point starting point, its value is not "how much it resembles you," but:

· You can get a usable draft at near-zero cost

· You can focus your energy on higher-level judgments: topic selection, structure, evidence, taste, and trade-offs

What you want is not an omnipotent god that replaces you, but a reliable factory: it's not perfect, but it's stable.

Principle 10: Quantity is the First Priority—Let It Produce More, Then Filter

Only letting AI give you one version usually gets you the most mediocre, conservative, "average" one. You need to use "quantity" to fight against "mediocrity."

A more effective approach is:

· Summaries: ask for 5 versions at once

· Openings: ask for 5 openings at once, then A/B test them

· Topics: ask for 50 topics at once, then group, then select

· Structures: ask for 3 sets of structures at once, then combine

· Phrasing: ask for 10 different wordings at once, then choose the best

When you raise both the average score and the volume, 85-point and 90-point "surprise samples" will naturally appear in the distribution. Often, what's good is not "that one stroke of genius," but that you finally start working in a statistical way.
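The statistical claim can be checked with a toy simulation. It assumes, purely for illustration, that draft scores follow a normal distribution with a mean of 75 and a spread of 8; those numbers are invented, not measured. The sketch compares the average best score when you ask for 1, 5, or 10 drafts.

```python
import random
import statistics


def expected_best(
    n_drafts: int,
    mean: float = 75.0,
    spread: float = 8.0,
    trials: int = 2000,
    seed: int = 0,
) -> float:
    """Average score of the best draft when n_drafts are generated per request."""
    rng = random.Random(seed)  # fixed seed so the simulation is repeatable
    bests = [
        max(rng.gauss(mean, spread) for _ in range(n_drafts))
        for _ in range(trials)
    ]
    return statistics.mean(bests)


for n in (1, 5, 10):
    print(f"best of {n:>2} drafts: about {expected_best(n):.1f} points on average")
```

Under these assumptions the best of several drafts scores well above the average draft, which is the sense in which quantity fights mediocrity. The filtering itself still depends on your own judgment.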

Principle 11: Don't Overstep—Command, Taste, and Send It Back to the Kitchen Like an Executive Chef

If you are the executive chef of a restaurant, you wouldn't personally go and smash the cucumbers for the cold dish. You would:

· Taste a bite

· Judge if it's qualified

· Give clear feedback (where it falls short, how to fix it)

· Let the cook go back and do it again

Collaborating with AI is the same. You need to respect its agency to "generate in its own way." Your job is to teach it how to meet your standards, not to jump in yourself and patch its results into finished products every time.

Otherwise, you will be worn out by endless patching and mending.
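The taste, judge, give feedback, and redo cycle can be sketched as a loop. The names are hypothetical: `toy_cook` stands in for an AI call that receives the brief plus the previous feedback, and `toy_taste` stands in for your own review, reduced here to a single rule for the sake of the example.

```python
from typing import Callable, List, Optional, Tuple

Cook = Callable[[str, str], str]            # (brief, feedback) -> draft
Taster = Callable[[str], Tuple[bool, str]]  # draft -> (accepted, feedback)


def kitchen_loop(
    brief: str, cook: Cook, taste: Taster, max_rounds: int = 3
) -> Tuple[Optional[str], List[Tuple[int, str, str]]]:
    """Send the draft back with feedback until it is accepted or rounds run out."""
    feedback = ""
    history: List[Tuple[int, str, str]] = []
    for round_no in range(1, max_rounds + 1):
        draft = cook(brief, feedback)
        accepted, feedback = taste(draft)
        history.append((round_no, draft, feedback))
        if accepted:
            return draft, history
    return None, history


def toy_cook(brief: str, feedback: str) -> str:
    """Stand-in for an AI call: only adds a source once it has been asked to."""
    draft = f"Draft on {brief}."
    if feedback:
        draft += " [source: placeholder]"
    return draft


def toy_taste(draft: str) -> Tuple[bool, str]:
    """Stand-in for the chef's judgment: one explicit, checkable standard."""
    if "[source:" in draft:
        return True, ""
    return False, "Every claim needs a source; add one and resend."


dish, history = kitchen_loop("the American Rust Belt", toy_cook, toy_taste)
for round_no, draft, feedback in history:
    print(f"round {round_no}: {draft} | feedback: {feedback or 'accepted'}")
```

Note that the reviewer never edits the draft; it only returns a verdict and an instruction, which is the division of labor this principle argues for.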

The Final Underlying Principle: Return to the Real World—Materials × Taste Determine the Ceiling of a Work

In the AI era, the quality of a work is increasingly like: Materials × Taste.

Models will change, methods will iterate, but these two things remain unchanged:

1. Materials come from the real world

If you were given two choices to write an article:

· Use the latest model, but only use online materials

· Use an old model, but you have complete archives, oral histories, field interviews

The one more likely to produce a good work is often the latter.

2. Taste comes from long-term training

When "generation" becomes cheap, what is truly scarce is:

· You know what is worth writing

· You know which evidence is stronger

· You know which narrative is more powerful

· You are willing to put in physical labor for materials: search high and low, and dig through archives with your own hands and feet

What AI changes is the efficiency and manner of your interaction with materials; but the subject of the work is still you, the object is still the materials. AI is just part of the "verb."

Conclusion: Replace Anxiety with a Feel

Many people can't get started with AI, not because they are not smart, but because they stay in the cycle of "wish—disappointment—give up." What can really get you past it is to treat it as a workbench, engineer the tasks, white-box the process, and grow a feel through constant friction.

When you can do this, you are less likely to rashly conclude "AI is no good"; you will be more like a new type of worker who can manage new tools: neither looking down on it, nor looking up to it, placing it in the process, in reality, in the work you are willing to put your name on.