Written by: Ivan Zhao, Notion CEO

Compiled by: AididiaoJP, Foresight News

Each era is shaped by its own technological raw materials. Steel forged the Gilded Age; semiconductors ushered in the digital age. Today, artificial intelligence is arriving in the form of infinite intelligence. History tells us that those who master the raw materials define the era.

Left: Young Andrew Carnegie and his brother. Right: A steel mill in Pittsburgh during the Gilded Age.

In the 1850s, Andrew Carnegie was a telegraph messenger running through the muddy streets of Pittsburgh, a time when six out of ten Americans were farmers. Just two generations later, Carnegie and his peers forged the modern world—horses gave way to railroads, candlelight to electric light, iron to steel.

Since then, work has shifted from factories to offices. Today, I run a software company in San Francisco, building tools for thousands of knowledge workers. In this tech town, everyone is talking about Artificial General Intelligence (AGI), but the majority of the two billion office workers worldwide have yet to feel its presence. What will knowledge work look like soon? What happens when organizations are infused with intelligence that never rests?

Early films often resembled stage plays, with a single camera pointed at the stage.

The future is often hard to predict because it is always disguised as the past. Early phone calls were as brief as telegrams; early films were like recorded stage plays. As Marshall McLuhan said: "We look at the present through a rear-view mirror. We march backwards into the future."

Today's most common form of AI still looks like the Google search of the past: chatbots that imitate the search box. We are deep in the uncomfortable transition period that accompanies every technological shift.

I don't have all the answers for what the future holds. But I like to use a few historical metaphors to think about how AI might operate at different levels: the individual, the organization, and the entire economy.

Individual: From Bicycle to Car

The first signs can be seen among the "power users" of knowledge work: programmers.

My co-founder Simon was once a "10x programmer," but lately he rarely writes code by hand. Walk by his desk and you'll see him orchestrating three or four AI coding assistants at once. These assistants don't just type faster; they also think, which makes him 30 to 40 times as productive as an engineer. He often queues up tasks before lunch or bed and lets the AI work while he's away. He has become a manager of infinite intelligence.

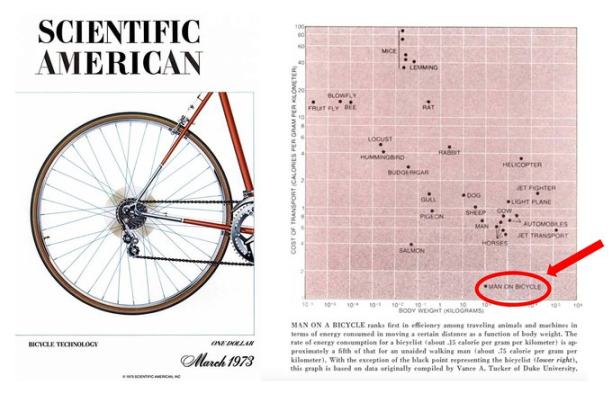

A 1970s Scientific American study on locomotion efficiency inspired Steve Jobs' famous "bicycle for the mind" metaphor. But for decades since, we've been "pedaling bicycles" on the information superhighway.

In the 1980s, Steve Jobs called the personal computer a "bicycle for the mind." A decade later, we paved the "information superhighway" called the internet. But today, most knowledge work still relies on human power. It's like we've been riding bicycles on a highway.

With AI assistants, people like Simon have upgraded from riding bicycles to driving cars.

When will other types of knowledge workers get to "drive cars"? Two problems must be solved.

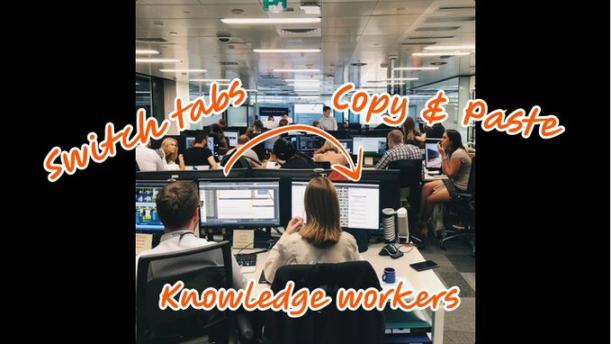

Why is AI-assisted knowledge work harder than programming assistance? Because knowledge work is more fragmented and harder to verify.

The first is context fragmentation. In programming, tools and context tend to be concentrated in a few places: the IDE, the code repository, the terminal. General knowledge work, by contrast, is scattered across dozens of tools. Imagine an AI assistant trying to draft a product brief: it needs information from Slack threads, strategy docs, last quarter's metrics in a dashboard, and organizational memory that exists only in someone's head. For now, humans are the glue, copy-pasting and switching browser tabs to piece everything together. Until that context is integrated, AI assistants will remain limited to narrow uses.

The second missing element is verifiability. Code has a magical property: you can verify it with tests and error messages. Model developers exploit this, using reinforcement learning and related techniques to train AI to write better code. But how do you verify whether a project is well managed or a strategy memo is excellent? We haven't yet found comparable ways to improve models at general knowledge work, so humans must stay in the loop to supervise, guide, and demonstrate what "good" looks like.

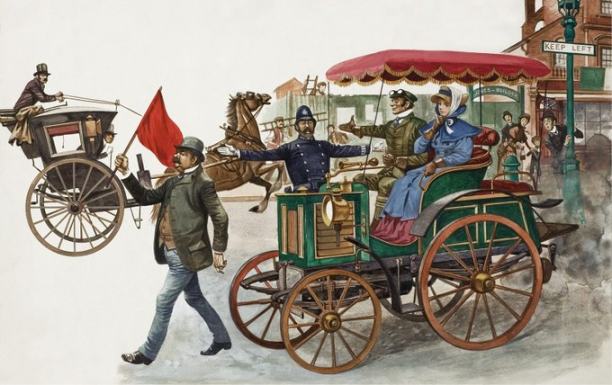

The 1865 Red Flag Act required cars on public roads to be preceded by a person on foot waving a red flag (repealed in 1896).

This year's experience with coding assistants shows that keeping a human "in the loop" isn't always ideal. It's like having a person inspect every bolt on an assembly line, or walk ahead of a car to clear the road (see the 1865 Red Flag Act). Humans should supervise the loop from above rather than sit inside it. Once context is integrated and work becomes verifiable, billions of workers will shift from "pedaling bicycles" to "driving cars," and then from "driving" to "autopilot."

Organization: Steel and Steam

Companies are a modern invention. They become less effective as they scale, eventually hitting limits.

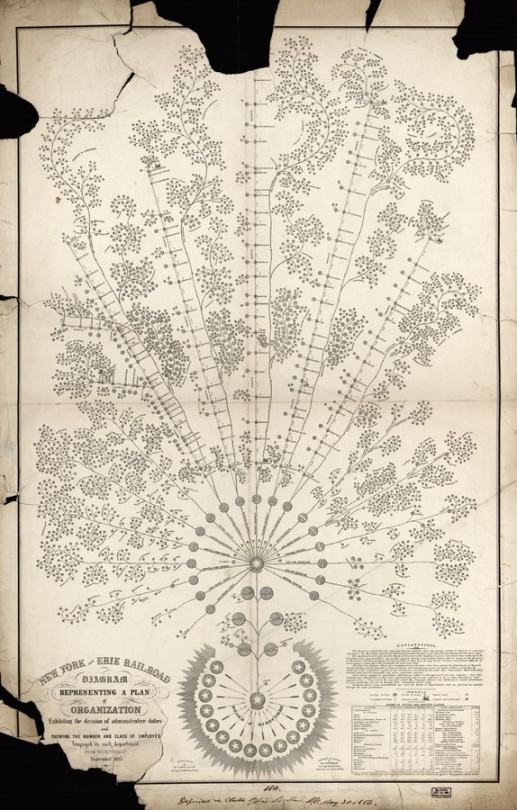

1855 organizational chart of the New York and Erie Railroad. The modern corporation and its structure evolved with railroads, the first enterprises requiring coordination of thousands over long distances.

A few hundred years ago, most companies were workshops of a dozen people. Today we have multinationals with hundreds of thousands of employees. The communication infrastructure—meetings and human brains connected by messages—buckles under exponentially growing loads. We try to solve it with hierarchies, processes, and documents, but this is like building skyscrapers with wood—using human-scale tools to solve industrial-scale problems.

Two historical metaphors show how the future might look different when organizations gain new technological raw materials.

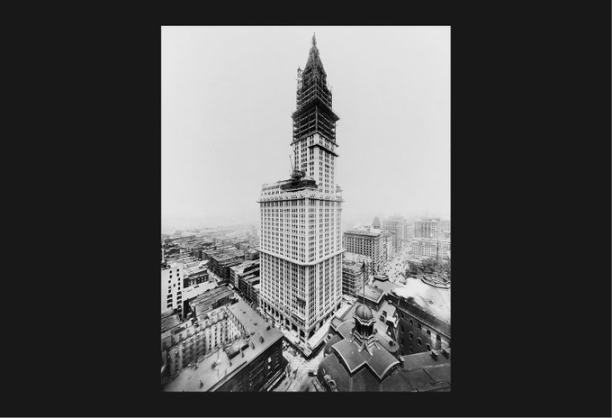

The miracle of steel: The Woolworth Building in New York, completed in 1913, was once the world's tallest building.

The first is steel. Before steel, 19th-century buildings were limited to six or seven stories. Iron was strong but brittle and heavy; add more floors and the structure would collapse under its own weight. Steel changed everything. Strong yet flexible, it allowed lighter frames and thinner walls; buildings soared to dozens of stories, and entirely new kinds of architecture became possible.

AI is the "steel" for organizations. It promises to keep context coherent across workflows and to surface decisions when they are needed, without the noise. Human communication no longer has to be the load-bearing wall. The weekly two-hour alignment meeting might become a five-minute asynchronous review; an executive decision that needed three layers of approval might be made in minutes. Companies could finally scale without the loss of efficiency we have come to accept as inevitable.

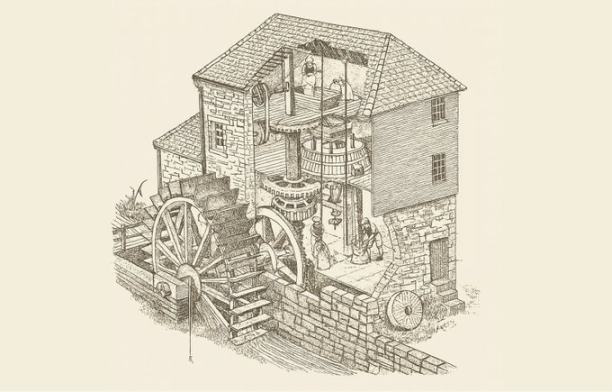

A mill powered by a waterwheel. Water power was powerful but unreliable and limited by location and season.

The second story is about the steam engine. In the early Industrial Revolution, textile mills were built beside rivers and powered by waterwheels. When steam engines arrived, owners at first simply swapped the waterwheel for a steam engine and kept everything else the same, so productivity gains were limited.

The real breakthrough came when owners realized they could completely break free from the water source. They built larger factories near workers, ports, and raw materials, and redesigned the layout around the steam engine (later, with electrification, owners further broke free from the central power shaft, distributing small motors throughout the factory to power different machines). Productivity exploded, and the Second Industrial Revolution truly took off.

An 1835 engraving by Thomas Allom depicting a steam-powered textile mill in Lancashire, England.

We are still in the "replacing the waterwheel" stage. We stuff AI chatbots into workflows designed for humans, and we haven't yet reimagined what organizations could look like once the old constraints vanish and companies can run on infinite intelligence that works while you sleep.

At my company, Notion, we've been experimenting. Alongside our 1,000 employees, we now have more than 700 AI assistants handling repetitive work: taking meeting notes, answering questions to consolidate team knowledge, handling IT requests, logging customer feedback, helping new hires with benefits, and writing weekly status reports so no one has to copy-paste them by hand. These are only baby steps. The real potential is limited only by our imagination and our inertia.

Economy: From Florence to Megacities

Steel and steam changed not just buildings and factories, but cities.

Until a few hundred years ago, cities were on a human scale. You could walk across Florence in forty minutes; the pace of life was set by walking distance and the range of the human voice.

Then steel frames made skyscrapers possible, steam-powered railroads connected city centers with their hinterlands, and elevators, subways, and highways followed. Cities exploded in scale and density: Tokyo, Chongqing, Dallas.

These are not just enlarged Florences; they are entirely new ways of living. Megacities are disorienting, anonymous, and hard to navigate. This "illegibility" is the price of scale. But they also offer more opportunity and more freedom, supporting more people doing more kinds of things in more combinations than a human-scale Renaissance city ever could.

I believe the knowledge economy is about to undergo the same transformation.

Today, knowledge work accounts for nearly half of US GDP, but its operation mostly remains on a human scale: teams of dozens, workflows dependent on meeting and email rhythms, organizations that struggle past a hundred people... We've been building "Florences" out of stone and wood.

When AI assistants are deployed at scale, we will build "Tokyos": organizations composed of thousands of AIs and humans; workflows that run continuously across time zones without waiting for someone to wake up; decisions synthesized with just the right amount of human input.

It will be a different experience: faster, with more leverage, but also disorienting at first. The rhythms of weekly meetings, quarterly planning, annual reviews might no longer fit; new rhythms will emerge. We will lose some legibility, but we will gain scale and speed.

Beyond the Waterwheel

Each technological material demands that people stop looking through the rear-view mirror and start imagining the new world. Carnegie gazed at steel and saw city skylines; the Lancashire mill owner looked at the steam engine and saw factory floors away from rivers.

We are still in the "waterwheel stage" of AI, cramming chatbots into workflows designed for humans. We shouldn't settle for AI as a mere co-pilot; we need to imagine what knowledge work will look like when human organizations are reinforced with steel and busywork is delegated to intelligence that never rests.

Steel, steam, and infinite intelligence. The next skyline is ahead, waiting for us to build it.