Digital Asset and a group of financial institutions have completed a second round of onchain US Treasury financing on the Canton Network, introducing real-time collateral reuse and expanding the number of stablecoins involved.

The newest phase comprised five transactions and built on the July pilot, which first demonstrated that US Treasurys and the USDC stablecoin could be combined to finance and settle transactions on the blockchain.

In the latest trial, the companies used multiple stablecoins to finance positions against tokenized US Treasurys, widening the pool of onchain liquidity available for financing transactions.

The trial showed that tokenized US Treasurys could be passed between counterparties and reused as collateral in real time, sidestepping the operational delays that typically accompany rehypothecation in traditional finance.

The effort brought together Bank of America, Citadel Securities, Cumberland DRW, Virtu Financial, Société Générale, Tradeweb, Circle, Brale and M1X Global, all of which are members of the Canton Network’s Industry Working Group.

Kelly Mathieson, chief business development officer at Digital Asset — the company behind the Canton Network — said in a statement that the test was “part of a thoughtful progression toward a new market model.”

Justin Peterson, chief technology officer of Tradeweb, added that “demonstrating real-time collateral reuse and expanded stablecoin liquidity isn’t just a technical achievement — it’s a blueprint for what the future of institutional finance can look like.”

Canton Network expands footprint in tokenized RWAs

The Canton Network, a layer-1 blockchain built for institutional finance, has been expanding its presence across the tokenization sector this year.

On Dec. 4, its developer Digital Asset secured roughly $50 million in strategic backing from BNY, iCapital, Nasdaq and S&P Global. The new funding followed a $135 million raise earlier this year and is intended to support the network’s scaling efforts.

In October, asset manager Franklin Templeton said it would migrate its Benji Investments platform — which tokenizes shares of the firm’s flagship US money market fund — to the Canton Network.

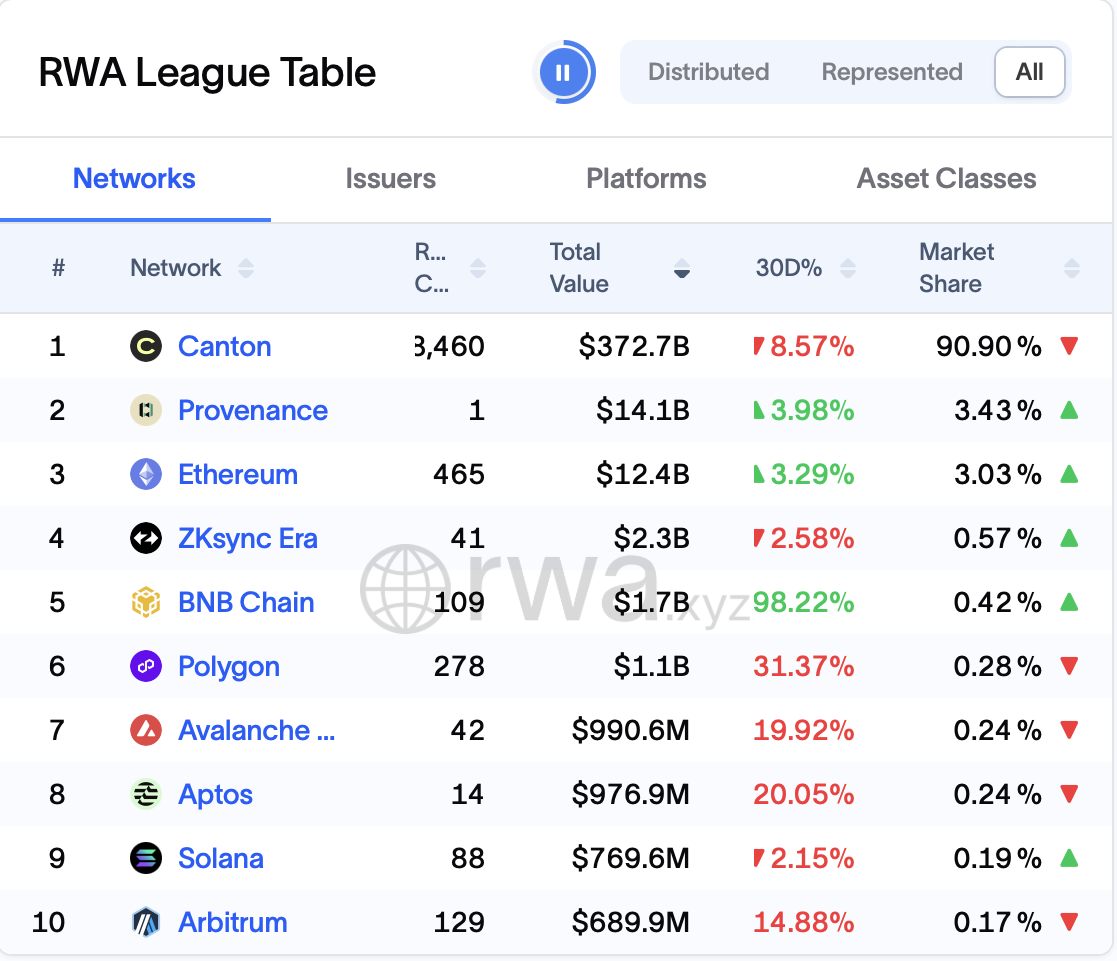

Data from RWA.xyz also shows the Canton Network now leads the market for tokenized real-world assets by a wide margin, with more than $370 billion represented onchain, far outpacing public chains such as Ethereum, Polygon and Solana.
