Author: Oliver Hsu (a16z)

Compiled by: Deep Tide TechFlow

Deep Tide Introduction: This article is from a16z researcher Oliver Hsu and is the most systematic "Physical AI" investment map since 2026. His judgment is: the language/code mainline is still scaling, but the areas that can truly develop the next generation of disruptive capabilities are the three fields adjacent to the mainline—general-purpose robots, autonomous science (AI scientists), and new human-computer interfaces like brain-computer interfaces. The author breaks down the five underlying capabilities that support them and argues that these three fronts will form a structurally reinforcing flywheel that feeds into each other. For those who want to understand the investment logic of Physical AI, this is currently the most complete framework.

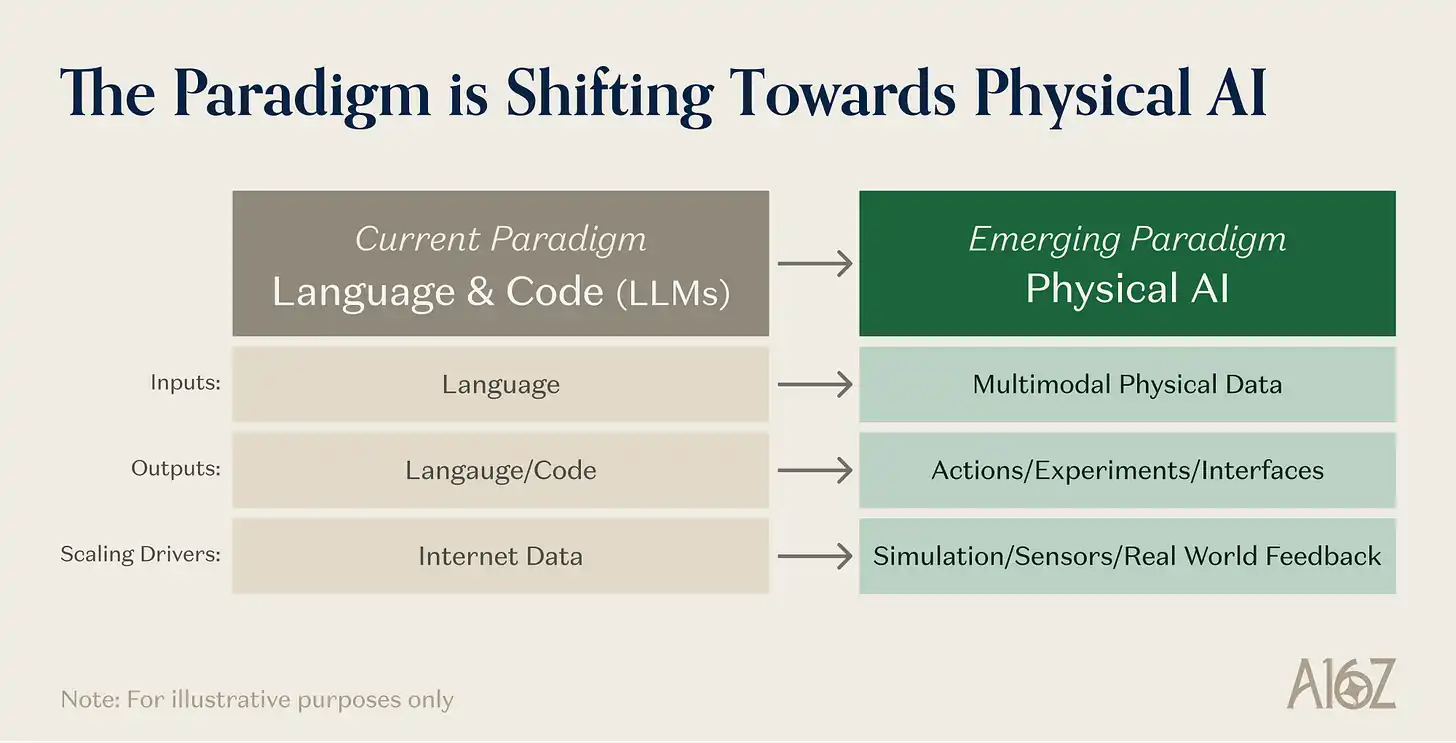

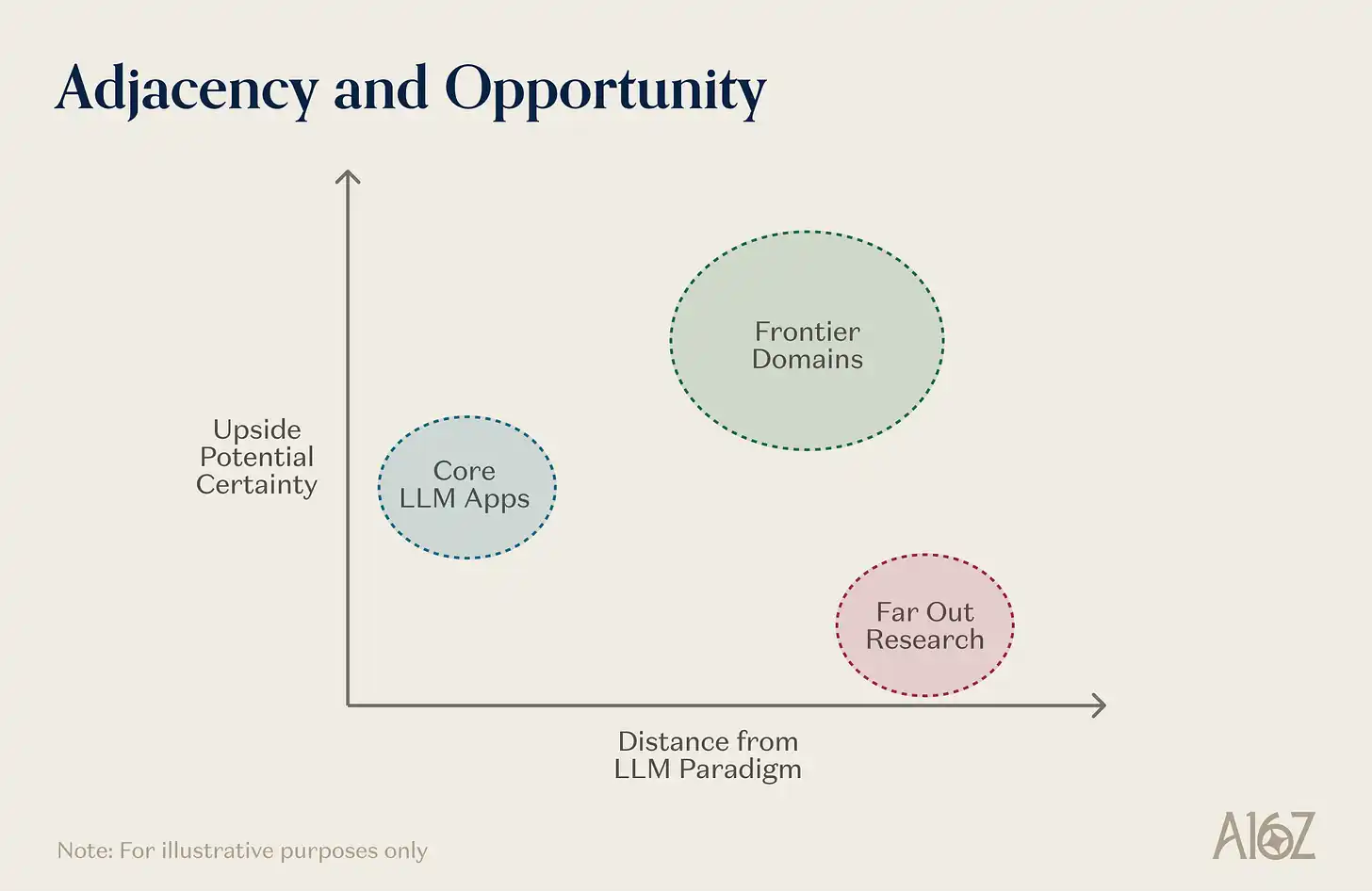

Today's dominant AI paradigm is organized around language and code. The scaling laws of large language models have been clearly defined, the commercial flywheel of data, computing power, and algorithmic improvements is turning, and the returns from each step up in capability are still significant, with most of these returns being visible. This paradigm deserves the capital and attention it attracts.

But another set of adjacent fields has already made substantial progress during their incubation period. These include VLA (Vision-Language-Action models), WAM (World Action Models), and other general-purpose robotics approaches, physical and scientific reasoning centered around "AI scientists," and new interfaces that leverage AI advancements to reshape human-computer interaction (including brain-computer interfaces and neurotechnology). Beyond the technology itself, these directions are beginning to attract talent, capital, and founders. The technical primitives for extending frontier AI into the physical world are maturing simultaneously, and progress over the past 18 months suggests these fields will soon enter their own scaling phases.

In any technological paradigm, the areas with the largest delta between current capabilities and medium-term potential often share two characteristics: first, they can benefit from the same scaling advantages driving the current frontier; second, they are just one step removed from the mainstream paradigm—close enough to inherit its infrastructure and research momentum, yet distant enough to require substantial additional work. This distance itself has a dual effect: it naturally forms a moat against fast followers, while also defining a problem space with sparser information and less crowding, thus more likely to give rise to new capabilities—precisely because the shortcuts haven't been exhausted.

Caption: Schematic of the relationship between the current AI paradigm (language/code) and adjacent frontier systems

Three fields fit this description today: robotic learning, autonomous science (especially in materials and life sciences), and new human-computer interfaces (including brain-computer interfaces, silent speech, neuro-wearables, and new sensory channels like digital olfaction). They are not entirely independent efforts; thematically, they belong to the same group of "frontier systems for the physical world." They share a set of underlying primitives: learned representations of physical dynamics, architectures for embodied action, simulation and synthetic data infrastructure, expanding sensory channels, and closed-loop agent orchestration. They reinforce each other through cross-domain feedback relationships. They are also the most likely places for qualitative capability leaps to emerge—products of the interaction between model scale, physical deployment, and new data modalities.

This article will outline the technical primitives supporting these systems, explain why these three fields represent frontier opportunities, and propose that their mutual reinforcement forms a structural flywheel pushing AI into the physical world.

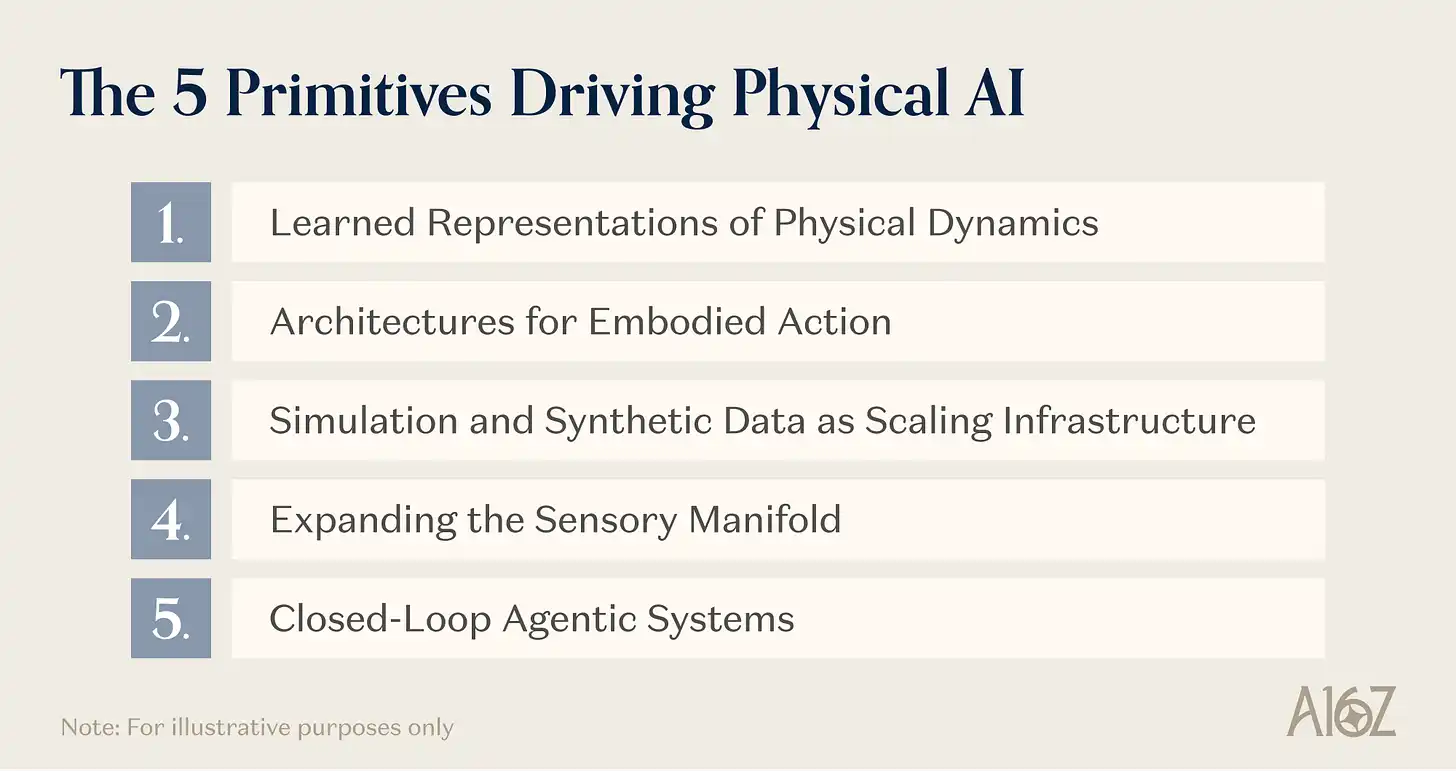

Five Underlying Primitives

Before looking at specific applications, understand the shared technical foundation of these frontier systems. Pushing frontier AI into the physical world relies on five main primitives. These technologies are not exclusive to any single application domain; they are building blocks—enabling systems that "extend AI into the physical world" to be built. Their simultaneous maturation is what makes the current moment special.

Caption: The five underlying primitives supporting Physical AI

Primitive 1: Learned Representations of Physical Dynamics

The most fundamental primitive is the ability to learn a compressed, general representation of physical world behavior—how objects move, deform, collide, and react to forces. Without this layer, every physical AI system would have to learn the physics of its domain from scratch, a cost no one can afford.

Several architectural schools are approaching this goal from different directions. VLA models start from the top: take pre-trained vision-language models—which already possess semantic understanding of objects, spatial relationships, and language—and add an action decoder on top to output motion control commands. The key point is that the enormous cost of learning to see and understand the world can be amortized by internet-scale image-text pre-training. Physical Intelligence's π0, Google DeepMind's Gemini Robotics, and NVIDIA's GR00T N1 are all validating this architecture at increasingly larger scales.

WAM models start from the bottom: based on video diffusion Transformers pre-trained on internet-scale video, inheriting rich priors about physical dynamics (how objects fall, get occluded, interact when force is applied), and then coupling these priors with action generation. NVIDIA's DreamZero demonstrated zero-shot generalization to novel tasks and environments, cross-embodiment migration from few human video demonstrations, and achieved meaningful improvements in real-world generalization.

A third approach might be most indicative of future directions, skipping pre-trained VLMs and video diffusion backbones entirely. Generalist's GEN-1 is a natively embodied foundation model trained from scratch on over 500,000 hours of real physical interaction data, primarily collected from people performing daily manipulation tasks using low-cost wearable devices. It is not a standard VLA (no vision-language backbone is being fine-tuned), nor a WAM. It is a foundation model specifically designed for physical interaction, learning from scratch not the statistical patterns of internet images, text, or video, but the statistical patterns of human-object contact.

Spatial intelligence, as pursued by companies like World Labs, is valuable for this primitive because it addresses a common shortcoming of VLA, WAMs, and natively embodied models: none explicitly model the 3D structure of the scene they are in. VLAs inherit 2D visual features from image-text pre-training; WAMs learn dynamics from video, which is a 2D projection of 3D reality; models learning from wearable sensor data capture force and kinematics but not scene geometry. Spatial intelligence models can help fill this gap—learning to reconstruct and generate the complete 3D structure of physical environments and reason about it: geometry, lighting, occlusion, object relationships, spatial layout.

The convergence of these various approaches is itself significant. Whether the representation is inherited from a VLM, co-learned from video, or built natively from physical interaction data, the underlying primitive is the same: a compressed, transferable model of physical world behavior. The data flywheel these representations can tap into is enormous and largely untapped—not just internet video and robot trajectories, but also the vast corpus of human bodily experience that wearable devices are beginning to collect at scale. The same representation can serve a robot learning to fold towels, an autonomous lab predicting reaction outcomes, and a neural decoder interpreting motor cortex grasp intentions.

Primitive 2: Architectures for Embodied Action

Physical representation alone is not enough. Translating "understanding" into reliable physical action requires architectures to solve several interrelated problems: mapping high-level intent to continuous motion commands, maintaining consistency over long action sequences, operating under real-time latency constraints, and continuously improving with experience.

A dual-system hierarchical architecture has become a standard design for complex embodied tasks: a slow but powerful vision-language model handles scene understanding and task reasoning (System 2), paired with a fast, lightweight visuomotor policy for real-time control (System 1). Variants of this approach are used by GR00T N1, Gemini Robotics, and Figure's Helix, addressing the fundamental tension between "large models providing rich reasoning" and "physical tasks requiring millisecond-level control frequencies." Generalist takes a different path, using "resonant reasoning" to allow thinking and acting to occur simultaneously.

The action generation mechanisms themselves are also evolving rapidly. The flow-matching and diffusion-based action heads pioneered by π0 have become the mainstream method for generating smooth, high-frequency continuous actions, replacing the discrete tokenization borrowed from language modeling. These methods treat action generation as a denoising process similar to image synthesis, producing trajectories that are physically smoother and more robust to error accumulation than autoregressive token prediction.

But perhaps the most critical architectural advancement is the extension of reinforcement learning to pre-trained VLAs—a foundation model trained on demonstration data can continue to improve through autonomous practice, just like a person refining a skill through repetition and self-correction. Physical Intelligence's π*0.6 work is the clearest large-scale demonstration of this principle. Their method, called RECAP (Reinforcement Learning with Experience and Correction based on Advantage-conditioned Policies), addresses the long-sequence credit assignment problem that pure imitation learning cannot solve. If a robot picks up an espresso machine handle at a slightly skewed angle, failure may not be immediate but manifest several steps later during insertion. Imitation learning has no mechanism to attribute this failure back to the earlier grasp; RL does. RECAP trains a value function to estimate the probability of success from any intermediate state and then has the VLA choose high-advantage actions. Crucially, it integrates multiple heterogeneous data types—demonstration data, on-policy autonomous experience, corrections provided by expert teleoperation during execution—into the same training pipeline.

The results of this approach are good news for the prospects of RL in the action domain. π*0.6 reliably folds 50 types of unseen clothing in real home environments, assembles cardboard boxes, and makes espresso on professional machines, running for hours continuously without human intervention. On the most difficult tasks, RECAP more than doubled throughput and halved failure rates compared to pure imitation baselines. The system also demonstrated that RL post-training produces qualitative behaviors not seen in imitation learning: smoother recovery motions, more efficient grasping strategies, adaptive error correction not present in the demonstration data.

These gains indicate one thing: the compute power scaling dynamics that pushed large models from GPT-2 to GPT-4 are beginning to operate in the embodied domain—only now at an earlier point on the curve, where the action space is continuous, high-dimensional, and subject to the unforgiving constraints of the physical world.

Primitive 3: Simulation and Synthetic Data as Scaling Infrastructure

In the language domain, the data problem was solved by the internet: naturally occurring, freely available trillions of text tokens. In the physical world, this problem is orders of magnitude more difficult—a consensus now, most directly signaled by the rapid increase in startup data providers focused on the physical world. Collecting real-world robot trajectories is expensive, risky to scale, and limited in diversity. A language model can learn from billions of conversations; a robot (for now) cannot have billions of physical interactions.

Simulation and synthetic data generation are the infrastructure layers addressing this constraint. Their maturation is a key reason why Physical AI is accelerating now, not five years ago.

Modern simulation stacks combine physics-based simulation engines, photorealistic ray-traced rendering, procedural environment generation, and world foundation models that generate photorealistic video from simulation inputs—the latter responsible for bridging the sim-to-real gap. The entire pipeline starts with neural reconstruction of real environments (possible with just a smartphone), populates them with physically accurate 3D assets, and proceeds to large-scale synthetic data generation with automatic labeling.

The significance of simulation stacks is that they are changing the economic assumptions underpinning Physical AI. If the bottleneck for Physical AI shifts from "collecting real data" to "designing diverse virtual environments," the cost curve collapses. Simulation scales with compute, not with manpower and physical hardware. This transformation of the economic structure for training Physical AI systems is of the same kind as the transformation internet text data brought to training language models—meaning investment in simulation infrastructure has enormous leverage for the entire ecosystem.

But simulation is not just a robotics primitive. The same infrastructure serves autonomous science (digital twins of lab equipment, simulated reaction environments for hypothesis pre-screening), new interfaces (simulated neural environments for training BCI decoders, synthetic sensory data for calibrating new sensors), and other domains where AI interacts with the physical world. Simulation is the universal data engine for physical world AI.

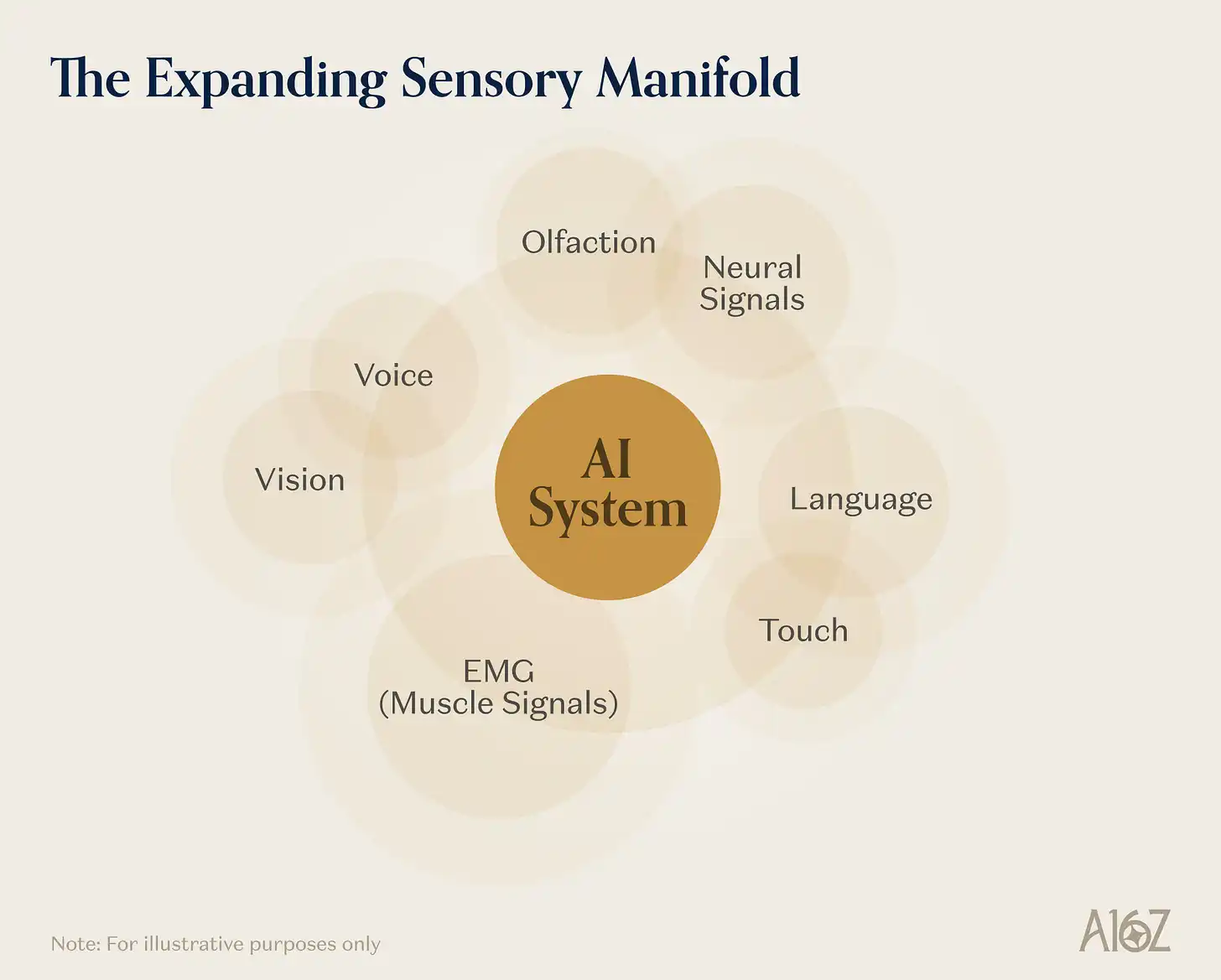

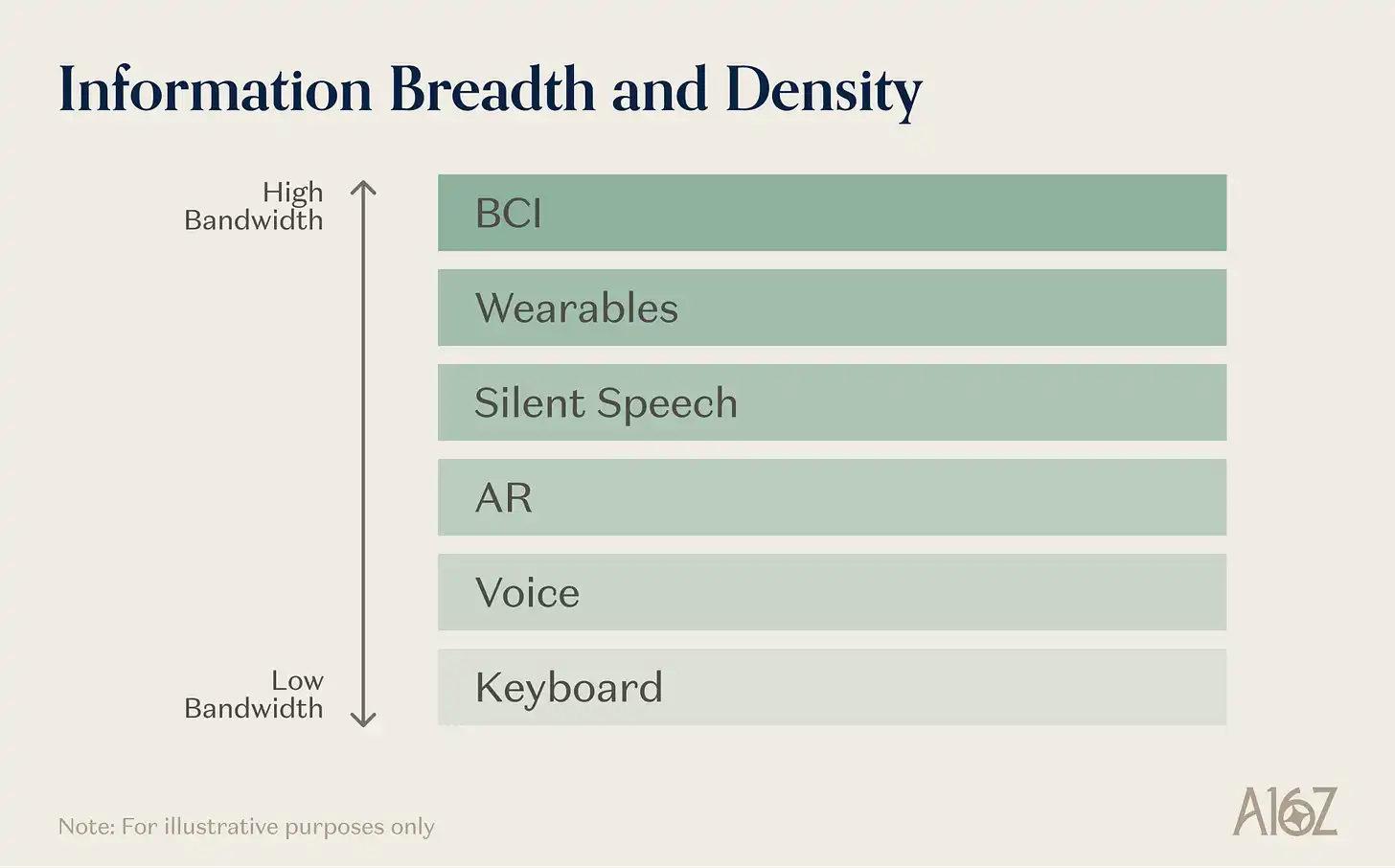

Primitive 4: Expanding Sensory Channels

The signals conveying information in the physical world are far richer than vision and language. Haptics conveys material properties, grasp stability, contact geometry—information cameras cannot see. Neural signals encode motor intent, cognitive states, and perceptual experiences with a bandwidth far exceeding any existing human-computer interface. Subvocal muscle activity encodes speech intent before any sound is produced. The fourth primitive is the rapid expansion of AI's access to these previously hard-to-reach sensory modalities—driven not only by research but also by an entire ecosystem building consumer-grade devices, software, and infrastructure.

Caption: Expanding AI sensory channels, from AR and EMG to brain-computer interfaces

The most直观的指标是新品类设备的出现。直观 metric is the emergence of new device categories. AR devices have significantly improved in experience and form factor in recent years (companies are already building applications for consumer and industrial scenarios on this platform); voice-first AI wearables give language-based AI a more complete physical world context—they literally follow users into physical environments. Long-term, neural interfaces may unlock even more complete interaction modalities. The shift in computing paradigms brought by AI creates an opportunity for a major upgrade in human-computer interaction, with companies like Sesame building new modalities and devices for this purpose.

More mainstream modalities like voice also create tailwinds for emerging interaction methods. Products like Wispr Flow push voice as a primary input method (due to its high information density and natural advantages), improving the market conditions for silent speech interfaces as well. Silent speech devices use various sensors to capture tongue and vocal cord movements, recognizing language silently—they represent a human-computer interaction modality with even higher information density than voice.

Brain-computer interfaces (invasive and non-invasive) represent a deeper frontier, with the commercial ecosystem around them steadily advancing. Signals will emerge at the confluence of clinical validation, regulatory approval, platform integration, and institutional capital—a convergence point for a technology category that was purely academic just a few years ago.

Haptic perception is entering embodied AI architectures, with some models in robotic learning explicitly incorporating touch as a first-class citizen. Olfactory interfaces are becoming real engineering products: wearable olfactory displays using micro odor generators with millisecond response times have been demonstrated in mixed reality applications; olfactory models are also beginning to pair with visual AI systems for chemical process monitoring.

The common pattern in these developments is: they converge on each other at the limit. AR glasses continuously generate visual and spatial data of user interaction with the physical environment; EMG wristbands capture the statistical patterns of human movement intent; silent speech interfaces capture the mapping from subvocalization to language output; BCIs capture neural activity at currently the highest resolution; tactile sensors capture the contact dynamics of physical manipulation. Each new device category is also a data generation platform, feeding the underlying models across multiple application domains. A robot trained on data using EMG to infer movement intent learns different grasping strategies than one trained only on teleoperation data; a lab interface responding to subvocal commands enables a completely different scientist-machine interaction compared to a keyboard-controlled lab; a neural decoder trained on high-density BCI data produces motor planning representations unavailable through any other channel.

The proliferation of these devices is expanding the effective dimensionality of the data manifold available for training frontier physical AI systems—and a significant portion of this expansion is driven by well-capitalized consumer goods companies, not just academic labs, meaning the data flywheel can expand along with market adoption rates.

Primitive 5: Closed-Loop Agent Systems

The final primitive is more architectural. It refers to the orchestration of perception, reasoning, and action into sustained, autonomous, closed-loop systems that operate over long time horizons without human intervention.

In language models, the corresponding development is the rise of agent systems—multi-step reasoning chains, tool use, self-correction processes—pushing models from single-turn Q&A tools to autonomous problem solvers. In the physical world, the same transition is happening, only with much more demanding requirements. A language agent can roll back errors at no cost; a physical agent cannot undo a spilled reagent.

Physical world agent systems have three characteristics that distinguish them from their digital counterparts. First, they need to be instrumented for experimentation or operate in a closed loop: directly interfacing with raw instrument data streams, physical state sensors, and execution primitives, grounding reasoning in physical reality, not textual descriptions of it. Second, they need long-sequence persistence: memory, provenance tracking, safety monitoring, recovery behaviors, linking multiple run cycles together, not treating each task as an independent episode. Third, they need closed-loop adaptation: revising strategies based on physical outcomes, not just textual feedback.

This primitive fuses individual capabilities (good world models, reliable action architectures, rich sensor suites) into complete systems capable of autonomous operation in the physical world. It is the integration layer, and its maturation is the prerequisite for the three application areas below to exist as real-world deployments rather than isolated research demonstrations.

Three Domains

The primitives above are general enabling layers; they themselves do not specify where the most important applications will emerge. Many domains involve physical action, physical measurement, or physical perception. What distinguishes "frontier systems" from "merely improved versions of existing systems" is the degree to which compounding returns occur from model capability improvements and scaling infrastructure within the domain—not just better performance, but the emergence of new capabilities previously impossible.

Robotics, AI-driven science, and new human-computer interfaces are the three domains with the strongest compounding effects. Each uniquely assembles the primitives, each is constrained by the limitations the current primitives are removing, and each, in operation, generates as a byproduct a form of structured physical data—data that in turn makes the primitives themselves better, creating a feedback loop that accelerates the entire system. They are not the only Physical AI domains worth watching, but they are where frontier AI capabilities interact most intensively with physical reality, and are furthest from the current language/code paradigm—thus offering the largest space for new capabilities to emerge—while also being highly complementary to it and able to benefit from its advantages.

Robotics

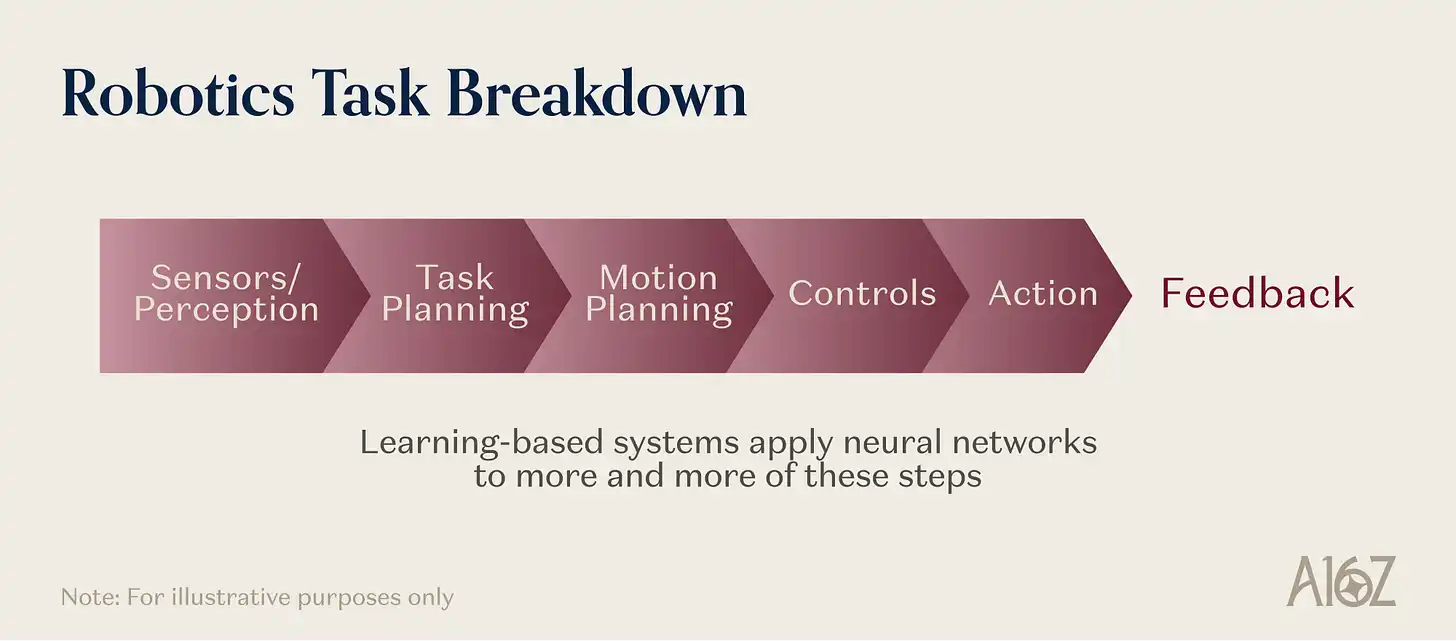

Robotics is the most literal embodiment of Physical AI: an AI system must perceive, reason, and exert physical action on the material world in real time. It also constitutes a stress test for every primitive.

Consider what a general-purpose robot must do to fold a towel. It needs a learned representation of how deformable materials behave under force—a physical prior not provided by language pre-training. It needs an action architecture that can translate high-level instructions into sequences of continuous motion commands at control frequencies above 20Hz. It needs simulation-generated training data because no one has collected millions of real towel-folding demonstrations. It needs tactile feedback to detect slippage and adjust grip force because vision cannot distinguish a secure grasp from a failing one. It also needs a closed-loop controller that can recognize errors during folding and recover, not just blindly execute memorized trajectories.

Caption: Robotics tasks simultaneously invoke all five underlying primitives

This is why robotics is a frontier system, not a mature engineering discipline with better tools. These primitives are not improving existing robotic capabilities; they are enabling categories of manipulation, movement, and interaction previously impossible outside narrow, controlled industrial environments.

Frontier progress has been significant in recent years—we have written about this before. First-generation VLAs proved that foundation models can control robots for diverse tasks. Architectural advances are bridging high-level reasoning and low-level control in robotic systems. On-device inference is becoming feasible. Cross-embodiment migration means a model can be adapted to a new robot platform with limited data. The remaining core challenge is reliability at scale, which remains the deployment bottleneck. 95% success rate per step translates to only 60% over a 10-step chain, while production environments require far higher rates. RL post-training holds great potential here to help the field cross the capability and robustness threshold needed for the scaling phase.

These advancements have implications for market structure. Value in the robotics industry has for decades been captured in the mechanical systems themselves. Mechanics remain a critical part of the stack, but as learned strategies become more standardized, value will migrate towards models, training infrastructure, and data flywheels. Robotics also feeds back into the primitives: every real-world trajectory is training data to improve world models, every deployment failure exposes gaps in simulation coverage, every test on a new embodiment expands the diversity of physical experience available for pre-training. Robotics is both the most demanding consumer of primitives and one of their most important sources of improvement signals.

Autonomous Science

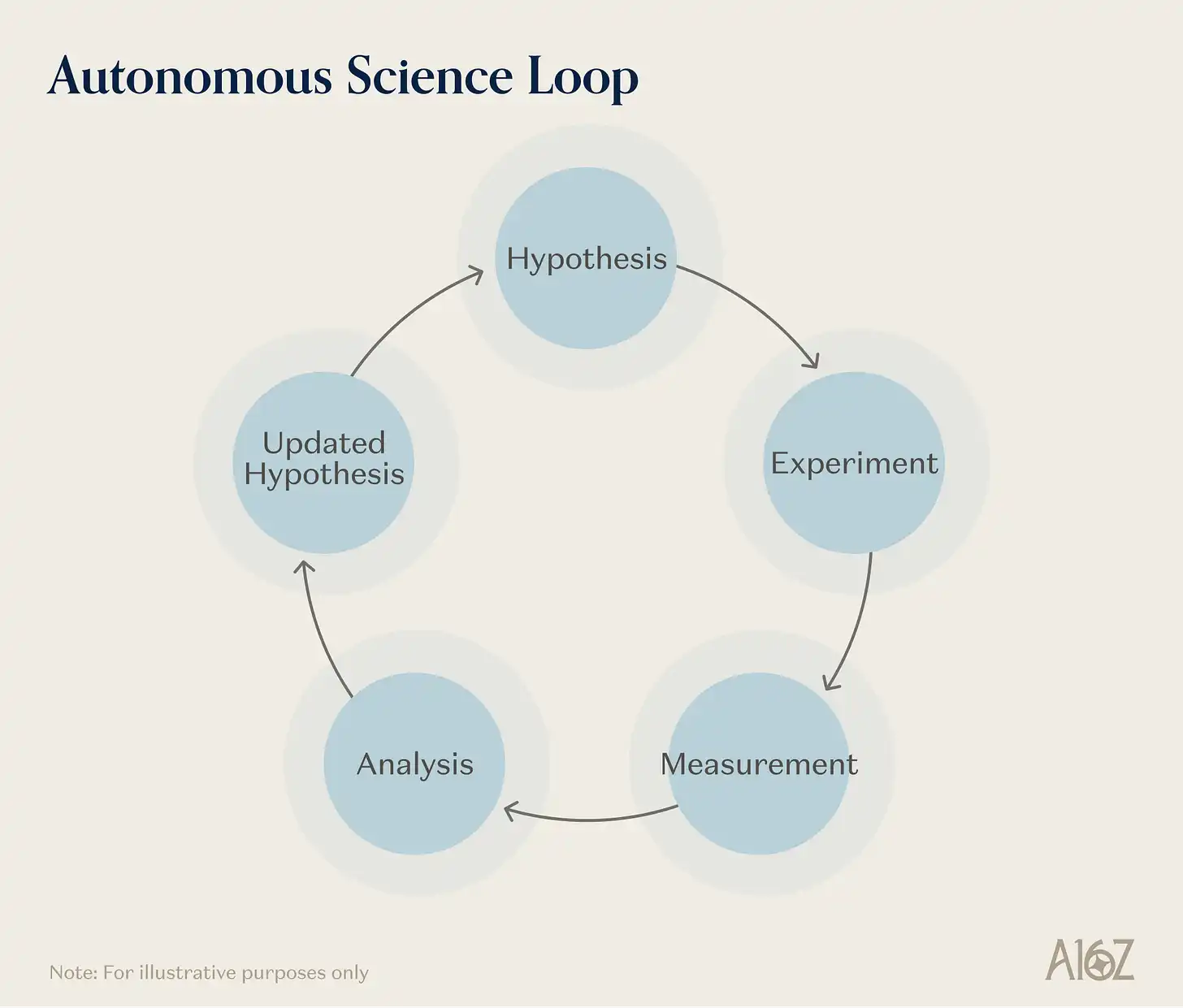

If robotics tests the primitives with "real-time physical action," autonomous science tests something slightly different—sustained multi-step reasoning about causally complex physical systems, over timeframes of hours or days, where experimental results must be interpreted, contextualized, and used to revise strategies.

Caption: How autonomous science (AI scientists) integrates the five underlying primitives

AI-driven science is the most thorough domain for primitive composition. A self-driving lab (SDL) needs learned representations of physical and chemical dynamics to predict experimental outcomes; needs embodied action to pipette, position samples, operate analytical instruments; needs simulation for pre-screening candidate experiments and allocating scarce instrument time; needs expanded sensing capabilities—spectroscopy, chromatography, mass spectrometry, and increasingly novel chemical and biological sensors—to characterize results. It更需要闭环智能体编排原语比其他任何领域都更需要闭环智能体编排原语:更需要闭环智能体编排原语 than any other field: the ability to maintain multi-round "hypothesis-experiment-analysis-revision" workflows无人介入, retaining provenance, monitoring safety, and adjusting strategies based on information revealed each round.

No other domain invokes these primitives so deeply. This is why autonomous science is a frontier "system," not just laboratory automation with better software. Companies like Periodic Labs and Medra, in materials science and life sciences respectively, synthesize scientific reasoning capabilities with physical validation capabilities to achieve scientific iteration, producing experimental training data along the way.

The value of such systems is intuitively obvious. Traditional material discovery takes years from concept to commercialization; AI-accelerated workflows could theoretically compress this process far more. The key constraint is shifting from hypothesis generation (which foundation models can assist well) to fabrication and validation (which requires physical instruments, robotic execution, closed-loop optimization). SDLs target this bottleneck.

Another important特性 of autonomous science—true for all physical world systems—is its role as a data engine. Every experiment run by an SDL produces not just a scientific result, but also a physically grounded, experimentally validated training signal. A measurement of how a polymer crystallizes under specific conditions enriches the world model's understanding of material dynamics; a validated synthesis pathway becomes training data for physical reasoning; a characterized failure tells the agent system where its predictions break down. The data produced by an AI scientist running real experiments is qualitatively different from internet text or simulation output—it is structured, causal, and empirically verified. This is precisely the kind of data physical reasoning models need most and lack from other sources. Autonomous science is the pathway that directly converts physical reality into structured knowledge, improving the entire Physical AI ecosystem.

New Interfaces

Robotics extends AI into physical action; autonomous science extends it into physical research. New interfaces extend it into the direct coupling of artificial intelligence with human perception, sensory experience, and bodily signals—devices spanning AR glasses, EMG wristbands, all the way to implantable brain-computer interfaces. What binds this category together is not a single technology but a common function: expanding the bandwidth and modalities of the channel between human intelligence and AI systems—and in the process generating human-world interaction data directly usable for building Physical AI.

Caption: The spectrum of new interfaces, from AR glasses to brain-computer interfaces

The distance from the mainstream paradigm is both the challenge and the potential of this field. Language models know about these modalities conceptually but are not natively familiar with the motor patterns of silent speech, the geometry of olfactory receptor binding, or the temporal dynamics of EMG signals. Representations to decode these signals must be learned from the expanding sensory channels. Many modalities lack internet-scale pre-training corpora; data often can only be produced by the interfaces themselves—meaning the system and its training data co-evolve, something without parallel in language AI.

The recent performance of this field is the rapid rise of AI wearables as a consumer category. AR glasses are perhaps the most visible example, but other wearables primarily using voice or vision as input are also emerging simultaneously.

This ecosystem of consumer devices both provides new hardware platforms for extending AI into the physical world and is becoming infrastructure for physical world data. A person wearing AI glasses can continuously produce first-person video streams of how people navigate physical environments, manipulate objects, and interact with the world; other wearables continuously capture biometric and motion data. The installed base of AI wearables is becoming a distributed physical world data acquisition network, recording human physical experience at a previously impossible scale. Consider the volume of smartphones as consumer devices—a new category of consumer device allows computers to perceive the world in new modalities at equivalent scale, opening a huge new channel for AI's interaction with the physical world.

Brain-computer interfaces represent a deeper frontier. Neuralink has implanted multiple patients, with surgical robots and decoding software iterating. Synchron's intravascular Stentrode has been used to allow paralyzed users to control digital and physical environments. Echo Neurotechnologies is developing a BCI system for speech restoration based on their research in high-resolution cortical speech decoding. New companies like Nudge are also being formed, gathering talent and capital to build new neural interface and brain interaction platforms. Technical milestones at the research level are also noteworthy: the BISC chip demonstrated wireless neural recording with 65,536 electrodes on a single chip; the BrainGate team decoded internal speech directly from the motor cortex.

The common thread running through AR glasses, AI wearables, silent speech devices, and implantable BCIs is not just that "they are all interfaces," but that they collectively constitute an increasing-bandwidth spectrum between human physical experience and AI systems—every point on this spectrum supports the continuous progress of the primitives behind the three major domains discussed here. A robot trained on high-quality first-person video from millions of AI glasses users learns operational priors completely different from one trained on curated teleoperation datasets; a lab AI responding to subvocal commands is a completely different experience in terms of latency and fluency compared to a keyboard-controlled lab; a neural decoder trained on high-density BCI data produces motor planning representations unavailable through any other channel.

New interfaces are the mechanism for making the sensory channels themselves larger—they open up previously non-existent data channels between the physical world and AI. And this expansion is driven by consumer device companies pursuing scaled deployment, meaning the data flywheel will accelerate along with consumer adoption.

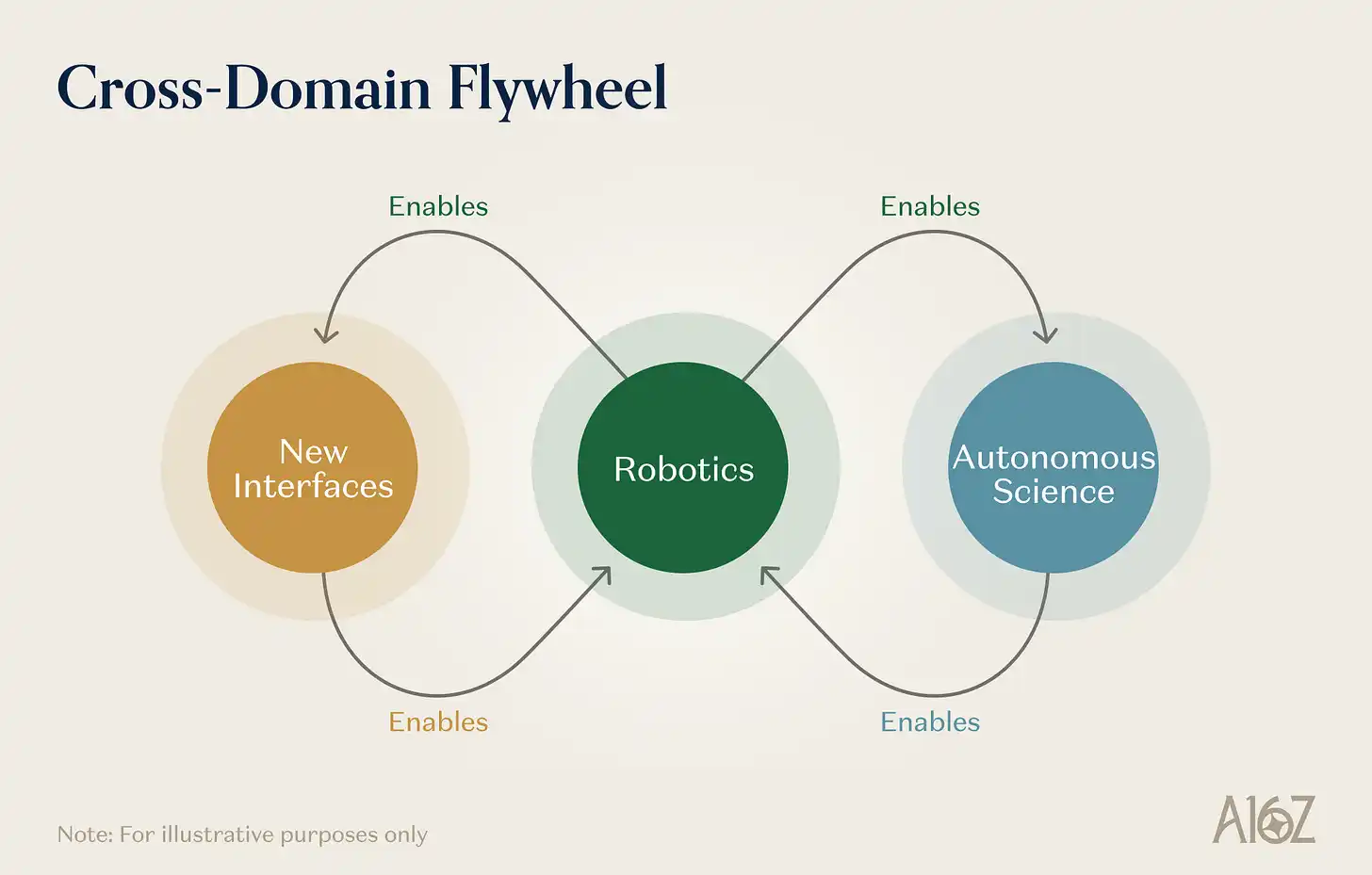

Systems for the Physical World

The reason to view robotics, autonomous science, and new interfaces as different instances of frontier systems composed from the same set of primitives is that they enable each other and compound.

Caption: The mutual feedback flywheel between robotics, autonomous science, and new interfaces

Robotics enables autonomous science. Self-driving labs are essentially robotic systems. The manipulation capabilities developed for general-purpose robots—dexterous grasping, liquid handling, precise positioning, multi-step task execution—can be directly transferred to laboratory automation. Every step forward in the generality and robustness of robot models expands the range of experimental protocols an SDL can execute autonomously. Every advance in robotic learning lowers the cost and increases the throughput of autonomous experimentation.

Autonomous science enables robotics. The scientific data produced by self-driving labs—validated physical measurements, causal experimental results, material property databases—can provide the kind of structured, grounded training data most needed by world models and physical reasoning engines. Furthermore, the materials and components needed for next-generation robots (better actuators, more sensitive tactile sensors, higher density batteries, etc.) are themselves products of materials science. Autonomous discovery platforms that accelerate materials innovation directly improve the hardware substrate on which robotic learning operates.

New interfaces enable robotics. AR devices are a scalable way to collect data on "how humans perceive and interact with the physical environment." Neural interfaces produce data about human movement intent, cognitive planning, and sensory processing. This data is extremely valuable for training robotic learning systems, especially for tasks involving human-robot collaboration or teleoperation.

There is a deeper observation here about the nature of frontier AI progress itself. The language/code paradigm has produced extraordinary results and is still rising strongly in the scaling era. But the new problems, new data types, new feedback signals, and new evaluation standards offered by the physical world are almost limitless. Grounding AI systems in physical reality—through robots manipulating objects, labs synthesizing materials, interfaces connecting to the biological and physical world—we open up new scaling axes complementary to the existing digital frontier—and likely mutually improving.

Caption: Interaction and emergence across the various scaling axes of Physical AI

What behaviors will emerge from these systems is difficult to predict precisely—emergence is defined by the interaction of independently understandable but combined unprecedented capabilities. But historical patterns are optimistic. Each time AI systems gained a new modality of interaction with the world—seeing (computer vision), speaking (speech recognition), reading and writing (language models)—the resulting capability leap far exceeded the sum of individual improvements. The transition to physical world systems represents the next such phase transition. In this sense, the primitives discussed in this article are being built right now, potentially enabling frontier AI systems to perceive, reason, and act upon the physical world, unlocking significant value and progress in the physical world.

Disclaimer: This article is for informational purposes only and does not constitute any investment advice. It should not be used as a basis for legal, commercial, investment, or tax advice.