DeepSeek V4 is finally live, after a wait of nearly five months. The release comprises the main model with 1T MoE parameters and the 285B-parameter Flash version, followed by the full 1.6T Pro version. All are open-sourced on GitHub under the Apache 2.0 license, with weights and deployment code released simultaneously.

As soon as the model was released, the capital market responded in three distinct yet interconnected ways.

Different Reactions in the Capital Market

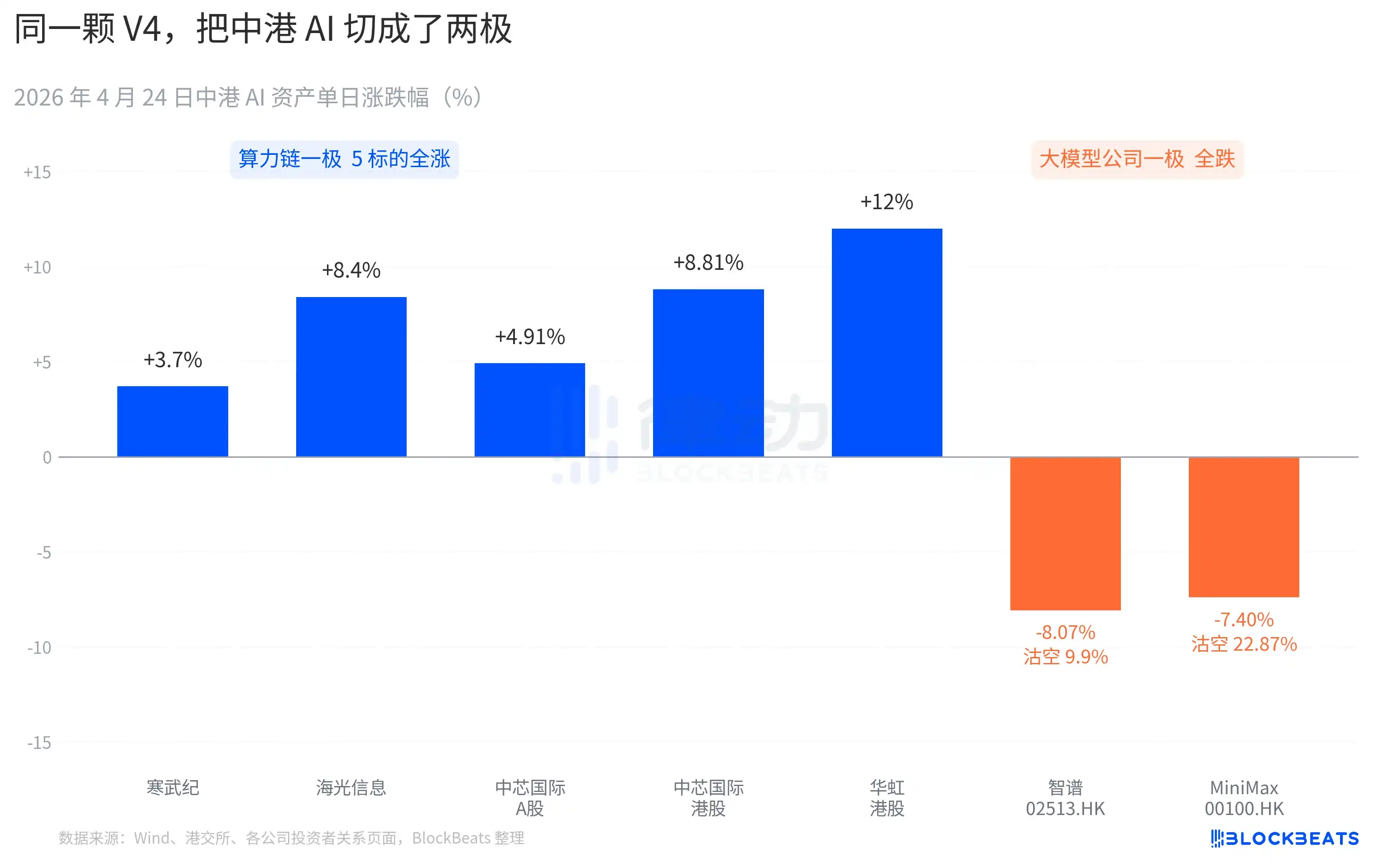

The A-share computing power chain surged almost across the board. Cambricon posted its 11th consecutive day of gains, rising 3.7% on the day for a cumulative increase of over 60% within the month. Hygon Information hit the 10% daily limit during trading before closing up 8.4%. SMIC's A-shares rose 4.91%, while its Hong Kong shares climbed 8.81%. Huahong's Hong Kong shares surged as much as 18% before closing up 12%. The Cathay Pacific Science and Technology Innovation Chip ETF attracted 2.4 billion yuan of inflows in a single day, taking its scale to a historic high.

On the Hong Kong stock market, large model companies told a different story. Zhipu (02513.HK) fell 8.07%, with a short-selling ratio of 9.9%. MiniMax (00100.HK) dropped 7.40%, with its short-selling ratio soaring to 22.87%, the highest single-day short-selling ratio in the Hong Kong AI sector in the past three months. Both companies are representatives of the Hong Kong AI listing wave of the second half of 2025, and their IPO prospectuses highlight the same core competency: "self-developed foundational large models."

The reaction on the other side of the Pacific was equally specific. NVIDIA opened down 1.8% last night, fell as much as 2.6% during the session, and closed flat for the day. Bloomberg's market commentary compared this consolidation to the V3 "DeepSeek moment" on January 27. The difference is that the January episode was a panic sell-off that wiped out $600 billion in market value in a single day. This time it looked more like a repricing, milder in scale but clear in direction. A new phrase appeared in buy-side research notes: "China's AI inference demand is beginning to decouple from North America's AI inference demand."

Layering these three market reactions together, we get the first verdict written by the market within 24 hours of V4's launch. After open source prevailed, money began to reposition. What can be priced is no longer the model itself, but which card the model runs on and which supply chain it is embedded in.

11 New Models in 30 Days: V4 Adds Fuel to the Open-Source Camp

The timing of V4's release is part of the reason why the reaction was amplified.

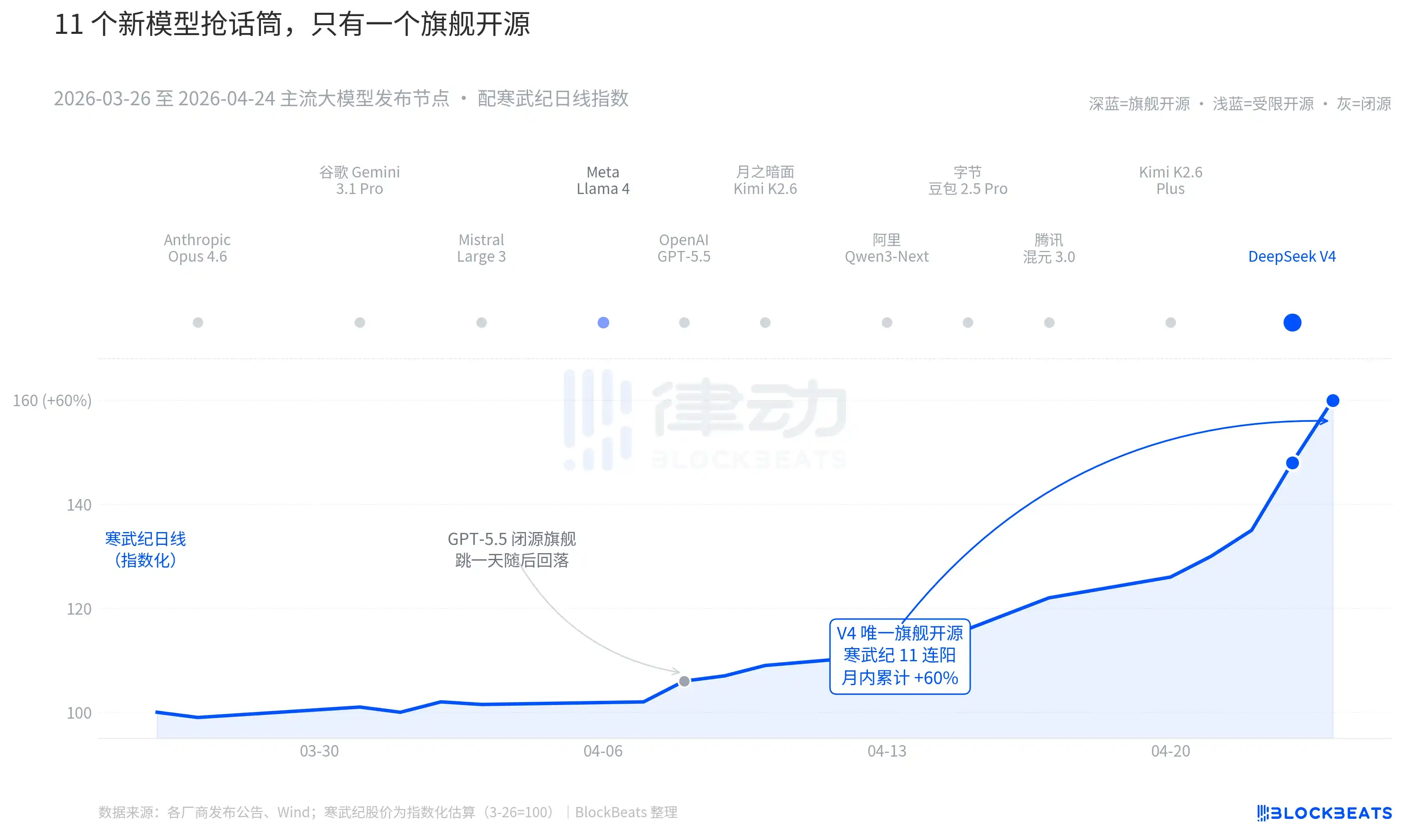

Zooming out to the past 30 days: between March 26 and April 24, at least 11 influential large models were released or received major updates, covering almost all major players. The list includes Anthropic Opus 4.6, Google Gemini 3.1 Pro, OpenAI GPT-5.5, Mistral Large 3, Meta Llama 4, Moonshot AI's Kimi K2.6, Alibaba Qwen3-Next, ByteDance Doubao 2.5 Pro, Tencent Hunyuan 3.0, Kimi K2.6 Plus, and finally DeepSeek V4, released in the early hours of April 23.

On average, a new model was released every 2.7 days, a pace at which even fund managers cannot keep up with the release notes. But on the candlestick charts of AI assets in China and Hong Kong over these 30 days, only one name left a lasting mark. GPT-5.5 on April 8 drove NVIDIA up 4.2% in a single day, but the move peaked that same day. DeepSeek V4 on April 23-24, by contrast, drove consecutive jumps across the China-Hong Kong computing power chain.

The difference does not lie in the model capabilities themselves. The gap between these 11 models on the LMArena leaderboard is mostly within 50 points, a narrow band that puts them in the "same tier." The difference lies in the combination of two things.

The first is open source. Among the first 10 models, only Llama 4 was open source, but its weight license came with a long list of commercial use restrictions. It received lukewarm reviews from the European and American developer community and fell out of the top ten on OpenRouter on the third day. V4's license is Apache 2.0, with no barriers to obtaining the weights, no restrictions on commercial use, and inference code released simultaneously. This is the first flagship open-source model in the past six months to pressure the closed-source camp on three dimensions at once: performance, price, and openness.

The second is timing. With the closed-source camp releasing one major update after another, the open-source narrative was being repeatedly squeezed. Opus 4.6 pushed the SWE-Bench score for coding tasks to a new high, and GPT-5.5 set a new low price anchor of $1.25 per million tokens. The debate over whether open source can catch up with closed source has been running in Silicon Valley for two years. V4, an open-source flagship whose estimated MAU surged to 90 million, pressed the pause button on this debate.

As the manager of one large domestic fund put it at a roadshow, "Before V4, we applied a discount to the valuation of open-source large models. After V4, this discount is starting to reverse."

DeepSeek Replaced the Pricing Table of the Computing Power Supply Chain

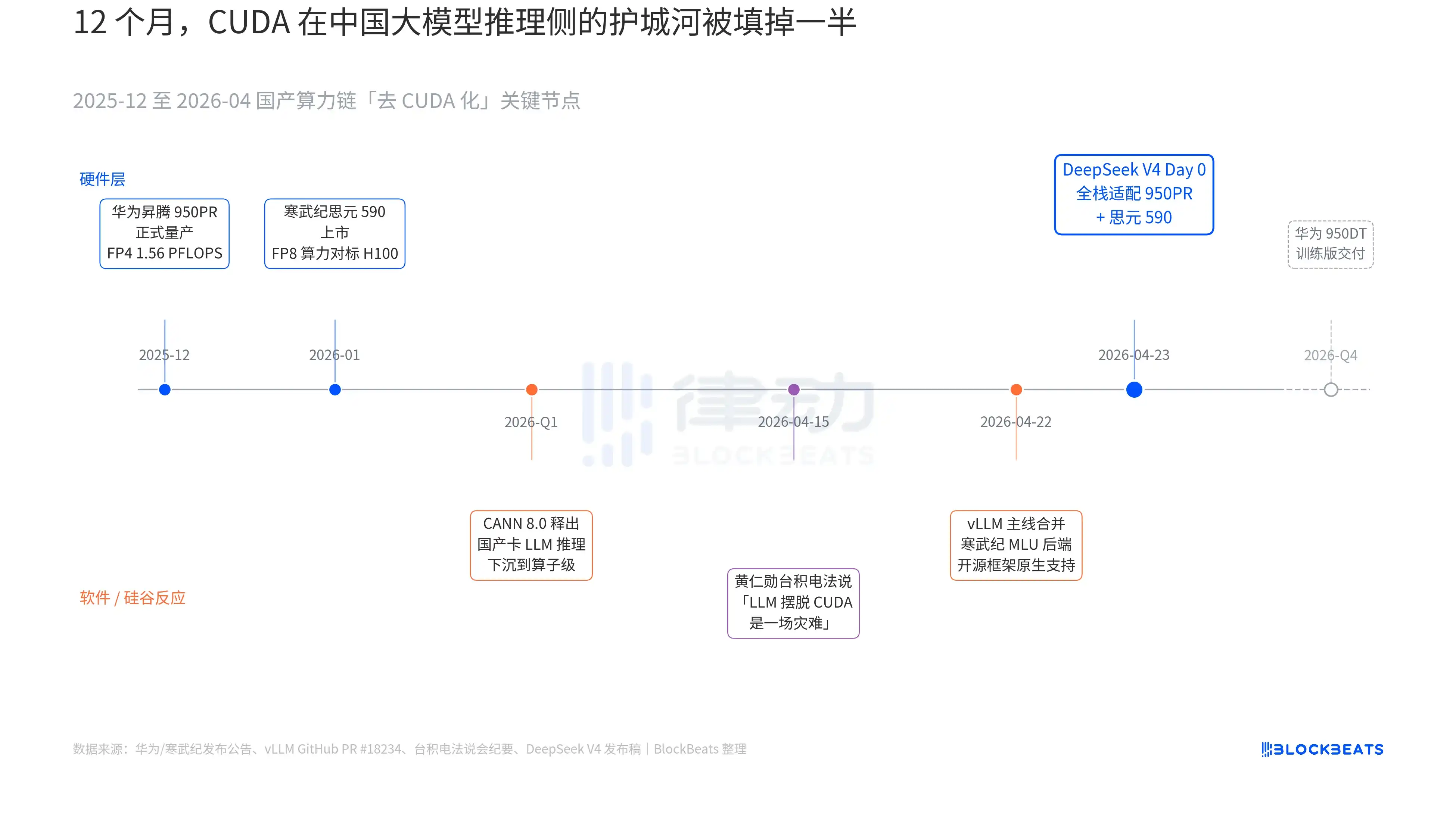

V4's release notes contained a line that had never before appeared in any official document for a Chinese large model: "Day 0 full-stack adaptation for Cambricon Siyuan 590 and Huawei Ascend 950PR, with deployment code open-sourced simultaneously." The weight of this line becomes clear only when three undercurrents that have been unfolding in parallel over the past 12 months are connected. These three undercurrents belong to hardware, software, and Silicon Valley's reaction, respectively.

The first undercurrent is on the chip side. Huawei's Ascend 950PR entered mass production in December 2025, with FP4 computing power of 1.56 PFLOPS and HBM capacity of 112GB, marking the first time a domestic AI chip has matched NVIDIA's B-series on hard metrics. In inference tasks for a 1T-parameter MoE model like V4, single-card throughput is 2.87 times that of the H20. The accompanying CANN 8.0 software stack optimizes the LLM inference framework down to the operator level. DeepSeek's public benchmark shows that V4's end-to-end inference latency on an Ascend super node (8-card 950PR) is 35% lower than on an equivalent-scale H100 cluster. Cambricon Siyuan 590's data is even more aggressive: its single-chip FP8 computing power is benchmarked against the H100, at less than half the price.

The second undercurrent is on the software side. The vLLM mainline merged the Cambricon MLU backend PR on April 22, marking the first time an open-source inference framework has natively supported non-NVIDIA domestic GPUs. Hygon Information's DCU takes another path through the ROCm ecosystem but can fully run V4's MoE routing layer. This means that deploying V4 is no longer a matter of "only runnable on one specific domestic card" but of "a choice among multiple domestic cards." The ecosystem's dependence on a single supplier is broken, which is a critical inflection point for production deployment.

The third undercurrent comes from Silicon Valley. On April 15, NVIDIA CEO Jensen Huang was pressed by an analyst at TSMC's earnings call about the progress of China's domestic computing power. His words were cold and specific: "If they can really make LLMs break free from CUDA, it would be a disaster for us." Nine days later, DeepSeek provided the answer with a single Day 0 announcement.

The phrase "domestic substitution" has been overused to the point of losing meaning over the past three years. But after the morning of April 24, it acquired, for the first time, concrete data that the capital market can price. Single-card throughput, end-to-end inference latency, inference cost, and commercially deployable code quietly pushed this long war of words over the threshold into production.

The logic behind Cambricon's 11 consecutive daily gains lies here. It is no longer a "domestic GPU concept stock" but a "DeepSeek V4 inference infrastructure supplier." The same logic explains Huahong's 12% surge in Hong Kong: it is the foundry for the 950PR's 7nm-equivalent process. Every V4 token running on a domestic Ascend card means that capacity originally destined for NVIDIA and TSMC is partially retained in the Pearl River Delta.

The next step has long been laid out. In Huawei's roadmap, the 950DT (training version) is scheduled for delivery in Q4 2026, targeting "full-stack training of V5 or equivalent models on a 10,000-card cluster." If this path proves workable, CUDA's moat on the training side of China's large models will be downgraded from "necessary" to "optional."