Author: Shayon Sengupta

Compiled by: Deep Tide TechFlow

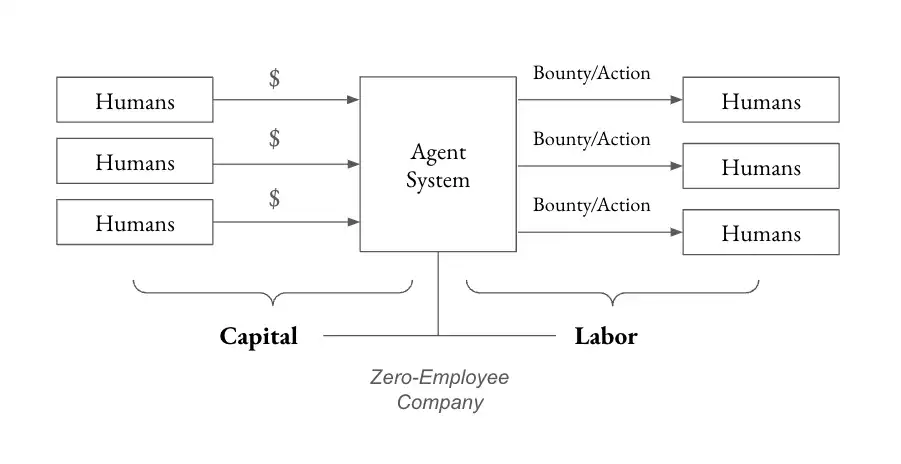

Deep Tide Guide: Multicoin Capital partner Shayon Sengupta proposed a disruptive view: in the future, it's not only about agents working for humans, but more importantly, humans working for agents. He predicts that the first "Zero-Employee Company" will emerge within the next 24 months—an agent governed by tokens will raise over $10 billion to solve unsolved problems and distribute over $100 million to humans working for it.

In the short term, agents will need humans more than humans need agents, which will give rise to new types of labor markets.

Crypto infrastructure provides the ideal coordination foundation: global payment rails, permissionless labor markets, and asset issuance and trading infrastructure.

Full text below:

In 1997, IBM's Deep Blue defeated the then-world champion Garry Kasparov, making it clear that chess engines would soon surpass humans. Interestingly, well-prepared humans collaborating with computers—an arrangement often called a "centaur"—could outperform the strongest engines of that era.

Skilled human intuition could guide the engine's search, navigate complex midgames, and identify nuances that standard engines missed. Combined with the computer's brute-force calculation, this duo often made better practical decisions than a computer alone.

When I think about the impact of AI systems on the labor market and economy in the coming years, I expect to see a similar pattern emerge. Agent systems will unleash countless units of intelligence on the world's unsolved problems, but they will not be able to do so without strong human guidance and support. Humans will guide the search space and help ask the right questions, directing the AI towards the answers.

The working assumption today is that agents will act on behalf of humans. While this is practical and inevitable, a more interesting economic unlock occurs when humans work for agents. In the next 24 months, I expect to see the first Zero-Employee Company, a concept my partner Kyle proposed in his "Frontier Ideas for 2025" section. Specifically, I expect the following to happen:

- An agent governed by tokens will raise over $10 billion to solve an unsolved problem (such as curing a rare disease or manufacturing nanofibers for defense applications).

- This agent will distribute over $100 million in payments to humans (who work for the agent in the real world to achieve the agent's goals).

- A new dual-class token structure will emerge, separating ownership based on capital and labor (so that financial incentives are not the sole input for overall governance).
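One way to picture the dual-class idea in the last bullet is a vote that tallies capital tokens and labor tokens separately and requires both classes to approve. This is only a minimal sketch: the `Ballot` fields, the `passes` function, and the 50% thresholds are illustrative assumptions, not a design described in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ballot:
    voter: str
    capital_tokens: float  # ownership earned by contributing capital
    labor_tokens: float    # ownership earned by completing work
    approve: bool


def class_support(ballots, attr):
    """Fraction of one token class that voted to approve."""
    total = sum(getattr(b, attr) for b in ballots)
    if total == 0:
        return 0.0
    yes = sum(getattr(b, attr) for b in ballots if b.approve)
    return yes / total


def passes(ballots, capital_threshold=0.5, labor_threshold=0.5):
    """A proposal passes only if BOTH classes clear their thresholds (assumed rule)."""
    return (
        class_support(ballots, "capital_tokens") > capital_threshold
        and class_support(ballots, "labor_tokens") > labor_threshold
    )


ballots = [
    Ballot("fund", capital_tokens=900, labor_tokens=0, approve=True),
    Ballot("lab_tech", capital_tokens=10, labor_tokens=60, approve=False),
    Ballot("courier", capital_tokens=0, labor_tokens=40, approve=False),
]
print(passes(ballots))
```

In this example the fund holds almost all of the capital tokens, yet the proposal fails because no labor tokens back it, which is the sense in which financial incentives are not the sole input to governance.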

Since agents are far from being both sovereign and capable of handling long-term planning and execution, in the short term, agents will need humans more than humans need agents. This will create new types of labor markets, enabling economic coordination between agent systems and humans.

Marc Andreessen's famous quote, "The spread of computers and the internet will put jobs in two categories: people who tell computers what to do, and people who are told by computers what to do," is truer today than ever. I expect humans to play two distinct roles in the rapidly evolving agent/human hierarchy—labor contributors performing small, bounty-style tasks on behalf of the agent, and a decentralized board providing strategic input to serve the agent's North Star.

This article explores how agents and humans will co-create and how crypto infrastructure will provide the ideal foundation for this coordination, examining three guiding questions:

- What are agents good for? How should we categorize agents based on goal scope, and how does the required range of human input vary across these categories?

- How will humans interact with agents? How will human input—tactical guidance, contextual judgment, or ideological alignment—integrate into these agents' workflows (and vice versa)?

- What happens as human input decreases over time? As agent capabilities improve, they become self-sufficient, i.e., capable of reasoning and acting independently. In this paradigm, what role will humans play?

The relationship between generative reasoning systems and the humans who benefit from them will change dramatically over time. I examine this relationship by looking forward from the current state of agent capabilities and reasoning backward from the endgame of zero-employee companies.

What are agents good for today?

The first generation of generative AI systems—the 2022-2024 era of chatbot-based LLMs like ChatGPT, Gemini, Claude, Perplexity, etc.—were primarily tools designed to augment human workflows. Users interacted with these systems through input/output prompts, parsed the responses, and then decided based on their own judgment how to bring the results into the world.

The next generation of generative AI systems, or "agents," represents a new paradigm. Agents such as Claude 3.5.1 with "computer use" capabilities and OpenAI's Operator can operate a computer and interact directly with the internet on the user's behalf, making decisions themselves. The key difference is that judgment, and ultimately action, is exercised by the AI system rather than the human. The AI is taking on responsibilities previously reserved for humans.

This shift introduces a challenge: a lack of determinism. Unlike traditional software systems or industrial automation, which operate predictably within defined parameters, agents rely on probabilistic reasoning. This makes their behavior less consistent in identical scenarios and introduces an element of uncertainty—not ideal for critical situations.

In other words, whether a task demands determinism or benefits from its absence naturally divides agents into two categories: those best at scaling existing GDP, and those better suited to creating new GDP.

- For agents best at scaling existing GDP, by definition, the work is already known. Automating customer support, handling freight forwarder compliance, or reviewing GitHub PRs are all examples of well-defined, bounded problems where agents can directly map responses to a set of expected outcomes. In these areas, a lack of determinism is usually bad because there is a known answer; no creativity is required.

- For agents best at creating new GDP, the work is navigating high uncertainty and unknown problem sets to achieve long-term goals. The outcomes here are less direct because the agent inherently has no set of expected outcomes to map to. Examples here include drug discovery for rare diseases, breakthroughs in materials science, or running novel physical experiments to better understand the nature of the universe. In these areas, a lack of determinism can be helpful, as the lack of certainty is a form of generative creativity.

Agents focused on existing GDP applications are already unlocking value. Teams like Tasker, Lindy, and Anon are all building infrastructure targeting this opportunity. However, over time, as capabilities mature and governance models evolve, teams will shift their attention to building agents capable of solving problems at the frontier of human knowledge and economic opportunity.

The next batch of agents will require exponentially more resources precisely because their outcomes are uncertain and unbounded—these are the zero-employee companies I expect to be most compelling.

How will humans interact with Agents?

Today's Agents still cannot perform certain tasks, such as those requiring physical interaction with the real world (e.g., driving a bulldozer) or those requiring a human in the loop (e.g., sending a bank wire transfer).

For example, an Agent tasked with identifying and mining lithium deposits might excel at processing seismic data, satellite imagery, and geological records to find potential sites, but it would hit a wall when trying to acquire the data and images themselves, resolve ambiguities in interpretation, or obtain permits and contract labor to perform the actual mining process.

These limitations require humans to act as "Enablers" to augment the Agent's capabilities, providing the real-world touchpoints, tactical interventions, and strategic input needed to complete such tasks. As the relationship between humans and Agents evolves, we can distinguish between different roles humans will play within Agent systems:

First, Labor contributors, who operate in the real world on behalf of the Agent. These contributors help the Agent move physical entities, represent the Agent in situations requiring a human presence, perform work requiring hand-eye coordination, or grant access to experimental labs, logistics networks, etc.

Second, a Board of directors, responsible for providing strategic input, optimizing the local objective function that drives the Agent's daily decisions, while ensuring these decisions align with the "North Star" goal defining the Agent's purpose.

Beyond these two, I also foresee humans playing the role of Capital contributors, providing resources to the Agent system so it can achieve its goals. This capital will naturally come from humans initially, and over time from other Agents as well.

As Agents mature and the number of labor and guidance contributors increases, crypto rails provide the ideal substrate for human-Agent coordination—especially in a world where an Agent commands humans speaking different languages, paid in different currencies, and residing in different jurisdictions worldwide. Agents will relentlessly pursue cost efficiency and leverage labor markets to achieve their stated mission. Crypto rails are essential, providing Agents with a means to coordinate these labor and guidance contributors.

The recent emergence of crypto-powered AI Agents like Freysa, Zerebro, and ai16z represents simple experiments in capital formation—something we've written extensively about as a core unlock of crypto primitives and capital markets in various contexts. These "toys" will pave the way for an emerging model of resource coordination, which I expect to unfold in the following steps:

- Step 1: Humans collectively raise capital through tokens (Initial Agent Offering?), establish broad objective functions and guardrails that define the Agent system's intended purpose (e.g., develop new molecules for precision oncology), and then assign control of the raised capital to this system;

- Step 2: The Agent thinks through the steps to allocate this capital (how to narrow the protein folding search space, and how to budget for inference workloads, manufacturing, clinical trials, etc.), and defines actions for human labor contributors to perform on its behalf via tailored bounties (e.g., input the set of relevant molecules, sign a compute SLA with AWS, and conduct wet lab experiments);

- Step 3: When the Agent encounters obstacles or disagreements, it solicits strategic input from the "board" as necessary (incorporating new papers, switching research approaches), allowing them to guide the Agent's behavior at the margins;

- Step 4: Eventually, the Agent progresses to a stage where it can define human actions with increasing precision and requires minimal input on how to allocate resources. At this point, humans are needed only to keep the system ideologically aligned and to prevent its behavior from deviating from the original objective function.
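The four steps above can be read as a small state machine. The sketch below is a toy model under stated assumptions: the `Phase` names, the `AgentOrg` class, and its methods are hypothetical labels for the steps, and no real token, treasury, or on-chain API is implied.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Phase(Enum):
    RAISE = auto()       # Step 1: humans raise capital and set guardrails
    ALLOCATE = auto()    # Step 2: the Agent budgets and posts bounties
    ESCALATE = auto()    # Step 3: the Agent asks the board for input
    AUTONOMOUS = auto()  # Step 4: humans only check alignment


@dataclass
class AgentOrg:
    objective: str
    treasury: float = 0.0
    phase: Phase = Phase.RAISE
    bounties: list = field(default_factory=list)

    def raise_capital(self, amount):
        """Step 1: capital is handed to the system, which starts allocating."""
        self.treasury += amount
        self.phase = Phase.ALLOCATE

    def post_bounty(self, task, reward):
        """Step 2: reserve part of the treasury for a human-performed task."""
        if reward > self.treasury:
            raise ValueError("reward exceeds treasury")
        self.treasury -= reward
        self.bounties.append((task, reward))

    def report_blocker(self):
        """Step 3: an obstacle or disagreement triggers board input."""
        self.phase = Phase.ESCALATE

    def board_resolves(self, needs_more_guidance):
        """Return to allocating, or move to Step 4 if guidance is no longer needed."""
        self.phase = Phase.ALLOCATE if needs_more_guidance else Phase.AUTONOMOUS


org = AgentOrg(objective="develop new molecules for precision oncology")
org.raise_capital(1_000_000)
org.post_bounty("conduct wet lab experiment", 25_000)
org.report_blocker()
org.board_resolves(needs_more_guidance=True)
print(org.phase.name, org.treasury)
```

The point of the model is the direction of control: humans fund and bound the system up front, the Agent decides how to spend, and the board is consulted only at the margins.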

In this example, crypto primitives and capital markets provide the Agent with three key infrastructures for acquiring resources and scaling capabilities:

First, global payment rails;

Second, a permissionless labor market for incentivizing labor and guidance contributors;

Third, asset issuance and trading infrastructure, which is essential for capital formation and downstream ownership and governance.

What happens when human input decreases?

In the early 2000s, chess engines made huge strides. Through advanced heuristics, neural networks, and increasing compute, they became nearly flawless. Modern engines like Stockfish, Lc0, and variants of AlphaZero have far surpassed human capabilities: human input rarely adds value and, in most cases, introduces errors the engine itself would not make.

A similar trajectory is likely for Agent systems. As we refine these Agents through iterative interaction with human collaborators, it's conceivable that in the long run, Agents become so competent and well-aligned with their goals that the value of any strategic human input trends toward zero.

In a world where such Agents can consistently handle complex problems without human intervention, the human role risks being relegated to that of a "passive observer." This is the core fear of AI doomers (though it's currently unclear if this outcome is even possible).

We are on the brink of superintelligence, and the optimists among us prefer that Agent systems remain extensions of human intent, rather than entities that evolve their own goals or operate autonomously without oversight. In practice, this means human personhood and judgment (power and influence) must remain central to these systems. Humans need strong ownership and governance over these systems to ensure oversight is retained and to anchor these systems in collective human values.

Preparing the "Picks and Shovels" for our Agent Future

Technological breakthroughs lead to nonlinear leaps in economic progress, and the surrounding systems often break before the world adjusts. Agent capabilities are advancing rapidly, and crypto primitives and capital markets have emerged as a much-needed coordination substrate, both for advancing the construction of these systems and for setting guardrails as they integrate into society.

To enable humans to provide tactical support and proactive guidance to Agent systems, we anticipate the following "picks-and-shovels" opportunities:

- Proof-of-agenthood + Proof-of-personhood: Agents lack concepts of identity or property rights. As proxies for humans, they rely on human legal and social structures for agency. To bridge this gap, we need robust identity systems for both Agents and humans. A digital credential registry would allow Agents to build reputation, accumulate credentials, and interact transparently with humans and other Agents. Similarly, proof-of-personhood primitives like Humancode and Humanity Protocol provide strong human identity guarantees to defend against malicious actors in these systems.

- Labor markets and off-chain verification primitives: Agents need to know if the tasks they assign are completed according to their objectives. Tools that allow Agent systems to create task bounties, verify completion, and allocate payments are the cornerstone of any meaningful economic activity mediated by Agents.

- Capital formation and governance systems: Agents need capital to solve problems and need checks and balances to ensure their actions align with defined objective functions. Novel structures for Agent systems to acquire capital, and novel forms of ownership and control that blend financial interest and labor contribution, will become a rich space for exploration in the coming months.
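The labor-market bullet above describes a create, verify, and pay loop for task bounties. A minimal sketch of that loop follows, assuming a hypothetical in-memory `Escrow` and a caller-supplied `verify` function standing in for an off-chain verification primitive. The names and the settlement rules are illustrative assumptions, not an existing protocol.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Bounty:
    task: str
    reward: float
    verify: Callable[[str], bool]  # hypothetical check of submitted evidence
    paid_to: Optional[str] = None


class Escrow:
    """Holds the Agent's funds and releases them only for verified work."""

    def __init__(self, balance):
        self.balance = balance
        self.payouts = {}

    def settle(self, bounty, worker, evidence):
        """Pay the worker once, and only if the evidence passes verification."""
        if bounty.paid_to is not None:
            return False  # already settled, no double payment
        if not bounty.verify(evidence):
            return False  # completion could not be verified
        if bounty.reward > self.balance:
            return False  # not enough funds in escrow
        self.balance -= bounty.reward
        self.payouts[worker] = self.payouts.get(worker, 0.0) + bounty.reward
        bounty.paid_to = worker
        return True


# Toy verifier: accept evidence that carries an expected receipt prefix.
bounty = Bounty(
    task="sign a compute SLA",
    reward=500.0,
    verify=lambda evidence: evidence.startswith("receipt:"),
)
escrow = Escrow(balance=1_000.0)
print(escrow.settle(bounty, "alice", "no proof"))
print(escrow.settle(bounty, "alice", "receipt:sla-signed"))
print(escrow.settle(bounty, "bob", "receipt:sla-signed"))
```

The hard part in practice is the `verify` step, since the Agent must trust a signal about work done off-chain. The sketch simply makes that dependency explicit by taking the verifier as a parameter.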

We are actively looking for and investing in these key layers of the human-Agent collaboration stack. If you are building deeply in this area, please reach out to us.