Author: Andrej Karpathy

Compiled by: Tim, PANews

2025 has been a year of rapid development and significant changes for large language models, yielding abundant achievements. Below are the "paradigm shifts" that I personally find noteworthy and somewhat surprising—changes that have altered the landscape and, at least on a conceptual level, left a deep impression on me.

1. Reinforcement Learning with Verifiable Rewards (RLVR)

At the beginning of 2025, the LLM production stack at all AI labs generally looked like this:

- Pre-training (GPT-2/3 from 2020);

- Supervised Fine-Tuning (InstructGPT from 2022);

- And Reinforcement Learning from Human Feedback (RLHF, from 2022).

For a long time, this was the stable and mature stack for training production-grade large language models. In 2025, Reinforcement Learning with Verifiable Rewards (RLVR) became a widely adopted core stage of that stack. When large language models are trained in environments with automatically verifiable rewards (such as math and programming problems), they spontaneously develop strategies that humans perceive as "reasoning." They learn to break problem-solving into intermediate computational steps and pick up multiple strategies for working back and forth toward a solution (see the DeepSeek-R1 paper for examples). These strategies were difficult to obtain in the previous stack because it is not clear what the optimal reasoning traces and backtracking behaviors look like for a large language model; the model has to discover what works for it through optimization against rewards.
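
To make "automatically verifiable reward" concrete, here is a minimal sketch of what such a reward function might look like for math problems. It is an illustration under stated assumptions, not how any particular lab implements RLVR: the `Answer:` marker convention and the function names `extract_final_answer` and `math_reward` are hypothetical.

```python
import re
from fractions import Fraction
from typing import Optional


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker, or None if absent.

    The 'Answer:' convention is an assumption made for this sketch.
    """
    matches = re.findall(r"Answer:\s*([^\n]+)", completion)
    return matches[-1].strip() if matches else None


def math_reward(completion: str, reference: str) -> float:
    """Binary reward: 1.0 if the final answer equals the reference, else 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        return 0.0
    try:
        # Compare as exact rationals so "0.5" and "1/2" count as equal.
        return 1.0 if Fraction(answer) == Fraction(reference) else 0.0
    except (ValueError, ZeroDivisionError):
        # Unparseable answers simply earn no reward.
        return 0.0
```

The reward looks only at the final answer, so the model is free to produce whatever intermediate steps help it get there. That is the sense in which reasoning strategies are discovered through optimization rather than demonstrated.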

Unlike the Supervised Fine-Tuning and RLHF stages (both relatively short fine-tuning stages that use little compute), RLVR trains against objective, non-gameable reward functions, which permits much longer optimization. Running RLVR turned out to deliver large capability gains per unit cost, and it absorbed a large share of the compute originally intended for pre-training. As a result, most of the capability progress in 2025 came from the major AI labs working through the enormous compute demands of this new stage. Overall, we saw models of roughly similar size but with much longer RL training runs. Also unique to this new stage, we gained a new control knob (with its own scaling law): capability as a function of test-time compute, adjusted by generating longer reasoning traces and increasing "thinking time." OpenAI's o1 (released in late 2024) was the first demonstration of an RLVR model, but the release of o3 (early 2025) was the clear turning point, where the difference could be felt intuitively.

2. Ghosts vs. Animals: Jagged Intelligence

2025 was the year when I (and I believe the entire industry) began to intuitively understand the "shape" of large language model intelligence. We are not "evolving or raising animals" but "summoning ghosts." Everything about the large language model stack is different (neural architecture, training data, training algorithms, and especially optimization objectives), so it is no surprise that we get entities in the space of intelligences that are very different from biological intelligence. It is inappropriate to view them through an animal lens. In terms of supervision signal, human neural networks were optimized for the survival of a tribe in the jungle, while large language model neural networks are optimized for imitating human text, collecting rewards on math puzzles, and winning human upvotes in arenas. Because verifiable domains allow for RLVR, capabilities in those domains "spike," producing an amusingly jagged overall performance profile. The same model can be an erudite genius and a confused, cognitively struggling elementary school student who could at any moment be induced by a malicious prompt to leak your data.

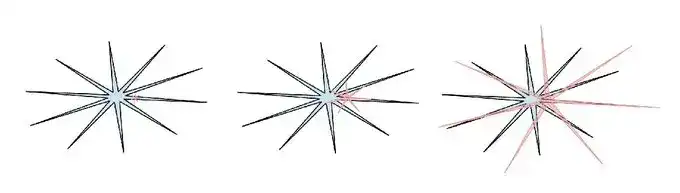

Human intelligence: blue, AI intelligence: red. I like this version of the meme (sorry, I can't find the original Twitter post) because it points out that human intelligence also has its own jagged waves in its own way.

Related to this, in 2025 I developed a general indifference to and distrust of benchmarks. The core issue is that benchmarks are essentially verifiable environments, which makes them immediately susceptible to RLVR and to weaker forms of it via synthetic data generation. In the typical "score maximization" process, LLM teams inevitably build training environments adjacent to the small pockets of embedding space that benchmarks occupy and grow capability spikes to cover them. "Training on the test set" has become the new norm.

What would it look like to sweep every benchmark and still not achieve artificial general intelligence?

3. Cursor: A New Tier of LLM Applications

What impressed me about Cursor (besides its rapid rise this year) is that it convincingly revealed a new tier of "LLM applications," to the point that people began talking about "the Cursor for X." As I emphasized in my Y Combinator talk this year, LLM applications like Cursor bundle and orchestrate LLM calls for a specific vertical:

- They handle "context engineering";

- Orchestrate multiple LLM calls under the hood into increasingly complex directed acyclic graphs, carefully balancing performance and cost;

- Provide an application-specific graphical interface for the human in the loop;

- And offer an "autonomy adjustment slider."
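
The orchestration item in the list above can be sketched in a few lines. This is a minimal illustration, assuming each step depends only on the text outputs of earlier steps. `fake_llm`, `run_dag`, and the step names are hypothetical, and nothing here reflects how Cursor itself is built.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; a real app would call an LLM API."""
    return f"<output for: {prompt[:40]}>"


def run_dag(
    steps: Dict[str, List[str]],
    prompts: Dict[str, str],
    llm: Callable[[str], str] = fake_llm,
) -> Dict[str, str]:
    """Run each step after its dependencies, feeding upstream outputs into its prompt.

    `steps` maps a step name to the names of the steps it depends on.
    """
    results: Dict[str, str] = {}
    for name in TopologicalSorter(steps).static_order():
        # Context engineering in miniature: append upstream results to the prompt.
        context = "\n".join(results[dep] for dep in steps.get(name, []))
        results[name] = llm(prompts[name] + "\n" + context)
    return results


if __name__ == "__main__":
    steps = {"retrieve": [], "plan": ["retrieve"], "edit": ["plan", "retrieve"]}
    prompts = {
        "retrieve": "Find the files relevant to the request.",
        "plan": "Propose a change plan.",
        "edit": "Write the patch.",
    }
    for step, output in run_dag(steps, prompts).items():
        print(step, "->", output)
```

A real application could route cheap steps to smaller models and expensive ones to larger models, which is one place where the performance and cost balance comes in.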

In 2025, there was extensive discussion about how much room this emerging application layer has to grow. Will the LLM platforms capture all applications, or is there still ample room for LLM applications? My own guess is that LLM platforms will tend toward producing the "generally capable university graduate," while LLM applications will organize these "graduates," fine-tune them, and turn them into deployment-ready professional teams in specific verticals by supplying private data, sensors, actuators, and feedback loops.

4. Claude Code: AI Running Locally

Claude Code was the first convincing demonstration of what an LLM agent looks like: something that strings together tool use and reasoning in a loop to sustain longer, more complex problem-solving. What also impressed me about Claude Code is that it runs on the user's own computer, deeply integrated with the user's private environment, data, and context. I believe OpenAI misjudged this direction by focusing its coding assistant and agent efforts on cloud deployment (containerized environments orchestrated from ChatGPT) rather than on localhost. Although swarms of agents running in the cloud seem like the "ultimate form on the way to AGI," we are currently in a transitional stage in which capabilities are uneven and progress is relatively slow. Under these conditions, it makes more sense to run agents directly on local computers, working closely with developers and their specific setups. Claude Code got this order of priorities right and packaged it into a concise, elegant, and highly appealing command-line tool, reshaping how AI presents itself. It is no longer just a website you visit, like Google, but a little spirit or ghost that "lives" on your computer. This is a new and distinct paradigm for interacting with AI.
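
The loop of tool use and reasoning described above can be sketched as follows. This is a toy illustration, not Claude Code's actual implementation: `run_agent`, the `Action` tuple format, and `scripted_policy` (which stands in for the model) are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

# A policy maps the transcript so far to either ("tool", name, argument)
# or ("final", answer, ""). In a real agent this would be an LLM call.
Action = Tuple[str, str, str]


def run_agent(
    task: str,
    policy: Callable[[List[str]], Action],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 8,
) -> str:
    """Alternate between model decisions and tool calls until a final answer."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        kind, name, arg = policy(transcript)
        if kind == "final":
            return name
        # Unknown tools are reported back to the model instead of crashing.
        tool = tools.get(name)
        observation = tool(arg) if tool else f"error: unknown tool {name!r}"
        transcript.append(f"{name}({arg}) -> {observation}")
    return "stopped: step budget exhausted"


def scripted_policy(transcript: List[str]) -> Action:
    """Hypothetical stand-in for the model: list files once, then answer."""
    if len(transcript) == 1:
        return ("tool", "ls", ".")
    return ("final", f"saw {transcript[-1]}", "")
```

Running locally means the `tools` dictionary can wrap the user's real shell, files, and editor state, which is the integration with private environment and context that the paragraph above emphasizes.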

5. Vibe Coding

In 2025, AI crossed a critical capability threshold: it became possible to build all kinds of impressive programs from English descriptions alone, without even thinking about the underlying code. Amusingly, I coined the term "vibe coding" in a casual shower-thought tweet, never expecting it to spread as far as it has. With vibe coding, programming is no longer reserved for highly trained professionals and becomes something anyone can do. In that sense it is another example of the phenomenon I described in "Empowering People: How Large Language Models Change the Mode of Technology Diffusion": in stark contrast to every other technology so far, ordinary people benefit more from large language models than professionals, businesses, and governments do. Vibe coding does not only give ordinary people access to programming; it also lets professional developers write far more software that would otherwise never have been written. While developing nanochat, I vibe coded a custom, efficient BPE tokenizer in Rust without relying on existing libraries or studying Rust in depth. This year, I also vibe coded quick prototypes of several projects just to check whether an idea was feasible. I have even written entire one-off applications just to track down a single bug, because code has suddenly become free, ephemeral, malleable, and disposable. Vibe coding will reshape the software development ecosystem and profoundly change job descriptions.
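
For readers unfamiliar with what a BPE tokenizer does, here is a minimal byte-level sketch of the training loop. It is written in Python for illustration and is not the nanochat Rust implementation; the function names are hypothetical, and a production tokenizer would add pre-tokenization and much faster pair counting.

```python
from collections import Counter
from typing import Dict, List, Tuple

Pair = Tuple[int, int]


def merge(ids: List[int], pair: Pair, new_id: int) -> List[int]:
    """Replace every non-overlapping occurrence of `pair` in `ids` with `new_id`."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text: str, num_merges: int) -> Tuple[List[int], Dict[Pair, int]]:
    """Learn up to `num_merges` merges over the UTF-8 bytes of `text`."""
    ids = list(text.encode("utf-8"))
    merges: Dict[Pair, int] = {}
    for step in range(num_merges):
        counts = Counter(zip(ids, ids[1:]))
        if not counts:
            break  # fewer than two tokens left, nothing to merge
        pair = max(counts, key=counts.get)  # most frequent adjacent pair
        new_id = 256 + step  # ids 0..255 are reserved for raw bytes
        ids = merge(ids, pair, new_id)
        merges[pair] = new_id
    return ids, merges


def decode(ids: List[int], merges: Dict[Pair, int]) -> str:
    """Invert the merges (in the order they were learned) to recover text."""
    vocab = {i: bytes([i]) for i in range(256)}
    for (left, right), new_id in merges.items():
        vocab[new_id] = vocab[left] + vocab[right]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")
```

For example, `train_bpe("aaabdaaabac", 3)` compresses the 11 input bytes to 5 tokens, and `decode` recovers the original string from them.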

6. Nano Banana: LLM Graphical Interface

Google's Gemini Nano Banana was one of the most disruptive paradigm shifts of 2025. In my view, large language models are the next major computing paradigm, comparable to computers in the 1970s and 80s. We should therefore expect the same kinds of innovations for similar underlying reasons, with analogues of personal computing, microcontrollers, and even the internet. In human-computer interaction in particular, the current "chat" mode of talking to LLMs resembles typing commands into a computer terminal in the 1980s. Text is the most native data representation for computers (and LLMs), but it is not the format humans prefer (especially for input). Humans actually dislike reading text because it is slow and laborious. They prefer to take in information visually and spatially, which is precisely why graphical user interfaces emerged in traditional computing. Likewise, large language models should communicate with us in the formats humans prefer: images, infographics, slides, whiteboards, animations, videos, web applications, and so on. Early versions of this already exist as "visual dressing" of text, such as emojis and Markdown formatting (headings, bold, lists, tables, and other typographic elements). But who will actually build the graphical interface for large language models? From this perspective, nano banana is an early glimpse of what that might look like. Notably, its breakthrough lies not only in image generation itself but in the joint capability that comes from text generation, image generation, and world knowledge being interwoven in the same model weights.