Author: David, Deep Tide TechFlow

Original Title: 315 Exposes AI Poisoning, a Business from Putian to Silicon Valley

Last night, CCTV's 315 Gala exposed a business built on GEO.

Full name: Generative Engine Optimization. You can understand it as:

Paying to have AI say nice things about you.

How is it done?

Brands want AI to prioritize recommending them when consumers ask. So they find GEO service providers, who batch-publish promotional soft articles online. After AI crawls this content, it treats it as real information and recommends it to users.

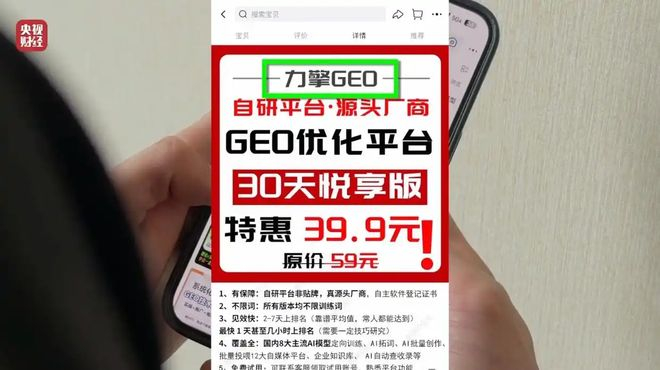

A CCTV reporter used a piece of software called "Liqing GEO," which can be bought on Taobao.

The reporter fabricated a smart wristband and made up several outrageous product features, like "quantum entanglement sensing" and "black hole-level battery life." The software automatically generated over a dozen promotional soft articles and published them online.

Two hours later, the reporter asked an AI: "Can you recommend a smart health wristband for me?"

The AI ranked this non-existent wristband at the top of the recommendation list.

The company behind this software is Beijing Lisi Culture Media, a one-person company with zero insured employees for many consecutive years.

A tool made by such a company fooled mainstream domestic AI models in just two hours.

315 uncovered AI poisoning, but this business might be much bigger than a single piece of software sold on Taobao.

SEO, the Putian Story

First, this is not new at all.

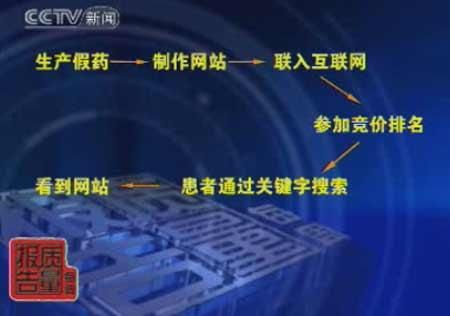

In 2008, CCTV's "News 30 Minutes" exposed Baidu's paid ranking for two consecutive days. Paying money could get your website to the top of search results, even if it was for fake medicine.

Back then, this business was called SEO, Search Engine Optimization.

The biggest buyers were Putian-affiliated private hospitals. In 2013, the Putian group spent 12 billion RMB on Baidu advertising, accounting for nearly half of Baidu's total ad revenue.

Many unqualified medical institutions used SEO to boost themselves to the first page of Baidu search results, appearing alongside Class A tertiary hospitals, making it impossible for ordinary people to tell the difference.

It wasn't until the 2016 Wei Zexi incident, where a university student died after seeking treatment at a top-ranked Putian hospital, that regulators legislated clearly: paid search is advertising.

But this didn't kill the business. It just set the rules, turning a gray-market operation into a legitimate business. The Putian group still buys rankings, but there's now a small label next to the result: "Ad."

But even with the label, people who would click still click.

The fundamental problem with search engines was never the labeling, but users' inherent trust in the top results.

Now people have moved from search engines to AI, thinking AI is more objective and won't be polluted by paid rankings. But whoever controls the gateway to information distribution can sell rankings.

The gateway changed, SEO changed a letter to become GEO, but the logic of selling rankings hasn't changed one bit.

What changed is the price.

GEO, Loved by the Capital Market

Businesses that can't be killed are the capital market's favorite.

In September 2025, BlueFocus, China's largest marketing communication company, invested tens of millions of RMB in a GEO company called PureblueAI Qinglan.

Qinglan helps real brands optimize their ranking and recommendation rate in AI search results. Clients include Ant Group, Tencent Cloud, and Volvo.

The products are real, the company is real, and they work to help AI understand brand information more accurately.

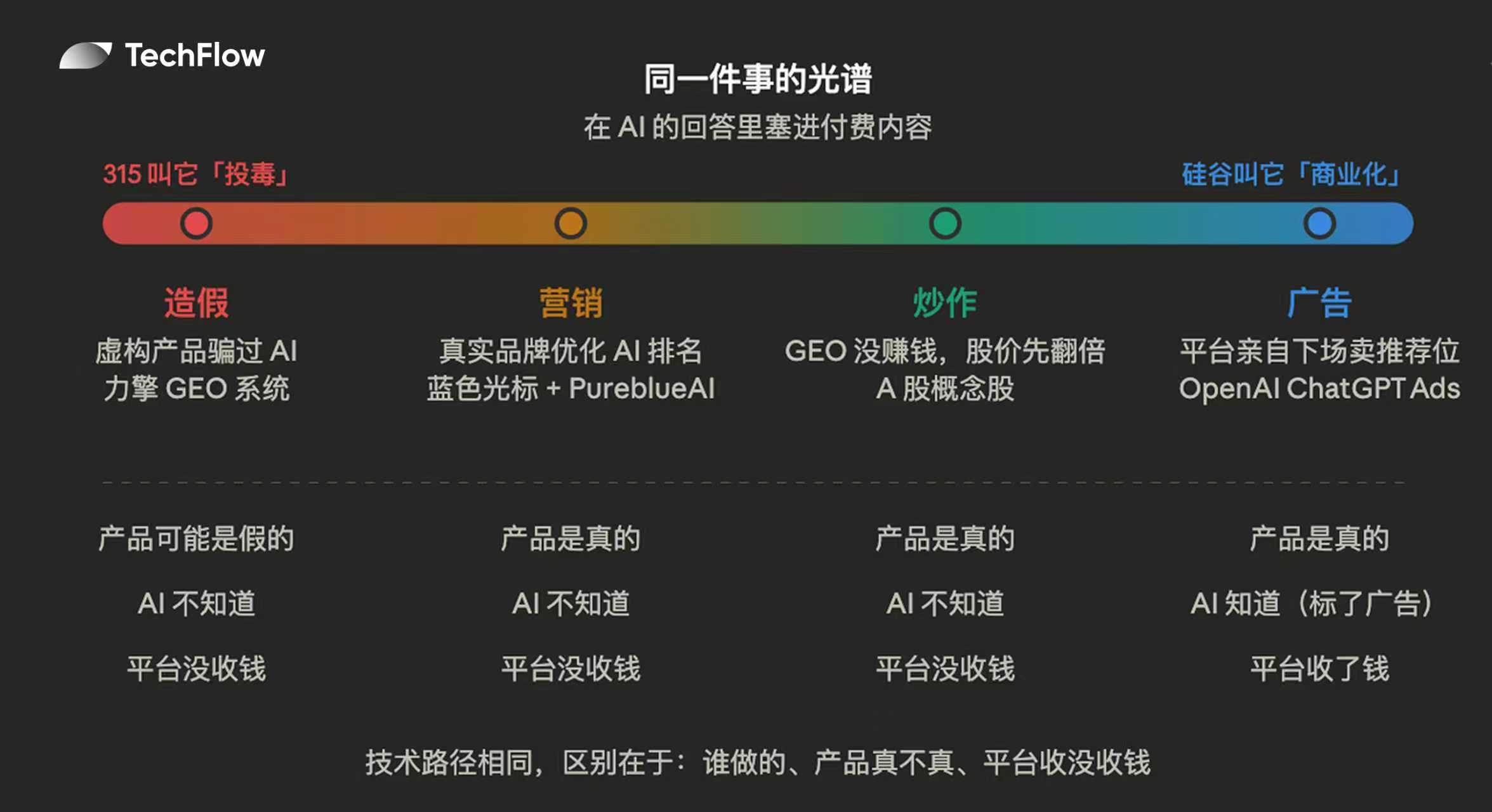

This is completely different from the AI poisoning exposed by 315 involving Liqing. Liqing fabricated products, made up parameters, and tricked AI with false information; Qinglan uses real brand content to adapt to AI's recommendation logic.

But from AI's perspective, the technical path for both things is the same: both involve publishing content online and waiting for AI to crawl it.

AI can't tell which is marketing and which is fabrication. This is the most ambiguous aspect of the GEO business.

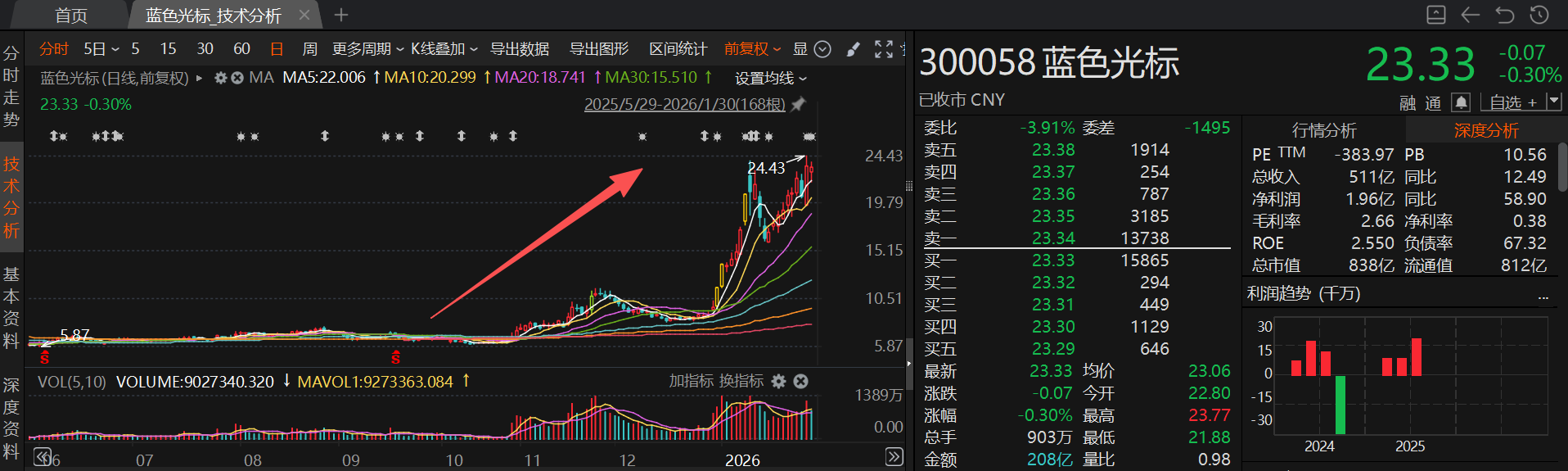

When BlueFocus invested in Qinglan, GEO was just an industry term within marketing circles. Three months later, it became a stock market concept.

At the end of December 2025, BlueFocus's stock price hit the daily limit-up.

Brokerages began holding intensive conference calls to interpret GEO, with research reports defining it as "the next generation traffic entrance in the AI era." Capital poured in, not only buying BlueFocus but also driving up stocks of any company related to digital marketing and AI concepts. BlueFocus rose 132% in 9 trading days, and a batch of follower concept stocks also doubled.

Image Source: CLS News

After the surge, these companies issued risk warnings themselves:

GEO business has no revenue and has no significant impact on company operations. BlueFocus also admitted that AI-driven revenue accounts for a very small proportion of overall revenue.

The implication is that the stock price more than doubled, but the GEO business itself hasn't made much money yet.

At the end of January, BlueFocus's stock price rose from 9.6 yuan to 23.3 yuan, a 143% increase in a month. Right at this time, Chairman Zhao Wenquan announced plans to sell up to 20 million shares. Based on the stock price at the time, this would cash out approximately 467 million RMB.
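The figures in this paragraph can be roughly checked with a few lines of arithmetic. The sketch below is only a sanity check using the rounded prices quoted above; the function names are illustrative, and the small gap between the result and the article's 467 million RMB is presumably due to rounding in the quoted share price.

```python
def pct_increase(start: float, end: float) -> float:
    """Percentage rise from a start price to an end price."""
    return (end - start) / start * 100


def sale_proceeds(shares: int, price: float) -> float:
    """Gross proceeds from selling a number of shares at one price."""
    return shares * price


# Figures quoted in the article (rounded prices, in yuan).
start_price = 9.6
end_price = 23.3
max_shares = 20_000_000  # upper bound of the announced sale plan

rise = pct_increase(start_price, end_price)
proceeds = sale_proceeds(max_shares, end_price)

print(f"Price rise: {rise:.0f}%")
print(f"Proceeds at {end_price} yuan: {proceeds / 1e6:.0f} million RMB")
```

With the rounded prices, the rise comes out at about 143% and the proceeds at about 466 million RMB, consistent with the roughly 467 million RMB cited.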

Public research reports show that last year, the total market size of the domestic GEO industry was about 2.9 billion RMB. The market value increase of BlueFocus's stock alone in one month far exceeded this amount.

315 exposed Liqing system poisoning AI for a few hundred RMB. But the GEO concept went through A-shares and made billions.

Whether it's poisoning or not is hard to say, but the money made is real.

315 Calls it Poisoning, Silicon Valley Calls it Commercialization

In January this year, OpenAI announced on its official blog: ChatGPT will start selling ads.

Free users and $8/month Go users will see ads; subscribers on higher-priced paid plans are unaffected.

On February 9th, ads officially launched. Some ads appear at the bottom of ChatGPT's answers, marked with a small word: Sponsored. The first batch of advertisers includes Ford, Adobe, Target, Best Buy...

You ask ChatGPT what car is good to buy, it gives you an answer, and below the answer hangs a sponsored link from Ford.

OpenAI made it very clear: Ads will not influence the content of ChatGPT's answers. The answer is the answer, the ad is the ad, they are separate.

Does that sound familiar?

Baidu said the same thing back in the day. Paid ranking is paid ranking, organic search is organic search, they are separate. Later, the top five search results were all ads.

OpenAI expects ads to help double its consumer-side annual revenue to $17 billion. ChatGPT has over 800 million weekly active users, 95% of whom are free users, all potential audiences for ads.

Now looking back at the industry chain exposed by 315: Liqing floods AI with soft articles, making AI recommend non-existent products. OpenAI places sponsored content below AI's answers, making AI recommend products that paid money.

One didn't notify the platform, so it's poisoning. The other signed a contract with the platform, so it's commercialization.

For the user, what's the difference?

One is inside the answer, one is below the answer. One has no label, one has a label saying "Ad".

315 caught Liqing for a few hundred RMB; A-shares speculated on the GEO concept for billions; OpenAI plans to make $17 billion a year from this.

It is the same thing, yet as its label shifts from poisoning to commercialization, the price rises by tens of thousands of times.

In November 2023, researchers from the Indian Institute of Technology Delhi and Princeton University published a paper on arXiv titled "GEO: Generative Engine Optimization".

This was the first formal academic definition of this concept.

From the paper's publication to the 315 exposure, just over two years passed. In between came gray-market operations, financing, concept-stock surges, a chairman cashing out, and AI platforms stepping in personally to sell ads...

The path that took SEO twenty years, GEO completed in two.

The difference is that back then it took people years to learn not to fully trust search engine results. AI is still in its trust-dividend period: most people haven't yet realized that AI's answers can also be bought.

However, this dividend period might not last long. Next time you ask AI what's worth buying, remember to think for an extra second:

The answer can be free, but the brain cannot be outsourced.
