According to CRU, fiber optic demand from AI data centers grew 75.9% year-over-year, widening the supply-demand gap from 6% to 15%. Fiber optic prices have more than tripled within months.

Capacity can no longer keep up.

This is why NVIDIA invested in Corning to accelerate fiber capacity expansion. Two months earlier, it had already invested $2 billion each in Lumentum and Coherent. Together, the three investments total $4.5 billion and span the optical chain from lasers to optical chips to the fiber itself.

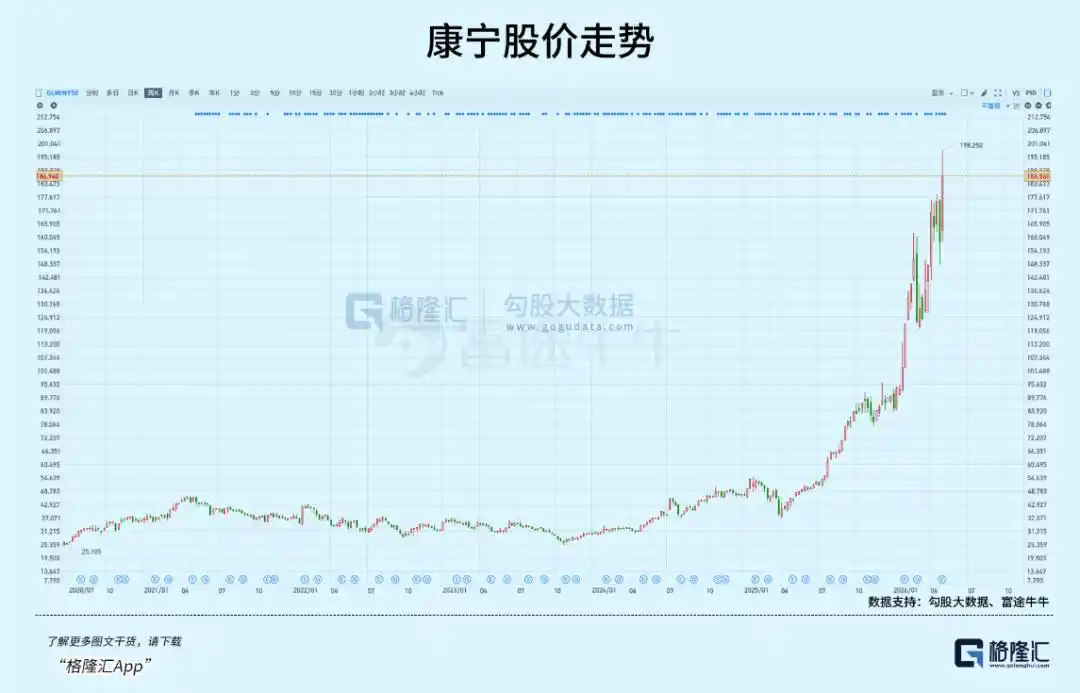

The company NVIDIA chose, Corning, is a New York-based glassmaker founded in 1851. On May 6, its stock touched $195.81 intraday, up 316.81% over the past year, giving it a market capitalization of more than $160 billion.

How did a 175-year-old glass factory position itself on the AI infrastructure map?

01

The Nerve Fibers of AI Infrastructure

The three investments map to three segments of the optical chain.

According to Forbes and CNBC, Lumentum covers lasers: the deal is backed by multi-year purchase commitments and priority access to advanced capacity, and Lumentum will build new factories in the US. Coherent focuses on next-generation silicon photonics, securing the supply of optical interconnect products. Corning is responsible for the fiber itself, committing to expand capacity tenfold and build three new factories.

In NVIDIA's official announcement, Jensen Huang stated: "AI is driving the largest infrastructure buildout in history." NVIDIA's heavy bets on the optical upstream rest on two considerations.

First, supply-side rigidity.

The preform is to the fiber optic industry what the wafer is to the chip industry: it sets the capacity ceiling for the whole industry. A fiber optic preform is a cylindrical glass "mother blank" 1 to 2 meters long, and its quality directly determines the attenuation, strength, and bandwidth of the finished fiber.

One preform can be drawn into hundreds of kilometers of fiber, but every step of the process, from raw material purification through precise chemical vapor deposition to drawing and strength testing, requires extremely high-precision process control.

Moreover, building a new production line requires meeting several preconditions at once: cleanroom construction, deposition equipment commissioning, process parameter calibration, and training of skilled operators. A shortfall in any one of them can drag down the yield of the entire line.

A full expansion cycle takes 18 to 24 months, so when demand surges structurally, this rigid constraint becomes the bottleneck for the entire supply chain.

Second, technological iteration forces a shift from "electrical to optical."

The dual constraints of transmission efficiency and power consumption are forcing large data centers to turn to optical interconnects. According to SemiAnalysis data, per-GPU interconnect (NVLink) bandwidth is 900 GB/s for the Hopper architecture and 1,800 GB/s for Blackwell, and the next-generation Rubin is expected to reach 3,600 GB/s. At speeds above 800G, copper cable reach shrinks to less than 1 meter, and power consumption and signal integrity are already hitting physical limits.

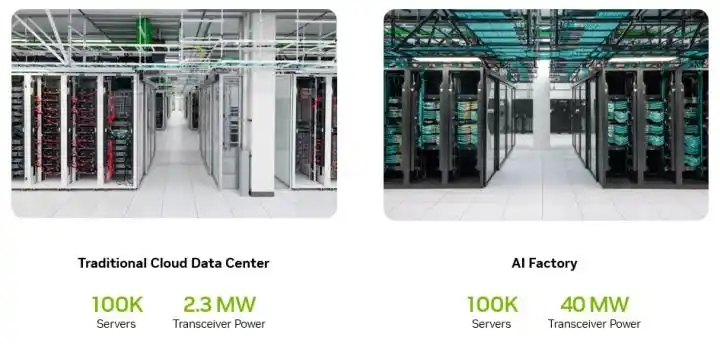
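The generational figures above double at each step. A quick sketch of that arithmetic, assuming the three SemiAnalysis numbers are directly comparable per-GPU figures (the dictionary layout and the helper name `generation_ratios` are illustrative only):

```python
# Per-GPU interconnect bandwidth quoted from SemiAnalysis, in GB/s.
# Dict insertion order (Python 3.7+) keeps the generations in sequence.
bandwidth_gbps = {"Hopper": 900, "Blackwell": 1800, "Rubin (expected)": 3600}


def generation_ratios(bw: dict[str, int]) -> list[float]:
    """Ratio of each generation's bandwidth to the one before it."""
    values = list(bw.values())
    return [later / earlier for earlier, later in zip(values, values[1:])]


ratios = generation_ratios(bandwidth_gbps)
print(ratios)  # [2.0, 2.0]

first, last = bandwidth_gbps["Hopper"], bandwidth_gbps["Rubin (expected)"]
print(f"Hopper to Rubin: {last / first:.0f}x")  # 4x
```

Two doublings in a row mean a 4x jump across the three generations, which is the pressure the text describes on copper's shrinking reach.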

According to NVIDIA's developer blog, AI training clusters draw 50 to 150 megawatts, and optical transceivers alone can consume up to 24 megawatts, more than 10% of the entire data center's power. Co-packaged optics (CPO) can save tens of megawatts. This energy advantage keeps steepening the CPO adoption curve, and TrendForce predicts CPO penetration could reach 35% by 2030.
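To see how the "over 10%" figure follows from the quoted ranges, here is a rough back-of-the-envelope sketch. It pairs the 24 MW transceiver upper bound with several cluster sizes. The source does not say which cluster size that 24 MW belongs to, so the pairing (including the 100 MW midpoint) is an assumption for illustration.

```python
def transceiver_share(transceiver_mw: float, cluster_mw: float) -> float:
    """Fraction of a cluster's total power drawn by optical transceivers."""
    return transceiver_mw / cluster_mw


TRANSCEIVER_MW = 24  # upper bound quoted from NVIDIA's developer blog

# Assumption: pair that upper bound with the low, middle, and high end of the
# 50-150 MW cluster range; the source does not specify the pairing.
shares = {mw: transceiver_share(TRANSCEIVER_MW, mw) for mw in (50, 100, 150)}
for cluster_mw, share in shares.items():
    print(f"{cluster_mw} MW cluster: {share:.0%} of power on transceivers")
# Even at 150 MW the share is 16%, consistent with "over 10%".
```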

The convergence of these two forces results in a structural explosion in fiber optic usage.

According to Corning's Investor Day data, fiber usage in an AI cabinet is already 5 to 10 times that of a traditional cabinet.

AI-driven demand accounted for less than 5% of the overall fiber optic market in 2024, and Securities Daily reports that its share is expected to reach 35% by 2027. By contrast, the fiber optic market as a whole is growing at only 4.1% (CRU data).

In an AI data center, fiber plays the role of the body's nerve fibers: GPUs are the brain, the network is the synapses, and fiber is the axon that carries the signal.

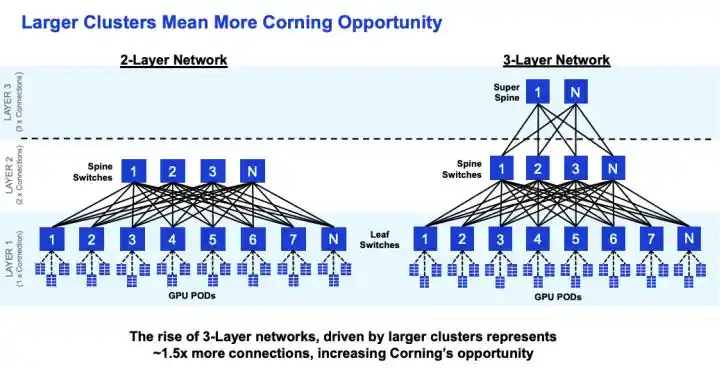

Fiber usage grows with cluster scale. In a 72-GPU AI node, fiber usage is already 16 times that of a traditional data center. ScaleFibre's measurements show that a 576-GPU cluster needs about 16 fibers per GPU. Each order-of-magnitude increase in GPU cluster size drives more-than-proportional growth in fiber consumption.

(Increased complexity in GPU clusters brings more communication demands)
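The text distinguishes a constant per-GPU fiber count from growth that is "more than proportional." The sketch below contrasts the two. The linear baseline uses ScaleFibre's 16 fibers per GPU at 576 GPUs. The power-law model and its exponent `alpha = 1.2` are purely hypothetical, chosen only to show what superlinear growth looks like, and are not fitted to any data.

```python
def fibers_linear(gpus: int, fibers_per_gpu: float = 16.0) -> float:
    """Baseline: a constant fiber count per GPU (16, per ScaleFibre at 576 GPUs)."""
    return gpus * fibers_per_gpu


def fibers_superlinear(gpus: int, alpha: float = 1.2,
                       ref_gpus: int = 576, ref_per_gpu: float = 16.0) -> float:
    """HYPOTHETICAL power law F = k * N**alpha, calibrated to match the
    576-GPU reference point. alpha > 1 means more-than-proportional growth."""
    k = ref_gpus * ref_per_gpu / ref_gpus ** alpha
    return k * gpus ** alpha


for n in (576, 5_760, 57_600):  # each step is 10x more GPUs
    print(f"{n:>6} GPUs: linear {fibers_linear(n):>9,.0f}, "
          f"hypothetical superlinear {fibers_superlinear(n):>9,.0f}")
```

Both models agree at the 576-GPU reference point (9,216 fibers); they diverge as the cluster grows, which is what "more than proportional" means in practice.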

In terms of market size, Grand View Research estimates the data center cabling market at about $20.2 billion, with fiber optics accounting for 56%. LightCounting predicts the datacom optical module market will grow from $22.8 billion to $41.4 billion.
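The two market-size figures can be turned into absolute numbers with simple arithmetic. This is a sketch using only the values quoted above. The text does not state the forecast window for LightCounting's projection, so only total growth, not an annual rate, is computed.

```python
# Grand View Research: data center cabling market and fiber's share of it.
cabling_market_bn = 20.2
fiber_share = 0.56
fiber_cabling_bn = cabling_market_bn * fiber_share
print(f"Fiber portion of cabling market: ~${fiber_cabling_bn:.1f}B")  # ~$11.3B

# LightCounting: datacom optical module market, start and end of the forecast.
modules_start_bn, modules_end_bn = 22.8, 41.4
total_growth = modules_end_bn / modules_start_bn - 1
print(f"Total forecast growth: {total_growth:.1%}")  # 81.6%
```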

Along the optical chain NVIDIA is targeting, Corning's stock rose from $29 at the end of 2023 to $195 in a little over two years. It gained 60% in 2024, 88% in 2025, and more than 100% so far in 2026, leaving the share price at roughly 6.7 times its starting level.
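The yearly gains and the endpoints can be cross-checked. This sketch compounds the three annual figures, treating "over 100%" as exactly 100% (a floor), and compares the result with the $29 to $195 endpoint multiple. It also backs out the 2026 year-to-date gain those endpoints imply. Prices are the rounded figures from the text.

```python
from math import prod

start_price = 29.0    # end of 2023
latest_price = 195.0  # May 2026, rounded

# Yearly gains from the text; "over 100%" for 2026 YTD is taken as 100% (a floor).
yearly_gains = {"2024": 0.60, "2025": 0.88, "2026 YTD": 1.00}

compounded = prod(1 + g for g in yearly_gains.values())
endpoint_multiple = latest_price / start_price
print(f"Compounded from yearly gains: {compounded:.2f}x")  # 6.02x
print(f"Endpoint multiple: {endpoint_multiple:.2f}x")      # 6.72x

# 2026 YTD gain implied by the endpoints, given the 2024 and 2025 figures.
implied_ytd = latest_price / (start_price * 1.60 * 1.88) - 1
print(f"Implied 2026 YTD gain: {implied_ytd:.0%}")         # 124%
```

Yearly gains multiply rather than add: 60% and 88% alone already compound to about 3x before 2026 begins.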

That performance leads global fiber optic stocks. How did a company that sells glass become the fiber optic king of the AI era?

02

Revenue Acceleration

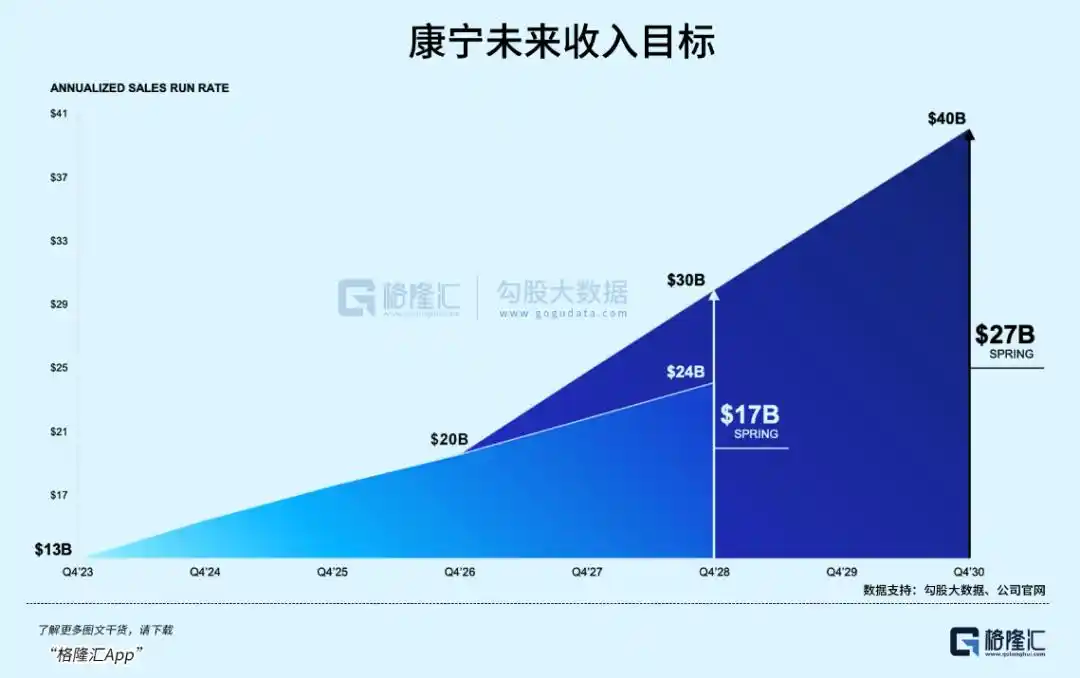

Corning's financial reports show that its Optical Communications Enterprise revenue grew from $1.3 billion in 2023 to over $3 billion in 2025, more than doubling in two years. In Q1 2026, Optical Communications net sales rose 93% year-over-year. On the earnings call, the CFO said actual growth has far exceeded the 30% CAGR target.
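The "more than doubled" claim and the 30% CAGR target can be put on the same footing with the standard compound-annual-growth formula. This sketch treats "over $3 billion" as exactly $3 billion, so the result is a lower bound on the implied growth rate.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by growing from start to end."""
    return (end / start) ** (1 / years) - 1


rev_2023_bn = 1.3
rev_2025_bn = 3.0  # "over $3 billion", taken as exactly $3B (a floor)

implied = cagr(rev_2023_bn, rev_2025_bn, years=2)
print(f"Two-year multiple: {rev_2025_bn / rev_2023_bn:.2f}x")  # 2.31x
print(f"Implied CAGR: {implied:.1%}")                          # 51.9%

# What 2025 revenue would have been at exactly the 30% CAGR target.
on_target_2025 = rev_2023_bn * 1.30 ** 2
print(f"2025 revenue at a 30% CAGR: ${on_target_2025:.2f}B")   # $2.20B
```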

Acceleration is also evident at the customer level. According to CNBC, Meta signed a multi-year fiber supply agreement worth up to $6 billion. Corning's investor relations disclosures show that two other hyperscale customers of similar size have signed comparable agreements, and NVIDIA's multi-year lock-in agreement is also in place. These four long-term agreements give revenue a solid foundation.

Revenue and orders corroborate each other, and the expansion plans reinforce that loop. AI fiber demand is not just a curve on a PowerPoint slide; it is real money already showing up on Corning's income statement.

However, Corning is not the world's largest fiber optic manufacturer.

According to CommMesh and TTI Fiber statistics, the leader in market share is Prysmian (Italy) at about 15%. Second is Yangtze Optical Fibre and Cable (YOFC, China) at about 10-12%. Corning is around 10%, ranking third. In terms of preform capacity, YOFC is the world's largest. In terms of comprehensive cable business, Prysmian is the strongest.

To see why Meta and NVIDIA chose Corning, one has to understand what AI data centers specifically require of fiber.

The fiber needed for AI data centers is fundamentally different from standard fiber deployed in operator FTTH networks. It requires ultra-low loss, high-density, bend-insensitive, high-end specialty fiber. At transmission rates from 800G to 1.6T, every 0.01 dB/km difference in attenuation directly impacts signal quality and power consumption. Density determines how much fiber can fit within limited conduit space. Bend performance determines signal stability in high-density cabling within racks.

These three dimensions are exactly where Corning's technical expertise runs deepest. According to publicly available industry parameters, Corning's SMF-28 Ultra fiber has an attenuation of 0.15 dB/km, the lowest in the industry, with contamination controlled at the parts-per-billion (ppb) level. By comparison, YOFC's fiber is around 0.16 dB/km, close but still behind, and Hengtong's is 0.18 dB/km, a more noticeable gap.
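Attenuation in dB/km accumulates linearly with distance, and the fraction of launched power that arrives is 10^(-loss/10). The sketch below applies that standard conversion to the three attenuation figures quoted. The 100 km span is a hypothetical length chosen for illustration, and real links add connector and splice losses that are ignored here.

```python
def span_loss_db(attenuation_db_per_km: float, length_km: float) -> float:
    """Fiber attenuation in dB accumulates linearly with length."""
    return attenuation_db_per_km * length_km


def received_fraction(loss_db: float) -> float:
    """Convert a loss in dB to the fraction of launched optical power that arrives."""
    return 10 ** (-loss_db / 10)


attenuation_db_per_km = {"Corning SMF-28 Ultra": 0.15, "YOFC": 0.16, "Hengtong": 0.18}
LENGTH_KM = 100  # HYPOTHETICAL span length, for illustration only

for name, alpha in attenuation_db_per_km.items():
    loss = span_loss_db(alpha, LENGTH_KM)
    print(f"{name}: {loss:.0f} dB over {LENGTH_KM} km, "
          f"{received_fraction(loss):.2%} of launch power received")
```

Over this hypothetical span, the 3 dB gap between 0.15 and 0.18 dB/km roughly halves the received power. Because loss scales with length, the gap shrinks proportionally on shorter links.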

In terms of density, Corning's Investor Day data shows its Gen AI fiber optic system can pack 2 to 4 times more fiber into existing conduits. With AI data center rack space extremely tight, this capability directly translates into deployment efficiency advantages.

CPO positioning is also crucial. Corning works directly with NVIDIA and Broadcom on CPO connectivity solutions, an area A-share fiber optic companies have not yet entered. Co-packaged optics requires deep physical-level integration of fiber with chips, where Corning's materials science background gives it a unique advantage.

Customer structure is another structural difference. Within Corning's Optical Communications revenue, Enterprise (i.e., data center customers) already accounts for over 40%. A-share fiber optic companies primarily serve China's three major telecom operators, with AI data center demand accounting for less than 5% of their revenue. This creates a fundamental difference in revenue growth rates and predictability.

R&D investment is also on a different scale. Corning invests over $1 billion annually in R&D. YOFC invests around $140 million, and Hengtong around $200 million. These differences allow Corning to stand out in the high-end specialty fiber segment.

But these advantages did not appear out of thin air. According to the Engineering and Technology History Wiki (ETHW), in 1970 Corning physicist Donald Keck measured the world's first low-loss optical fiber, with an attenuation of 16 to 17 dB/km. The OVD (Outside Vapor Deposition) process invented that year became the technological cornerstone of fiber optic manufacturing for the next 50 years.

When the telecom bubble burst in 2001, Corning's stock fell from over $100 to $1.50, and 12,000 employees were laid off. Wall Street repeatedly pressured the company to exit the fiber business. Corning refused, viewing fiber as a "physics-supported inevitability": copper cannot scale indefinitely, and light will eventually replace electricity. This judgment was validated 20 years later.

Price increases driven by the widening supply-demand gap are benefiting not only Corning but fiber optic manufacturers worldwide. Reported figures show that Hengtong Optic-Electric's Q1 net profit rose 98.5% year-over-year, ZTT's rose 46.4%, and YOFC's optical interconnect components revenue rose 48.6%. The dividend from higher fiber prices is spreading across the entire industry.

According to Corning's investor relations disclosures, the upgraded Springboard plan targets annualized sales of $40 billion by 2030. Management is betting on the long game, but the question is how much of that expectation is already priced into a $195 stock.

03

Conclusion

Before the AI narrative took off in early 2024, Corning traded at 25 to 30 times earnings; its valuation has since expanded more than threefold. Dividing the current market capitalization by the 2026 target revenue of $20 billion gives a P/S ratio of about 8x. Corning's financial report puts Q2 revenue guidance at $4.6 billion, slightly below the consensus estimate of $4.694 billion.
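The valuation figures reduce to a few ratios, which this sketch reproduces. Reading "valuation has expanded more than 3 times" as a threefold P/E expansion is an interpretation, and the 3x is used as a floor.

```python
market_cap_bn = 160.0          # "over $160 billion"
target_revenue_2026_bn = 20.0  # 2026 target revenue
ps_ratio = market_cap_bn / target_revenue_2026_bn
print(f"P/S on 2026 target revenue: {ps_ratio:.1f}x")  # 8.0x

# Interpretation: "valuation expanded more than 3 times" read as a 3x P/E expansion (floor).
pe_before = (25, 30)
pe_now_floor = tuple(pe * 3 for pe in pe_before)
print(f"Implied P/E now: at least {pe_now_floor[0]}-{pe_now_floor[1]}x")  # 75-90x

guidance_bn, consensus_bn = 4.6, 4.694
shortfall = 1 - guidance_bn / consensus_bn
print(f"Q2 guidance below consensus by {shortfall:.1%}")  # 2.0%
```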

There are two core variables worth watching here.

One is the pace of CPO adoption. NVIDIA's CPO products are scheduled to enter initial volume production in the second half of 2026, and each step forward for CPO ratchets up demand for high-end fiber. This is the core catalyst for whether Corning's valuation can keep expanding.

The other is the scale of the two undisclosed customers. If they are hyperscalers like Microsoft or Amazon, actual procurement volumes could far exceed market expectations.

Hollow-core fiber is a potential variable that could change the landscape.

IEEE Spectrum reported that Microsoft has deployed 1,280 km of hollow-core fiber between two Azure data centers, reducing latency by 30% to 47%. However, high costs, an immature ecosystem, and unfinished standardization mean it won't replace standard fiber in the short term. Public information on Corning's involvement in hollow-core fiber is limited; if competitors achieve a breakthrough first, the competitive landscape could shift.

At this stage, the execution pace of orders is more important than the story itself.

But if the market cap rises too fast in the short term, or slower-than-expected progress triggers volatility, what looks like a potential Davis Double Play (earnings growth and multiple expansion reinforcing each other) can quickly turn into a rollercoaster.
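A Davis Double Play works because price equals earnings per share times the P/E multiple, so changes in the two multiply rather than add. The same math runs in reverse. The scenario numbers below are hypothetical and are not forecasts for Corning.

```python
def price_multiple(eps_change: float, pe_change: float) -> float:
    """Price = EPS x P/E, so the new/old price ratio is the product of both factors."""
    return (1 + eps_change) * (1 + pe_change)


# HYPOTHETICAL scenarios, not forecasts for Corning.
double_play = price_multiple(0.20, 0.25)   # earnings +20%, multiple +25%
reverse = price_multiple(-0.10, -0.30)     # earnings -10%, multiple -30%
print(f"Both rising:  {double_play - 1:+.0%}")  # +50%
print(f"Both falling: {reverse - 1:+.0%}")      # -37%
```

In the falling case, a modest earnings miss combined with a contracting multiple cuts deeper than either change would on its own.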

This article is from the WeChat public account "Gelonghui APP" (ID: hkguruclub), author: Freddy.