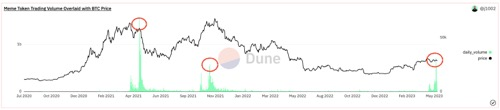

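Bitcoin fell below $27,500 on Monday as trading volume in meme tokens based on Bitcoin's BRC-20 standard surged to a two-year high. On-chain data show that, historically, speculative frenzies in meme coins have signaled a short-term top or a bearish reversal for Bitcoin.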

Meme hype "drains" the market

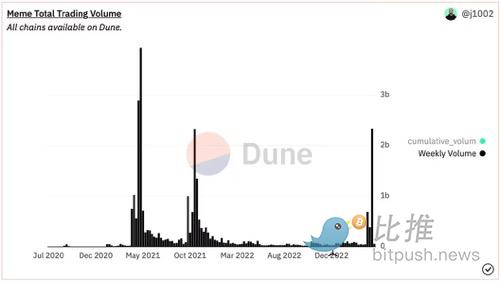

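According to a tracker built on Dune Analytics by blockchain observer James Tolan, meme coin trading volume in the crypto market reached $2.3 billion last week, roughly six times the previous week's $387 million and the highest level since May 2021.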
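The week-over-week comparison can be checked with a few lines of arithmetic. The sketch below uses only the two volume figures quoted above; the function name `growth` is an illustrative choice, not something from Tolan's tracker.

```python
def growth(previous: float, current: float) -> tuple[float, float]:
    """Return (multiple, percent_increase) between two volume figures."""
    multiple = current / previous
    percent_increase = (multiple - 1) * 100
    return multiple, percent_increase


# Figures quoted in the article: $387 million, then $2.3 billion.
multiple, pct = growth(387e6, 2.3e9)
print(f"{multiple:.2f}x the previous week, an increase of {pct:.0f}%")
```

On these figures, volume was just under six times the prior week's level, which corresponds to an increase of a little under 500%.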

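The speculative wave was led by pepecoin (PEPE), a token themed on the "sad frog" (Pepe the Frog) meme that launched in mid-April with a maximum supply of 420 trillion. Last Friday, PEPE's market capitalization passed $1 billion and eventually peaked at $1.82 billion, a striking feat for a cryptocurrency less than a month old. According to BitPush Terminal data, PEPE's market cap stood at $931 million at press time.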

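The PEPE craze has also spurred speculation in other low-cap tokens such as DINO, WSB, CHAD and 4TOKEN, which have risen by several hundred percent over the past two weeks. Tolan argues that, historically, speculative frenzies in altcoins with no real use case have foreshadowed bearish reversals in Bitcoin.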

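At press time, Bitcoin was trading below $27,500, down 5% over 24 hours, while the US Dollar Index (DXY) rebounded slightly. The DXY measures the dollar against major currencies including the euro, and Bitcoin typically moves inversely to it. The index briefly rebounded to 101.75 last Friday after unexpectedly strong US jobs data and is now hovering around 101.37.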

BRC-20 token fees hit a multi-year high

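As the hype around meme coins builds, transaction volume on the Bitcoin network keeps rising. According to crypto analytics platform Dune Analytics, daily BRC-20 minting fees set a record of 247 BTC on May 7, a figure that puts fees at their highest level in two years.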

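The BRC-20 token standard is an experimental token standard on the Bitcoin blockchain, modeled on Ethereum's ERC-20. It was created in March by a pseudonymous on-chain analyst known as Domo (Twitter handle "@domdata"), and it allows programmers to create and send fungible tokens through the Ordinals protocol.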

Unlike ERC-20 tokens, BRC-20 tokens do not use smart contracts, and minting and trading them requires a Bitcoin wallet.
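To make the "no smart contracts" point concrete: a BRC-20 operation is a small JSON text inscribed through Ordinals, and balances are worked out off-chain by indexers that read those inscriptions. The sketch below only builds the JSON payloads. The field names (`p`, `op`, `tick`, `max`, `lim`, `amt`) follow the publicly documented BRC-20 experiment as commonly described. The ticker `demo`, the amounts, and the helper function names are hypothetical. Creating the actual inscription with a Bitcoin wallet is not shown.

```python
import json


def _check_tick(tick: str) -> str:
    # Assumption: tickers are four characters long, as in the original experiment.
    if len(tick) != 4:
        raise ValueError("BRC-20 tickers are expected to be 4 characters")
    return tick


def brc20_deploy(tick: str, max_supply: int, mint_limit: int) -> str:
    """Payload that declares a new token, its total supply, and a per-mint cap."""
    return json.dumps({
        "p": "brc-20",
        "op": "deploy",
        "tick": _check_tick(tick),
        "max": str(max_supply),   # numeric values are carried as strings
        "lim": str(mint_limit),
    })


def brc20_mint(tick: str, amount: int) -> str:
    """Payload that mints `amount` units of an already deployed token."""
    return json.dumps({
        "p": "brc-20",
        "op": "mint",
        "tick": _check_tick(tick),
        "amt": str(amount),
    })


# Hypothetical token used purely for illustration.
print(brc20_deploy("demo", 21_000_000, 1_000))
print(brc20_mint("demo", 1_000))
```

Because every deploy, mint, and transfer is its own inscription in a Bitcoin transaction, a popular token launch translates directly into more transactions competing for block space, which is consistent with the rise in fees described in this section.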

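The BRC-20 token standard has grown explosively in recent months, especially with the arrival of meme coins such as Pepe (PEPE) and Memetic (MEME). On May 3, cumulative fees paid on the Bitcoin network exceeded $3.5 million, up 400% from the end of April. Total fees for BTC Ordinals stand at 641 BTC, and YCharts data show that Bitcoin's average transaction fee reached $19.21 on May 7.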

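The number of BRC-20 tokens on the Bitcoin network has passed 14,000, many of them meme coins. Their combined market capitalization exceeds $952 million, with 24-hour trading volume above $10 million.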

Sell-off ahead of CPI

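On the macroeconomic side, traders this week are focused on the US Consumer Price Index (CPI) for April, due on May 10, as a gauge of the Federal Reserve's progress in bringing down inflation. The index tracks price changes across a broad range of goods and services. Other key data include initial jobless claims and the Producer Price Index. Investors typically sell "risk assets" when the Fed raises interest rates aggressively to curb inflation.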

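CPI is regarded as a volatility catalyst for the crypto market as a whole. Pseudonymous trader Aqua wrote in a tweet that the market may see a "tactical sell-off", with BTC/USD entering a broader correction range. "If the CPI data is good, we may get one more push higher, but this increasingly looks like a correction, and we will definitely see the market adjust in the coming weeks," he wrote.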

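Global asset manager BlackRock said in a report released on May 8 that central banks' fight against inflation is far from over and that riskier assets could continue to take a beating this year. The firm expects central banks around the world to tighten monetary policy further in the coming months, pushing the global economy into recession.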

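Joe DiPasquale, CEO of crypto fund manager BitBull Capital, noted that neither BTC nor ETH has tested recent support since the rally that began around mid-March. DiPasquale said BTC may test support between $25,000 and $27,000 before rebounding again, and that buying the dip in BTC and ETH is a "reasonable strategy".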